
Does NCCL support multi-NIC on Ethernet? #601

Comments


We do support multi-NIC on Ethernet. Can you run the NCCL perf tests and also post the log with …? If NCCL is not using all NICs, it's probably because doing so would not improve performance. Ethernet has no GPU Direct RDMA, so all traffic has to go through the CPU and is therefore bottlenecked by the TCP/IP stack in the Linux kernel. On top of that, multi-NIC aggregation works well when GPUs are connected through NVLink (because we can use NVLink to split the operation into N parts, then balance the traffic across the N NICs), but without NVLink it would not help much unless the NICs have low bandwidth, e.g. 10 Gb/s or 25 Gb/s.
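To make the NVLink point concrete, here is a minimal Python sketch of the general idea of splitting one operation into N parts and giving each part to its own NIC. It is only an illustration, assuming equal-bandwidth NICs; `split_across_nics` and the NIC names are hypothetical, and NCCL itself spreads traffic through its channels and rings rather than with code like this.

```python
from typing import List, Tuple


def split_across_nics(nbytes: int, nics: List[str]) -> List[Tuple[str, int, int]]:
    """Return (nic, offset, size) per part; part sizes differ by at most 1 byte.

    Hypothetical helper illustrating "split the operation into N parts,
    then balance the traffic across the N NICs". Not NCCL code.
    """
    n = len(nics)
    if n == 0:
        raise ValueError("need at least one NIC")
    base, extra = divmod(nbytes, n)
    parts = []
    offset = 0
    for i, nic in enumerate(nics):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first parts
        parts.append((nic, offset, size))
        offset += size
    return parts


if __name__ == "__main__":
    for nic, off, size in split_across_nics(1 << 20, ["nic0", "nic1", "nic2", "nic3"]):
        print(f"{nic}: bytes [{off}, {off + size})")
```

With a 1 MiB buffer and four NICs, each NIC gets a contiguous 256 KiB slice. Without NVLink, getting each slice to the GPU that owns the matching NIC is the costly part, which is why the aggregation pays off mainly on NVLink systems.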


I can confirm that NCCL detects the 4 NICs correctly and creates 4 rings, each using a different NIC. If you run with just … Now, for some reason, you end up seeing traffic on only one NIC. Did you define a different subnet for each interface, or did you put all interfaces in the same subnet? The latter could cause the Linux kernel to route all TCP/IP traffic through a single interface.
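One quick way to answer the subnet question is to group each interface's address by its network. The Python sketch below does that with the standard `ipaddress` module; the interface names and addresses are hypothetical placeholders, and it only flags shared subnets. It does not inspect the kernel's routing table.

```python
import ipaddress
from collections import defaultdict
from typing import Dict, List


def group_by_subnet(ifaces: Dict[str, str]) -> Dict[str, List[str]]:
    """Map each subnet (as a CIDR string) to the interfaces whose address is in it."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name, cidr in ifaces.items():
        network = ipaddress.ip_interface(cidr).network  # e.g. 11.1.2.10/24 -> 11.1.2.0/24
        groups[str(network)].append(name)
    return dict(groups)


def shared_subnets(ifaces: Dict[str, str]) -> Dict[str, List[str]]:
    """Return only the subnets that contain more than one interface."""
    return {net: names for net, names in group_by_subnet(ifaces).items() if len(names) > 1}


if __name__ == "__main__":
    # Hypothetical interface -> address/prefix mapping; replace with your own.
    example = {
        "nic0": "192.168.2.10/24",
        "nic1": "11.1.2.10/24",
        "nic2": "11.1.2.11/24",
        "nic3": "11.1.2.12/24",
    }
    for net, names in shared_subnets(example).items():
        print(f"{net} is shared by {names}; the kernel may send all of it through one NIC")
```

For real data, the addresses can be copied from `ip -4 addr`, and `ip route get <peer address>` shows which interface the kernel would actually pick for a given destination.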


I think it's possible to have all NICs in the same subnet, but that might require complex ARP and IP routing configuration to make sure all traffic uses the right NIC. So to understand what's happening here, the easiest approach is to put them in different subnets, even if later, to run alltoall-like patterns, you might need to either set up routing between subnets or put them back in the same subnet. And even if all 3 NICs in the 11.1.2.x network were to use only a single NIC, I'd expect to see 2 × 1.2 = 2.4 GB/s, yet it seems that all traffic goes through the 192.168.2.x network. I still think this is an IP configuration issue, but to be sure, can you double-check that the NCCL log shows …
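The 2 × 1.2 = 2.4 GB/s estimate can be written as a tiny Python model, assuming (as the comment does) that the kernel funnels each subnet's traffic through a single NIC, so the number of usable NICs equals the number of distinct subnets. The 1.2 GB/s per-NIC figure comes from the comment; the host addresses and /24 masks are assumptions for illustration, and `worst_case_bandwidth` is a hypothetical helper.

```python
import ipaddress
from typing import List

PER_NIC_GBYTES = 1.2  # per-NIC figure used in the comment above


def worst_case_bandwidth(addresses: List[str], per_nic: float = PER_NIC_GBYTES) -> float:
    """Aggregate GB/s if each distinct subnet contributes exactly one NIC's worth.

    Assumption: all traffic for a subnet leaves through a single interface.
    """
    subnets = {ipaddress.ip_interface(a).network for a in addresses}
    return len(subnets) * per_nic


if __name__ == "__main__":
    # Hypothetical host addresses: three NICs in 11.1.2.x, one in 192.168.2.x.
    addrs = ["11.1.2.10/24", "11.1.2.11/24", "11.1.2.12/24", "192.168.2.10/24"]
    print(f"{worst_case_bandwidth(addrs):.1f} GB/s")  # 2 subnets x 1.2 GB/s
```

Under the same model, putting each of the four NICs in its own subnet raises the figure to 4 × 1.2 = 4.8 GB/s, before accounting for the CPU/TCP bottleneck mentioned earlier in the thread.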


I ran the NCCL perf test with … Here is the log:

gpu23:2625:2640 [2] NCCL INFO threadThresholds 8/8/64 | 64/8/64 | 8/8/64

gpu23:2625:2640 [2] NCCL INFO Trees [0] 3/-1/-1->2->1|1->2->3/-1/-1 [1] 3/4/-1->2->0|0->2->3/4/-1 [2] 1/-1/-1->2->-1|-1->2->1/-1/-1 [3] 3/-1/-1->2->-1|-1->2->3/-1/-1 [4] 3/-1/-1->2->1|1->2->3/-1/-1 [5] 3/-1/-1->2->0|0->2->3/-1/-1 [6] 1/-1/-1->2->5|5->2->1/-1/-1 [7] 3/-1/-1->2->7|7->2->3/-1/-1

gpu24:2321:2333 [0] NCCL INFO Channel 00 : 3[84000] -> 4[2000] [receive] via NET/Socket/0

gpu23:2625:2638 [0] NCCL INFO Channel 00/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2639 [1] NCCL INFO threadThresholds 8/8/64 | 64/8/64 | 8/8/64

gpu23:2625:2639 [1] NCCL INFO Trees [0] 2/4/-1->1->0|0->1->2/4/-1 [1] -1/-1/-1->1->3|3->1->-1/-1/-1 [2] 0/6/-1->1->2|2->1->0/6/-1 [3] -1/-1/-1->1->0|0->1->-1/-1/-1 [4] 2/-1/-1->1->0|0->1->2/-1/-1 [5] -1/-1/-1->1->3|3->1->-1/-1/-1 [6] 0/-1/-1->1->2|2->1->0/-1/-1 [7] -1/-1/-1->1->0|0->1->-1/-1/-1

gpu23:2625:2639 [1] NCCL INFO Setting affinity for GPU 1 to 10,00000001

gpu23:2625:2638 [0] NCCL INFO Channel 01/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2638 [0] NCCL INFO Channel 02/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2638 [0] NCCL INFO Channel 03/08 : 0 1 6 7 4 5 2 3

gpu23:2625:2638 [0] NCCL INFO Channel 04/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2638 [0] NCCL INFO Channel 05/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2638 [0] NCCL INFO Channel 06/08 : 0 1 2 3 4 5 6 7

gpu23:2625:2638 [0] NCCL INFO Channel 07/08 : 0 1 6 7 4 5 2 3

gpu23:2625:2638 [0] NCCL INFO threadThresholds 8/8/64 | 64/8/64 | 8/8/64

gpu23:2625:2638 [0] NCCL INFO Trees [0] 1/-1/-1->0->-1|-1->0->1/-1/-1 [1] 2/-1/-1->0->-1|-1->0->2/-1/-1 [2] 3/-1/-1->0->1|1->0->3/-1/-1 [3] 1/-1/-1->0->3|3->0->1/-1/-1 [4] 1/-1/-1->0->5|5->0->1/-1/-1 [5] 2/-1/-1->0->6|6->0->2/-1/-1 [6] 3/-1/-1->0->1|1->0->3/-1/-1 [7] 1/-1/-1->0->3|3->0->1/-1/-1

gpu23:2625:2638 [0] NCCL INFO Setting affinity for GPU 0 to 10,00000001

gpu24:2321:2333 [0] NCCL INFO Channel 00 : 4[2000] -> 5[3000] via direct shared memory

gpu24:2321:2334 [1] NCCL INFO Channel 00 : 5[3000] -> 6[83000] via direct shared memory

gpu23:2625:2638 [0] NCCL INFO Channel 00 : 7[84000] -> 0[2000] [receive] via NET/Socket/0

gpu24:2321:2336 [3] NCCL INFO Channel 00 : 7[84000] -> 0[2000] [send] via NET/Socket/0

gpu23:2625:2639 [1] NCCL INFO Channel 00 : 1[3000] -> 2[83000] via direct shared memory

gpu23:2625:2640 [2] NCCL INFO Channel 00 : 2[83000] -> 3[84000] via direct shared memory

gpu23:2625:2641 [3] NCCL INFO Channel 00 : 3[84000] -> 2[83000] via direct shared memory

gpu23:2625:2638 [0] NCCL INFO Channel 00 : 0[2000] -> 1[3000] via direct shared memory

gpu24:2321:2335 [2] NCCL INFO Channel 00 : 6[83000] -> 7[84000] via direct shared memory

gpu24:2321:2333 [0] NCCL INFO Channel 00 : 4[2000] -> 1[3000] [send] via NET/Socket/1

gpu24:2321:2336 [3] NCCL INFO Channel 00 : 7[84000] -> 6[83000] via direct shared memory

gpu24:2321:2334 [1] NCCL INFO Channel 00 : 5[3000] -> 4[2000] via direct shared memory

gpu23:2625:2640 [2] NCCL INFO Channel 00 : 2[83000] -> 1[3000] via direct shared memory

You can see from the log that there are 8 channels in total, and the inter-node communication goes through …

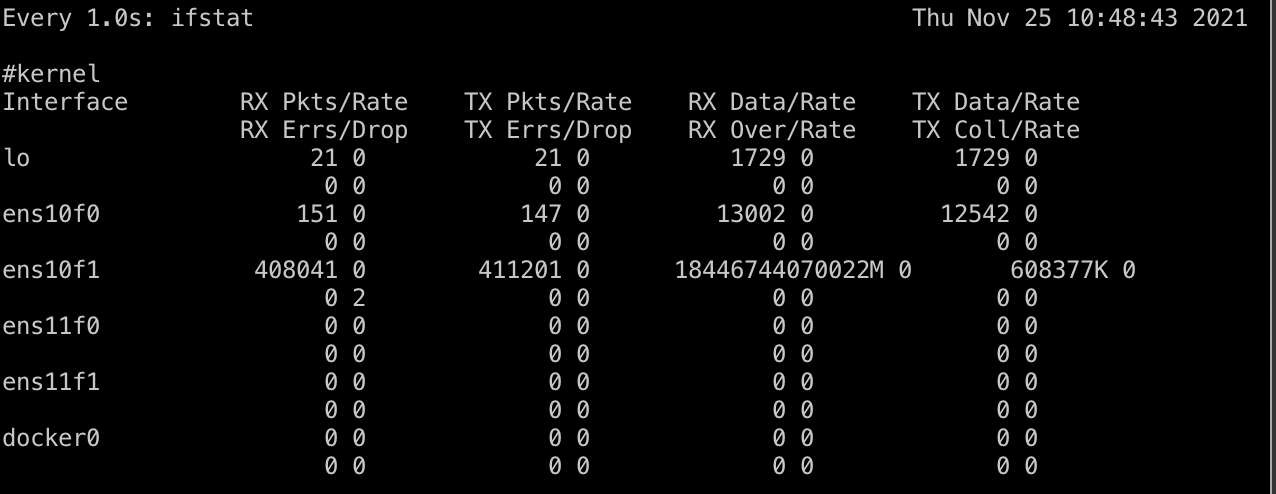

I didn't find … Also, here is another piece of information that may be useful: I changed the perf algorithm from …
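To check which socket devices the inter-node channels use, the `via NET/...` lines in a log like the one above can be tallied per device. This Python sketch assumes the `Channel NN : a[busid] -> b[busid] ... via NET/Socket/N` line format shown in that log (other NCCL versions may format it differently); `channels_per_net_device` is a hypothetical helper.

```python
import re
from collections import defaultdict
from typing import Dict, Iterable, List, Set

# Matches e.g. "Channel 00 : 7[84000] -> 0[2000] [receive] via NET/Socket/0"
NET_RE = re.compile(r"Channel (\d+) : .*\bvia (NET/\S+)")


def channels_per_net_device(lines: Iterable[str]) -> Dict[str, List[int]]:
    """Map each NET/... device seen in the log to the sorted channel ids using it."""
    usage: Dict[str, Set[int]] = defaultdict(set)
    for line in lines:
        m = NET_RE.search(line)
        if m:  # "via direct shared memory" lines do not match and are skipped
            usage[m.group(2)].add(int(m.group(1)))
    return {dev: sorted(chans) for dev, chans in usage.items()}


if __name__ == "__main__":
    sample = [
        "gpu24:2321:2333 [0] NCCL INFO Channel 00 : 3[84000] -> 4[2000] [receive] via NET/Socket/0",
        "gpu24:2321:2333 [0] NCCL INFO Channel 00 : 4[2000] -> 5[3000] via direct shared memory",
        "gpu24:2321:2333 [0] NCCL INFO Channel 00 : 4[2000] -> 1[3000] [send] via NET/Socket/1",
    ]
    print(channels_per_net_device(sample))
```

An open log file can be passed in place of `sample`, since a file iterates line by line. Note that this only shows which NCCL socket device each channel is mapped to; as discussed in the thread, the kernel's routing still decides which physical NIC carries the packets.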


Yes, … OK, so you do seem to have a routing issue, caused by 3 NICs being in the same subnet and causing … I still can't quite explain why allreduce produces traffic on only one NIC rather than two (given the 3/4-1/4 balance you're seeing on alltoall), but it would be good to put all 3 NICs in different subnets and run alltoall and allreduce again. That will probably give us more insight into what's happening.


Thanks very much for your reply. I will change the subnet configuration (it's a bit complex) and check later whether it makes any difference. I also found one more piece of information: no matter how I set the … So, could you explain how NCCL handles NUMA affinity? Does NCCL always perform the socket operations on a single NUMA node? I'm also curious about how NCCL routes its packets between different GPUs; is there any documentation or related code I should read? I read some code of …


Ah, sorry about missing that earlier. By default, mpirun binds every task to a single core. Can you try again, adding …
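The log lines `Setting affinity for GPU 0 to 10,00000001` (and the same mask for GPU 1) can be decoded to see which CPUs the process is allowed to run on. This Python sketch assumes the string follows the Linux cpumask convention (comma-separated 32-bit hex words, most significant word first); `cpumask_to_cpus` is a hypothetical helper.

```python
from typing import List


def cpumask_to_cpus(mask: str) -> List[int]:
    """Decode a cpumask string like "10,00000001" into a list of CPU ids.

    Assumption: comma-separated 32-bit hex words, most significant first.
    """
    value = 0
    for word in mask.split(","):
        # Shift what we have so far up one word and append the next (less significant) word.
        value = (value << 32) | int(word, 16)
    return [bit for bit in range(value.bit_length()) if (value >> bit) & 1]


if __name__ == "__main__":
    print(cpumask_to_cpus("10,00000001"))  # → [0, 36]
```

Under that assumption the mask covers only CPUs 0 and 36, which would be consistent with the per-task single-core binding described above. Whether CPU 36 is the hyperthread sibling of CPU 0 depends on the machine's topology; `lscpu -e` lists the core and NUMA node of each logical CPU.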

I was testing NCCL between two nodes, each with 4 GPUs and 4 NICs on Ethernet.

But I found that NCCL only makes use of one Ethernet NIC, even though I have set `NCCL_SOCKET_IFNAME=nic0,nic1,nic2,nic3`. The NCCL debug info shows that it has detected all 4 NICs (`NCCL INFO Bootstrap : Using xxx`). I saw #452 and know that NCCL supports multi-NIC on RDMA automatically, so I wonder whether NCCL supports multi-NIC on Ethernet?
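As a sanity check on the `NCCL_SOCKET_IFNAME=nic0,nic1,nic2,nic3` setting, here is a simplified Python model of how the NCCL documentation describes this variable: a comma-separated list of interface-name prefixes, where a leading `^` excludes the matching interfaces and a leading `=` requires exact names instead of prefixes. It is a sketch of the documented rules, not NCCL's parsing code; `select_interfaces` and the interface list are hypothetical.

```python
from typing import List


def select_interfaces(spec: str, available: List[str]) -> List[str]:
    """Filter interface names the way NCCL_SOCKET_IFNAME is documented to.

    Simplified model: "^" (exclude) and "=" (exact match) apply to the whole list.
    """
    exclude = spec.startswith("^")
    if exclude:
        spec = spec[1:]
    exact = spec.startswith("=")
    if exact:
        spec = spec[1:]
    patterns = [p for p in spec.split(",") if p]

    def matches(name: str) -> bool:
        return any(name == p if exact else name.startswith(p) for p in patterns)

    # Keep matches in include mode, non-matches in exclude mode.
    return [n for n in available if matches(n) != exclude]


if __name__ == "__main__":
    avail = ["lo", "nic0", "nic1", "nic2", "nic3", "docker0"]  # hypothetical host
    print(select_interfaces("nic0,nic1,nic2,nic3", avail))
```

Because entries are prefixes, `nic` alone would select the same four interfaces here, while `=nic0` would match only an interface named exactly `nic0`. Selecting all four interfaces is consistent with NCCL reporting 4 NICs; whether traffic actually spreads across them is the routing question discussed in the replies.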
