+

Python SDK Quick Start (click to expand)

#### Quick installation

@@ -131,7 +131,7 @@ cv2.imwrite("vis_image.jpg", vis_im)

-

+

C++ SDK Quick Start (click to expand)

diff --git a/README_EN.md b/README_EN.md

old mode 100644

new mode 100755

diff --git a/cmake/opencv.cmake b/cmake/opencv.cmake

index 912cf2917d..87f8c8bcd9 100755

--- a/cmake/opencv.cmake

+++ b/cmake/opencv.cmake

@@ -41,10 +41,12 @@ elseif(IOS)

else()

if(CMAKE_HOST_SYSTEM_PROCESSOR MATCHES "aarch64")

set(OPENCV_FILENAME "opencv-linux-aarch64-3.4.14")

- elseif(TARGET_ABI MATCHES "armhf")

- set(OPENCV_FILENAME "opencv-armv7hf")

else()

- set(OPENCV_FILENAME "opencv-linux-x64-3.4.16")

+ if(ENABLE_TIMVX)

+ set(OPENCV_FILENAME "opencv-armv7hf")

+ else()

+ set(OPENCV_FILENAME "opencv-linux-x64-3.4.16")

+ endif()

endif()

if(ENABLE_OPENCV_CUDA)

if(CMAKE_HOST_SYSTEM_PROCESSOR MATCHES "aarch64")

@@ -57,7 +59,7 @@ endif()

set(OPENCV_INSTALL_DIR ${THIRD_PARTY_PATH}/install/)

if(ANDROID)

set(OPENCV_URL_PREFIX "https://bj.bcebos.com/fastdeploy/third_libs")

-elseif(TARGET_ABI MATCHES "armhf")

+elseif(ENABLE_TIMVX)

set(OPENCV_URL_PREFIX "https://bj.bcebos.com/fastdeploy/test")

else() # TODO: use fastdeploy/third_libs instead.

set(OPENCV_URL_PREFIX "https://bj.bcebos.com/paddle2onnx/libs")

@@ -185,7 +187,7 @@ else()

file(RENAME ${THIRD_PARTY_PATH}/install/${OPENCV_FILENAME}/ ${THIRD_PARTY_PATH}/install/opencv)

set(OPENCV_FILENAME opencv)

set(OpenCV_DIR ${THIRD_PARTY_PATH}/install/${OPENCV_FILENAME})

- if(TARGET_ABI MATCHES "armhf")

+ if(ENABLE_TIMVX)

set(OpenCV_DIR ${OpenCV_DIR}/lib/cmake/opencv4)

endif()

if (WIN32)

diff --git a/cmake/paddlelite.cmake b/cmake/paddlelite.cmake

index d86ccf2d1d..12a069f6e4 100755

--- a/cmake/paddlelite.cmake

+++ b/cmake/paddlelite.cmake

@@ -59,10 +59,12 @@ elseif(ANDROID)

else() # Linux

if(CMAKE_HOST_SYSTEM_PROCESSOR MATCHES "aarch64")

set(PADDLELITE_URL "${PADDLELITE_URL_PREFIX}/lite-linux-arm64-20220920.tgz")

- elseif(TARGET_ABI MATCHES "armhf")

- set(PADDLELITE_URL "https://bj.bcebos.com/fastdeploy/test/lite-linux_armhf_1101.tgz")

else()

- message(FATAL_ERROR "Only support Linux aarch64 now, x64 is not supported with backend Paddle Lite.")

+ if(ENABLE_TIMVX)

+ set(PADDLELITE_URL "https://bj.bcebos.com/fastdeploy/test/lite-linux_armhf_1130.tgz")

+ else()

+ message(FATAL_ERROR "Only support Linux aarch64 or ENABLE_TIMVX now, x64 is not supported with backend Paddle Lite.")

+ endif()

endif()

endif()
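The Linux branch of this hunk amounts to a three-way decision. A minimal shell sketch of the same selection follows, for illustration only: the function name `select_paddlelite_url` and its arguments are hypothetical, the URL prefix is passed in because `PADDLELITE_URL_PREFIX` is defined outside this hunk, and the sketch treats only the literal value `ON` as enabled, whereas CMake accepts other truthy values too.

```shell
#!/bin/sh
# Hypothetical helper mirroring the Linux branch of cmake/paddlelite.cmake
# after this change. The real logic lives in CMake; this is only a sketch.
#   $1: host processor string (for example the output of `uname -m`)
#   $2: value of ENABLE_TIMVX (only "ON" is treated as enabled here)
#   $3: URL prefix (stands in for PADDLELITE_URL_PREFIX)
select_paddlelite_url() {
  host_proc="$1"
  enable_timvx="$2"
  url_prefix="$3"
  case "$host_proc" in
    *aarch64*)
      # Native aarch64 hosts use the arm64 package.
      printf '%s\n' "${url_prefix}/lite-linux-arm64-20220920.tgz"
      ;;
    *)
      if [ "$enable_timvx" = "ON" ]; then
        # Cross-compiling for TIM-VX (armhf) uses the armhf package.
        printf '%s\n' "https://bj.bcebos.com/fastdeploy/test/lite-linux_armhf_1130.tgz"
      else
        echo "Only support Linux aarch64 or ENABLE_TIMVX now, x64 is not supported with backend Paddle Lite." >&2
        return 1
      fi
      ;;
  esac
}
```

For example, `select_paddlelite_url "$(uname -m)" ON "$PREFIX"` prints the armhf package URL on an x86_64 host.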

diff --git a/cmake/timvx.cmake b/cmake/timvx.cmake

index 153f0de190..c6a7d54d27 100755

--- a/cmake/timvx.cmake

+++ b/cmake/timvx.cmake

@@ -1,11 +1,10 @@

-if (NOT DEFINED TARGET_ABI)

+if (NOT DEFINED CMAKE_SYSTEM_PROCESSOR)

set(CMAKE_SYSTEM_NAME Linux)

set(CMAKE_SYSTEM_PROCESSOR arm)

set(CMAKE_C_COMPILER "arm-linux-gnueabihf-gcc")

set(CMAKE_CXX_COMPILER "arm-linux-gnueabihf-g++")

set(CMAKE_CXX_FLAGS "-march=armv7-a -mfloat-abi=hard -mfpu=neon-vfpv4 ${CMAKE_CXX_FLAGS}")

set(CMAKE_C_FLAGS "-march=armv7-a -mfloat-abi=hard -mfpu=neon-vfpv4 ${CMAKE_C_FLAGS}" )

- set(TARGET_ABI armhf)

set(CMAKE_BUILD_TYPE MinSizeRel)

else()

if(NOT ${ENABLE_LITE_BACKEND})

diff --git a/docs/cn/build_and_install/README.md b/docs/cn/build_and_install/README.md

index a40d571f5a..8be1f745a8 100755

--- a/docs/cn/build_and_install/README.md

+++ b/docs/cn/build_and_install/README.md

@@ -8,10 +8,10 @@

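## Build and install from source

- [GPU deployment environment](gpu.md)

- [CPU deployment environment](cpu.md)

-- [CPU deployment environment](ipu.md)

+- [IPU deployment environment](ipu.md)

- [Jetson deployment environment](jetson.md)

- [Android deployment environment](android.md)

-- [Rockchip RK1126 deployment environment](rk1126.md)

+- [Rockchip RV1126 deployment environment](rv1126.md)

## FastDeploy build options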

@@ -22,6 +22,7 @@

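| ENABLE_PADDLE_BACKEND | Default OFF. Whether to build with the Paddle Inference backend (recommended ON for CPU/GPU) |

| ENABLE_LITE_BACKEND | Default OFF. Whether to build with the Paddle Lite backend (must be ON when building the Android library) |

| ENABLE_RKNPU2_BACKEND | Default OFF. Whether to build with the RKNPU2 backend (recommended ON for RK3588/RK3568/RK3566) |

+| ENABLE_TIMVX | Default OFF. Must be set to ON when deploying on RV1126/RV1109 |

| ENABLE_TRT_BACKEND | Default OFF. Whether to build with the TensorRT backend (recommended ON for GPU) |

| ENABLE_OPENVINO_BACKEND | Default OFF. Whether to build with the OpenVINO backend (recommended ON for CPU) |

| ENABLE_VISION | Default OFF. Whether to build the vision model deployment module |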

diff --git a/docs/cn/build_and_install/rk1126.md b/docs/cn/build_and_install/rk1126.md

deleted file mode 100755

index 4eda019810..0000000000

--- a/docs/cn/build_and_install/rk1126.md

+++ /dev/null

@@ -1,63 +0,0 @@

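-# Building and installing for the Rockchip RK1126 deployment environment

-

-FastDeploy supports deployment and inference on Rockchip SoCs through the Paddle Lite backend.

-For more details, see the [Paddle Lite deployment example](https://paddle-lite.readthedocs.io/zh/develop/demo_guides/verisilicon_timvx.html).

-

-This document describes how to cross-compile the Paddle Lite based FastDeploy C++ library.

-

-The relevant build options are:

-|Build option|Default|Description|Notes|

-|:---|:---|:---|:---|

-|ENABLE_LITE_BACKEND|OFF|Must be set to ON when building the RK library| - |

-

-For more build options, see [FastDeploy build options](./README.md)

-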

-## 交叉编译环境搭建

-

-### Host requirements

-- OS: Ubuntu == 16.04

-- cmake: version >= 3.10.0

-

-### Environment setup

-```bash

- # 1. Install basic software

-apt update

-apt-get install -y --no-install-recommends \

- gcc g++ git make wget python unzip

-

-# 2. Install arm gcc toolchains

-apt-get install -y --no-install-recommends \

- g++-arm-linux-gnueabi gcc-arm-linux-gnueabi \

- g++-arm-linux-gnueabihf gcc-arm-linux-gnueabihf \

- gcc-aarch64-linux-gnu g++-aarch64-linux-gnu

-

-# 3. Install cmake 3.10 or above

-wget -c https://mms-res.cdn.bcebos.com/cmake-3.10.3-Linux-x86_64.tar.gz && \

- tar xzf cmake-3.10.3-Linux-x86_64.tar.gz && \

- mv cmake-3.10.3-Linux-x86_64 /opt/cmake-3.10 && \

- ln -s /opt/cmake-3.10/bin/cmake /usr/bin/cmake && \

- ln -s /opt/cmake-3.10/bin/ccmake /usr/bin/ccmake

-```

-

-## 基于 PaddleLite 的 FastDeploy 交叉编译库编译

-Once the cross-compilation environment is ready, build with the following commands:

-```bash

-# Download the latest source code

-git clone https://github.com/PaddlePaddle/FastDeploy.git

-cd FastDeploy

-mkdir build && cd build

-

-# CMake configuration with RK toolchain

-cmake -DCMAKE_TOOLCHAIN_FILE=./../cmake/timvx.cmake \

- -DENABLE_TIMVX=ON \

- -DCMAKE_INSTALL_PREFIX=fastdeploy-tmivx \

- -DENABLE_VISION=ON \ # 是否编译集成视觉模型的部署模块,可选择开启

- -Wno-dev ..

-

-# Build FastDeploy RK1126 C++ SDK

-make -j8

-make install

-```

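-When the build finishes, a fastdeploy-tmivx directory is generated, which indicates that the Paddle Lite TIM-VX based FastDeploy library has been built.

-

-To deploy a PaddleClas classification model on RK1126, see: [PaddleClas RK1126 development board C++ deployment example](../../../examples/vision/classification/paddleclas/rk1126/README.md)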

diff --git a/docs/cn/build_and_install/rv1126.md b/docs/cn/build_and_install/rv1126.md

new file mode 100755

index 0000000000..f3cd4ed6a7

--- /dev/null

+++ b/docs/cn/build_and_install/rv1126.md

@@ -0,0 +1,100 @@

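+# Building and installing for the Rockchip RV1126 deployment environment

+

+FastDeploy supports deployment and inference on Rockchip SoCs through the Paddle Lite backend.

+For more details, see the [Paddle Lite deployment example](https://www.paddlepaddle.org.cn/lite/develop/demo_guides/verisilicon_timvx.html).

+

+This document describes how to cross-compile the Paddle Lite based FastDeploy C++ library.

+

+The relevant build options are:

+|Build option|Default|Description|Notes|

+|:---|:---|:---|:---|

+|ENABLE_LITE_BACKEND|OFF|Must be set to ON when building the RK library| - |

+|ENABLE_TIMVX|OFF|Must be set to ON when building the RK library| - |

+

+For more build options, see [FastDeploy build options](./README.md)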

+

+## 交叉编译环境搭建

+

+### Host requirements

+- OS: Ubuntu == 16.04

+- cmake: version >= 3.10.0

+

+### Environment setup

+```bash

+# 1. Install basic software

+apt update

+apt-get install -y --no-install-recommends \

+ gcc g++ git make wget python unzip

+

+# 2. Install arm gcc toolchains

+apt-get install -y --no-install-recommends \

+ g++-arm-linux-gnueabi gcc-arm-linux-gnueabi \

+ g++-arm-linux-gnueabihf gcc-arm-linux-gnueabihf \

+ gcc-aarch64-linux-gnu g++-aarch64-linux-gnu

+

+# 3. Install cmake 3.10 or above

+wget -c https://mms-res.cdn.bcebos.com/cmake-3.10.3-Linux-x86_64.tar.gz && \

+ tar xzf cmake-3.10.3-Linux-x86_64.tar.gz && \

+ mv cmake-3.10.3-Linux-x86_64 /opt/cmake-3.10 && \

+ ln -s /opt/cmake-3.10/bin/cmake /usr/bin/cmake && \

+ ln -s /opt/cmake-3.10/bin/ccmake /usr/bin/ccmake

+```

+

+## 基于 PaddleLite 的 FastDeploy 交叉编译库编译

+Once the cross-compilation environment is ready, build with the following commands:

+```bash

+# Download the latest source code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy

+mkdir build && cd build

+

+# CMake configuration with RK toolchain (ENABLE_VISION is optional: it builds the vision model deployment module)

+cmake -DCMAKE_TOOLCHAIN_FILE=./../cmake/timvx.cmake \

+ -DENABLE_TIMVX=ON \

+ -DCMAKE_INSTALL_PREFIX=fastdeploy-tmivx \

+ -DENABLE_VISION=ON \

+ -Wno-dev ..

+

+# Build FastDeploy RV1126 C++ SDK

+make -j8

+make install

+```

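+When the build finishes, a fastdeploy-tmivx directory is generated, which indicates that the Paddle Lite TIM-VX based FastDeploy library has been built.

+

+## Preparing the device runtime environment

+Before deployment, make sure that the version of the VeriSilicon Linux kernel NPU driver galcore.so, and the chip model it targets, match the dependency libraries. Log in to the development board and run the following command to query the NPU driver version. The driver version recommended by Rockchip is 6.4.6.5.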

+```bash

+dmesg | grep Galcore

+```

+

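+If the current version does not match, read the following carefully to make sure the underlying NPU driver environment is correct.

+

+There are two ways to change the current NPU driver version:

+1. Manually replace the NPU driver. (Recommended)

+2. Flash firmware whose NPU driver version meets the requirement.

+

+### Manually replacing the NPU driver

+1. Download and extract the PaddleLite demo with the following commands; it ships ready-made driver files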

+```bash

+wget https://paddlelite-demo.bj.bcebos.com/devices/generic/PaddleLite-generic-demo.tar.gz

+tar -xf PaddleLite-generic-demo.tar.gz

+```

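+2. Run `uname -a` to check the Linux kernel version and confirm that it is 4.19.111.

+3. Upload `galcore.ko` from `PaddleLite-generic-demo/libs/PaddleLite/linux/armhf/lib/verisilicon_timvx/viv_sdk_6_4_6_5/lib/1126/4.19.111/` to the development board.

+

+4. Log in to the development board, run `sudo rmmod galcore` to unload the original driver, then run `sudo insmod galcore.ko` to load the uploaded driver. (Whether sudo is needed depends on the board; for some devices connected over adb, run `adb root` first.) If this step fails, switch to method 2.

+5. On the development board, run `dmesg | grep Galcore` to query the NPU driver version and confirm that it is 6.4.6.5.

+

+### Flashing firmware

+Depending on the board model, ask the board vendor or the official support channel for the firmware matching NPU driver version 6.4.6.5 and for the flashing procedure.

+

+For more details, see [PaddleLite: preparing the device environment](https://www.paddlepaddle.org.cn/lite/develop/demo_guides/verisilicon_timvx.html#zhunbeishebeihuanjing)

+

+## FastDeploy deployment examples on RV1126

+1. To deploy a PaddleClas classification model on RV1126, see: [C++ deployment example for PaddleClas classification models on RV1126](../../../examples/vision/classification/paddleclas/rv1126/README.md)

+

+2. To deploy a PPYOLOE detection model on RV1126, see: [C++ deployment example for PPYOLOE detection models on RV1126](../../../examples/vision/detection/paddledetection/rv1126/README.md)

+

+3. To deploy a YOLOv5 detection model on RV1126, see: [C++ deployment example for YOLOv5 detection models on RV1126](../../../examples/vision/detection/yolov5/rv1126/README.md)

+

+4. To deploy a PP-LiteSeg segmentation model on RV1126, see: [C++ deployment example for PP-LiteSeg segmentation models on RV1126](../../../examples/vision/segmentation/paddleseg/rv1126/README.md)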
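The driver check described in rv1126.md (query `dmesg`, compare against 6.4.6.5) can be scripted. The sketch below is a hedged illustration, not part of this change: the function names are hypothetical, and the pattern assumes the kernel log prints the version as a dotted number on a line that mentions Galcore, which may differ between boards.

```shell
#!/bin/sh
# Driver version recommended in rv1126.md.
EXPECTED_GALCORE_VERSION="6.4.6.5"

# Reads kernel log text on stdin and prints the first dotted version number
# (at least three components) found on a line mentioning "Galcore".
# The log line format is an assumption; adjust the pattern for your board.
galcore_version_from_log() {
  grep -i 'galcore' | grep -Eo '[0-9]+(\.[0-9]+){2,}' | head -n 1
}

# Returns 0 when the version read from stdin equals the expected version.
galcore_version_ok() {
  found="$(galcore_version_from_log)"
  [ "$found" = "$EXPECTED_GALCORE_VERSION" ]
}
```

On the board this could be used as `dmesg | galcore_version_ok || echo "NPU driver version mismatch"`.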

diff --git a/examples/vision/classification/paddleclas/quantize/README.md b/examples/vision/classification/paddleclas/quantize/README.md

old mode 100644

new mode 100755

index 0a814e0e37..e55f95e261

--- a/examples/vision/classification/paddleclas/quantize/README.md

+++ b/examples/vision/classification/paddleclas/quantize/README.md

@@ -4,7 +4,7 @@ FastDeploy已支持部署量化模型,并提供一键模型自动化压缩的工

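## FastDeploy one-click automatic model compression tool

FastDeploy provides a one-click automatic model compression tool that quantizes a model from a single configuration file.

-For a detailed tutorial, see the [one-click automatic model compression tool](../../../../../tools/auto_compression/)

+For a detailed tutorial, see the [one-click automatic model compression tool](../../../../../tools/common_tools/auto_compression/)

Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.

## Download quantized PaddleClas models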
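The note in this hunk about inference_cls.yaml describes a manual copy step. A minimal shell sketch of that step follows; the function name `copy_model_config` and the folder arguments are hypothetical, and only the file name inference_cls.yaml comes from the README.

```shell
#!/bin/sh
# Copies a config file from the FP32 model folder into the quantized model
# folder when the quantized folder does not already contain it.
#   $1: FP32 model folder
#   $2: quantized model folder
#   $3: config file name (defaults to inference_cls.yaml)
copy_model_config() {
  fp32_dir="$1"
  quant_dir="$2"
  cfg_name="${3:-inference_cls.yaml}"
  if [ ! -f "${fp32_dir}/${cfg_name}" ]; then
    echo "missing ${fp32_dir}/${cfg_name}" >&2
    return 1
  fi
  # Leave an existing config in the quantized folder untouched.
  if [ ! -f "${quant_dir}/${cfg_name}" ]; then
    cp "${fp32_dir}/${cfg_name}" "${quant_dir}/${cfg_name}"
  fi
}
```

The same helper could be called with `infer_cfg.yml` as the third argument for detection models, which need that file instead.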

diff --git a/examples/vision/classification/paddleclas/quantize/cpp/README.md b/examples/vision/classification/paddleclas/quantize/cpp/README.md

old mode 100644

new mode 100755

index 3dced039ca..999cc7fde3

--- a/examples/vision/classification/paddleclas/quantize/cpp/README.md

+++ b/examples/vision/classification/paddleclas/quantize/cpp/README.md

@@ -8,7 +8,7 @@

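### Preparing the quantized model

- 1. You can deploy the quantized models provided by FastDeploy directly.

-- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

+- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/common_tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

## Deployment example with the quantized ResNet50_Vd model (requires FastDeploy 0.7.0 or later, x.x.x>=0.7.0)

Run the following commands in this directory to build the example and deploy the quantized model.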

diff --git a/examples/vision/classification/paddleclas/quantize/python/README.md b/examples/vision/classification/paddleclas/quantize/python/README.md

old mode 100644

new mode 100755

index 6b874e77ab..a7fc4f9d3a

--- a/examples/vision/classification/paddleclas/quantize/python/README.md

+++ b/examples/vision/classification/paddleclas/quantize/python/README.md

@@ -8,7 +8,7 @@

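### Preparing the quantized model

- 1. You can deploy the quantized models provided by FastDeploy directly.

-- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

+- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/common_tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

## Deployment example with the quantized ResNet50_Vd model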

diff --git a/examples/vision/classification/paddleclas/rk1126/README.md b/examples/vision/classification/paddleclas/rv1126/README.md

similarity index 61%

rename from examples/vision/classification/paddleclas/rk1126/README.md

rename to examples/vision/classification/paddleclas/rv1126/README.md

index bac6d7bf8d..065939a278 100755

--- a/examples/vision/classification/paddleclas/rk1126/README.md

+++ b/examples/vision/classification/paddleclas/rv1126/README.md

@@ -1,11 +1,11 @@

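-# Deploying quantized PaddleClas models on RK1126

-FastDeploy now supports deploying quantized PaddleClas models to RK1126 through PaddleLite.

+# Deploying quantized PaddleClas models on RV1126

+FastDeploy now supports deploying quantized PaddleClas models to RV1126 through PaddleLite.

For model quantization and downloads of quantized models, see: [Model quantization](../quantize/README.md)

## Detailed deployment documentation

-Only C++ deployment is supported on RK1126.

+Only C++ deployment is supported on RV1126.

- [C++ deployment](cpp)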

diff --git a/examples/vision/classification/paddleclas/rk1126/cpp/CMakeLists.txt b/examples/vision/classification/paddleclas/rv1126/cpp/CMakeLists.txt

similarity index 100%

rename from examples/vision/classification/paddleclas/rk1126/cpp/CMakeLists.txt

rename to examples/vision/classification/paddleclas/rv1126/cpp/CMakeLists.txt

diff --git a/examples/vision/classification/paddleclas/rk1126/cpp/README.md b/examples/vision/classification/paddleclas/rv1126/cpp/README.md

similarity index 65%

rename from examples/vision/classification/paddleclas/rk1126/cpp/README.md

rename to examples/vision/classification/paddleclas/rv1126/cpp/README.md

index 6d8ecd1512..feaba462fe 100755

--- a/examples/vision/classification/paddleclas/rk1126/cpp/README.md

+++ b/examples/vision/classification/paddleclas/rv1126/cpp/README.md

@@ -1,22 +1,22 @@

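-# PaddleClas RK1126 development board C++ deployment example

-The `infer.cc` provided in this directory helps you quickly deploy quantized PaddleClas models on RK1126 with accelerated inference.

+# PaddleClas RV1126 development board C++ deployment example

+The `infer.cc` provided in this directory helps you quickly deploy quantized PaddleClas models on RV1126 with accelerated inference.

## Deployment preparation

### Preparing the FastDeploy cross-compilation environment

-- 1. For the software and hardware requirements and for setting up the cross-compilation environment, see: [Preparing the FastDeploy cross-compilation environment](../../../../../../docs/cn/build_and_install/rk1126.md#交叉编译环境搭建)

+- 1. For the software and hardware requirements and for setting up the cross-compilation environment, see: [Preparing the FastDeploy cross-compilation environment](../../../../../../docs/cn/build_and_install/rv1126.md#交叉编译环境搭建)

### Preparing the quantized model

- 1. You can deploy the quantized models provided by FastDeploy directly.

-- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

+- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/common_tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the inference_cls.yaml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

- For more information on quantization, see [Model quantization](../../quantize/README.md)

-## Deploying the quantized ResNet50_Vd classification model on RK1126

-Follow these steps to deploy the quantized ResNet50_Vd model on RK1126:

-1. Cross-compile the FastDeploy library. For details, see: [Cross-compiling FastDeploy](../../../../../../docs/cn/build_and_install/rk1126.md#基于-paddlelite-的-fastdeploy-交叉编译库编译)

+## Deploying the quantized ResNet50_Vd classification model on RV1126

+Follow these steps to deploy the quantized ResNet50_Vd model on RV1126:

+1. Cross-compile the FastDeploy library. For details, see: [Cross-compiling FastDeploy](../../../../../../docs/cn/build_and_install/rv1126.md#基于-paddlelite-的-fastdeploy-交叉编译库编译)

2. Copy the compiled library to the current directory with the following command: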

```bash

-cp -r FastDeploy/build/fastdeploy-tmivx/ FastDeploy/examples/vision/classification/paddleclas/rk1126/cpp/

+cp -r FastDeploy/build/fastdeploy-tmivx/ FastDeploy/examples/vision/classification/paddleclas/rv1126/cpp/

```

3. Download the model and sample image needed for deployment into the current directory:

@@ -32,7 +32,7 @@ cp -r ILSVRC2012_val_00000010.jpeg images

4. Build the deployment example with the following commands:

```bash

mkdir build && cd build

-cmake -DCMAKE_TOOLCHAIN_FILE=../fastdeploy-tmivx/timvx.cmake -DFASTDEPLOY_INSTALL_DIR=fastdeploy-tmivx ..

+cmake -DCMAKE_TOOLCHAIN_FILE=${PWD}/../fastdeploy-tmivx/timvx.cmake -DFASTDEPLOY_INSTALL_DIR=${PWD}/../fastdeploy-tmivx ..

make -j8

make install

# After a successful build, an install folder is generated containing the demo executable and the libraries needed for deployment

@@ -41,7 +41,7 @@ make install

5. Deploy the ResNet50_vd classification model to Rockchip RV1126 with the adb tool, using the following commands:

```bash

# Enter the install directory

-cd FastDeploy/examples/vision/classification/paddleclas/rk1126/cpp/build/install/

+cd FastDeploy/examples/vision/classification/paddleclas/rv1126/cpp/build/install/

# The command below means: bash run_with_adb.sh <demo to run> <model path> <image path> <DEVICE_ID of the device>

bash run_with_adb.sh infer_demo ResNet50_vd_infer ILSVRC2012_val_00000010.jpeg $DEVICE_ID

```

@@ -50,4 +50,4 @@ bash run_with_adb.sh infer_demo ResNet50_vd_infer ILSVRC2012_val_00000010.jpeg $

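-Note in particular that models deployed on RK1126 must be quantized. For model quantization, see: [Model quantization](../../../../../../docs/cn/quantize.md)

+Note in particular that models deployed on RV1126 must be quantized. For model quantization, see: [Model quantization](../../../../../../docs/cn/quantize.md)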

diff --git a/examples/vision/classification/paddleclas/rk1126/cpp/infer.cc b/examples/vision/classification/paddleclas/rv1126/cpp/infer.cc

similarity index 100%

rename from examples/vision/classification/paddleclas/rk1126/cpp/infer.cc

rename to examples/vision/classification/paddleclas/rv1126/cpp/infer.cc

diff --git a/examples/vision/classification/paddleclas/rk1126/cpp/run_with_adb.sh b/examples/vision/classification/paddleclas/rv1126/cpp/run_with_adb.sh

similarity index 100%

rename from examples/vision/classification/paddleclas/rk1126/cpp/run_with_adb.sh

rename to examples/vision/classification/paddleclas/rv1126/cpp/run_with_adb.sh

diff --git a/examples/vision/detection/paddledetection/quantize/README.md b/examples/vision/detection/paddledetection/quantize/README.md

old mode 100644

new mode 100755

index b041b34684..f0caf81123

--- a/examples/vision/detection/paddledetection/quantize/README.md

+++ b/examples/vision/detection/paddledetection/quantize/README.md

@@ -4,7 +4,7 @@ FastDeploy已支持部署量化模型,并提供一键模型自动化压缩的工

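## FastDeploy one-click automatic model compression tool

FastDeploy provides a one-click automatic model compression tool that quantizes a model from a single configuration file.

-For a detailed tutorial, see the [one-click automatic model compression tool](../../../../../tools/auto_compression/)

+For a detailed tutorial, see the [one-click automatic model compression tool](../../../../../tools/common_tools/auto_compression/)

## Download the quantized PP-YOLOE-l model

You can also download the quantized models in the table below and deploy them directly. (Click a model name to download.)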

diff --git a/examples/vision/detection/paddledetection/quantize/cpp/README.md b/examples/vision/detection/paddledetection/quantize/cpp/README.md

old mode 100644

new mode 100755

index 793fc3938e..4511eb5089

--- a/examples/vision/detection/paddledetection/quantize/cpp/README.md

+++ b/examples/vision/detection/paddledetection/quantize/cpp/README.md

@@ -9,7 +9,7 @@

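### Preparing the quantized model

- 1. You can deploy the quantized models provided by FastDeploy directly.

-- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the infer_cfg.yml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

+- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/common_tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized detection model still requires the infer_cfg.yml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

## Deployment example with the quantized PP-YOLOE-l model (requires FastDeploy 0.7.0 or later, x.x.x>=0.7.0)

Run the following commands in this directory to build the example and deploy the quantized model.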

diff --git a/examples/vision/detection/paddledetection/quantize/python/README.md b/examples/vision/detection/paddledetection/quantize/python/README.md

old mode 100644

new mode 100755

index 3efcd232b9..15e0d463e0

--- a/examples/vision/detection/paddledetection/quantize/python/README.md

+++ b/examples/vision/detection/paddledetection/quantize/python/README.md

@@ -8,7 +8,7 @@

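### Preparing the quantized model

- 1. You can deploy the quantized models provided by FastDeploy directly.

-- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized classification model still requires the infer_cfg.yml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

+- 2. You can also quantize a model yourself with the [one-click automatic model compression tool](../../../../../../tools/common_tools/auto_compression/) provided by FastDeploy and deploy the resulting quantized model. (Note: inference with a quantized detection model still requires the infer_cfg.yml file from the FP32 model folder. A model folder you quantize yourself does not contain this yaml file; copy it from the FP32 model folder into the quantized model folder.)

## Deployment example with the quantized PP-YOLOE-l model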

diff --git a/examples/vision/detection/paddledetection/rk1126/download_models_and_libs.sh b/examples/vision/detection/paddledetection/rk1126/download_models_and_libs.sh

deleted file mode 100755

index e7a0fca7a2..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/download_models_and_libs.sh

+++ /dev/null

@@ -1,25 +0,0 @@

-#!/bin/bash

-

-set -e

-

-DETECTION_MODEL_DIR="$(pwd)/picodet_detection/models"

-LIBS_DIR="$(pwd)"

-

-DETECTION_MODEL_URL="https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/models/picodetv2_relu6_coco_no_fuse.tar.gz"

-LIBS_URL="https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/Paddle-Lite-libs.tar.gz"

-

-download_and_uncompress() {

- local url="$1"

- local dir="$2"

-

- echo "Start downloading ${url}"

- curl -L ${url} > ${dir}/download.tar.gz

- cd ${dir}

- tar -zxvf download.tar.gz

- rm -f download.tar.gz

-}

-

-download_and_uncompress "${DETECTION_MODEL_URL}" "${DETECTION_MODEL_DIR}"

-download_and_uncompress "${LIBS_URL}" "${LIBS_DIR}"

-

-echo "Download successful!"

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/CMakeLists.txt b/examples/vision/detection/paddledetection/rk1126/picodet_detection/CMakeLists.txt

deleted file mode 100755

index 575db78adf..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/CMakeLists.txt

+++ /dev/null

@@ -1,62 +0,0 @@

-cmake_minimum_required(VERSION 3.10)

-set(CMAKE_SYSTEM_NAME Linux)

-if(TARGET_ARCH_ABI STREQUAL "armv8")

- set(CMAKE_SYSTEM_PROCESSOR aarch64)

- set(CMAKE_C_COMPILER "aarch64-linux-gnu-gcc")

- set(CMAKE_CXX_COMPILER "aarch64-linux-gnu-g++")

-elseif(TARGET_ARCH_ABI STREQUAL "armv7hf")

- set(CMAKE_SYSTEM_PROCESSOR arm)

- set(CMAKE_C_COMPILER "arm-linux-gnueabihf-gcc")

- set(CMAKE_CXX_COMPILER "arm-linux-gnueabihf-g++")

-else()

- message(FATAL_ERROR "Unknown arch abi ${TARGET_ARCH_ABI}, only support armv8 and armv7hf.")

- return()

-endif()

-

-project(object_detection_demo)

-

-message(STATUS "TARGET ARCH ABI: ${TARGET_ARCH_ABI}")

-message(STATUS "PADDLE LITE DIR: ${PADDLE_LITE_DIR}")

-include_directories(${PADDLE_LITE_DIR}/include)

-link_directories(${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI})

-set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11")

-if(TARGET_ARCH_ABI STREQUAL "armv8")

- set(CMAKE_CXX_FLAGS "-march=armv8-a ${CMAKE_CXX_FLAGS}")

- set(CMAKE_C_FLAGS "-march=armv8-a ${CMAKE_C_FLAGS}")

-elseif(TARGET_ARCH_ABI STREQUAL "armv7hf")

- set(CMAKE_CXX_FLAGS "-march=armv7-a -mfloat-abi=hard -mfpu=neon-vfpv4 ${CMAKE_CXX_FLAGS}")

- set(CMAKE_C_FLAGS "-march=armv7-a -mfloat-abi=hard -mfpu=neon-vfpv4 ${CMAKE_C_FLAGS}" )

-endif()

-

-include_directories(${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI}/third_party/yaml-cpp/include)

-link_directories(${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI}/third_party/yaml-cpp)

-

-find_package(OpenMP REQUIRED)

-if(OpenMP_FOUND OR OpenMP_CXX_FOUND)

- set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} ${OpenMP_C_FLAGS}")

- set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} ${OpenMP_CXX_FLAGS}")

- message(STATUS "Found OpenMP ${OpenMP_VERSION} ${OpenMP_CXX_VERSION}")

- message(STATUS "OpenMP C flags: ${OpenMP_C_FLAGS}")

- message(STATUS "OpenMP CXX flags: ${OpenMP_CXX_FLAGS}")

- message(STATUS "OpenMP OpenMP_CXX_LIB_NAMES: ${OpenMP_CXX_LIB_NAMES}")

- message(STATUS "OpenMP OpenMP_CXX_LIBRARIES: ${OpenMP_CXX_LIBRARIES}")

-else()

- message(FATAL_ERROR "Could not found OpenMP!")

- return()

-endif()

-find_package(OpenCV REQUIRED)

-if(OpenCV_FOUND OR OpenCV_CXX_FOUND)

- include_directories(${OpenCV_INCLUDE_DIRS})

- message(STATUS "OpenCV library status:")

- message(STATUS " version: ${OpenCV_VERSION}")

- message(STATUS " libraries: ${OpenCV_LIBS}")

- message(STATUS " include path: ${OpenCV_INCLUDE_DIRS}")

-else()

- message(FATAL_ERROR "Could not found OpenCV!")

- return()

-endif()

-

-

-add_executable(object_detection_demo object_detection_demo.cc)

-

-target_link_libraries(object_detection_demo paddle_full_api_shared dl ${OpenCV_LIBS} yaml-cpp)

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md b/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md

deleted file mode 100755

index ddebdeb7ad..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md

+++ /dev/null

@@ -1,343 +0,0 @@

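-# Object detection C++ API demo guide

-

-This demo implements real-time object detection on ARMLinux. It is easy to use and extend, for example by running your own trained model in the demo.

- - If the development board uses an SoC with a VeriSilicon NPU (Rockchip, Amlogic, JQL, NXP), acceleration will be better.

-

-## How to run the object detection demo

-

-### Environment preparation

-

-* Prepare an ARMLinux development board and flash it with Ubuntu for building and running the demo. Note that this demo is built on the board rather than cross-compiled, so the board's operating system needs a graphical interface.

-* NPU acceleration with the VeriSilicon NPU has strict requirements on the NPU driver version. Be sure to follow the [VeriSilicon TIM-VX deployment example](https://paddle-lite.readthedocs.io/zh/develop/demo_guides/verisilicon_timvx.html#id6) beforehand and change the NPU driver to the required version.

-* The boards Paddle Lite has verified so far are Khadas VIM3 (Amlogic A311d chip), Rongpin RV1126, and Rongpin RV1109; users of other platforms can try on their own.

- - Khadas VIM3: VIM3 ships with Android, so flash it to Ubuntu first. A flashing tutorial with a detailed description of the procedure is available here: [VIM3/3L Linux documentation](https://docs.khadas.com/linux/zh-cn/vim3). System image: VIM3 Linux: VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625: [official link](http://dl.khadas.com/firmware/VIM3/Ubuntu/EMMC/VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625.img.xz); [Baidu Cloud mirror](https://paddlelite-demo.bj.bcebos.com/devices/verisilicon/firmware/khadas/vim3/VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625.img.xz)

- - Rongpin RV1126 and RV1109: these ship with a buildroot system. To use the GUI demo, flash Ubuntu first. Flashing tutorial: [RV1126/1109 tutorial](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/rockchip/RV1126-RV1109%E4%BD%BF%E7%94%A8%E6%8C%87%E5%AF%BC%E6%96%87%E6%A1%A3-V3.0.pdf), [flashing tool](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/rockchip/RKDevTool_Release.zip), and images: [1126 image](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/update-pro-rv1126-ubuntu20.04-5-720-1280-v2-20220505.img), [1109 image](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/update-pro-rv1109-ubuntu20.04-5.5-720-1280-v2-20220429.img). For the complete documentation and other images, see the [Baidu Netdisk link](https://pan.baidu.com/s/1Id0LMC0oO2PwR2YcYUAaiQ#list/path=%2F&parentPath=%2Fsharelink2521613171-184070898837664), password: 2345.

-* Prepare a USB camera. When capturing images with OpenCV, pass the camera's video device index as the input argument.

-* Note that Rockchip chips have no HDMI interface and image display relies on MIPI DSI, so prepare a MIPI display (the image we provide uses 720*1280 resolution; the netdisk has more resolutions. Note: choose an image with camera-gc2093x2).

-* Configure the board's network. On a restricted office network, you can connect the board to a PC over Ethernet and have the PC share its network with the board.

-* Install gcc, g++, opencv, and cmake (run all of the following commands on the device)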

-

-```bash

-$ sudo apt-get update

-$ sudo apt-get install gcc g++ make wget unzip libopencv-dev pkg-config

-$ wget https://www.cmake.org/files/v3.10/cmake-3.10.3.tar.gz

-$ tar -zxvf cmake-3.10.3.tar.gz

-$ cd cmake-3.10.3

-$ ./configure

-$ make

-$ sudo make install

-```

-

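-### Deployment steps

-

-1. Upload this repo to the VIM3 board, or download or git clone it directly on the board

-2. The object detection demo is in the `Paddle-Lite-Demo/object_detection/linux/picodet_detection` directory

-3. Enter the `Paddle-Lite-Demo/object_detection/linux` directory and run the `download_models_and_libs.sh` script in a terminal to download the model and the Paddle Lite inference library automatically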

-

-```shell

-cd Paddle-Lite-Demo/object_detection/linux # 1. Enter Paddle-Lite-Demo/object_detection/linux in a terminal

-sh download_models_and_libs.sh # 2. Run the script to download dependencies (requires network access)

-```

-

-After the download completes, the message `Download successful!` appears

-4. Run the demo (make sure the ARMLinux environment is ready)

-

-```shell

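-cd picodet_detection # 1. Enter the demo directory in a terminal

-sh build.sh armv8 # 2. Build the demo executable. armv8 is the default; on a 32-bit environment, use sh build.sh armv7hf instead.

-sh run.sh armv8 # 3. Run the object detection (picodet model) demo. It opens the camera, starts the GUI, and shows the detection results. On a 32-bit environment, use sh run.sh armv7hf instead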

-```

-

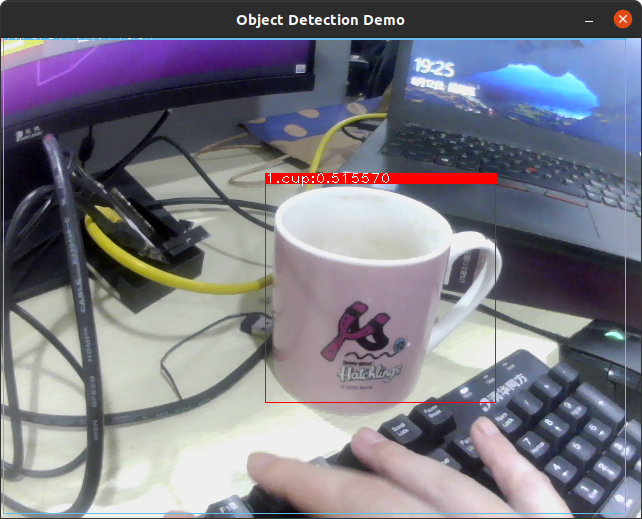

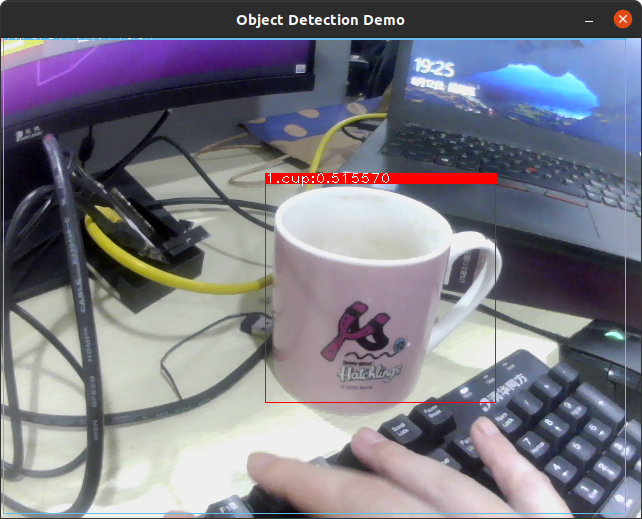

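-### Demo results: (note: the sample picodet model was trained only on the COCO dataset and performs only moderately in real scenarios; retrain it on data from your actual use case)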

-

-


-endif()

-

-include_directories(${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI}/third_party/yaml-cpp/include)

-link_directories(${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI}/third_party/yaml-cpp)

-

-find_package(OpenMP REQUIRED)

-if(OpenMP_FOUND OR OpenMP_CXX_FOUND)

- set(CMAKE_C_FLAGS "${CMAKE_C_FLAGS} ${OpenMP_C_FLAGS}")

- set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} ${OpenMP_CXX_FLAGS}")

- message(STATUS "Found OpenMP ${OpenMP_VERSION} ${OpenMP_CXX_VERSION}")

- message(STATUS "OpenMP C flags: ${OpenMP_C_FLAGS}")

- message(STATUS "OpenMP CXX flags: ${OpenMP_CXX_FLAGS}")

- message(STATUS "OpenMP OpenMP_CXX_LIB_NAMES: ${OpenMP_CXX_LIB_NAMES}")

- message(STATUS "OpenMP OpenMP_CXX_LIBRARIES: ${OpenMP_CXX_LIBRARIES}")

-else()

-  message(FATAL_ERROR "Could not find OpenMP!")

- return()

-endif()

-find_package(OpenCV REQUIRED)

-if(OpenCV_FOUND OR OpenCV_CXX_FOUND)

- include_directories(${OpenCV_INCLUDE_DIRS})

- message(STATUS "OpenCV library status:")

- message(STATUS " version: ${OpenCV_VERSION}")

- message(STATUS " libraries: ${OpenCV_LIBS}")

- message(STATUS " include path: ${OpenCV_INCLUDE_DIRS}")

-else()

-  message(FATAL_ERROR "Could not find OpenCV!")

- return()

-endif()

-

-

-add_executable(object_detection_demo object_detection_demo.cc)

-

-target_link_libraries(object_detection_demo paddle_full_api_shared dl ${OpenCV_LIBS} yaml-cpp)

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md b/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md

deleted file mode 100755

index ddebdeb7ad..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/README.md

+++ /dev/null

@@ -1,343 +0,0 @@

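-# Object Detection C++ API Demo Guide

-

-This Demo implements real-time object detection on ARMLinux. It is easy to use and to extend, for example to run a model you trained yourself.

- - If the development board uses an SoC with a VeriSilicon NPU (Rockchip, Amlogic, JQL, NXP), inference is accelerated further.

-

-## How to run the object detection Demo

-

-### Environment setup

-

-* Prepare an ARMLinux development board and flash it with Ubuntu to compile and run the Demo. Note that this Demo is compiled on the board rather than cross-compiled, so the board's operating system needs a graphical interface.

-* VeriSilicon NPU acceleration places strict requirements on the NPU driver version. Follow the [VeriSilicon TIM-VX deployment example](https://paddle-lite.readthedocs.io/zh/develop/demo_guides/verisilicon_timvx.html#id6) beforehand and switch the NPU driver to the required version.

-* The boards currently verified with Paddle Lite are Khadas VIM3 (Amlogic A311D chip), Rongpin RV1126, and Rongpin RV1109. Users of other platforms can try on their own;

- - Khadas VIM3: VIM3 ships with Android, so flash it to Ubuntu first. The [VIM3/3L Linux documentation](https://docs.khadas.com/linux/zh-cn/vim3) describes the flashing procedure in detail. System image: VIM3 Linux: VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625: [official link](http://dl.khadas.com/firmware/VIM3/Ubuntu/EMMC/VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625.img.xz); [Baidu Cloud mirror](https://paddlelite-demo.bj.bcebos.com/devices/verisilicon/firmware/khadas/vim3/VIM3_Ubuntu-gnome-focal_Linux-4.9_arm64_EMMC_V1.0.7-210625.img.xz)

- - Rongpin RV1126 and RV1109: these boards ship with a buildroot system, so flash them to Ubuntu first if you want to use the GUI demo. Resources: [RV1126/1109 tutorial](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/rockchip/RV1126-RV1109%E4%BD%BF%E7%94%A8%E6%8C%87%E5%AF%BC%E6%96%87%E6%A1%A3-V3.0.pdf), [flashing tool](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/rockchip/RKDevTool_Release.zip), and images: [1126 image](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/update-pro-rv1126-ubuntu20.04-5-720-1280-v2-20220505.img), [1109 image](https://paddlelite-demo.bj.bcebos.com/Paddle-Lite-Demo/os_img/update-pro-rv1109-ubuntu20.04-5.5-720-1280-v2-20220429.img). For the complete documentation and other images, see the [Baidu Netdisk link](https://pan.baidu.com/s/1Id0LMC0oO2PwR2YcYUAaiQ#list/path=%2F&parentPath=%2Fsharelink2521613171-184070898837664), password: 2345.

-* Prepare a USB camera. When capturing images with OpenCV, pass the camera's video device index as the input argument.

-* Note that Rockchip chips have no HDMI interface and image display relies on MIPI DSI, so prepare a MIPI display (the image we provide is 720*1280 resolution; more resolutions are available on the netdisk. Note: choose an image for camera-gc2093x2).

-* Configure the board's network. On a restricted office network (red zone), connect the board to a PC over Ethernet and share the PC's network with the board.

-* Install gcc, g++, opencv, and cmake (all commands below are run on the device)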

-

-```bash

-$ sudo apt-get update

-$ sudo apt-get install gcc g++ make wget unzip libopencv-dev pkg-config

-$ wget https://www.cmake.org/files/v3.10/cmake-3.10.3.tar.gz

-$ tar -zxvf cmake-3.10.3.tar.gz

-$ cd cmake-3.10.3

-$ ./configure

-$ make

-$ sudo make install

-```

-

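-### Deployment steps

-

-1. Upload this repo to the VIM3 board, or download or git clone this repo directly on the board

-2. The object detection Demo is in the `Paddle-Lite-Demo/object_detection/linux/picodet_detection` directory

-3. Enter the `Paddle-Lite-Demo/object_detection/linux` directory and run the `download_models_and_libs.sh` script in a terminal to download the model and the Paddle Lite inference library automatically

-

-```shell

-cd Paddle-Lite-Demo/object_detection/linux # 1. Enter Paddle-Lite-Demo/object_detection/linux in a terminal

-sh download_models_and_libs.sh # 2. Run the script to download the dependencies (requires network access)

-```

-

-When the download finishes, the message `Download successful!` is printed.

-4. Run the example (make sure the ARMLinux environment is ready)

-

-```shell

-cd picodet_detection # 1. Enter the Demo directory in a terminal

-sh build.sh armv8 # 2. Build the Demo executable. armv8 is the default; in a 32-bit environment, use sh build.sh armv7hf instead.

-sh run.sh armv8 # 3. Run the object detection (picodet model) demo. It opens the camera directly, starts the GUI, and shows the detection results. In a 32-bit environment, use sh run.sh armv7hf instead.

-```

-

-### Demo results (note: the sample picodet model was trained only on the coco dataset and performs modestly in real scenarios; retrain it on data from your actual application)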

-

-  -

-## Updating the inference library

-

-* Paddle Lite project: https://github.com/PaddlePaddle/Paddle-Lite

-  * Build the inference library following the [VeriSilicon TIM-VX deployment example](https://paddle-lite.readthedocs.io/zh/develop/demo_guides/verisilicon_timvx.html#tim-vx)

-  * The final build output is `inference_lite_lib.xxx.xxx` under `build.lite.xxx.xxx.xxx`

-  * Replace the C++ library

-    * Header files

-      Replace `Paddle-Lite-Demo/object_detection/linux/Paddle-Lite/include` in the Demo with the generated `build.lite.linux.armv8.gcc/inference_lite_lib.armlinux.armv8.nnadapter/cxx/include` folder

-    * armv8

-      Replace the same-named .so files under `Paddle-Lite-Demo/object_detection/linux/Paddle-Lite/libs/armv8/` in the Demo with `libpaddle_full_api_shared.so`, `libnnadapter.so`, `libtim-vx.so`, and `libverisilicon_timvx.so` generated under `build.lite.linux.armv8.gcc/inference_lite_lib.armlinux.armv8.nnadapter/cxx/libs/`

-    * armv7hf

-      Replace the same-named .so files under `Paddle-Lite-Demo/object_detection/linux/Paddle-Lite/libs/armv7hf/` in the Demo with `libpaddle_full_api_shared.so`, `libnnadapter.so`, `libtim-vx.so`, and `libverisilicon_timvx.so` generated under `build.lite.linux.armv7hf.gcc/inference_lite_lib.armlinux.armv7hf.nnadapter/cxx/libs/`

-

-## Demo contents

-

-This section first outlines the code structure of the object detection Demo, then briefly describes what each part does.

-

-1. `object_detection_demo.cc`: C++ inference code

-

-```shell

-# Location:

-Paddle-Lite-Demo/object_detection/linux/picodet_detection/object_detection_demo.cc

-```

-

-2. `models`: model folder (the picodet Paddle model is downloaded by download_models_and_libs.sh); labels come from coco_label_list.txt under Paddle-Lite-Demo/object_detection/assets/labels

-

-```shell

-# Location:

-Paddle-Lite-Demo/object_detection/linux/picodet_detection/models/picodetv2_relu6_coco_no_fuse

-Paddle-Lite-Demo/object_detection/assets/labels/coco_label_list.txt

-```

-

-3. `Paddle-Lite`: contains the Paddle-Lite header files and shared libraries, with the timvx acceleration library included by default, plus the third-party library yaml-cpp for parsing yml configuration files (downloaded by download_models_and_libs.sh)

-

-```shell

-# Location

-# To replace the shared libraries (.so), copy the new ones into this directory

-Paddle-Lite-Demo/object_detection/linux/Paddle-Lite/libs/armv8

-Paddle-Lite-Demo/object_detection/linux/Paddle-Lite/include

-```

-

-4. `CMakeLists.txt`: build script for the C++ inference code; it generates the executable

-

-```shell

-# Location

-Paddle-Lite-Demo/object_detection/linux/picodet_detection/CMakeLists.txt

-# If the cmake build options change, edit CMakeLists.txt; the armv8 executable is built by default

-```

-

-5. `build.sh`: build script

-

-```shell

-# Location

-Paddle-Lite-Demo/object_detection/linux/picodet_detection/build.sh

-```

-

-6. `run.sh`: run script. Set the architecture to armv8 or armv7hf; the default is armv8

-

-```shell

-# Location

-Paddle-Lite-Demo/object_detection/linux/picodet_detection/run.sh

-```

-- Note that running requires five elements: the test program, the model, the label file, the heterogeneous configuration, and the yaml file.

-

-## Code walkthrough (running inference with the Paddle Lite `C++ API`)

-

-The ARMLinux example is built on the C++ API. Calling the Paddle Lite `C++ API` involves the steps below. For a more detailed `API` description, see [Paddle Lite C++ API](https://paddle-lite.readthedocs.io/zh/latest/api_reference/cxx_api_doc.html).

-

-```c++

-#include

-// Include the C++ API

-#include "include/paddle_api.h"

-#include "include/paddle_use_ops.h"

-#include "include/paddle_use_kernels.h"

-

-// Compile the model online (equivalent to using the opt tool)

-

-// 1. Set up CxxConfig

-paddle::lite_api::CxxConfig cxx_config;

-std::vector<paddle::lite_api::Place> valid_places;

-valid_places.push_back(

-    paddle::lite_api::Place{TARGET(kARM), PRECISION(kInt8)});

-valid_places.push_back(

-    paddle::lite_api::Place{TARGET(kARM), PRECISION(kFloat)});

-// For CPU-only computation, stop here; the code below configures the NPU

-valid_places.push_back(

-    paddle::lite_api::Place{TARGET(kNNAdapter), PRECISION(kInt8)});

-cxx_config.set_valid_places(valid_places);

-std::string device = "verisilicon_timvx";

-cxx_config.set_nnadapter_device_names({device});

-// Set a custom heterogeneous (subgraph partition) strategy, if needed

-cxx_config.set_nnadapter_subgraph_partition_config_buffer(

-    nnadapter_subgraph_partition_config_string);

-

-// 2. Generate the nb model (equivalent to the output of the opt tool)

-std::shared_ptr<paddle::lite_api::PaddlePredictor> predictor = nullptr;

-predictor = paddle::lite_api::CreatePaddlePredictor<paddle::lite_api::CxxConfig>(cxx_config);

-predictor->SaveOptimizedModel(

-    model_path, paddle::lite_api::LiteModelType::kNaiveBuffer);

-

-// 3. Set up MobileConfig

-MobileConfig config;

-config.set_model_from_file(modelPath); // path of the NaiveBuffer-format model

-config.set_power_mode(LITE_POWER_NO_BIND); // CPU power mode

-config.set_threads(4); // number of worker threads

-

-// 4. Create the PaddlePredictor

-predictor = CreatePaddlePredictor<MobileConfig>(config);

-

-// 5. Set the input data; note that picodet with post-processing takes two inputs

-std::unique_ptr<paddle::lite_api::Tensor> input_tensor(std::move(predictor->GetInput(0)));

-input_tensor->Resize({1, 3, 416, 416});

-auto* data = input_tensor->mutable_data<float>();

-// scale_factor tensor

-auto scale_factor_tensor = predictor->GetInput(1);

-scale_factor_tensor->Resize({1, 2});

-auto scale_factor_data = scale_factor_tensor->mutable_data<float>();

-scale_factor_data[0] = 1.0f;

-scale_factor_data[1] = 1.0f;

-

-// 6. Run inference

-predictor->Run();

-

-// 7. Get the output data

-std::unique_ptr<const paddle::lite_api::Tensor> output_tensor(std::move(predictor->GetOutput(0)));

-

-```

-

-## How to update the model and the input/output processing

-

-### Updating the model

-1. Retrain on your own dataset and redo full quantization, following the [picodet retraining and full quantization document](https://github.com/PaddlePaddle/PaddleDetection/blob/develop/configs/picodet/FULL_QUANTIZATION.md) in PaddleDetection

-2. Place the model under the `object_detection_demo/models/` directory;

-3. Keep the model file names identical to those used in the project, that is, `model` and `params`;

-

-```shell

-# shell script `object_detection_demo/run.sh`

-TARGET_ABI=armv8 # for 64bit, such as Amlogic A311D

-#TARGET_ABI=armv7hf # for 32bit, such as Rockchip 1109/1126

-if [ -n "$1" ]; then

-  TARGET_ABI=$1

-fi

-export LD_LIBRARY_PATH=../Paddle-Lite/libs/$TARGET_ABI/

-export GLOG_v=0 # Paddle-Lite log level

-export VSI_NN_LOG_LEVEL=0 # TIM-VX log level

-export VIV_VX_ENABLE_GRAPH_TRANSFORM=-pcq:1 # enable per-channel quantized models on the NPU

-export VIV_VX_SET_PER_CHANNEL_ENTROPY=100 # same as above

-build/object_detection_demo models/picodetv2_relu6_coco_no_fuse ../../assets/labels/coco_label_list.txt models/picodetv2_relu6_coco_no_fuse/subgraph.txt models/picodetv2_relu6_coco_no_fuse/picodet.yml # Run the Demo program; the 4 args are: model, label file, custom heterogeneous configuration, yaml

-```

-

-- To update the `label_list` or `yaml` file, edit the command in `object_detection_demo/run.sh` so that its second and fourth args point to the new label file and yaml configuration file;

-

-```shell

-# script file `object_detection_demo/run.sh`

-export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:${PADDLE_LITE_DIR}/libs/${TARGET_ARCH_ABI}

-build/object_detection_demo {model} {label} {custom heterogeneous configuration file} {yaml}

-```

-

-### Updating the input/output processing

-

-1. Updating input preprocessing

-Preprocessing is driven entirely by the yaml file. If you retrain exactly as described for picodet in PaddleDetection, you only need to replace the yaml file

-

-2. Updating output postprocessing

-This requires updating the `postprocess` method in `object_detection_demo/object_detection_demo.cc`

-

-```c++

-std::vector<RESULT> postprocess(const float *output_data, int64_t output_size,

-                                const std::vector<std::string> &word_labels,

-                                const float score_threshold,

-                                cv::Mat &output_image, double time) {

-  std::vector<RESULT> results;

-  std::vector<cv::Scalar> colors = {

- cv::Scalar(237, 189, 101), cv::Scalar(0, 0, 255),

- cv::Scalar(102, 153, 153), cv::Scalar(255, 0, 0),

- cv::Scalar(9, 255, 0), cv::Scalar(0, 0, 0),

- cv::Scalar(51, 153, 51)};

- for (int64_t i = 0; i < output_size; i += 6) {

- if (output_data[i + 1] < score_threshold) {

- continue;

- }

-    int class_id = static_cast<int>(output_data[i]);

- float score = output_data[i + 1];

- RESULT result;

- std::string class_name = "Unknown";

- if (word_labels.size() > 0 && class_id >= 0 &&

- class_id < word_labels.size()) {

- class_name = word_labels[class_id];

- }

- result.class_name = class_name;

- result.score = score;

-    result.left = output_data[i + 2] / 416; // 416 comes from the input H and W

-    result.top = output_data[i + 3] / 416;

-    result.right = output_data[i + 4] / 416;

-    result.bottom = output_data[i + 5] / 416;

-    int lx = static_cast<int>(result.left * output_image.cols);

-    int ly = static_cast<int>(result.top * output_image.rows);

-    int w = static_cast<int>(result.right * output_image.cols) - lx;

-    int h = static_cast<int>(result.bottom * output_image.rows) - ly;

- cv::Rect bounding_box =

- cv::Rect(lx, ly, w, h) &

- cv::Rect(0, 0, output_image.cols, output_image.rows);

- if (w > 0 && h > 0 && score <= 1) {

- cv::Scalar color = colors[results.size() % colors.size()];

- cv::rectangle(output_image, bounding_box, color);

- cv::rectangle(output_image, cv::Point2d(lx, ly),

- cv::Point2d(lx + w, ly - 10), color, -1);

- cv::putText(output_image, std::to_string(results.size()) + "." +

- class_name + ":" + std::to_string(score),

- cv::Point2d(lx, ly), cv::FONT_HERSHEY_PLAIN, 1,

- cv::Scalar(255, 255, 255));

- results.push_back(result);

- }

- }

- return results;

-}

-```
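The record layout that `postprocess` walks over can be isolated from the OpenCV drawing code. The following is a minimal sketch, assuming the output buffer is a flat sequence of six-float records `[class_id, score, x1, y1, x2, y2]` as in the listing above. The `Detection` struct, the `decode_detections` name, and the `input_size` parameter (416 in the demo) are hypothetical and introduced only for illustration; unlike the demo, empty boxes are rejected in normalized coordinates rather than in pixels.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical plain-data result type mirroring the numeric fields of RESULT.
struct Detection {
  int class_id;
  float score;
  float left, top, right, bottom;  // box corners divided by the input size
};

// Decode consecutive [class_id, score, x1, y1, x2, y2] records.
// Records below the threshold, with score > 1, or with an empty box are skipped.
std::vector<Detection> decode_detections(const float *data, int64_t size,
                                         float score_threshold,
                                         float input_size) {
  std::vector<Detection> out;
  for (int64_t i = 0; i + 6 <= size; i += 6) {
    const float score = data[i + 1];
    if (score < score_threshold || score > 1.0f) {
      continue;
    }
    Detection d;
    d.class_id = static_cast<int>(data[i]);
    d.score = score;
    d.left = data[i + 2] / input_size;
    d.top = data[i + 3] / input_size;
    d.right = data[i + 4] / input_size;
    d.bottom = data[i + 5] / input_size;
    if (d.right <= d.left || d.bottom <= d.top) {
      continue;  // degenerate box
    }
    out.push_back(d);
  }
  return out;
}
```

Passing the input size as a parameter avoids editing a literal when a retrained model uses a different input resolution.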

-
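On the input side, the demo reads `mean`, `std`, and `scale` from the `NormalizeImage` entry of the yaml file and applies them while converting the image from interleaved to planar layout, using NEON intrinsics. The following is a portable scalar sketch of the same per-channel computation, assuming a 3-channel float image that has already been multiplied by `scale`. The function name `normalize_hwc_to_chw` is hypothetical, and the code uses no SIMD.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Convert an interleaved HWC image with 3 channels into planar CHW,
// applying (pixel - mean[c]) / stddev[c] per channel.
std::vector<float> normalize_hwc_to_chw(const std::vector<float> &hwc,
                                        int height, int width,
                                        const std::vector<float> &mean,
                                        const std::vector<float> &stddev) {
  const std::size_t plane = static_cast<std::size_t>(height) * width;
  std::vector<float> chw(plane * 3);
  for (std::size_t p = 0; p < plane; ++p) {
    for (std::size_t c = 0; c < 3; ++c) {
      chw[c * plane + p] = (hwc[p * 3 + c] - mean[c]) / stddev[c];
    }
  }
  return chw;
}
```

A scalar version like this can serve as a reference when checking a vectorized implementation after changing the yaml values.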

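-## After updating the model: customizing the NPU-CPU heterogeneous configuration (if NPU acceleration is needed)

-VeriSilicon NPUs perform best with 8-bit quantization, so quantization is usually considered at deployment time

- - Quantization can cost some accuracy, so a custom heterogeneous configuration can move the layers with accuracy problems to the CPU to get the best accuracy

-

-### Step 1: check the accuracy of the quantized model on the ARM CPU

-If accuracy is unacceptable even on the ARM CPU, the quantization itself has failed; consider revising the training set or the preprocessing.

- - Modify the Demo program to compute on the ARM CPU only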

-```c++

-paddle::lite_api::CxxConfig cxx_config;

-std::vector<paddle::lite_api::Place> valid_places;

-valid_places.push_back(

-    paddle::lite_api::Place{TARGET(kARM), PRECISION(kInt8)});

-valid_places.push_back(

-    paddle::lite_api::Place{TARGET(kARM), PRECISION(kFloat)});

-// To compute on the ARM CPU only, comment out the code below

-/*

-valid_places.push_back(

- paddle::lite_api::Place{TARGET(kNNAdapter), PRECISION(kInt8)});

-valid_places.push_back(

- paddle::lite_api::Place{TARGET(kNNAdapter), PRECISION(kFloat)});

-*/

-```

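-If the ARM CPU results meet the accuracy target, continue

-

-### Step 2: check accuracy with NPU acceleration

- - Revert the change from step 1 (uncomment the code) to use NPU acceleration

- - Run the Demo. If accuracy is good, the remaining steps are unnecessary: the model was deployed and replaced smoothly, enjoy it.

- - If accuracy is not acceptable, continue with the steps below.

-

-### Step 3: get the topology of the whole network

- - Revert the change from step 1 so that the NPU is used

- - Edit run.sh and change export GLOG_v=0 to export GLOG_v=5

- - Run the Demo; once the camera has started, press ctrl+c to stop the Demo

- - Collect the log and search for the keyword "subgraph operators". The block that follows is the topology of the whole model, in this format:

- - Each record has the form "operator type:input tensor names:output tensor names" (colons separate the operator type, the input list, and the output list), and commas separate the tensor names within each list;

- - Example:

- ```

- op_type0:var_name0,var_name1:var_name2 means that the node with operator type op_type0, input tensors var_name0 and var_name1, and output tensor var_name2 is forced to run on the ARM CPU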

- ```

-
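The record format described above (operator type, input tensor names, and output tensor names separated by colons, with commas inside each list) can be split with the standard library alone. The following is a minimal sketch, assuming every record has exactly three colon-separated fields; `SubgraphRecord`, `split`, and `parse_subgraph_record` are hypothetical names, and this is not the parser Paddle Lite itself uses.

```cpp
#include <cassert>
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Hypothetical in-memory form of one topology record.
struct SubgraphRecord {
  std::string op_type;
  std::vector<std::string> inputs;
  std::vector<std::string> outputs;
};

// Split `text` on `sep`, dropping empty pieces.
static std::vector<std::string> split(const std::string &text, char sep) {
  std::vector<std::string> parts;
  std::stringstream stream(text);
  std::string item;
  while (std::getline(stream, item, sep)) {
    if (!item.empty()) {
      parts.push_back(item);
    }
  }
  return parts;
}

// Parse "op_type:in0,in1:out0,out1". Returns false unless the line has
// exactly two colons and a non-empty operator type.
bool parse_subgraph_record(const std::string &line, SubgraphRecord *record) {
  const std::size_t first = line.find(':');
  if (first == std::string::npos) return false;
  const std::size_t second = line.find(':', first + 1);
  if (second == std::string::npos) return false;
  if (line.find(':', second + 1) != std::string::npos) return false;
  record->op_type = line.substr(0, first);
  record->inputs = split(line.substr(first + 1, second - first - 1), ',');
  record->outputs = split(line.substr(second + 1), ',');
  return !record->op_type.empty();
}
```

Parsing the records this way makes it straightforward to filter the log by operator type before copying lines into subgraph.txt.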

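-### Step 4: edit the heterogeneous configuration file

- - First look at the subgraph.txt file under Paddle-Lite-Demo/object_detection/linux/picodet_detection/models/picodetv2_relu6_coco_no_fuse in the sample Demo. (feed and fetch denote the inputs and outputs of the whole model, respectively)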

- ```

- feed:feed:scale_factor

- feed:feed:image

-

- sqrt:tmp_3:sqrt_0.tmp_0

- reshape2:sqrt_0.tmp_0:reshape2_0.tmp_0,reshape2_0.tmp_1

-

- matmul_v2:softmax_0.tmp_0,auto_113_:linear_0.tmp_0

- reshape2:linear_0.tmp_0:reshape2_2.tmp_0,reshape2_2.tmp_1

-

- sqrt:tmp_6:sqrt_1.tmp_0

- reshape2:sqrt_1.tmp_0:reshape2_3.tmp_0,reshape2_3.tmp_1

-

- matmul_v2:softmax_1.tmp_0,auto_113_:linear_1.tmp_0

- reshape2:linear_1.tmp_0:reshape2_5.tmp_0,reshape2_5.tmp_1

-

- sqrt:tmp_9:sqrt_2.tmp_0

- reshape2:sqrt_2.tmp_0:reshape2_6.tmp_0,reshape2_6.tmp_1

-

- matmul_v2:softmax_2.tmp_0,auto_113_:linear_2.tmp_0

- ...

- ```

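- - Every entry in the txt file is a layer that must be moved to the CPU. In the sample Demo, the picodet post-processing part runs on the ARM CPU. There is no need to worry: Paddle-Lite's ARM kernels also perform very well.

- - If the newly trained model does not modify any layers, copy the subgraph.txt from the sample Demo as-is

- - Then run ./run.sh and check whether accuracy is as expected. If it is, congratulations, you can skip the rest of this section, enjoy it.

- - If accuracy is not as expected, take the topology obtained in "Step 3: get the topology of the whole network" above and copy everything from the first line after "feed" up to just before "sqrt" into subgraph.txt. This moves a large part of the backbone operators to the ARM CPU.

- - Then run ./run.sh and check accuracy again. If it now meets the target, some layer in the backbone does cause the NPU accuracy problem (to repeat: entries in subgraph.txt are forced to run on the ARM CPU).

- - Delete entries line by line, in blocks, or by bisection, with some patience, until you find the layers causing the NPU accuracy problem, and keep them in subgraph.txt. In our experience, an NPU accuracy problem typically requires 1 to 5 conv layers to be moved to the CPU.

- - Remove the remaining layers, which have no accuracy problem, from subgraph.txt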
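The bisection mentioned in the last steps can be sketched as follows, under a simplifying assumption that exactly one candidate layer causes the accuracy problem. `find_offending_layer` is a hypothetical helper, and `accuracy_ok` is a hypothetical callback standing for "write these records to subgraph.txt, run the Demo, and judge the accuracy"; neither exists in the Demo. When several layers are at fault, as the text suggests can happen, the search has to be repeated or widened manually.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <string>
#include <vector>

// Binary search for the single record that must stay on the ARM CPU.
// accuracy_ok(cpu_layers) must return true exactly when the culprit is
// among cpu_layers. Returns an empty string for an empty candidate list.
std::string find_offending_layer(
    const std::vector<std::string> &candidates,
    const std::function<bool(const std::vector<std::string> &)> &accuracy_ok) {
  if (candidates.empty()) {
    return std::string();
  }
  std::size_t lo = 0;
  std::size_t hi = candidates.size();  // the culprit lies in [lo, hi)
  while (hi - lo > 1) {
    const std::size_t mid = lo + (hi - lo) / 2;
    // Keep only the first half of the current range on the CPU.
    std::vector<std::string> first_half(candidates.begin() + lo,
                                        candidates.begin() + mid);
    if (accuracy_ok(first_half)) {
      hi = mid;  // accuracy recovered, so the culprit is in the first half
    } else {
      lo = mid;  // culprit still ran on the NPU, so it is in the second half
    }
  }
  return candidates[lo];
}
```

Each call to `accuracy_ok` corresponds to one run of the Demo, so the number of runs grows with the logarithm of the number of candidate records rather than linearly.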

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/build.sh b/examples/vision/detection/paddledetection/rk1126/picodet_detection/build.sh

deleted file mode 100755

index 1219c481b9..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/build.sh

+++ /dev/null

@@ -1,17 +0,0 @@

-#!/bin/bash

-USE_FULL_API=TRUE

-# configure

-TARGET_ARCH_ABI=armv8 # for RK3399, set to default arch abi

-#TARGET_ARCH_ABI=armv7hf # for Raspberry Pi 3B

-PADDLE_LITE_DIR=../Paddle-Lite

-THIRD_PARTY_DIR=./third_party

-if [ "x$1" != "x" ]; then

- TARGET_ARCH_ABI=$1

-fi

-

-# build

-rm -rf build

-mkdir build

-cd build

-cmake -DPADDLE_LITE_DIR=${PADDLE_LITE_DIR} -DTARGET_ARCH_ABI=${TARGET_ARCH_ABI} -DTHIRD_PARTY_DIR=${THIRD_PARTY_DIR} ..

-make

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/object_detection_demo.cc b/examples/vision/detection/paddledetection/rk1126/picodet_detection/object_detection_demo.cc

deleted file mode 100644

index 8c132dea27..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/object_detection_demo.cc

+++ /dev/null

@@ -1,411 +0,0 @@

-// Copyright (c) 2019 PaddlePaddle Authors. All Rights Reserved.

-//

-// Licensed under the Apache License, Version 2.0 (the "License");

-// you may not use this file except in compliance with the License.

-// You may obtain a copy of the License at

-//

-// http://www.apache.org/licenses/LICENSE-2.0

-//

-// Unless required by applicable law or agreed to in writing, software

-// distributed under the License is distributed on an "AS IS" BASIS,

-// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

-// See the License for the specific language governing permissions and

-// limitations under the License.

-

-#include "paddle_api.h"

-#include "yaml-cpp/yaml.h"

-#include

-#include

-#include

-#include

-#include

-#include

-#include

-#include

-#include

-#include

-

-int WARMUP_COUNT = 0;

-int REPEAT_COUNT = 1;

-const int CPU_THREAD_NUM = 2;

-const paddle::lite_api::PowerMode CPU_POWER_MODE =

- paddle::lite_api::PowerMode::LITE_POWER_HIGH;

-const std::vector<int64_t> INPUT_SHAPE = {1, 3, 416, 416};

-std::vector<float> INPUT_MEAN = {0.f, 0.f, 0.f};

-std::vector<float> INPUT_STD = {1.f, 1.f, 1.f};

-float INPUT_SCALE = 1 / 255.f;

-const float SCORE_THRESHOLD = 0.35f;

-

-struct RESULT {

- std::string class_name;

- float score;

- float left;

- float top;

- float right;

- float bottom;

-};

-

-inline int64_t get_current_us() {

- struct timeval time;

- gettimeofday(&time, NULL);

- return 1000000LL * (int64_t)time.tv_sec + (int64_t)time.tv_usec;

-}

-

-bool read_file(const std::string &filename, std::vector<char> *contents,

- bool binary = true) {

- FILE *fp = fopen(filename.c_str(), binary ? "rb" : "r");

- if (!fp)

- return false;

- fseek(fp, 0, SEEK_END);

- size_t size = ftell(fp);

- fseek(fp, 0, SEEK_SET);

- contents->clear();

- contents->resize(size);

- size_t offset = 0;

-  char *ptr = reinterpret_cast<char *>(&(contents->at(0)));

- while (offset < size) {

- size_t already_read = fread(ptr, 1, size - offset, fp);

- offset += already_read;

- ptr += already_read;

- }

- fclose(fp);

- return true;

-}

-

-std::vector<std::string> load_labels(const std::string &path) {

-  std::ifstream file;

-  std::vector<std::string> labels;

-  file.open(path);

-  // Loop on getline itself so no spurious empty label is appended at EOF

-  std::string line;

-  while (std::getline(file, line)) {

-    labels.push_back(line);

-  }

- file.clear();

- file.close();

- return labels;

-}

-

-bool load_yaml_config(std::string yaml_path) {

- YAML::Node cfg;

- try {

- std::cout << "before loadFile" << std::endl;

- cfg = YAML::LoadFile(yaml_path);

- } catch (YAML::BadFile &e) {

- std::cout << "Failed to load yaml file " << yaml_path

- << ", maybe you should check this file." << std::endl;

- return false;

- }

- auto preprocess_cfg = cfg["TestReader"]["sample_transforms"];

- for (const auto &op : preprocess_cfg) {

- if (!op.IsMap()) {

- std::cout << "Require the transform information in yaml be Map type."

- << std::endl;

- std::abort();

- }

-    auto op_name = op.begin()->first.as<std::string>();

-    if (op_name == "NormalizeImage") {

-      INPUT_MEAN = op.begin()->second["mean"].as<std::vector<float>>();

-      INPUT_STD = op.begin()->second["std"].as<std::vector<float>>();

-      INPUT_SCALE = op.begin()->second["scale"].as<float>();

- }

- }

- return true;

-}

-

-void preprocess(cv::Mat &input_image, std::vector<float> &input_mean,

-                std::vector<float> &input_std, float input_scale,

- int input_width, int input_height, float *input_data) {

- cv::Mat resize_image;

- cv::resize(input_image, resize_image, cv::Size(input_width, input_height), 0,

- 0);

- if (resize_image.channels() == 4) {

- cv::cvtColor(resize_image, resize_image, cv::COLOR_BGRA2RGB);

- }

- cv::Mat norm_image;

- resize_image.convertTo(norm_image, CV_32FC3, input_scale);

- // NHWC->NCHW

- int image_size = input_height * input_width;

-  const float *image_data = reinterpret_cast<const float *>(norm_image.data);

- float32x4_t vmean0 = vdupq_n_f32(input_mean[0]);

- float32x4_t vmean1 = vdupq_n_f32(input_mean[1]);

- float32x4_t vmean2 = vdupq_n_f32(input_mean[2]);

- float32x4_t vscale0 = vdupq_n_f32(1.0f / input_std[0]);

- float32x4_t vscale1 = vdupq_n_f32(1.0f / input_std[1]);

- float32x4_t vscale2 = vdupq_n_f32(1.0f / input_std[2]);

- float *input_data_c0 = input_data;

- float *input_data_c1 = input_data + image_size;

- float *input_data_c2 = input_data + image_size * 2;

- int i = 0;

- for (; i < image_size - 3; i += 4) {

- float32x4x3_t vin3 = vld3q_f32(image_data);

- float32x4_t vsub0 = vsubq_f32(vin3.val[0], vmean0);

- float32x4_t vsub1 = vsubq_f32(vin3.val[1], vmean1);

- float32x4_t vsub2 = vsubq_f32(vin3.val[2], vmean2);

- float32x4_t vs0 = vmulq_f32(vsub0, vscale0);

- float32x4_t vs1 = vmulq_f32(vsub1, vscale1);

- float32x4_t vs2 = vmulq_f32(vsub2, vscale2);

- vst1q_f32(input_data_c0, vs0);

- vst1q_f32(input_data_c1, vs1);

- vst1q_f32(input_data_c2, vs2);

- image_data += 12;

- input_data_c0 += 4;

- input_data_c1 += 4;

- input_data_c2 += 4;

- }

- for (; i < image_size; i++) {

- *(input_data_c0++) = (*(image_data++) - input_mean[0]) / input_std[0];

- *(input_data_c1++) = (*(image_data++) - input_mean[1]) / input_std[1];

- *(input_data_c2++) = (*(image_data++) - input_mean[2]) / input_std[2];

- }

-}

-

-std::vector<RESULT> postprocess(const float *output_data, int64_t output_size,

-                                const std::vector<std::string> &word_labels,

-                                const float score_threshold,

-                                cv::Mat &output_image, double time) {

-  std::vector<RESULT> results;

-  std::vector<cv::Scalar> colors = {

- cv::Scalar(237, 189, 101), cv::Scalar(0, 0, 255),

- cv::Scalar(102, 153, 153), cv::Scalar(255, 0, 0),

- cv::Scalar(9, 255, 0), cv::Scalar(0, 0, 0),

- cv::Scalar(51, 153, 51)};

- for (int64_t i = 0; i < output_size; i += 6) {

- if (output_data[i + 1] < score_threshold) {

- continue;

- }

-    int class_id = static_cast<int>(output_data[i]);

- float score = output_data[i + 1];

- RESULT result;

- std::string class_name = "Unknown";

- if (word_labels.size() > 0 && class_id >= 0 &&

- class_id < word_labels.size()) {

- class_name = word_labels[class_id];

- }

- result.class_name = class_name;

- result.score = score;

- result.left = output_data[i + 2] / 416;

- result.top = output_data[i + 3] / 416;

- result.right = output_data[i + 4] / 416;

- result.bottom = output_data[i + 5] / 416;

-    int lx = static_cast<int>(result.left * output_image.cols);

-    int ly = static_cast<int>(result.top * output_image.rows);

-    int w = static_cast<int>(result.right * output_image.cols) - lx;

-    int h = static_cast<int>(result.bottom * output_image.rows) - ly;

- cv::Rect bounding_box =

- cv::Rect(lx, ly, w, h) &

- cv::Rect(0, 0, output_image.cols, output_image.rows);

- if (w > 0 && h > 0 && score <= 1) {

- cv::Scalar color = colors[results.size() % colors.size()];

- cv::rectangle(output_image, bounding_box, color);

- cv::rectangle(output_image, cv::Point2d(lx, ly),

- cv::Point2d(lx + w, ly - 10), color, -1);

- cv::putText(output_image, std::to_string(results.size()) + "." +

- class_name + ":" + std::to_string(score),

- cv::Point2d(lx, ly), cv::FONT_HERSHEY_PLAIN, 1,

- cv::Scalar(255, 255, 255));

- results.push_back(result);

- }

- }

- return results;

-}

-

-cv::Mat process(cv::Mat &input_image, std::vector<std::string> &word_labels,

-                std::shared_ptr<paddle::lite_api::PaddlePredictor> &predictor) {

-  // Preprocess image and fill the data of input tensor

-  std::unique_ptr<paddle::lite_api::Tensor> input_tensor(

- std::move(predictor->GetInput(0)));

- input_tensor->Resize(INPUT_SHAPE);

- int input_width = INPUT_SHAPE[3];

- int input_height = INPUT_SHAPE[2];

-  auto *input_data = input_tensor->mutable_data<float>();

-#if 1

- // scale_factor tensor

- auto scale_factor_tensor = predictor->GetInput(1);

- scale_factor_tensor->Resize({1, 2});

-  auto scale_factor_data = scale_factor_tensor->mutable_data<float>();

- scale_factor_data[0] = 1.0f;

- scale_factor_data[1] = 1.0f;

-#endif

-

- double preprocess_start_time = get_current_us();

- preprocess(input_image, INPUT_MEAN, INPUT_STD, INPUT_SCALE, input_width,

- input_height, input_data);

- double preprocess_end_time = get_current_us();

- double preprocess_time =

- (preprocess_end_time - preprocess_start_time) / 1000.0f;

-

- double prediction_time;

- // Run predictor

- // warm up to skip the first inference and get more stable time, remove it in

- // actual products

- for (int i = 0; i < WARMUP_COUNT; i++) {

- predictor->Run();

- }

- // repeat to obtain the average time, set REPEAT_COUNT=1 in actual products

- double max_time_cost = 0.0f;

-  double min_time_cost = std::numeric_limits<float>::max();

- double total_time_cost = 0.0f;

- for (int i = 0; i < REPEAT_COUNT; i++) {

- auto start = get_current_us();

- predictor->Run();

- auto end = get_current_us();

- double cur_time_cost = (end - start) / 1000.0f;

- if (cur_time_cost > max_time_cost) {

- max_time_cost = cur_time_cost;

- }

- if (cur_time_cost < min_time_cost) {

- min_time_cost = cur_time_cost;

- }

- total_time_cost += cur_time_cost;

- prediction_time = total_time_cost / REPEAT_COUNT;

- printf("iter %d cost: %f ms\n", i, cur_time_cost);

- }

- printf("warmup: %d repeat: %d, average: %f ms, max: %f ms, min: %f ms\n",

- WARMUP_COUNT, REPEAT_COUNT, prediction_time, max_time_cost,

- min_time_cost);

-

- // Get the data of output tensor and postprocess to output detected objects

-  std::unique_ptr<const paddle::lite_api::Tensor> output_tensor(

-      std::move(predictor->GetOutput(0)));

-  const float *output_data = output_tensor->mutable_data<float>();

- int64_t output_size = 1;

- for (auto dim : output_tensor->shape()) {

- output_size *= dim;

- }

- cv::Mat output_image = input_image.clone();

- double postprocess_start_time = get_current_us();

-  std::vector<RESULT> results =

- postprocess(output_data, output_size, word_labels, SCORE_THRESHOLD,

- output_image, prediction_time);

- double postprocess_end_time = get_current_us();

- double postprocess_time =

- (postprocess_end_time - postprocess_start_time) / 1000.0f;

-

-  printf("results: %zu\n", results.size());

- for (int i = 0; i < results.size(); i++) {

- printf("[%d] %s - %f %f,%f,%f,%f\n", i, results[i].class_name.c_str(),

- results[i].score, results[i].left, results[i].top, results[i].right,

- results[i].bottom);

- }

- printf("Preprocess time: %f ms\n", preprocess_time);

- printf("Prediction time: %f ms\n", prediction_time);

- printf("Postprocess time: %f ms\n\n", postprocess_time);

-

- return output_image;

-}

-

-int main(int argc, char **argv) {

- if (argc < 5 || argc == 6) {

- printf("Usage: \n"

- "./object_detection_demo model_dir label_path [input_image_path] "

- "[output_image_path]"

- "use images from camera if input_image_path and input_image_path "

- "isn't provided.");

- return -1;

- }

-

- std::string model_path = argv[1];

- std::string label_path = argv[2];

- std::vector<std::string> word_labels = load_labels(label_path);

- std::string nnadapter_subgraph_partition_config_path = argv[3];

-

- std::string yaml_path = argv[4];

- if (yaml_path != "null") {

- load_yaml_config(yaml_path);

- }

-

- // Run inference by using full api with CxxConfig

- paddle::lite_api::CxxConfig cxx_config;

- if (1) { // combined model

- cxx_config.set_model_file(model_path + "/model");

- cxx_config.set_param_file(model_path + "/params");

- } else {

- cxx_config.set_model_dir(model_path);

- }

- cxx_config.set_threads(CPU_THREAD_NUM);

- cxx_config.set_power_mode(CPU_POWER_MODE);

-

- std::shared_ptr<paddle::lite_api::PaddlePredictor> predictor = nullptr;

- std::vector<paddle::lite_api::Place> valid_places;

- valid_places.push_back(

- paddle::lite_api::Place{TARGET(kARM), PRECISION(kInt8)});

- valid_places.push_back(

- paddle::lite_api::Place{TARGET(kARM), PRECISION(kFloat)});

- valid_places.push_back(

- paddle::lite_api::Place{TARGET(kNNAdapter), PRECISION(kInt8)});

- valid_places.push_back(

- paddle::lite_api::Place{TARGET(kNNAdapter), PRECISION(kFloat)});

- cxx_config.set_valid_places(valid_places);

- std::string device = "verisilicon_timvx";

- cxx_config.set_nnadapter_device_names({device});

- // cxx_config.set_nnadapter_context_properties(nnadapter_context_properties);

-

- // cxx_config.set_nnadapter_model_cache_dir(nnadapter_model_cache_dir);

- // Set the subgraph custom partition configuration file

-

- if (!nnadapter_subgraph_partition_config_path.empty()) {

- std::vector<char> nnadapter_subgraph_partition_config_buffer;

- if (read_file(nnadapter_subgraph_partition_config_path,

- &nnadapter_subgraph_partition_config_buffer, false)) {

- if (!nnadapter_subgraph_partition_config_buffer.empty()) {

- std::string nnadapter_subgraph_partition_config_string(

- nnadapter_subgraph_partition_config_buffer.data(),

- nnadapter_subgraph_partition_config_buffer.size());

- cxx_config.set_nnadapter_subgraph_partition_config_buffer(

- nnadapter_subgraph_partition_config_string);

- }

- } else {

- printf("Failed to load the subgraph custom partition configuration file "

- "%s\n",

- nnadapter_subgraph_partition_config_path.c_str());

- }

- }

-

- try {

- predictor = paddle::lite_api::CreatePaddlePredictor(cxx_config);

- predictor->SaveOptimizedModel(

- model_path, paddle::lite_api::LiteModelType::kNaiveBuffer);

- } catch (std::exception e) {

- printf("An internal error occurred in PaddleLite(cxx config).\n");

- }

-

- paddle::lite_api::MobileConfig config;

- config.set_model_from_file(model_path + ".nb");

- config.set_threads(CPU_THREAD_NUM);

- config.set_power_mode(CPU_POWER_MODE);

- config.set_nnadapter_device_names({device});

- predictor =

- paddle::lite_api::CreatePaddlePredictor<paddle::lite_api::MobileConfig>(

- config);

- if (argc > 5) {

- WARMUP_COUNT = 1;

- REPEAT_COUNT = 5;

- std::string input_image_path = argv[5];

- std::string output_image_path = argv[6];

- cv::Mat input_image = cv::imread(input_image_path);

- cv::Mat output_image = process(input_image, word_labels, predictor);

- cv::imwrite(output_image_path, output_image);

- cv::imshow("Object Detection Demo", output_image);

- cv::waitKey(0);

- } else {

- cv::VideoCapture cap(1);

- cap.set(cv::CAP_PROP_FRAME_WIDTH, 640);

- cap.set(cv::CAP_PROP_FRAME_HEIGHT, 480);

- if (!cap.isOpened()) {

- return -1;

- }

- while (1) {

- cv::Mat input_image;

- cap >> input_image;

- cv::Mat output_image = process(input_image, word_labels, predictor);

- cv::imshow("Object Detection Demo", output_image);

- if (cv::waitKey(1) == char('q')) {

- break;

- }

- }

- cap.release();

- cv::destroyAllWindows();

- }

- return 0;

-}

diff --git a/examples/vision/detection/paddledetection/rk1126/picodet_detection/run.sh b/examples/vision/detection/paddledetection/rk1126/picodet_detection/run.sh

deleted file mode 100755

index a6025104a1..0000000000

--- a/examples/vision/detection/paddledetection/rk1126/picodet_detection/run.sh

+++ /dev/null

@@ -1,15 +0,0 @@

-#!/bin/bash

-#run

-

-TARGET_ABI=armv8 # for 64bit

-#TARGET_ABI=armv7hf # for 32bit

-if [ -n "$1" ]; then

- TARGET_ABI=$1

-fi

-export LD_LIBRARY_PATH=../Paddle-Lite/libs/$TARGET_ABI/

-export GLOG_v=0

-export VSI_NN_LOG_LEVEL=0

-export VIV_VX_ENABLE_GRAPH_TRANSFORM=-pcq:1

-export VIV_VX_SET_PER_CHANNEL_ENTROPY=100

-export TIMVX_BATCHNORM_FUSION_MAX_ALLOWED_QUANT_SCALE_DEVIATION=30000

-build/object_detection_demo models/picodetv2_relu6_coco_no_fuse ../../assets/labels/coco_label_list.txt models/picodetv2_relu6_coco_no_fuse/subgraph.txt models/picodetv2_relu6_coco_no_fuse/picodet.yml

diff --git a/examples/vision/detection/paddledetection/rv1126/README.md b/examples/vision/detection/paddledetection/rv1126/README.md

new file mode 100755

index 0000000000..ff1d58bab6

--- /dev/null

+++ b/examples/vision/detection/paddledetection/rv1126/README.md

@@ -0,0 +1,11 @@

+# Deploying the PP-YOLOE Quantized Model on RV1126

+FastDeploy now supports deploying the PP-YOLOE quantized model to RV1126 via PaddleLite.

+

+For model quantization and for downloading quantized models, see [Model Quantization](../quantize/README.md).

+

+

+## Detailed Deployment Documentation

+

+Only C++ deployment is supported on RV1126.

+

+- [C++ deployment](cpp)

diff --git a/examples/vision/detection/paddledetection/rv1126/cpp/CMakeLists.txt b/examples/vision/detection/paddledetection/rv1126/cpp/CMakeLists.txt

new file mode 100755

index 0000000000..7a145177ee

--- /dev/null

+++ b/examples/vision/detection/paddledetection/rv1126/cpp/CMakeLists.txt

@@ -0,0 +1,38 @@

+PROJECT(infer_demo C CXX)

+CMAKE_MINIMUM_REQUIRED (VERSION 3.10)

+

+# Path to the downloaded and extracted FastDeploy SDK

+option(FASTDEPLOY_INSTALL_DIR "Path of downloaded fastdeploy sdk.")

+

+include(${FASTDEPLOY_INSTALL_DIR}/FastDeploy.cmake)

+

+# Add the FastDeploy header include directories

+include_directories(${FASTDEPLOY_INCS})

+include_directories(${FastDeploy_INCLUDE_DIRS})

+

+add_executable(infer_demo ${PROJECT_SOURCE_DIR}/infer_ppyoloe.cc)

+# Link against the FastDeploy libraries

+target_link_libraries(infer_demo ${FASTDEPLOY_LIBS})

+

+set(CMAKE_INSTALL_PREFIX ${CMAKE_SOURCE_DIR}/build/install)

+

+install(TARGETS infer_demo DESTINATION ./)

+

+install(DIRECTORY models DESTINATION ./)

+install(DIRECTORY images DESTINATION ./)

+# install(DIRECTORY run_with_adb.sh DESTINATION ./)

+

+file(GLOB FASTDEPLOY_LIBS ${FASTDEPLOY_INSTALL_DIR}/lib/*)

+install(PROGRAMS ${FASTDEPLOY_LIBS} DESTINATION lib)

+

+file(GLOB OPENCV_LIBS ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/opencv/lib/lib*)

+install(PROGRAMS ${OPENCV_LIBS} DESTINATION lib)

+

+file(GLOB PADDLELITE_LIBS ${FASTDEPLOY_INSTALL_DIR}/third_libs/install/paddlelite/lib/lib*)

+install(PROGRAMS ${PADDLELITE_LIBS} DESTINATION lib)

+