Fix for issue #1136 - Error 500 deleting DB without quorum #1139

Complete deletion tests with not found

src/fabric/src/fabric_db_delete.erl

```erlang
{N, M, _} when N >= (W div 2 + 1), M > 0 ->
    {stop, accepted};
```

@kocolosk as the original decision maker for these semantics, do you have a problem with this? We've accepted the same idea on the create db side but before we accept this one, and make a formal release including both changes, I wanted to hear your thoughts. The idea here is that you should not get a 500 error when creating or deleting a db in a degraded cluster.

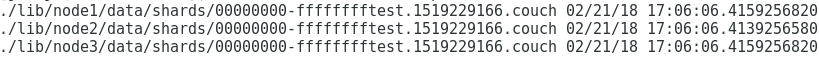

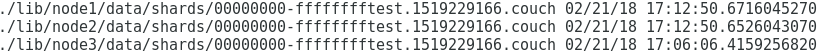

@rnewson I've done some more testing on deletion under different cluster conditions. It seems that some .couch files are left orphaned if a database is deleted while one of the cluster nodes is stopped.

The database is not found, but the .couch file is propagated from the restarted node to the rest of the cluster. This PR does not change that behavior. Do you think this is an issue?

Thanks for this PR @jjrodrig, very well done. The orphaned files are a known condition. They are OK from the perspective of database correctness, as a new database created with the same name will have a different creation timestamp, so the old data would not be surfaced. It could be a useful future enhancement to remove orphaned shard files in a background process. The previous behavior of distinguishing between a majority and a minority of committed updates to the replicas of the shard table is not something I'm interested in preserving. I think we can do better. I do think the use of 202 Accepted as an indicator to the client that "hey, things are a little messy right now, you might see surprises while we work things out" is a good thing. My concern with the current PR is that we may return 200 OK to a user even though some nodes in the cluster still host the old (soon-to-be-deleted) version of the database. For example, consider the following sequence of events:

In this sequence the Perhaps a preferable approach is to use

The downside of this approach is that we wait around to hear back from every cluster member, and the What do you think?

Thanks @kocolosk for your comments. I see that the orphaned files question is a separate issue. We run into it as part of a cleanup process that we have implemented in our system: databases are periodically transferred to a temporary database and then moved back to the work database. It is like a purging procedure that allows us to keep the databases small. During this process we delete and create databases, and orphaned files are produced. +1 for the orphaned files cleanup enhancement. With respect to the main issue of this PR, I think that the main problem is to respond with a The idea of responding

@jjrodrig Are there updates coming to this PR from you?

@wohali I'll modify the condition to respond with 200 OK only if we get a response from all the nodes; if we get a positive response from only some of them, 202 Accepted is returned. The problem I see is that this behavior is not consistent with database creation, where quorum is considered, but it is better than the current situation, which returns a 500 error.
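The rule proposed above (200 only when every node confirms the deletion, 202 when only some do) can be sketched as a small Erlang function. This is a hypothetical helper, `db_delete_sketch:status/3`, not the actual code in `fabric_db_delete.erl`; it assumes the caller has already counted confirmed deletions and not-found replies. Mapping "no confirmations but at least one not-found" to 404 and everything else to 500 is an assumption for illustration.

```erlang
-module(db_delete_sketch).
-export([status/3]).

%% Hypothetical helper, NOT the real fabric_db_delete logic.
%% Ok       - number of nodes that confirmed the deletion
%% NotFound - number of nodes that reported the database as missing
%% Total    - number of nodes in the cluster

%% Every node confirmed the deletion: 200 OK.
status(Ok, _NotFound, Total) when Ok =:= Total, Total > 0 ->
    {200, ok};
%% Only some nodes confirmed: 202 Accepted.
status(Ok, _NotFound, _Total) when Ok > 0 ->
    {202, accepted};
%% Assumption: no confirmations but at least one not-found reply maps to 404.
status(0, NotFound, _Total) when NotFound > 0 ->
    {404, not_found};
%% Assumption: anything else (e.g. no usable replies) is still an error.
status(_Ok, _NotFound, _Total) ->
    {500, error}.
```

With three nodes, for example, `status(2, 0, 3)` yields `{202, accepted}` because one node did not confirm, while `status(3, 0, 3)` yields `{200, ok}`.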

@jjrodrig can you resolve the conflict? Thanks!

@janl I've updated the db deletion tests to cover the new behaviour. Thanks!

apache/couchdb#1139 changes the meaning of some response codes for the database deletion request. This PR documents the response codes according to that change.

Overview

The current behaviour for database deletion in a cluster is:

After this PR, the behaviour for database deletion will be:

Testing recommendations

This PR can be tested in this way

A new test for db deletion is included.

Side change in test/javascript/run

I've included a change in `test/javascript/run`. The script no longer exits with an error if the `suites` or `ignore_js_suites` parameter is given a value that does not match an existing test.

After this PR, it is possible to run `make check ignore_js_suites=reduce_builtin` or `make check suites=all_docs` without getting an error in the new cluster testing targets.

Related Issues or Pull Requests

Fixes #1136

Checklist