Broken tests on multiple challenges in the Information Security with HelmetJS #39190

Comments

I can confirm this is an issue. It appears the likely cause is HelmetJS renaming its middleware. These are the new names: I cannot confirm anything wrong with the CSP tests.

Some of the function names changed module aliases tests Shouldn't we get this fixed sooner rather than later? Unless someone jumps on this fairly quickly, I'd suggest removing the

Side note: The way the tests are done (_router.stack) seems like it would be pretty prone to errors and breakage.

@lasjorg You are right, it would be best to get it fixed sooner. I just expected someone to jump on it, as it is quick and easy. The tests are done in a way that keeps us on our toes regarding changes.

Is it okay if I raise a PR to fix this, or is someone else on it? I haven't really dug through the fCC code base, so I'm not sure where the tests live, but now that I know the root cause it seems simple enough.

@obsessedyouth, no one has mentioned working on it, so go ahead. Here is where the tests live: https://github.com/freeCodeCamp/freeCodeCamp/tree/master/curriculum/challenges/english/09-information-security/information-security-with-helmetjs

Just to confirm, an old version of HelmetJS we specified in the project's

@RandellDawson The starter boilerplate does not have helmetjs in the package.json; the first challenge is to add it. I'm not so sure locking the boilerplate to a specific version is the right choice, although it is certainly an option.

Also, just as an aside: if we want to keep backward compatibility with projects made before this change, we have to check for both the old and new function names. Not sure what the consensus on this is?

If we must, I would suggest checking for both old and new, as lasjorg mentioned. Originally, I assumed backward compatibility was not a problem, because this is related to the lesson content and not to projects. So all a user currently on them has to do is update the version of HelmetJS in their package.json.

I'm just calling the finished version of the code a camper would end up with from this section of challenges a "project". I know it isn't really a project. Sure, it is easy to fix, but you have to know what the problem is and that you need to update the dependencies. I guess there isn't any expectation that the code used to pass the challenges will remain accessible or valid. Once you have passed all the challenges, you can delete the code and still have a valid, passing section of challenges. So, from a validation point of view, it doesn't matter much if the code stops passing at a later date. But from a camper's point of view, it may be confusing why code that was passing now doesn't.

In order to pass the test "Mitigate the Risk of Clickjacking with helmet.frameguard()", I had to go back to version 2.3.0.

Hi @lasjorg @SKY020 @RandellDawson, can you please reach a consensus about what will be done regarding this issue? It seems it is starting to affect more campers. I think my PR addresses this, and backward compatibility is extremely unlikely to be a concern, since I doubt anyone would attempt a lesson with an older version of a project using helmet.js. However, if you don't find it a satisfactory solution, could you please suggest a better fix?

@obsessedyouth Fixing the current issue is definitely a lot more important than any backward-compatibility considerations. I think it might be fine to break old "projects". I doubt there are many people who will go back and re-run the tests on their old code. However, some campers may have started the challenges and then moved on to something else or taken a break, and when they return, their starter code will be broken. I just wanted to point out that updating the tests would break any older starter code. But, like I said, backward-compatibility issues are not as important as fixing the current one.

@moT01 @ojeytonwilliams Any thoughts on this issue?

How about rewriting the lesson that installs the package so that users install the specific version we were using when the lessons were written? This would keep everything compatible and save us from running into this issue again if something else in the package changes. I don't like teaching older versions of things, but it may be a good way to make sure things keep working. I think that would work, anyway.

@moT01 That is a good point. Many companies purposely do not upgrade a package to avoid breaking existing code.

Well, this was an unexpected outcome, but I'm happy this will get addressed either way.

Yep, I agree with Tom. The packages should definitely get pinned to specific versions and get updated when a contributor intends to move to new versions. Ideally they should be updated to the latest stable builds, but it's important that the process is controlled.


I honestly think the real problem here is how we are testing for the middleware.

Ideally, we should be able to check for the middleware through publicly exposed APIs and not depend on internal function names. I'm just not sure how you would normally test for properly mounted middleware. I'm guessing most people just test the functionality, checking that the middleware behaves as expected, rather than checking the mounting itself. I'd still expect there to be some way to test for properly mounted middleware without having to do what we are doing.
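One way to check the functionality instead of the function names is to inspect the response headers the middleware is supposed to produce. A minimal JavaScript sketch, assuming lower-cased header names as Node's HTTP client reports them; the helper `checkSecurityHeaders` and the sample header objects are hypothetical. It relies only on well-known effects: frameguard sets X-Frame-Options, noSniff sets X-Content-Type-Options to nosniff, and hidePoweredBy removes the X-Powered-By header that Express adds by default.

```javascript
// Expected effect of each middleware, keyed by lower-cased header name.
const EXPECTED_HEADERS = {
  'x-frame-options': (v) => v !== undefined,          // frameguard
  'x-content-type-options': (v) => v === 'nosniff',   // noSniff
  'x-powered-by': (v) => v === undefined,             // hidePoweredBy
};

// Hypothetical helper: returns the headers whose value does not match expectations.
function checkSecurityHeaders(headers) {
  const failures = [];
  for (const [header, isOk] of Object.entries(EXPECTED_HEADERS)) {
    if (!isOk(headers[header])) failures.push(header);
  }
  return failures;
}

// Hypothetical response headers with and without the middleware mounted.
const withHelmet = { 'x-frame-options': 'DENY', 'x-content-type-options': 'nosniff' };
const withoutHelmet = { 'x-powered-by': 'Express' };

console.log(checkSecurityHeaders(withHelmet)); // []
console.log(checkSecurityHeaders(withoutHelmet).length); // 3
```

Because this checks observable behavior, it would keep passing if helmet renamed its internal functions, as long as the headers stay the same.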

@RandellDawson @ojeytonwilliams @moT01 So what is the plan here? Should we update the challenge description to ask the camper to install a specific version, or do we just abandon backward compatibility and go with the PR from obsessedyouth? BTW, I believe I tested all the challenges at one point with version

I think there was pretty good support for rewriting the lesson to use a specific version of helmet. If the current tests all pass with

The test instructions do not say you should use v3.21.3 for all the tests, only for the first one. They do say you should clone the repo for each and every test, though. So it's a bit misleading: should one use v3.21.3 or the latest? And what about Express, which version?

Where does it say that? As far as I can tell, it just gives you a reminder of the starting project used.

You have to keep things as-is for the next challenge; whatever came before the current challenge has to stay (unless it is explicitly said otherwise).

There are plenty of sections in the curriculum where challenges build upon previous challenges. Sometimes challenges depend on code from previous challenges, sometimes not. One challenge might ask you to add a Version field in package.json and then the next challenge might ask you to add some npm package. In this instance, the current challenge does not depend on the previous challenge. But this also doesn't mean you should or need to remove the Version field from package.json just because the current challenge doesn't depend on it or test for it. Another challenge might ask you to serve an HTML page and then the next challenge might ask you to serve a CSS file. Here the latter is dependent on the code from the previous challenge and you can not remove it.

Yes, but the opposite is not true. If all you need is one or two of the middleware functions, there is no reason to bring in the kitchen sink.

I wish this were clearer.

Perhaps this should be clarified in the tests, because the test says to enable the filter, calling

I think it is pretty clear if a previous challenge has set up something the next challenge would need. As I said, you shouldn't need to remove anything you did in a previous challenge unless it is explicitly said so.

You are specifically asked to enable the middleware by using The test checks the return of this endpoint This is what using This is what using The test is looking at

You can look in the starting boilerplate code, inside

You shouldn't keep the "previous work" either unless it explicitly says to, because you're moving from one challenge to a new one. Nothing says they are connected to one another other than their being started from the same "template-repo". Things like "assumption" should never be part of a challenge; the instructions should be very clear. Right now I'm working on the Anonymous Message Board, and the instructions there also appear incomplete to me. Sure, the instructions say

Normally a message board is like a forum. But if you try this link, you'll see it behaves in an unnatural way; it doesn't have the expected behavior. Create a thread: what do you expect to happen? I expect to see the thread. Instead, I am presented with all of the previously created threads, which makes no sense to me.

This isn't really true, because the instructions say the following: "You will add any security features to server.js". That means as long as I've added my security features to server.js, it should be good enough. And since the instructions don't specify which features, any features should suffice. Thank you, though, for taking the time to explain and discuss this with me; I appreciate it. I just tend to have problems when instructions are based on guesswork. I like my instructions clearly defined so there's no room for misunderstanding. Except at work, of course, but that's different, because then I can just ask the product owner how they want it.

I had the same problem; I managed to fix it with the following configuration in package.json:

This will work, but it changes the helmet version for the other tasks. "dependencies": {

@digenaldo this was a huge help! Thank you all for sharing your insights. |

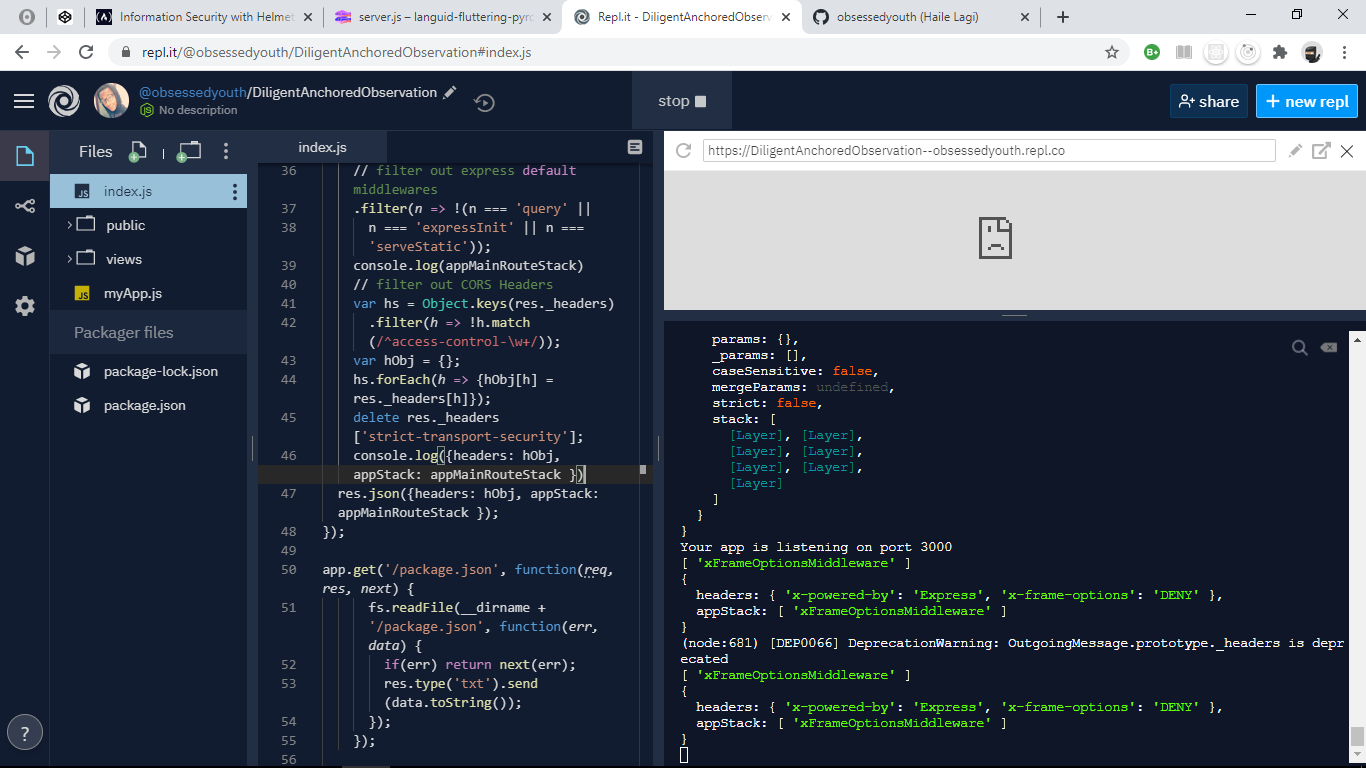

Describe your problem and how to reproduce it:

Multiple challenge tests fail for reasons that are not obviously related to the challenge.

Add a Link to the page with the problem:

https://glitch.com/edit/#!/busy-rambunctious-tern

This server fails the tests for reasons I don't understand.

I tried to replicate the server on a different service and tweak the tests, and I still don't understand the cause.

https://repl.it/@obsessedyouth/DiligentAnchoredObservation#package.json

Tell us about your browser and operating system:

Google Chrome

Version 83.0.4103.116 (Official Build) (64-bit)

Windows 10 Enterprise

If possible, add a screenshot here (you can drag and drop, png, jpg, gif, etc. in this box):
