🚀 100 Times Faster Natural Language Processing in Python

This IPython notebook contains the examples detailed in our post "🚀 100 Times Faster Natural Language Processing in Python".

To run the notebook, you will first need to:

- install Cython, e.g.

        pip install cython

- install spaCy, e.g.

        pip install spacy

- download a language model for spaCy, e.g.

        python -m spacy download en

Cython then has to be activated in the notebook as follows:

    %load_ext Cython

Fast loops in Python with a bit of Cython

In this simple example we have a large set of rectangles that we store as a list of Python objects, e.g. instances of a Rectangle class. The main job of our module is to iterate over this list in order to count how many rectangles have an area larger than a specific threshold.

Our Python module is quite simple and looks like this (see also here: https://gist.github.com/thomwolf/0709b5a72cf3620cd00d94791213d38e):

    from random import random

    class Rectangle:
        def __init__(self, w, h):
            self.w = w
            self.h = h
        def area(self):
            return self.w * self.h

    def check_rectangles_py(rectangles, threshold):
        n_out = 0
        for rectangle in rectangles:
            if rectangle.area() > threshold:
                n_out += 1
        return n_out

    def main_rectangles_slow():
        n_rectangles = 10000000
        rectangles = list(Rectangle(random(), random()) for i in range(n_rectangles))
        n_out = check_rectangles_py(rectangles, threshold=0.25)
        print(n_out)

    # Let's run it:
    main_rectangles_slow()

The check_rectangles_py function, which loops over a large number of Python objects, is our bottleneck!

Let's write it in Cython.

We indicate that the cell is a Cython cell by using the %%cython magic command. When the cell is run, the Cython code is written to a temporary file, compiled and imported back into the IPython session. The Cython code thus has to be self-contained.

    %%cython
    from cymem.cymem cimport Pool
    from random import random

    cdef struct Rectangle:
        float w
        float h

    cdef int check_rectangles_cy(Rectangle* rectangles, int n_rectangles, float threshold):
        cdef int n_out = 0
        # C arrays contain no size information => we need to state it explicitly
        for rectangle in rectangles[:n_rectangles]:
            if rectangle.w * rectangle.h > threshold:
                n_out += 1
        return n_out

    cpdef main_rectangles_fast():
        cdef int n_rectangles = 10000000
        cdef float threshold = 0.25
        cdef Pool mem = Pool()
        cdef Rectangle* rectangles = <Rectangle*>mem.alloc(n_rectangles, sizeof(Rectangle))
        for i in range(n_rectangles):
            rectangles[i].w = random()
            rectangles[i].h = random()
        n_out = check_rectangles_cy(rectangles, n_rectangles, threshold)
        print(n_out)

    main_rectangles_fast()

In this simple case we are about 20 times faster in Cython.

The size of the improvement depends a lot on how the Python program is written.

While the speed of Cython code is rather predictable once it only uses C-level objects (it usually runs directly at the fastest possible speed), the speed of Python can vary a lot depending on how your program is written and how much overhead the interpreter adds.
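As a rough illustration of how much the pure-Python side can move, the sketch below times two equivalent ways of writing the counting loop. The function names `count_with_method_calls` and `count_with_generator`, the fixed seed and the reduced size of 200,000 rectangles are assumptions made for this illustration and are not part of the original module. The timings will differ from machine to machine, so no numbers are quoted here.

```python
from random import random, seed
from timeit import timeit


class Rectangle:
    def __init__(self, w, h):
        self.w = w
        self.h = h

    def area(self):
        return self.w * self.h


def count_with_method_calls(rectangles, threshold):
    # Same shape as check_rectangles_py: one method call per rectangle
    n_out = 0
    for rectangle in rectangles:
        if rectangle.area() > threshold:
            n_out += 1
    return n_out


def count_with_generator(rectangles, threshold):
    # Hypothetical variant: inline the area computation and let sum() drive the loop
    return sum(1 for r in rectangles if r.w * r.h > threshold)


seed(0)  # fixed seed so both variants see the same data on every run
rectangles = [Rectangle(random(), random()) for _ in range(200_000)]

n_method = count_with_method_calls(rectangles, 0.25)
n_generator = count_with_generator(rectangles, 0.25)

# Time five passes of each variant over the same list
t_method = timeit(lambda: count_with_method_calls(rectangles, 0.25), number=5)
t_generator = timeit(lambda: count_with_generator(rectangles, 0.25), number=5)

print(n_method, n_generator)
print(f"method calls: {t_method:.3f}s, generator: {t_generator:.3f}s")
```

Both variants return the same count; only the amount of interpreter work per rectangle changes, which is why the Python baseline (and therefore the measured speed-up) is not a fixed quantity.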

How can you be sure your Cython program only uses C-level structures?

Use the -a or --annotate flag in the %%cython magic command to display a code analysis in which the lines accessing and using Python objects are highlighted in yellow.

Here is how the code analysis of the previous program looks:

    %%cython -a
    from cymem.cymem cimport Pool
    from random import random

    cdef struct Rectangle:
        float w
        float h

    cdef int check_rectangles_cy(Rectangle* rectangles, int n_rectangles, float threshold):
        cdef int n_out = 0
        # C arrays contain no size information => we need to state it explicitly
        for rectangle in rectangles[:n_rectangles]:
            if rectangle.w * rectangle.h > threshold:
                n_out += 1
        return n_out

    cpdef main_rectangles_fast():
        cdef int n_rectangles = 10000000
        cdef float threshold = 0.25
        cdef Pool mem = Pool()
        cdef Rectangle* rectangles = <Rectangle*>mem.alloc(n_rectangles, sizeof(Rectangle))
        for i in range(n_rectangles):
            rectangles[i].w = random()
            rectangles[i].h = random()
        n_out = check_rectangles_cy(rectangles, n_rectangles, threshold)
        print(n_out)

The important element here is that lines 11 to 13 are not highlighted, which means they will run at the fastest possible speed.

It's OK to have yellow lines in the main_rectangles_fast function, as this function is only called once when we execute our program. The yellow lines 22 and 23 are initialization lines that we could avoid by using a C-level random function like stdlib rand(), but we didn't want to clutter this example.

Now here is an example of the previous Cython program without this optimization (with a Python object in the loop):

    %%cython -a
    from cymem.cymem cimport Pool
    from random import random

    cdef struct Rectangle:
        float w
        float h

    cdef int check_rectangles_cy(Rectangle* rectangles, int n_rectangles, float threshold):
        # ========== MODIFICATION ===========
        # We changed the following line from `cdef int n_out = 0` to
        n_out = 0
        # n_out is not defined as an `int` anymore and is now thus a regular Python object
        # ===================================
        for rectangle in rectangles[:n_rectangles]:
            if rectangle.w * rectangle.h > threshold:
                n_out += 1
        return n_out

    cpdef main_rectangles_not_so_fast():
        cdef int n_rectangles = 10000000
        cdef float threshold = 0.25
        cdef Pool mem = Pool()
        cdef Rectangle* rectangles = <Rectangle*>mem.alloc(n_rectangles, sizeof(Rectangle))
        for i in range(n_rectangles):
            rectangles[i].w = random()
            rectangles[i].h = random()
        n_out = check_rectangles_cy(rectangles, n_rectangles, threshold)
        print(n_out)

We can see that line 16 in the loop of check_rectangles_cy is highlighted, indicating that the Cython compiler had to add some Python API overhead.

💫 Using Cython with spaCy to speed up NLP

Our blog post goes into some detail about the way spaCy can help you speed up your code by using Cython for NLP.

Here is a short summary of the post:

- the official Cython documentation advises against the use of C strings: "Generally speaking: unless you know what you are doing, avoid using C strings where possible and use Python string objects instead."
- spaCy lets us overcome this problem by:
    - converting all strings to 64-bit hashes using a look-up between Python unicode strings and 64-bit hashes called the StringStore
    - giving us access to fully populated C-level structures of the document and vocabulary called TokenC and LexemeC

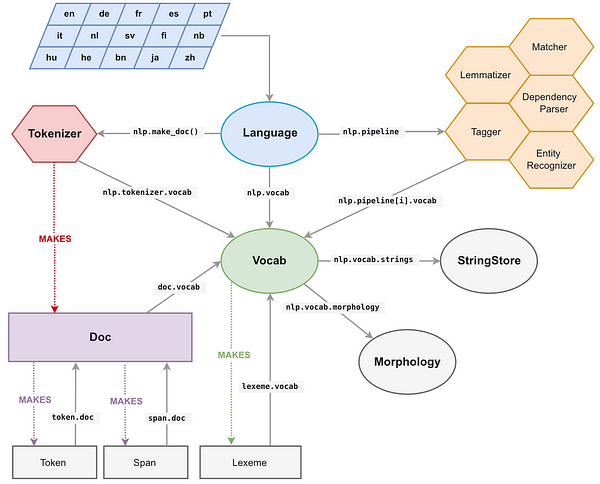

The StringStore object is accessible from everywhere in spaCy and from every object (see on the left), for example as nlp.vocab.strings, doc.vocab.strings or span.doc.vocab.strings.
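The look-up idea can be sketched in plain Python. This is a toy stand-in and not spaCy's StringStore: the class name `ToyStringStore` is hypothetical, and it derives its 64-bit keys with `hashlib.blake2b` purely for illustration, whereas spaCy relies on its own hash function. The point is only to show why comparing hashes is attractive: once every string is mapped to an integer, testing a token against "run" and "NN" becomes two integer comparisons.

```python
import hashlib


class ToyStringStore:
    """Toy two-way look-up between strings and 64-bit integer keys."""

    def __init__(self):
        self._by_key = {}

    @staticmethod
    def _hash(string):
        # 8-byte digest -> unsigned 64-bit integer
        # (assumption: any stable 64-bit hash is good enough for this sketch)
        digest = hashlib.blake2b(string.encode("utf8"), digest_size=8).digest()
        return int.from_bytes(digest, "little")

    def add(self, string):
        # Remember the string so the key can be turned back into text later
        key = self._hash(string)
        self._by_key[key] = string
        return key

    def __getitem__(self, item):
        # str -> key, int -> original string
        if isinstance(item, str):
            return self._hash(item)
        return self._by_key[item]


strings = ToyStringStore()
run_key = strings.add("run")
tag_key = strings.add("NN")

# Hypothetical (word, tag) pairs standing in for a parsed document
tokens = [("run", "NN"), ("run", "VB"), ("walk", "NN")]
encoded = [(strings.add(w), strings.add(t)) for w, t in tokens]

# Comparing tokens now means comparing integers instead of strings
n_out = sum(1 for w, t in encoded if w == run_key and t == tag_key)
print(n_out, strings[run_key])
```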

Here is now a simple example of NLP processing in Cython.

First let's build a list of big documents and parse them using spaCy (this takes a few minutes):

    import urllib.request
    import spacy
    # Build a dataset of 10 parsed documents extracted from the Wikitext-2 dataset
    with urllib.request.urlopen('https://raw.githubusercontent.com/pytorch/examples/master/word_language_model/data/wikitext-2/valid.txt') as response:
        text = response.read()
    nlp = spacy.load('en')
    doc_list = list(nlp(text[:800000].decode('utf8')) for i in range(10))

We have about 1.7 million tokens ("words") in our dataset:

    sum(len(doc) for doc in doc_list)

We want to perform some NLP task on this dataset.

For example, we would like to count the number of times the word "run" is used as a noun in the dataset (i.e. tagged with an "NN" Part-Of-Speech tag).

A Python loop to do that is short and straightforward:

    def slow_loop(doc_list, word, tag):
        n_out = 0
        for doc in doc_list:
            for tok in doc:
                if tok.lower_ == word and tok.tag_ == tag:
                    n_out += 1
        return n_out

    def main_nlp_slow(doc_list):
        n_out = slow_loop(doc_list, 'run', 'NN')
        print(n_out)

    # But it's also quite slow
    main_nlp_slow(doc_list)

On my laptop this code takes about 1.4 seconds to get the answer.

Let's try to speed this up with spaCy and a bit of Cython.

First, we have to think about the data structure. We will need a C-level array for the dataset, with pointers to each document's TokenC array. We'll also need to convert the strings we use for testing ("run" and "NN") to 64-bit hashes. When all the data required for our processing is in C-level objects, we can then iterate at full C speed over the dataset.

Here is how this example can be written in Cython with spaCy:

    %%cython -+
    import numpy # Sometimes compilation fails with a numpy import error if we don't import numpy
    from cymem.cymem cimport Pool
    from spacy.tokens.doc cimport Doc
    from spacy.typedefs cimport hash_t
    from spacy.structs cimport TokenC

    cdef struct DocElement:
        TokenC* c
        int length

    cdef int fast_loop(DocElement* docs, int n_docs, hash_t word, hash_t tag):
        cdef int n_out = 0
        for doc in docs[:n_docs]:
            for c in doc.c[:doc.length]:
                if c.lex.lower == word and c.tag == tag:
                    n_out += 1
        return n_out

    cpdef main_nlp_fast(doc_list):
        cdef int i, n_out, n_docs = len(doc_list)
        cdef Pool mem = Pool()
        cdef DocElement* docs = <DocElement*>mem.alloc(n_docs, sizeof(DocElement))
        cdef Doc doc
        for i, doc in enumerate(doc_list): # Populate our database structure
            docs[i].c = doc.c
            docs[i].length = (<Doc>doc).length
        word_hash = doc.vocab.strings.add('run')
        tag_hash = doc.vocab.strings.add('NN')
        n_out = fast_loop(docs, n_docs, word_hash, tag_hash)
        print(n_out)

    main_nlp_fast(doc_list)

The code is a bit longer because we have to declare and populate the C structures in main_nlp_fast before calling our Cython function.

But it is also a lot faster! In my Jupyter notebook, this Cython code takes about 30 milliseconds to run on my laptop, which is about 50 times faster than our previous pure Python loop.

The absolute speed is also impressive for a module written in an interactive Jupyter notebook which can interface natively with other Python modules and functions: scanning ~1.7 million words in 30 ms means we are processing a whopping 56 million words per second.
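The two headline figures follow from the timings quoted in this notebook, and the arithmetic can be checked in a few lines. The variable names are chosen for this sketch only, and the inputs are the rounded measurements given in the text (about 1.7 million tokens, about 1.4 seconds, about 30 milliseconds), so the results are approximate as well.

```python
n_tokens = 1_700_000  # ~1.7 million tokens in doc_list
t_python = 1.4        # seconds for the pure Python loop
t_cython = 0.030      # seconds for the Cython loop

# Ratio of the two run times: a little under 50
speedup = t_python / t_cython

# Tokens scanned per second by the Cython loop: between 56 and 57 million
words_per_second = n_tokens / t_cython

print(f"speed-up: about {speedup:.1f}x")
print(f"throughput: about {words_per_second / 1e6:.1f} million words per second")
```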
