+

+

+## Installation Steps

+

+1. Download the `LocalAI.dmg` file from the link above

+2. Open the downloaded DMG file

+3. Drag the LocalAI application to your Applications folder

+4. Launch LocalAI from your Applications folder

+

+## Known Issues

+

+> **Note**: The DMGs are not signed by Apple and may show as quarantined.

+>

+> **Workaround**: See [this issue](https://github.com/mudler/LocalAI/issues/6268) for details on how to bypass the quarantine.

+>

+> **Fix tracking**: The signing issue is being tracked in [this issue](https://github.com/mudler/LocalAI/issues/6244).

+

+## Next Steps

+

+After installing LocalAI, you can:

+

+- Access the WebUI at `http://localhost:8080`

+- [Try it out with examples](/basics/try/)

+- [Learn about available models](/models/)

+- [Customize your configuration](/advanced/model-configuration/)
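
Editorial sketch, not part of the patch: after launching the app, one way to confirm that something is answering on the WebUI address listed above is a small reachability probe. The helper name `webui_is_up` and the timeout value are illustrative assumptions; only the `http://localhost:8080` address comes from the text.

```python
import urllib.error
import urllib.request


def webui_is_up(base_url: str = "http://localhost:8080", timeout: float = 2.0) -> bool:
    """Return True if an HTTP server answers at base_url (hypothetical helper)."""
    try:
        with urllib.request.urlopen(base_url, timeout=timeout) as resp:
            # Any 2xx/3xx answer means the server is reachable.
            return 200 <= resp.status < 400
    except urllib.error.HTTPError:
        # The server answered with an error status (for example when API keys
        # are enforced), so it is still reachable.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timed out, or host not resolvable.
        return False


if __name__ == "__main__":
    print("LocalAI WebUI reachable:", webui_is_up())
```

The probe only checks reachability; it does not verify that a model is installed or that the API is ready to serve requests.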

diff --git a/docs/content/docs/integrations.md b/docs/content/integrations.md

similarity index 100%

rename from docs/content/docs/integrations.md

rename to docs/content/integrations.md

diff --git a/docs/content/docs/overview.md b/docs/content/overview.md

similarity index 77%

rename from docs/content/docs/overview.md

rename to docs/content/overview.md

index 5e3e6d742fb2..850991b98d91 100644

--- a/docs/content/docs/overview.md

+++ b/docs/content/overview.md

@@ -5,11 +5,11 @@ toc = true

description = "What is LocalAI?"

tags = ["Beginners"]

categories = [""]

+url = "/docs/overview"

author = "Ettore Di Giacinto"

icon = "info"

+++

-# Welcome to LocalAI

LocalAI is your complete AI stack for running AI models locally. It's designed to be simple, efficient, and accessible, providing a drop-in replacement for OpenAI's API while keeping your data private and secure.

@@ -51,34 +51,17 @@ LocalAI is more than just a single tool - it's a complete ecosystem:

## Getting Started

+LocalAI can be installed in several ways. **Docker is the recommended installation method** for most users as it provides the easiest setup and works across all platforms.

-### macOS Download

+### Recommended: Docker Installation

-You can use the DMG application for Mac:

-

-

-

-  -

-

-> Note: the DMGs are not signed by Apple shows as quarantined. See https://github.com/mudler/LocalAI/issues/6268 for a workaround, fix is tracked here: https://github.com/mudler/LocalAI/issues/6244

-

-## Docker

-

-You can use Docker for a quick start:

+The quickest way to get started with LocalAI is using Docker:

```bash

-docker run -p 8080:8080 --name local-ai -ti localai/localai:latest-aio-cpu

+docker run -p 8080:8080 --name local-ai -ti localai/localai:latest

```

-For more detailed installation options and configurations, see our [Getting Started guide](/basics/getting_started/).

-

-## One-liner

-

-The fastest way to get started is with our one-line installer (Linux):

-

-```bash

-curl https://localai.io/install.sh | sh

-```

+For complete installation instructions including Docker, macOS, Linux, Kubernetes, and building from source, see the [Installation guide](/installation/).

## Key Features

@@ -104,7 +87,7 @@ LocalAI is a community-driven project. You can:

Ready to dive in? Here are some recommended next steps:

-1. [Install LocalAI](/basics/getting_started/)

+1. **[Install LocalAI](/installation/)** - Start with [Docker installation](/installation/docker/) (recommended) or choose another method

2. [Explore available models](https://models.localai.io)

3. [Model compatibility](/model-compatibility/)

4. [Try out examples](https://github.com/mudler/LocalAI-examples)

diff --git a/docs/content/docs/reference/_index.en.md b/docs/content/reference/_index.en.md

similarity index 92%

rename from docs/content/docs/reference/_index.en.md

rename to docs/content/reference/_index.en.md

index d8a8f2a71a06..ea6a150bf1fd 100644

--- a/docs/content/docs/reference/_index.en.md

+++ b/docs/content/reference/_index.en.md

@@ -2,6 +2,7 @@

weight: 23

title: "References"

description: "Reference"

+type: chapter

icon: menu_book

lead: ""

date: 2020-10-06T08:49:15+00:00

diff --git a/docs/content/docs/reference/architecture.md b/docs/content/reference/architecture.md

similarity index 96%

rename from docs/content/docs/reference/architecture.md

rename to docs/content/reference/architecture.md

index 23abe11171cb..9f701bc5d325 100644

--- a/docs/content/docs/reference/architecture.md

+++ b/docs/content/reference/architecture.md

@@ -7,7 +7,7 @@ weight = 25

LocalAI is an API written in Go that serves as an OpenAI shim, enabling software already developed with OpenAI SDKs to seamlessly integrate with LocalAI. It can be effortlessly implemented as a substitute, even on consumer-grade hardware. This capability is achieved by employing various C++ backends, including [ggml](https://github.com/ggerganov/ggml), to perform inference on LLMs using both CPU and, if desired, GPU. Internally LocalAI backends are just gRPC server, indeed you can specify and build your own gRPC server and extend LocalAI in runtime as well. It is possible to specify external gRPC server and/or binaries that LocalAI will manage internally.

-LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "docs/reference/compatibility-table" %}}) to learn about all the components of LocalAI.

+LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "reference/compatibility-table" %}}) to learn about all the components of LocalAI.
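
Editorial sketch, not part of the patch: to make the "OpenAI shim" description in `architecture.md` concrete, the snippet below builds a chat-completion request against a local instance using only the Python standard library. The base URL matches the port published by the `docker run` command earlier in this diff; the `/v1/chat/completions` path follows the OpenAI API convention, and the model name `my-model` and both function names are placeholders assumed for illustration.

```python
import json
import urllib.request


def build_chat_request(prompt, model="my-model", base_url="http://localhost:8080"):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # placeholder: use a model name installed on your instance
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request, timeout=60.0):
    """Send the request and decode the JSON response body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__":
    req = build_chat_request("Say hello in one sentence.")
    print(req.get_method(), req.full_url)
    # Uncomment once a LocalAI instance with a matching model is running:
    # print(send(req))
```

Building the request separately from sending it keeps the example inspectable without a running server; an existing OpenAI SDK client pointed at the same base URL would be the more usual route.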

diff --git a/docs/content/docs/reference/binaries.md b/docs/content/reference/binaries.md

similarity index 96%

rename from docs/content/docs/reference/binaries.md

rename to docs/content/reference/binaries.md

index aa818ec7468e..224c72685311 100644

--- a/docs/content/docs/reference/binaries.md

+++ b/docs/content/reference/binaries.md

@@ -32,10 +32,10 @@ Otherwise, here are the links to the binaries:

| MacOS (arm64) | [Download](https://github.com/mudler/LocalAI/releases/download/{{< version >}}/local-ai-Darwin-arm64) |

-{{% alert icon="⚡" context="warning" %}}

+{{% notice icon="⚡" context="warning" %}}

Binaries do have limited support compared to container images:

- Python-based backends are not shipped with binaries (e.g. `bark`, `diffusers` or `transformers`)

- MacOS binaries and Linux-arm64 do not ship TTS nor `stablediffusion-cpp` backends

- Linux binaries do not ship `stablediffusion-cpp` backend

-{{% /alert %}}

+ {{% /notice %}}

diff --git a/docs/content/docs/reference/cli-reference.md b/docs/content/reference/cli-reference.md

similarity index 91%

rename from docs/content/docs/reference/cli-reference.md

rename to docs/content/reference/cli-reference.md

index 60569b66b746..356e7a14e045 100644

--- a/docs/content/docs/reference/cli-reference.md

+++ b/docs/content/reference/cli-reference.md

@@ -7,21 +7,18 @@ url = '/reference/cli-reference'

Complete reference for all LocalAI command-line interface (CLI) parameters and environment variables.

-> **Note:** All CLI flags can also be set via environment variables. Environment variables take precedence over CLI flags. See [.env files]({{%relref "docs/advanced/advanced-usage#env-files" %}}) for configuration file support.

+> **Note:** All CLI flags can also be set via environment variables. Environment variables take precedence over CLI flags. See [.env files]({{%relref "advanced/advanced-usage#env-files" %}}) for configuration file support.

## Global Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `-h, --help` | | Show context-sensitive help | |

| `--log-level` | `info` | Set the level of logs to output [error,warn,info,debug,trace] | `$LOCALAI_LOG_LEVEL` |

| `--debug` | `false` | **DEPRECATED** - Use `--log-level=debug` instead. Enable debug logging | `$LOCALAI_DEBUG`, `$DEBUG` |

-{{< /table >}}

## Storage Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--models-path` | `BASEPATH/models` | Path containing models used for inferencing | `$LOCALAI_MODELS_PATH`, `$MODELS_PATH` |

@@ -30,11 +27,9 @@ Complete reference for all LocalAI command-line interface (CLI) parameters and e

| `--localai-config-dir` | `BASEPATH/configuration` | Directory for dynamic loading of certain configuration files (currently api_keys.json and external_backends.json) | `$LOCALAI_CONFIG_DIR` |

| `--localai-config-dir-poll-interval` | | Time duration to poll the LocalAI Config Dir if your system has broken fsnotify events (example: `1m`) | `$LOCALAI_CONFIG_DIR_POLL_INTERVAL` |

| `--models-config-file` | | YAML file containing a list of model backend configs (alias: `--config-file`) | `$LOCALAI_MODELS_CONFIG_FILE`, `$CONFIG_FILE` |

-{{< /table >}}

## Backend Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--backends-path` | `BASEPATH/backends` | Path containing backends used for inferencing | `$LOCALAI_BACKENDS_PATH`, `$BACKENDS_PATH` |

@@ -50,13 +45,11 @@ Complete reference for all LocalAI command-line interface (CLI) parameters and e

| `--watchdog-idle-timeout` | `15m` | Threshold beyond which an idle backend should be stopped | `$LOCALAI_WATCHDOG_IDLE_TIMEOUT`, `$WATCHDOG_IDLE_TIMEOUT` |

| `--enable-watchdog-busy` | `false` | Enable watchdog for stopping backends that are busy longer than the watchdog-busy-timeout | `$LOCALAI_WATCHDOG_BUSY`, `$WATCHDOG_BUSY` |

| `--watchdog-busy-timeout` | `5m` | Threshold beyond which a busy backend should be stopped | `$LOCALAI_WATCHDOG_BUSY_TIMEOUT`, `$WATCHDOG_BUSY_TIMEOUT` |

-{{< /table >}}

-For more information on VRAM management, see [VRAM and Memory Management]({{%relref "docs/advanced/vram-management" %}}).

+For more information on VRAM management, see [VRAM and Memory Management]({{%relref "advanced/vram-management" %}}).

## Models Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--galleries` | | JSON list of galleries | `$LOCALAI_GALLERIES`, `$GALLERIES` |

@@ -65,23 +58,19 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--models` | | A list of model configuration URLs to load | `$LOCALAI_MODELS`, `$MODELS` |

| `--preload-models-config` | | A list of models to apply at startup. Path to a YAML config file | `$LOCALAI_PRELOAD_MODELS_CONFIG`, `$PRELOAD_MODELS_CONFIG` |

| `--load-to-memory` | | A list of models to load into memory at startup | `$LOCALAI_LOAD_TO_MEMORY`, `$LOAD_TO_MEMORY` |

-{{< /table >}}

> **Note:** You can also pass model configuration URLs as positional arguments: `local-ai run MODEL_URL1 MODEL_URL2 ...`

## Performance Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--f16` | `false` | Enable GPU acceleration | `$LOCALAI_F16`, `$F16` |

| `-t, --threads` | | Number of threads used for parallel computation. Usage of the number of physical cores in the system is suggested | `$LOCALAI_THREADS`, `$THREADS` |

| `--context-size` | | Default context size for models | `$LOCALAI_CONTEXT_SIZE`, `$CONTEXT_SIZE` |

-{{< /table >}}

## API Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--address` | `:8080` | Bind address for the API server | `$LOCALAI_ADDRESS`, `$ADDRESS` |

@@ -94,11 +83,9 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--disable-gallery-endpoint` | `false` | Disable the gallery endpoints | `$LOCALAI_DISABLE_GALLERY_ENDPOINT`, `$DISABLE_GALLERY_ENDPOINT` |

| `--disable-metrics-endpoint` | `false` | Disable the `/metrics` endpoint | `$LOCALAI_DISABLE_METRICS_ENDPOINT`, `$DISABLE_METRICS_ENDPOINT` |

| `--machine-tag` | | If not empty, add that string to Machine-Tag header in each response. Useful to track response from different machines using multiple P2P federated nodes | `$LOCALAI_MACHINE_TAG`, `$MACHINE_TAG` |

-{{< /table >}}

## Hardening Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--disable-predownload-scan` | `false` | If true, disables the best-effort security scanner before downloading any files | `$LOCALAI_DISABLE_PREDOWNLOAD_SCAN` |

@@ -106,11 +93,9 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--use-subtle-key-comparison` | `false` | If true, API Key validation comparisons will be performed using constant-time comparisons rather than simple equality. This trades off performance on each request for resilience against timing attacks | `$LOCALAI_SUBTLE_KEY_COMPARISON` |

| `--disable-api-key-requirement-for-http-get` | `false` | If true, a valid API key is not required to issue GET requests to portions of the web UI. This should only be enabled in secure testing environments | `$LOCALAI_DISABLE_API_KEY_REQUIREMENT_FOR_HTTP_GET` |

| `--http-get-exempted-endpoints` | `^/$,^/browse/?$,^/talk/?$,^/p2p/?$,^/chat/?$,^/text2image/?$,^/tts/?$,^/static/.*$,^/swagger.*$` | If `--disable-api-key-requirement-for-http-get` is overridden to true, this is the list of endpoints to exempt. Only adjust this in case of a security incident or as a result of a personal security posture review | `$LOCALAI_HTTP_GET_EXEMPTED_ENDPOINTS` |

-{{< /table >}}

## P2P Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--p2p` | `false` | Enable P2P mode | `$LOCALAI_P2P`, `$P2P` |

@@ -119,7 +104,6 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--p2ptoken` | | Token for P2P mode (optional) | `$LOCALAI_P2P_TOKEN`, `$P2P_TOKEN`, `$TOKEN` |

| `--p2p-network-id` | | Network ID for P2P mode, can be set arbitrarily by the user for grouping a set of instances | `$LOCALAI_P2P_NETWORK_ID`, `$P2P_NETWORK_ID` |

| `--federated` | `false` | Enable federated instance | `$LOCALAI_FEDERATED`, `$FEDERATED` |

-{{< /table >}}

## Other Commands

@@ -142,20 +126,16 @@ Use `local-ai

-

-

-> Note: the DMGs are not signed by Apple shows as quarantined. See https://github.com/mudler/LocalAI/issues/6268 for a workaround, fix is tracked here: https://github.com/mudler/LocalAI/issues/6244

-

-## Docker

-

-You can use Docker for a quick start:

+The quickest way to get started with LocalAI is using Docker:

```bash

-docker run -p 8080:8080 --name local-ai -ti localai/localai:latest-aio-cpu

+docker run -p 8080:8080 --name local-ai -ti localai/localai:latest

```

-For more detailed installation options and configurations, see our [Getting Started guide](/basics/getting_started/).

-

-## One-liner

-

-The fastest way to get started is with our one-line installer (Linux):

-

-```bash

-curl https://localai.io/install.sh | sh

-```

+For complete installation instructions including Docker, macOS, Linux, Kubernetes, and building from source, see the [Installation guide](/installation/).

## Key Features

@@ -104,7 +87,7 @@ LocalAI is a community-driven project. You can:

Ready to dive in? Here are some recommended next steps:

-1. [Install LocalAI](/basics/getting_started/)

+1. **[Install LocalAI](/installation/)** - Start with [Docker installation](/installation/docker/) (recommended) or choose another method

2. [Explore available models](https://models.localai.io)

3. [Model compatibility](/model-compatibility/)

4. [Try out examples](https://github.com/mudler/LocalAI-examples)

diff --git a/docs/content/docs/reference/_index.en.md b/docs/content/reference/_index.en.md

similarity index 92%

rename from docs/content/docs/reference/_index.en.md

rename to docs/content/reference/_index.en.md

index d8a8f2a71a06..ea6a150bf1fd 100644

--- a/docs/content/docs/reference/_index.en.md

+++ b/docs/content/reference/_index.en.md

@@ -2,6 +2,7 @@

weight: 23

title: "References"

description: "Reference"

+type: chapter

icon: menu_book

lead: ""

date: 2020-10-06T08:49:15+00:00

diff --git a/docs/content/docs/reference/architecture.md b/docs/content/reference/architecture.md

similarity index 96%

rename from docs/content/docs/reference/architecture.md

rename to docs/content/reference/architecture.md

index 23abe11171cb..9f701bc5d325 100644

--- a/docs/content/docs/reference/architecture.md

+++ b/docs/content/reference/architecture.md

@@ -7,7 +7,7 @@ weight = 25

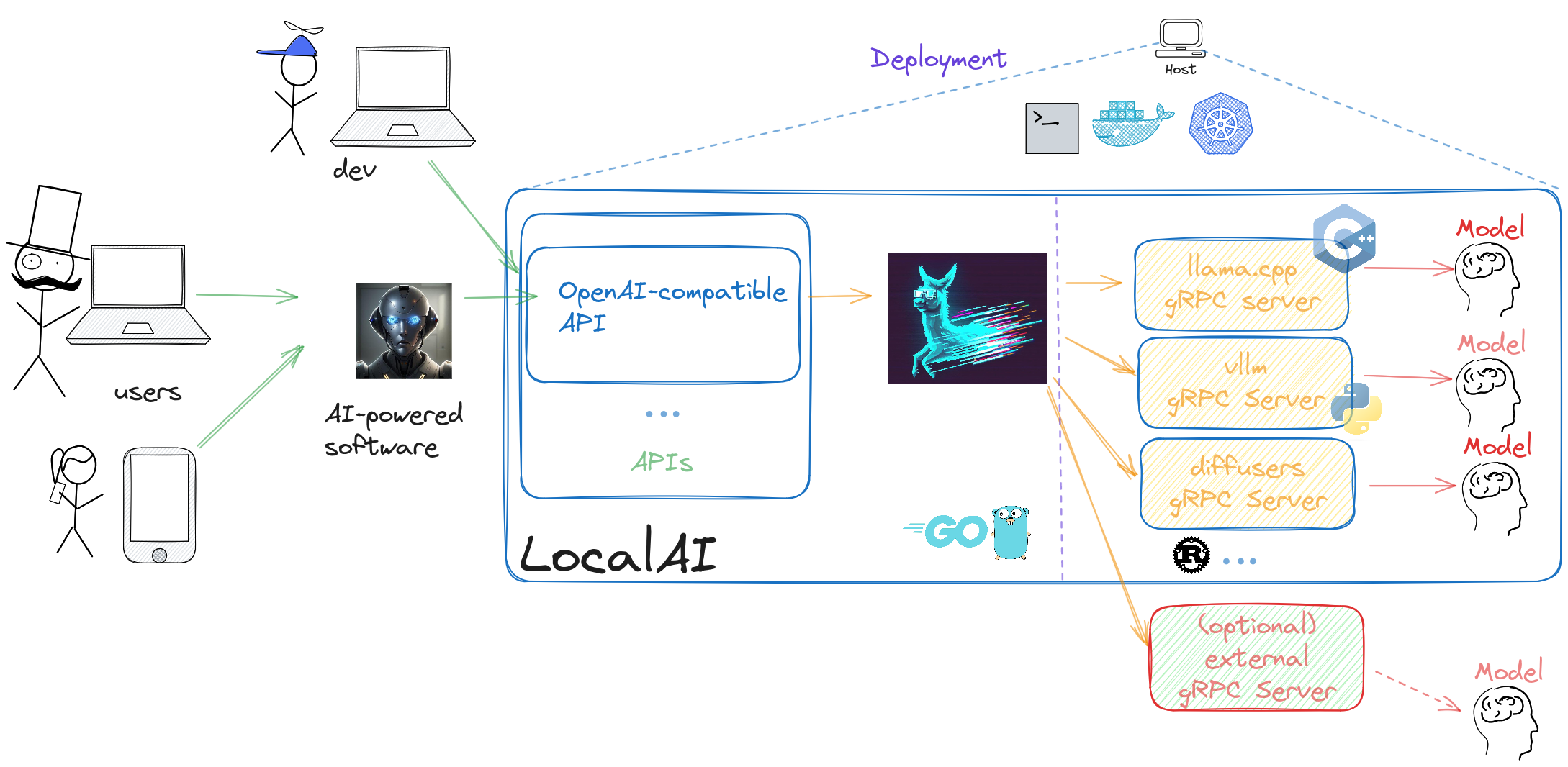

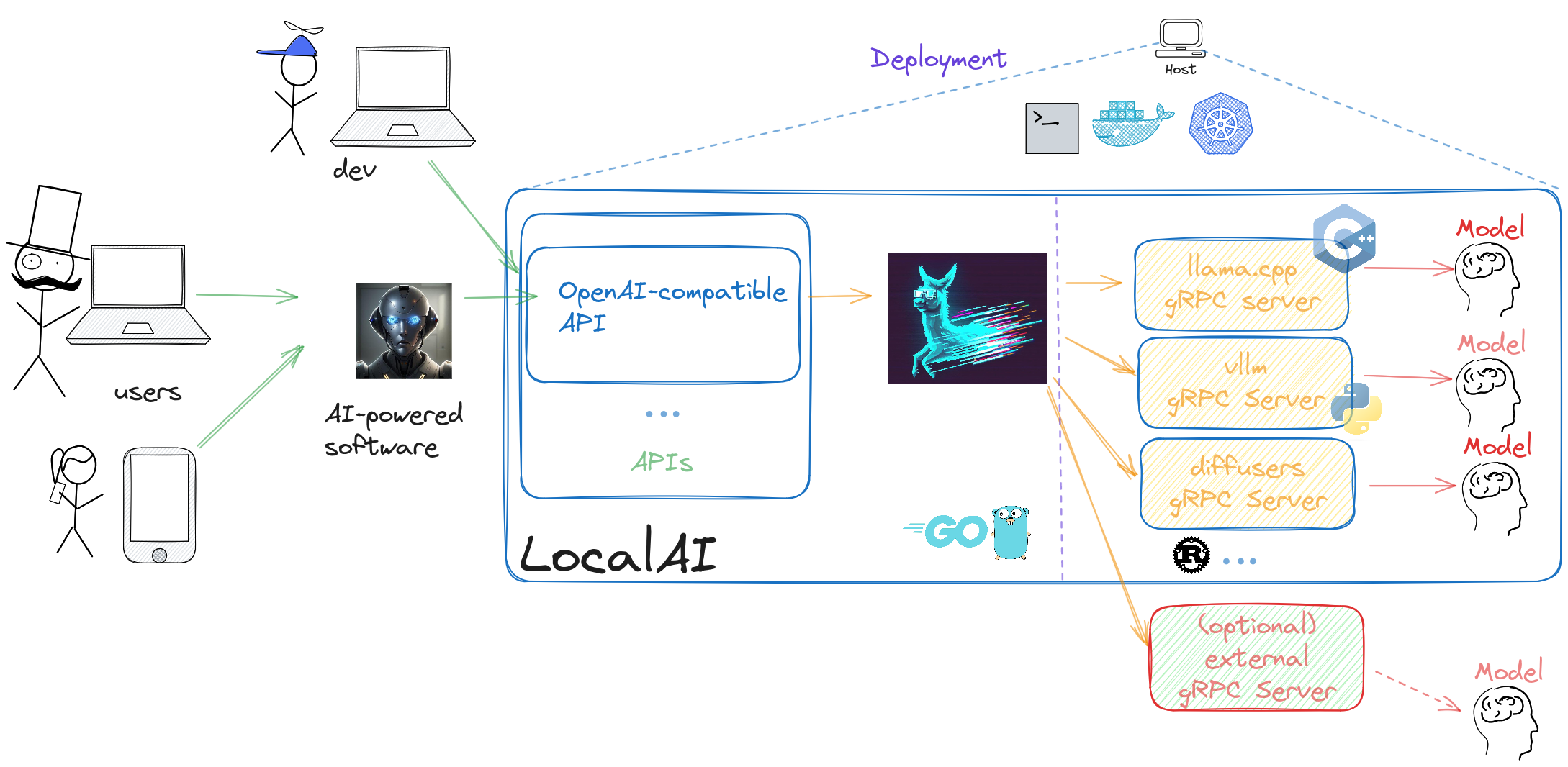

LocalAI is an API written in Go that serves as an OpenAI shim, enabling software already developed with OpenAI SDKs to seamlessly integrate with LocalAI. It can be effortlessly implemented as a substitute, even on consumer-grade hardware. This capability is achieved by employing various C++ backends, including [ggml](https://github.com/ggerganov/ggml), to perform inference on LLMs using both CPU and, if desired, GPU. Internally LocalAI backends are just gRPC server, indeed you can specify and build your own gRPC server and extend LocalAI in runtime as well. It is possible to specify external gRPC server and/or binaries that LocalAI will manage internally.

-LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "docs/reference/compatibility-table" %}}) to learn about all the components of LocalAI.

+LocalAI uses a mixture of backends written in various languages (C++, Golang, Python, ...). You can check [the model compatibility table]({{%relref "reference/compatibility-table" %}}) to learn about all the components of LocalAI.

diff --git a/docs/content/docs/reference/binaries.md b/docs/content/reference/binaries.md

similarity index 96%

rename from docs/content/docs/reference/binaries.md

rename to docs/content/reference/binaries.md

index aa818ec7468e..224c72685311 100644

--- a/docs/content/docs/reference/binaries.md

+++ b/docs/content/reference/binaries.md

@@ -32,10 +32,10 @@ Otherwise, here are the links to the binaries:

| MacOS (arm64) | [Download](https://github.com/mudler/LocalAI/releases/download/{{< version >}}/local-ai-Darwin-arm64) |

-{{% alert icon="⚡" context="warning" %}}

+{{% notice icon="⚡" context="warning" %}}

Binaries do have limited support compared to container images:

- Python-based backends are not shipped with binaries (e.g. `bark`, `diffusers` or `transformers`)

- MacOS binaries and Linux-arm64 do not ship TTS nor `stablediffusion-cpp` backends

- Linux binaries do not ship `stablediffusion-cpp` backend

-{{% /alert %}}

+ {{% /notice %}}

diff --git a/docs/content/docs/reference/cli-reference.md b/docs/content/reference/cli-reference.md

similarity index 91%

rename from docs/content/docs/reference/cli-reference.md

rename to docs/content/reference/cli-reference.md

index 60569b66b746..356e7a14e045 100644

--- a/docs/content/docs/reference/cli-reference.md

+++ b/docs/content/reference/cli-reference.md

@@ -7,21 +7,18 @@ url = '/reference/cli-reference'

Complete reference for all LocalAI command-line interface (CLI) parameters and environment variables.

-> **Note:** All CLI flags can also be set via environment variables. Environment variables take precedence over CLI flags. See [.env files]({{%relref "docs/advanced/advanced-usage#env-files" %}}) for configuration file support.

+> **Note:** All CLI flags can also be set via environment variables. Environment variables take precedence over CLI flags. See [.env files]({{%relref "advanced/advanced-usage#env-files" %}}) for configuration file support.

## Global Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `-h, --help` | | Show context-sensitive help | |

| `--log-level` | `info` | Set the level of logs to output [error,warn,info,debug,trace] | `$LOCALAI_LOG_LEVEL` |

| `--debug` | `false` | **DEPRECATED** - Use `--log-level=debug` instead. Enable debug logging | `$LOCALAI_DEBUG`, `$DEBUG` |

-{{< /table >}}

## Storage Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--models-path` | `BASEPATH/models` | Path containing models used for inferencing | `$LOCALAI_MODELS_PATH`, `$MODELS_PATH` |

@@ -30,11 +27,9 @@ Complete reference for all LocalAI command-line interface (CLI) parameters and e

| `--localai-config-dir` | `BASEPATH/configuration` | Directory for dynamic loading of certain configuration files (currently api_keys.json and external_backends.json) | `$LOCALAI_CONFIG_DIR` |

| `--localai-config-dir-poll-interval` | | Time duration to poll the LocalAI Config Dir if your system has broken fsnotify events (example: `1m`) | `$LOCALAI_CONFIG_DIR_POLL_INTERVAL` |

| `--models-config-file` | | YAML file containing a list of model backend configs (alias: `--config-file`) | `$LOCALAI_MODELS_CONFIG_FILE`, `$CONFIG_FILE` |

-{{< /table >}}

## Backend Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--backends-path` | `BASEPATH/backends` | Path containing backends used for inferencing | `$LOCALAI_BACKENDS_PATH`, `$BACKENDS_PATH` |

@@ -50,13 +45,11 @@ Complete reference for all LocalAI command-line interface (CLI) parameters and e

| `--watchdog-idle-timeout` | `15m` | Threshold beyond which an idle backend should be stopped | `$LOCALAI_WATCHDOG_IDLE_TIMEOUT`, `$WATCHDOG_IDLE_TIMEOUT` |

| `--enable-watchdog-busy` | `false` | Enable watchdog for stopping backends that are busy longer than the watchdog-busy-timeout | `$LOCALAI_WATCHDOG_BUSY`, `$WATCHDOG_BUSY` |

| `--watchdog-busy-timeout` | `5m` | Threshold beyond which a busy backend should be stopped | `$LOCALAI_WATCHDOG_BUSY_TIMEOUT`, `$WATCHDOG_BUSY_TIMEOUT` |

-{{< /table >}}

-For more information on VRAM management, see [VRAM and Memory Management]({{%relref "docs/advanced/vram-management" %}}).

+For more information on VRAM management, see [VRAM and Memory Management]({{%relref "advanced/vram-management" %}}).

## Models Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--galleries` | | JSON list of galleries | `$LOCALAI_GALLERIES`, `$GALLERIES` |

@@ -65,23 +58,19 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--models` | | A list of model configuration URLs to load | `$LOCALAI_MODELS`, `$MODELS` |

| `--preload-models-config` | | A list of models to apply at startup. Path to a YAML config file | `$LOCALAI_PRELOAD_MODELS_CONFIG`, `$PRELOAD_MODELS_CONFIG` |

| `--load-to-memory` | | A list of models to load into memory at startup | `$LOCALAI_LOAD_TO_MEMORY`, `$LOAD_TO_MEMORY` |

-{{< /table >}}

> **Note:** You can also pass model configuration URLs as positional arguments: `local-ai run MODEL_URL1 MODEL_URL2 ...`

## Performance Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--f16` | `false` | Enable GPU acceleration | `$LOCALAI_F16`, `$F16` |

| `-t, --threads` | | Number of threads used for parallel computation. Usage of the number of physical cores in the system is suggested | `$LOCALAI_THREADS`, `$THREADS` |

| `--context-size` | | Default context size for models | `$LOCALAI_CONTEXT_SIZE`, `$CONTEXT_SIZE` |

-{{< /table >}}

## API Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--address` | `:8080` | Bind address for the API server | `$LOCALAI_ADDRESS`, `$ADDRESS` |

@@ -94,11 +83,9 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--disable-gallery-endpoint` | `false` | Disable the gallery endpoints | `$LOCALAI_DISABLE_GALLERY_ENDPOINT`, `$DISABLE_GALLERY_ENDPOINT` |

| `--disable-metrics-endpoint` | `false` | Disable the `/metrics` endpoint | `$LOCALAI_DISABLE_METRICS_ENDPOINT`, `$DISABLE_METRICS_ENDPOINT` |

| `--machine-tag` | | If not empty, add that string to Machine-Tag header in each response. Useful to track response from different machines using multiple P2P federated nodes | `$LOCALAI_MACHINE_TAG`, `$MACHINE_TAG` |

-{{< /table >}}

## Hardening Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--disable-predownload-scan` | `false` | If true, disables the best-effort security scanner before downloading any files | `$LOCALAI_DISABLE_PREDOWNLOAD_SCAN` |

@@ -106,11 +93,9 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--use-subtle-key-comparison` | `false` | If true, API Key validation comparisons will be performed using constant-time comparisons rather than simple equality. This trades off performance on each request for resilience against timing attacks | `$LOCALAI_SUBTLE_KEY_COMPARISON` |

| `--disable-api-key-requirement-for-http-get` | `false` | If true, a valid API key is not required to issue GET requests to portions of the web UI. This should only be enabled in secure testing environments | `$LOCALAI_DISABLE_API_KEY_REQUIREMENT_FOR_HTTP_GET` |

| `--http-get-exempted-endpoints` | `^/$,^/browse/?$,^/talk/?$,^/p2p/?$,^/chat/?$,^/text2image/?$,^/tts/?$,^/static/.*$,^/swagger.*$` | If `--disable-api-key-requirement-for-http-get` is overridden to true, this is the list of endpoints to exempt. Only adjust this in case of a security incident or as a result of a personal security posture review | `$LOCALAI_HTTP_GET_EXEMPTED_ENDPOINTS` |

-{{< /table >}}

## P2P Flags

-{{< table "table-responsive" >}}

| Parameter | Default | Description | Environment Variable |

|-----------|---------|-------------|----------------------|

| `--p2p` | `false` | Enable P2P mode | `$LOCALAI_P2P`, `$P2P` |

@@ -119,7 +104,6 @@ For more information on VRAM management, see [VRAM and Memory Management]({{%rel

| `--p2ptoken` | | Token for P2P mode (optional) | `$LOCALAI_P2P_TOKEN`, `$P2P_TOKEN`, `$TOKEN` |

| `--p2p-network-id` | | Network ID for P2P mode, can be set arbitrarily by the user for grouping a set of instances | `$LOCALAI_P2P_NETWORK_ID`, `$P2P_NETWORK_ID` |

| `--federated` | `false` | Enable federated instance | `$LOCALAI_FEDERATED`, `$FEDERATED` |

-{{< /table >}}

## Other Commands

@@ -142,20 +126,16 @@ Use `local-ai © 2023-2025 Ettore Di Giacinto

+

diff --git a/docs/themes/hugo-theme-relearn b/docs/themes/hugo-theme-relearn

deleted file mode 100644

index 72f933727e11..000000000000

--- a/docs/themes/hugo-theme-relearn

+++ /dev/null

@@ -1 +0,0 @@

-9a020e7eadb7d8203f5b01b18756c72d94773ec9

\ No newline at end of file

diff --git a/docs/themes/hugo-theme-relearn b/docs/themes/hugo-theme-relearn

new file mode 160000

index 000000000000..f69a085322cc

--- /dev/null

+++ b/docs/themes/hugo-theme-relearn

@@ -0,0 +1 @@

+Subproject commit f69a085322cc70b3e14577c0823c5bc781ec6bb2

diff --git a/docs/themes/lotusdocs b/docs/themes/lotusdocs

deleted file mode 160000

index 975da91e839c..000000000000

--- a/docs/themes/lotusdocs

+++ /dev/null

@@ -1 +0,0 @@

-Subproject commit 975da91e839cfdb5c20fb66961468e77b8a9f8fd