diff --git a/docs/Polkadot/economics/Combinatorial-candle-auction.png b/docs/Polkadot/economics/academic-research/Combinatorial-candle-auction.png

similarity index 100%

rename from docs/Polkadot/economics/Combinatorial-candle-auction.png

rename to docs/Polkadot/economics/academic-research/Combinatorial-candle-auction.png

diff --git a/docs/Polkadot/economics/Experimental-investigations.png b/docs/Polkadot/economics/academic-research/Experimental-investigations.png

similarity index 100%

rename from docs/Polkadot/economics/Experimental-investigations.png

rename to docs/Polkadot/economics/academic-research/Experimental-investigations.png

diff --git a/docs/Polkadot/economics/collective-members.png b/docs/Polkadot/economics/academic-research/collective-members.png

similarity index 100%

rename from docs/Polkadot/economics/collective-members.png

rename to docs/Polkadot/economics/academic-research/collective-members.png

diff --git a/docs/Polkadot/economics/4-gamification.md b/docs/Polkadot/economics/academic-research/gamification.md

similarity index 98%

rename from docs/Polkadot/economics/4-gamification.md

rename to docs/Polkadot/economics/academic-research/gamification.md

index b89ad046..65b48338 100644

--- a/docs/Polkadot/economics/4-gamification.md

+++ b/docs/Polkadot/economics/academic-research/gamification.md

@@ -2,6 +2,10 @@

title: Non-monetary incentives for collective members

---

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Stale | 06.10.2025 | -- |

+

Behavioral economics has demonstrated that non-monetary incentives can be powerful motivators, offering a viable alternative to financial rewards (see, e.g., [Frey & Gallus, 2015](https://www.bsfrey.ch/articles/C_600_2016.pdf)). This is especially true in environments where intrinsic motivation drives behavior. In such contexts, monetary incentives may even crowd out intrinsic motivation, ultimately reducing engagement ([Gneezy & Rustichini, 2000](https://academic.oup.com/qje/article-abstract/115/3/791/1828156)).

diff --git a/docs/Polkadot/economics/academic-research/index.md b/docs/Polkadot/economics/academic-research/index.md

new file mode 100644

index 00000000..ec57b4c0

--- /dev/null

+++ b/docs/Polkadot/economics/academic-research/index.md

@@ -0,0 +1,7 @@

+---

+title: Academic Research

+---

+

+import DocCardList from '@theme/DocCardList';

+

+<DocCardList />

diff --git a/docs/Polkadot/economics/academic-research/npos.md b/docs/Polkadot/economics/academic-research/npos.md

new file mode 100644

index 00000000..698b9162

--- /dev/null

+++ b/docs/Polkadot/economics/academic-research/npos.md

@@ -0,0 +1,10 @@

+---

+title: Approval-Based Committee Voting in Practice

+---

+

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Published in the Proceedings of the AAAI Conference on Artificial Intelligence | 06.10.2025 | [AAAI](https://ojs.aaai.org/index.php/AAAI/article/view/28807) / [arXiv](https://arxiv.org/abs/2312.11408) |

+

+

+We provide the first large-scale data collection of real-world approval-based committee elections. These elections have been conducted on the Polkadot blockchain as part of their Nominated Proof-of-Stake mechanism and contain around one thousand candidates and tens of thousands of (weighted) voters each. We conduct an in-depth study of application-relevant questions, including a quantitative and qualitative analysis of the outcomes returned by different voting rules. Besides considering proportionality measures that are standard in the multiwinner voting literature, we pay particular attention to less-studied measures of overrepresentation, as these are closely related to the security of the Polkadot network. We also analyze how different design decisions such as the committee size affect the examined measures.

\ No newline at end of file

diff --git a/docs/Polkadot/economics/parachain-auctions.png b/docs/Polkadot/economics/academic-research/parachain-auctions.png

similarity index 100%

rename from docs/Polkadot/economics/parachain-auctions.png

rename to docs/Polkadot/economics/academic-research/parachain-auctions.png

diff --git a/docs/Polkadot/economics/3-parachain-experiment.md b/docs/Polkadot/economics/academic-research/parachain-experiment.md

similarity index 98%

rename from docs/Polkadot/economics/3-parachain-experiment.md

rename to docs/Polkadot/economics/academic-research/parachain-experiment.md

index 897ff819..6f59162a 100644

--- a/docs/Polkadot/economics/3-parachain-experiment.md

+++ b/docs/Polkadot/economics/academic-research/parachain-experiment.md

@@ -2,6 +2,10 @@

title: Experimental Investigation of Parachain Auctions

---

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Under Review | 06.10.2025 | [SSRN Paper](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5109856) |

+

This entry focuses on experimentally examining the combinatorial candle auction as implemented in the Polkadot and Kusama protocol. Specifically, it compares its outcome with those of more traditional dynamic combinatorial auction formats currently in use.

diff --git a/docs/Polkadot/economics/2-parachain-theory.md b/docs/Polkadot/economics/academic-research/parachain-theory.md

similarity index 87%

rename from docs/Polkadot/economics/2-parachain-theory.md

rename to docs/Polkadot/economics/academic-research/parachain-theory.md

index 649dc9d5..ef5aa59e 100644

--- a/docs/Polkadot/economics/2-parachain-theory.md

+++ b/docs/Polkadot/economics/academic-research/parachain-theory.md

@@ -2,6 +2,10 @@

title: Theoretical Analysis of Parachain Auctions

---

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Under Review | 06.10.2025 | [SSRN Paper](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3846363) |

+

Polkadot uses a [candle auction format](https://wiki.polkadot.network/docs/en/learn-auction) to allocate parachain slots. A candle auction is a dynamic auction mechanism characterized by a randomly ending time. Such a random-closing rule affects equilibrium behavior, particularly in scenarios where bidders have front-running opportunities.

diff --git a/docs/Polkadot/economics/utility-token.png b/docs/Polkadot/economics/academic-research/utility-token.png

similarity index 100%

rename from docs/Polkadot/economics/utility-token.png

rename to docs/Polkadot/economics/academic-research/utility-token.png

diff --git a/docs/Polkadot/economics/5-utilitytokendesign.md b/docs/Polkadot/economics/academic-research/utilitytokendesign.md

similarity index 84%

rename from docs/Polkadot/economics/5-utilitytokendesign.md

rename to docs/Polkadot/economics/academic-research/utilitytokendesign.md

index d48f368b..a41d7d59 100644

--- a/docs/Polkadot/economics/5-utilitytokendesign.md

+++ b/docs/Polkadot/economics/academic-research/utilitytokendesign.md

@@ -2,6 +2,10 @@

title: Utility Token Design

---

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Under Review | 06.10.2025 | [SSRN Paper](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3954773) |

+

diff --git a/docs/Polkadot/economics/validator-selection.jpeg b/docs/Polkadot/economics/academic-research/validator-selection.jpeg

similarity index 100%

rename from docs/Polkadot/economics/validator-selection.jpeg

rename to docs/Polkadot/economics/academic-research/validator-selection.jpeg

diff --git a/docs/Polkadot/economics/1-validator-selection.md b/docs/Polkadot/economics/academic-research/validator-selection.md

similarity index 97%

rename from docs/Polkadot/economics/1-validator-selection.md

rename to docs/Polkadot/economics/academic-research/validator-selection.md

index 6ca4ff76..776655cb 100644

--- a/docs/Polkadot/economics/1-validator-selection.md

+++ b/docs/Polkadot/economics/academic-research/validator-selection.md

@@ -1,7 +1,11 @@

---

-title: Validator selection

+title: Validator Selection

---

+| Status | Date | Link |

+|----------------|------------|----------------------------------------------------------------------|

+| Published in Peer-Reviewed Journal | 06.10.2025 | [Omega](https://www.sciencedirect.com/science/article/abs/pii/S0305048323000336) / [SSRN](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4253515) |

+

Validator elections play a critical role in securing the network, placing nominators in charge of selecting the most trustworthy and competent validators. This responsibility is both complex and demanding. The vast amount of validator data, constantly growing, requires significant technical expertise and sustained engagement. As a result, the process can become overly cumbersome, leading many nominators to either avoid staking altogether or refrain from investing the time needed to evaluate the data thoroughly. In this context, effective tools are essential, not only to support nominators in making informed selections, but also to help ensure the network's long-term health and resilience.

diff --git a/docs/Polkadot/economics/applied-research/index.md b/docs/Polkadot/economics/applied-research/index.md

new file mode 100644

index 00000000..ce7f855d

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/index.md

@@ -0,0 +1,7 @@

+---

+title: Applied Research

+---

+

+import DocCardList from '@theme/DocCardList';

+

+<DocCardList />

diff --git a/docs/Polkadot/economics/applied-research/rfc10.md b/docs/Polkadot/economics/applied-research/rfc10.md

new file mode 100644

index 00000000..f0a266ce

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/rfc10.md

@@ -0,0 +1,33 @@

+# RFC-0010: Burn Coretime Revenue (accepted)

+

+| | |

+| --------------- | ------------------------------------------------------------------------------------------- |

+| **Start Date** | 19.07.2023 |

+| **Description** | Revenue from Coretime sales should be burned |

+| **Authors** | Jonas Gehrlein |

+

+## Summary

+

+The Polkadot UC will generate revenue from the sale of available Coretime. The question then arises: how should we handle these revenues? Broadly, there are two reasonable paths: burning the revenue and thereby removing it from total issuance, or diverting it to the Treasury. This Request for Comment (RFC) presents arguments favoring burning as the preferred mechanism for handling revenues from Coretime sales.

+

+## Motivation

+

+How to handle the revenue accrued from Coretime sales is an important economic question that influences the value of DOT and should be properly discussed before deciding on either option. Now is the best time to start this discussion.

+

+## Stakeholders

+

+Polkadot DOT token holders.

+

+## Explanation

+

+This RFC discusses the potential benefits of burning the revenue accrued from Coretime sales instead of diverting it to the Treasury. The arguments are as follows.

+

+It's in the interest of the Polkadot community to have a consistent and predictable Treasury income, because volatility in the inflow can be damaging, especially in situations when it is insufficient. As such, this RFC operates under the presumption of a steady and sustainable Treasury income flow, which is crucial for the Polkadot community's stability. The assurance of a predictable Treasury income, as outlined in a prior discussion [here](https://forum.polkadot.network/t/adjusting-the-current-inflation-model-to-sustain-treasury-inflow/3301), or through other equally effective measures, serves as a baseline assumption for this argument.

+

+Consequently, we need not concern ourselves with this particular issue here. This naturally raises the question: why should we introduce additional volatility to the Treasury by tying it to variable Coretime sales? It's worth noting that Coretime revenues tend to be low precisely when Treasury spending should ideally be ramped up. During periods of low Coretime utilization (indicated by lower revenue), the Treasury should spend more on projects and endeavours to increase the demand for Coretime. This pattern underscores that Coretime sales, by their very nature, are an inconsistent and unpredictable source of funding for the Treasury. Given the importance of maintaining a steady and predictable inflow, it's unnecessary to rely on another volatile mechanism. Some might argue that we could have both: a steady inflow (from inflation) and some added bonus from Coretime sales, but burning the revenue would offer further benefits as described below.

+

+- **Balancing Inflation:** While DOT as a utility token inherently profits from a (reasonable) net inflation, it also benefits from a deflationary force that functions as a counterbalance to the overall inflation. Right now, the only mechanism on Polkadot that burns fees is the one for underutilized DOT in the Treasury. Finding another, more direct target for burns makes sense, and the Coretime market is a good option.

+

+- **Clear incentives:** By burning the revenue accrued on Coretime sales, prices paid by buyers are clearly costs. This removes distortions from the market that might arise when the paid tokens end up elsewhere within the network. In that case, some actors might have secondary motives for influencing the price of Coretime sales, because they benefit down the line. For example, actors that actively participate in the Coretime sales are likely to also benefit from a higher Treasury balance, because they might frequently request funds for their projects. While those effects might appear far-fetched, they could accumulate. Burning the revenues makes sure that the prices paid are clearly costs to the actors themselves.

+

+- **Collective Value Accrual:** Following the previous argument, burning the revenue also generates some externality, because it reduces the overall issuance of DOT and thereby increases the value of each remaining token. In contrast to the aforementioned argument, this benefits all token holders collectively and equally. Therefore, I'd consider this the preferable option, because burning lets all token holders participate in Polkadot's success as Coretime usage increases.

\ No newline at end of file

diff --git a/docs/Polkadot/economics/applied-research/rfc104.md b/docs/Polkadot/economics/applied-research/rfc104.md

new file mode 100644

index 00000000..fcb4a10e

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/rfc104.md

@@ -0,0 +1,111 @@

+# RFC-0104: Stale Nominations and Declining Reward Curve (stale)

+

+| | |

+| --------------- | ------------------------------------------------------------------------------------------- |

+| **Start Date** | 28 October 2024 |

+| **Description** | Introduce a decaying reward curve for stale nominations in staking. |

+| **Authors** | Shawn Tabrizi & Jonas Gehrlein |

+

+## Summary

+

+This is a proposal to define stale nominations in Polkadot's staking system and introduce a mechanism to gradually reduce the rewards that these nominations receive. Upon implementation, this nudges all nominators to become more active and either update or renew their selected validators at least once per period to prevent losing rewards. In turn, it gives validators an incentive to behave in the best interest of the network and stay competitive. The decay factor and the length of the period before nominations are considered stale are chosen to not overburden nominators while still providing an incentive to regularly engage and revisit their selection.

+

+Apart from the technical specification of how to achieve this goal, we discuss why active nominators are important for the security of the network. Further, we present ample empirical evidence to substantiate the claim that the current lack of direct incentives results in stale nominators.

+

+Importantly, our proposal should neither be misinterpreted as a negative judgment on the current active set nor as a campaign to force out long-standing validators/nominators. Instead, we want to address the systemic issue of stale nominators that, with the growing age of the network, might at some point become a security risk. In that sense, our proposal aims to prevent a deterioration of our validator set before it is too late.

+

+## Motivation

+

+### Background

+

+Polkadot employs the Nominated Proof-of-Stake (NPoS) mechanism, which allows resources from both validators and nominators to be pooled to construct the active set. This increases the inclusivity for validators, because they do not necessarily need huge resources themselves but have the opportunity to convince nominators to entrust them with the important task of validating the Polkadot network.

+

+Without enforcing a strict (and significant) lower limit on the self-stake of validators, determining trustworthiness and competency is borderline impossible for an automated protocol. To cope with that challenge, we employ nominators as active agents that are able to navigate the fabric of the social layer and are tasked to scout for, engage with and finally select suitable validators. The aggregated choices of these nominators are used by the election algorithm to determine a robust active set of validators. For this effort and the associated risk, nominators are rewarded generously through staking rewards.

+

+### Why nominators must be active

+

+In this setup, the economic security of validators can be approximated by their self-stake, their future rewards (earned through commission), and the reputational costs incurred from causing a slash on their nominators. Although potentially significant in value, the latter factor is difficult to quantify. Arguably, however, and irrespective of the exact value, it diminishes the more time has passed since the last interaction between a nominator and their validator(s). This is because validators that were reputable in the past might not be in the future, and a growing distance between the two entities reduces their attachment to each other. In other words, the contribution of nominators to the security of the network is directly linked to how active they are in the process of engaging and scouting viable validators. Therefore, we not only require but also expect nominators to actively engage in the selection of validators to maximize their contribution to Polkadot's economic security.

+

+### Empirical evidence

+

+In the following, we present empirical evidence to illustrate that, in light of the mechanisms described above, nominator behavior can be improved upon. We include data from the first days of Polkadot up until the end of October 2024 (the full report can be found [here](https://jonasw3f.github.io/nominators_behavior_hosted/)), giving a comprehensive picture of current and historical behavior.

+

+In our analysis, a key result is that the currently active nominators, on average, changed their selection of validators around 546 days ago. Additionally, the vast majority only make a selection of validators once (when they become a nominator) and never again. This "set and forget" attitude directly translates into the backing of validators. To obtain a meaningful metric, we define the Weighted Backing Age (WBA) per validator. This metric calculates the age of their backing (from nominators) and weights it by the size of their stake. This is superior to just taking the average, because the activity of a nominator might be directly linked to their stake size (for more information, see the full report). Conducting this analysis reveals that the overall staleness of nominators translates into high values of WBA. While there are some validators activated by recent nominations, the average value remains rather high at 226 days (with numerous values above 1000 days). Observing the density function of the individual WBAs, we can conclude that 40% of the total stake is *at least* 180 days (6 months) old.

+

+### Implications of stale nominations

+

+The fact that a large share of nominators simply “set and forget” their selections can inadvertently introduce risks into the network. When early-nominated incumbents hold their positions as validators for extended periods, they effectively gain tenure. This dynamic could lead to complacency among established validators, with quality and performance potentially declining over time. Furthermore, this lack of turnover discourages competition, creating barriers for new validators who may offer better performance but struggle to attract nominations, as the network environment disproportionately favors seniority over merit.

+

+One might argue that nominators are naturally motivated to stay informed by the potential risk of slashing, ensuring they actively monitor and update their selections. And it is indeed possible that a selection made years ago is still optimal for a nominator today. However, we would counter these arguments by noting that nominators, as human individuals, are prone to biases that can lead to irrational behavior. To adequately protect themselves, nominators are required to secure themselves against highly unlikely but potentially detrimental events. Yet, the rarity of slashing incidents (which are even more rarely applied) makes it difficult for nominators to perceive a meaningful risk. Psychological phenomena like availability bias could cause decision-makers to underestimate both the probability and potential impact of such events, leaving them less prepared than they should be.

+

+After all, slashing is meant as a deterrent, not as a frequently applied mechanism. As protocol designers, we must remain vigilant and continuously optimize the network's security, even in the absence of major issues. By the time we notice a problem, it may already be too late.

+

+

+## Conclusion and TL;DR

+The NPoS system requires nominators to regularly engage and update their selections to meaningfully contribute to economic security. Additionally, they are compensated for their effort and the risk of potential slashes. However, these risks may be underestimated, leading many nominators to set their nominations once and never revisit them.

+

+As the network matures, this behavior could have serious security implications. Our proposal aims to introduce a gentle incentive for nominators to stay actively engaged in the staking system. A positive side effect is that having more engaged nominators encourages validators to consistently perform at their best across all key dimensions.

+

+

+## Stakeholders

+

+Primary stakeholders are:

+

+- Nominators

+- Validators

+

+## Explanation

+

+Detail-heavy explanation of the RFC, suitable for explanation to an implementer of the changeset. This should address corner cases in detail and provide justification behind decisions, and provide rationale for how the design meets the solution requirements.

+

+TODO Shawn

+

+## Drawbacks

+

+The proposed mechanism does come with some potential drawbacks:

+

+### Risk of Alienating Nominators

+- **Problem**: Some nominators, particularly those who don’t engage regularly, may feel alienated, especially if they experience reduced rewards due to lack of involvement, potentially without realizing there was an update.

+- **Response**: Nominators who fail to stay engaged are not fully performing the role that the network rewards them for. We plan to mitigate this by launching informational campaigns to ensure that nominators are aware of any updates and changes. Moreover, any adjustments in rewards would only take effect after six months from implementation, as we won’t apply these changes retroactively.

+

+### Potential for Bot Automation

+- **Problem**: There is a possibility that some nominators might use bots to automate the process, simply reconfirming their selections without actual engagement.

+- **Response**: In the worst-case scenario, automated reconfirmation would maintain the current state, with no improvement but also no additional detriment. Furthermore, running bots is not a feasible option for all nominators, as it requires effort that may exceed the effort of simply updating selections periodically. Recent advances have also made it easier for nominators to make informed choices, reducing the likelihood of relying on bots for this task.

+

+## Testing, Security, and Privacy

+

+Describe the impact of the proposal on these three high-importance areas - how implementations can be tested for adherence, effects that the proposal has on security and privacy per-se, as well as any possible implementation pitfalls which should be clearly avoided.

+

+## Performance, Ergonomics, and Compatibility

+

+Describe the impact of the proposal on the exposed functionality of Polkadot.

+

+### Performance

+

+Is this an optimization or a necessary pessimization? What steps have been taken to minimize additional overhead?

+

+### Ergonomics

+

+If the proposal alters exposed interfaces to developers or end-users, which types of usage patterns have been optimized for?

+

+### Compatibility

+

+Does this proposal break compatibility with existing interfaces, older versions of implementations? Summarize necessary migrations or upgrade strategies, if any.

+

+## Prior Art and References

+

+- Report: https://jonasw3f.github.io/nominators_behavior_hosted/

+- GitHub issue discussions:

+

+## Unresolved Questions

+

+Provide specific questions to discuss and address before the RFC is voted on by the Fellowship. This should include, for example, alternatives to aspects of the proposed design where the appropriate trade-off to make is unclear.

+

+## Future Directions and Related Material

+

+Describe future work which could be enabled by this RFC, if it were accepted, as well as related RFCs. This is a place to brain-dump and explore possibilities, which themselves may become their own RFCs.

+

+

+Open Questions:

+- Can we base it on the last selection of a nominator, or would t0 be the moment we activate the mechanism? The latter might cause a simultaneous drop in backing for many validators.

+- How would self-stake be treated? Can it become stale? It shouldn't.

\ No newline at end of file

diff --git a/docs/Polkadot/economics/applied-research/rfc146.md b/docs/Polkadot/economics/applied-research/rfc146.md

new file mode 100644

index 00000000..4927ac3a

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/rfc146.md

@@ -0,0 +1,47 @@

+# RFC-0146: Deflationary Transaction Fee Model for the Relay Chain and its System Parachains (accepted)

+

+| | |

+| --------------- | ------------------------------------------------------------------------------------------- |

+| **Start Date** | 20th May 2025 |

+| **Description** | This RFC proposes burning 80% of transaction fees on the Relay Chain and all its system parachains, adding to the existing deflationary capacity. |

+| **Authors** | Jonas Gehrlein |

+

+## Summary

+

+This RFC proposes **burning 80% of transaction fees** accrued on Polkadot’s **Relay Chain** and, more significantly, on all its **system parachains**. The remaining 20% would continue to incentivize Validators (on the Relay Chain) and Collators (on system parachains) for including transactions. The 80:20 split is motivated by preserving the incentives for Validators, which are crucial for the security of the network, while establishing a consistent fee policy across the Relay Chain and all system parachains.

+

+* On the **Relay Chain**, the change simply redirects the share that currently goes to the Treasury toward burning. Given the move toward a [minimal Relay](https://polkadot-fellows.github.io/RFCs/approved/0032-minimal-relay.html) ratified by RFC0032, a change to the fee policy will likely be symbolic for the future, but contributes to overall coherence.

+

+* On **system parachains**, the Collator share would be reduced from 100% to 20%, with 80% burned. Since the rewards of Collators do not significantly contribute to the shared security model, this adjustment should not negatively affect the network's integrity.

+

+This proposal extends the system's **deflationary direction** and enables DOT holders to directly capture value from increased overall activity on the network.

+

+## Motivation

+

+Historically, transaction fees on both the Relay Chain and the system parachains (with a few exceptions) have been relatively low. This is by design—Polkadot is built to scale and offer low-cost transactions. While this principle remains unchanged, growing network activity could still result in a meaningful accumulation of fees over time.

+

+Implementing this RFC ensures that potentially increasing activity, manifesting in more fees, is captured for all token holders. It further aligns how the network handles fees (such as those from transactions or for coretime usage). The arguments in support of this are close to those outlined in [RFC0010](https://polkadot-fellows.github.io/RFCs/approved/0010-burn-coretime-revenue.html). Specifically, burning transaction fees has the following benefits:

+

+### Compensation for Coretime Usage

+

+System parachains do not participate in open-market bidding for coretime. Instead, they are granted a special status through governance, allowing them to consume network resources without explicitly paying for them. Burning transaction fees serves as a simple and effective way to compensate for the revenue that would otherwise have been generated on the open market.

+

+### Value Accrual and Deflationary Pressure

+

+By burning the transaction fees, the system effectively reduces the token supply and thereby increases the scarcity of the native token. This deflationary pressure can increase the token's long-term value and ensures that the value captured is passed on equally to all existing token holders.

+

+

+This proposal requires only minimal code changes, making it inexpensive to implement, yet it introduces a consistent policy for handling transaction fees across the network. Crucially, it positions Polkadot for a future where fee burning could serve as a counterweight to an otherwise inflationary token model, ensuring that value generated by network usage is returned to all DOT holders.

+

+## Stakeholders

+

+* **All DOT Token Holders**: Benefit from reduced supply and direct value capture as network usage increases.

+

+* **System Parachain Collators**: This proposal effectively reduces the income currently earned by system parachain Collators. However, the impact on the status quo is negligible, as fees earned by Collators have been minimal (around $1,300 monthly across all system parachains, based on data from November 2024 to April 2025). The vast majority of their compensation comes from Treasury reimbursements handled through bounties. As such, we do not expect this change to have any meaningful effect on Collator incentives or behavior.

+

+* **Validators**: Remain unaffected, as their rewards stay unchanged.

+

+

+## Sidenote: Fee Assets

+

+Some system parachains may accept other assets deemed **sufficient** for transaction fees. This has no implication for this proposal as the **asset conversion pallet** ensures that DOT is ultimately used to pay for the fees, which can be burned.

\ No newline at end of file

diff --git a/docs/Polkadot/economics/applied-research/rfc17.md b/docs/Polkadot/economics/applied-research/rfc17.md

new file mode 100644

index 00000000..4ff9d5f3

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/rfc17.md

@@ -0,0 +1,184 @@

+# RFC-0017: Coretime Market Redesign (accepted)

+

+| | |

+| --------------- | ------------------------------------------------------------------------------------------- |

+| **Original Proposition Date** | 05.08.2023 |

+| **Revision Date** | 04.06.2025 |

+| **Description** | This RFC redesigns Polkadot's coretime market to ensure that coretime is efficiently priced through a clearing-price Dutch auction. It also introduces a mechanism that guarantees current coretime holders the right to renew their cores outside the market, albeit at the market price with an additional charge. This design aligns renewal and market prices, preserving long-term access for current coretime owners while ensuring that market dynamics exert sufficient pressure on all purchasers, resulting in an efficient allocation. |

+| **Authors** | Jonas Gehrlein |

+

+## Summary

+

+This document proposes a restructuring of the bulk markets in Polkadot's coretime allocation system to improve efficiency and fairness. The proposal suggests splitting the `BULK_PERIOD` into three consecutive phases: `MARKET_PERIOD`, `RENEWAL_PERIOD`, and `SETTLEMENT_PERIOD`. This structure enables market-driven price discovery through a clearing-price Dutch auction, followed by renewal offers during the `RENEWAL_PERIOD`.

+

+With all coretime consumers paying a unified price, we propose removing all liquidity restrictions on cores purchased either during the initial market phase or renewed during the renewal phase. This allows a meaningful `SETTLEMENT_PERIOD`, during which final agreements and deals between coretime consumers can be orchestrated on the social layer—complementing the agility this system seeks to establish.

+

+In the new design, we obtain a uniform price, the `clearing_price`, which anchors new entrants and current tenants. To complement market-based price discovery, the design includes a dynamic reserve price adjustment mechanism based on actual core consumption. Together, these two components ensure robust price discovery while mitigating price collapse in cases of slight underutilization or collusive behavior.

+

+## Motivation

+

+After exposing the initial system introduced in [RFC-1](https://github.com/polkadot-fellows/RFCs/blob/6f29561a4747bbfd95307ce75cd949dfff359e39/text/0001-agile-coretime.md) to real-world conditions, several weaknesses have become apparent. These lie especially in the fact that cores captured at very low prices are removed from the open market and can effectively be retained indefinitely, as renewal costs are minimal. The key issue here is the absence of price anchoring, which results in two divergent price paths: one for the initial purchase on the open market, and another fully deterministic one via the renewal bump mechanism.

+

+This proposal addresses these issues by anchoring all prices to a value derived from the market, while still preserving necessary privileges for current coretime consumers. The goal is to produce robust results across varying demand conditions (low, high, or volatile).

+

+In particular, this proposal introduces the following key changes:

+

+* **Reverses the order** of the market and renewal phases: all cores are first offered on the open market, and only then are renewal options made available.

+* **Introduces a dynamic `reserve_price`**, which is the minimum price coretime can be sold for in a period. This price adjusts based on consumption and does not rely on market participation.

+* **Makes unproductive core captures sufficiently expensive**, as all cores are exposed to the market price.

+

+The premise of this proposal is to offer a straightforward design that discovers the price of coretime within a period as a `clearing_price`. Long-term coretime holders still retain the privilege to keep their cores **if** they can pay the price discovered by the market (with some premium for that privilege). The proposed model aims to strike a balance between leveraging market forces for allocation while operating within defined bounds. In particular, prices are capped *within* a `BULK_PERIOD`, which gives some certainty about prices to existing teams. It must be noted, however, that under high demand, prices could increase exponentially *between* multiple market cycles. This is a necessary feature to ensure proper price discovery and efficient coretime allocation.

+

+Ultimately, the framework proposed here seeks to adhere to all requirements originally stated in RFC-1.

+

+## Stakeholders

+

+Primary stakeholder sets are:

+

+- Protocol researchers, developers, and the Polkadot Fellowship.

+- Polkadot Parachain teams both present and future, and their users.

+- Polkadot DOT token holders.

+

+## Explanation

+

+### Overview

+

+The `BULK_PERIOD` has been restructured into two primary segments: the `MARKET_PERIOD` and the `RENEWAL_PERIOD`, along with an auxiliary `SETTLEMENT_PERIOD`. The latter does not require any active participation from the coretime system chain except to execute transfers of ownership between market participants. A significant departure from the current design lies in the timing of renewals, which now occur after the market phase. This adjustment aims to harmonize renewal prices with their market counterparts, ensuring a more consistent and equitable pricing model.

+

+### Market Period (14 days)

+

+During the market period, core sales are conducted through a well-established **clearing-price Dutch auction** that features a `reserve_price`. Since the auction format is a descending clock, the starting price is initialized at the `opening_price`. The price then descends linearly over the duration of the `MARKET_PERIOD` toward the `reserve_price`, which serves as the minimum price for coretime within that period.

+

+Each bidder is expected to submit both their desired price and the quantity (i.e., number of cores) they wish to purchase. To secure these acquisitions, bidders must deposit an amount equivalent to their bid multiplied by the chosen quantity, in DOT. Bidders are always allowed to post a bid at or below the current descending price, but never above it.

+

+The market reaches resolution once all quantities have been sold or the `reserve_price` is reached. In the former case, the `clearing_price` is set equal to the price that sold the last unit. If cores remain unsold, the `clearing_price` is set to the `reserve_price`. This mechanism yields a uniform price that all buyers pay. Among other benefits discussed in the Appendix, this promotes truthful bidding—meaning the optimal strategy is simply to submit one's true valuation of coretime.

+
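The resolution rule above can be sketched as follows. This is a minimal illustration, not the pallet implementation: the function name `clearing_price` and the representation of bids as `(price, quantity)` pairs are assumptions made for this example, and renewals, tie-breaking, and the descending-clock constraint on bid prices are ignored.

```python
def clearing_price(bids, cores_available, reserve_price):
    """Return the uniform price paid by all winners.

    bids: list of (price, quantity) pairs (hypothetical representation).
    The price of the bid that sells the last core becomes the clearing
    price; if cores remain unsold, the reserve price applies.
    """
    remaining = cores_available
    # Bids below the reserve price can never win; serve the highest bids first.
    eligible = sorted((b for b in bids if b[0] >= reserve_price), reverse=True)
    for price, quantity in eligible:
        remaining -= quantity
        if remaining <= 0:
            return price  # this bid sold the last available core
    return reserve_price  # not all cores were sold
```

For instance, with bids of `(100, 2)`, `(80, 2)`, and `(60, 1)` competing for three cores, the second bid sells the last core, so every winner pays 80.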

+The `opening_price` is determined by: `opening_price = max(MIN_OPENING_PRICE, PRICE_MULTIPLIER * reserve_price)`. We recommend `opening_price = max(150, 3 * reserve_price)`.

+
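As a rough illustration of the opening price rule and the linear descent, consider the sketch below. The function names and the discretization into 28 price levels (12 hours each over 14 days, with the final level equal to the `reserve_price`) are assumptions for this example only; the RFC fixes neither.

```python
def opening_price(reserve_price, min_opening_price=150.0, price_multiplier=3.0):
    """opening_price = max(MIN_OPENING_PRICE, PRICE_MULTIPLIER * reserve_price)."""
    return max(min_opening_price, price_multiplier * reserve_price)


def clock_price(elapsed, duration, reserve_price, levels=28):
    """Current descending price after `elapsed` time units of `duration`.

    The price moves linearly from the opening price to the reserve price
    across `levels` discrete steps (assumed value), each held for
    duration / levels. The last level is the reserve price itself.
    """
    start = opening_price(reserve_price)
    # Index of the current price level, capped at the last level.
    level = min(int(elapsed * levels / duration), levels - 1)
    return start - (start - reserve_price) * level / (levels - 1)
```

With a `reserve_price` of 100 DOT, the clock starts at 300 DOT and rests at 100 DOT during the final 12 hours of a 14-day `MARKET_PERIOD`.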

+### Renewal Period (7 days)

+

+The renewal period guarantees current tenants the privilege to renew their core(s), even if they did not win in the auction (i.e., did not submit a bid at or above the `clearing_price`) or did not participate at all.

+

+All current tenants who obtained fewer cores from the market than they have the right to renew have 7 days to decide whether they want to renew their core(s). Once this information is known, the system has everything it needs to conclusively allocate all cores and assign ownership. In cases where the combined number of renewals and auction winners exceeds the number of available cores, renewals are served first and the remaining cores are then allocated from highest to lowest bidder until all are assigned (see the section "Details on Some Mechanics" for more information). This means that when demand exceeds supply (and some tenants decide to renew), some bidders may not receive the coretime they expected from the auction.

+

+While this mechanism is necessary to ensure that current coretime users are not suddenly left without an allocation, potentially disrupting their operations, it may distort price discovery in the open market. Specifically, it could mean that a winning bidder is displaced by a renewal decision.

+

+Since bidding is straightforward, can be regarded as static (it requires only one transaction), and can therefore be trivially automated, we view renewals as a safety net and want to encourage all coretime users to participate in the auction. To that end, we introduce a financial incentive to bid by increasing the renewal price to `clearing_price * (1 + PENALTY)` (e.g., with a `PENALTY` of 30%). This penalty must be high enough to create a sufficient incentive for teams to prefer bidding over passively renewing.

+

+**Note:** Importantly, the `PENALTY` only applies when the number of unique bidders in the auction plus current tenants with renewal rights exceeds the number of available cores. If total demand is lower than the number of offered cores, the `PENALTY` is set to 0%, and renewers pay only the `clearing_price`. This reflects the fact that we would not expect the `clearing_price` to exceed the `reserve_price` even with all coretime consumers participating in the auction. To avoid managing reimbursements, the 30% `PENALTY` is automatically applied to all renewers as soon as the combined count of unique bidders and potential renewers surpasses the number of available cores.

+
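The renewal pricing rule can be illustrated with a short sketch. It assumes that the `PENALTY` is a surcharge on top of the `clearing_price` (so a 0% penalty leaves the price unchanged, as the note above describes); the function name and argument names are hypothetical.

```python
def renewal_price(clearing_price, unique_bidders, potential_renewers,
                  cores_available, penalty=0.30):
    """Price a renewing tenant pays in the current period.

    Assumption: the penalty is applied as a surcharge, i.e. the renewal
    price is clearing_price * (1 + penalty). It only applies when unique
    bidders plus tenants with renewal rights exceed the available cores.
    """
    contested = unique_bidders + potential_renewers > cores_available
    return clearing_price * (1 + penalty) if contested else clearing_price
```

For example, with 8 unique bidders, 5 potential renewers, and 10 cores, a clearing price of 100 DOT yields a renewal price of 130 DOT; with only 4 bidders, renewers pay 100 DOT.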

+### Reserve Price Adjustment

+

+After each `RENEWAL_PERIOD`, once all renewal decisions have been collected and cores are fully allocated, the `reserve_price` is updated to capture the demand in the next period. The goal is to ensure that prices adjust smoothly in response to demand fluctuations—rising when demand exceeds targets and falling when it is lower—while avoiding excessive volatility from small deviations.

+

+We define the following parameters:

+

+* `reserve_price_t`: Reserve price in the current period

+* `reserve_price_{t+1}`: Reserve price for the next period (final value after adjustments)

+* `consumption_rate_t`: Fraction of cores sold (including renewals) out of the total available in the current period

+* `TARGET_CONSUMPTION_RATE`: Target ratio of sold-to-available cores (we propose 90%)

+* `K`: Sensitivity parameter controlling how aggressively the price responds to deviations (we propose values between 2 and 3)

+* `P_MIN`: Minimum reserve price floor (we propose 1 DOT to prevent runaway downward spirals and computational issues)

+* `MIN_INCREMENT`: Minimum absolute increment applied when the market is fully saturated (i.e., 100% consumption; proposed value: 100 DOT)

+

+We update the price according to the following rule:

+

+```

+price_candidate_t = reserve_price_t * exp(K * (consumption_rate_t - TARGET_CONSUMPTION_RATE))

+```

+

+We then ensure that the price does not fall below `P_MIN`:

+

+```

+price_candidate_t = max(price_candidate_t, P_MIN)

+```

+

+If `consumption_rate_t == 100%`, we apply an additional adjustment:

+

+```

+if (price_candidate_t - reserve_price_t < MIN_INCREMENT) {

+ reserve_price_{t+1} = reserve_price_t + MIN_INCREMENT

+} else {

+ reserve_price_{t+1} = price_candidate_t

+}

+```

+

+In other words, we adjust the `reserve_price` using the exponential scaling rule, except in the special case where consumption is at 100% but the resulting price increase would be less than `MIN_INCREMENT`. In that case, we instead apply the fixed minimum increment. This exception ensures that the system can recover more quickly from prolonged periods of low prices.

+

+We argue that in a situation with persistently low prices and a sudden surge in real demand (i.e., full core consumption), such a jump is both warranted and economically justified.

+
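Putting the three steps together, the update rule might look like the following sketch. The function name and default values are assumptions picked from the proposed ranges above (`K = 2`, target 90%, `P_MIN = 1`, `MIN_INCREMENT = 100`); the comparison `>= 1.0` is used instead of an exact equality check to stay robust with floating-point input.

```python
import math


def next_reserve_price(reserve_price, consumption_rate, target=0.9, k=2.0,
                       p_min=1.0, min_increment=100.0):
    """Compute reserve_price_{t+1} from reserve_price_t and consumption_rate_t."""
    # Exponential adjustment around the target consumption rate.
    candidate = reserve_price * math.exp(k * (consumption_rate - target))
    # Never fall below the price floor.
    candidate = max(candidate, p_min)
    # At full consumption, guarantee at least the minimum absolute increment.
    if consumption_rate >= 1.0 and candidate - reserve_price < min_increment:
        return reserve_price + min_increment
    return candidate
```

With a reserve price of 10 DOT and full consumption, the exponential rule alone would raise the price by roughly 2 DOT, so the fixed increment applies and the next reserve price is 110 DOT. At a reserve price of 1,000 DOT, the exponential increase (about 221 DOT) already exceeds `MIN_INCREMENT` and is used directly.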

+### Settlement Period / Secondary Market (7 days)

+

+The remaining 7 days of a sales cycle serve as a settlement period, during which participants have ample time to trade coretime on secondary markets before the onset of the next `BULK_PERIOD`. This proposal makes no assumptions about the structure of these markets, as they are entirely operated on the social layer and managed directly by buyers and sellers. In this context, maintaining restrictions on the resale of renewed cores in the secondary market appears unjustified—especially given that prices are uniform and market-driven. In fact, such constraints could be harmful in cases where the primary market does not fully achieve efficiency.

+

+We therefore propose lifting all restrictions on the resale or slicing of cores in the secondary market.

+

+## Additional Considerations

+

+### New Track: Coretime Admin

+

+To enable rapid response, we propose that the parameters of the model be directly accessible by governance. These include:

+

+* `P_MIN`

+* `K`

+* `PRICE_MULTIPLIER`

+* `MIN_INCREMENT`

+* `TARGET_CONSUMPTION_RATE`

+* `PENALTY`

+* `MIN_OPENING_PRICE`

+

+This setup should allow us to adjust the parameters in a timely manner, within the duration of a `BULK_PERIOD`, so that changes can take effect before the next period begins.

+

+### Transition to the new Model

+

+Upon acceptance of this RFC, we should make sure to transition as smoothly as possible to the new design.

+

+* All teams that own cores in the current system should be endowed with the same number of cores in the new system, with the ability to renew them starting from the first period.

+* The initial `reserve_price` should be chosen sensibly to avoid distortions in the early phases.

+* A sufficient number of cores should be made available on the market to ensure enough liquidity for price discovery to function properly.

+

+### Details on Some Mechanics

+

+* The price descends linearly from the `opening_price` to the `reserve_price` over the duration of the `MARKET_PERIOD`. Importantly, each discrete price level should be held for a sufficiently long interval (e.g., 6–12 hours).

+* A potential issue arises when we experience demand spikes after prolonged periods of low demand (which result in low reserve prices). In such cases, the entire price range between the `reserve_price` and the upper bound (i.e., the `opening_price`) may lie below the willingness to pay of many bidders. If this affects most participants, demand will concentrate at the upper bound of the Dutch auction, making front-running a profitable strategy, either by excessively tipping bidding transactions or through explicit collusion with block producers.

+ To mitigate this, we propose preventing the market from closing at the `opening_price` prematurely. Even if demand exceeds available cores at this level, we continue collecting all orders. Then, we randomize winners instead of using a first-come-first-served approach. Additionally, we may break up bulk orders and treat them as separate bids. This still gives a higher chance to bidders willing to buy larger quantities, but avoids all-or-nothing outcomes. These steps diminish the benefit of tipping or collusion, since bid timing no longer affects allocation. While we expect such scenarios to be the exception, it's important to note that this will not negatively impact current tenants, who always retain the safety net of renewal. After a few periods of maximum bids at maximum capacity, the range should span wide enough to capture demand within its bounds.

+* One implication of granting the renewal privilege after the `MARKET_PERIOD` is that some bidders, despite bidding above the `clearing_price`, may not receive coretime. We believe this is justified, because the harm of displacing an existing project is greater than that of temporarily preventing a new project from entering (if there are no cores available). Additionally, this inefficiency is compensated for by the `PENALTY` paid by the entities causing it. We need, however, additional rules to resolve the allocation issues. These are:

+ 1. Bidders who already hold renewable cores cannot be displaced by the renewal decision of another party.

+ 2. Among those who *can* be displaced, we begin with the lowest submitted bids.

+* If a current tenant wins cores on the market, they forfeit the right to renew those specific cores. For example, if an entity currently holds three cores and wins two in the market, it may only opt to renew one. The only way to increase the number of cores at the end of a `BULK_PERIOD` is to acquire them entirely through the market.

+* Bids **below** the current descending price should always be allowed. In other words, teams shouldn't have to wait idly for the price to drop to their target.

+* Bids below the current descending price can be **raised**, but only up to the current clock price.

+* Bids **above** the current descending price are **not allowed**. This is a key difference from a simple *kth*-price auction and helps prevent sniping.

+* All cores that remain unallocated after the `RENEWAL_PERIOD` are transferred to the On-Demand Market.

+

+### Implications

+

+* The introduction of a single price (`clearing_price`) provides a consistent anchor for all available coretime. This serves as a safeguard against price divergence, preventing scenarios where entities acquire cores at significantly below-market rates and keep them for minimal costs.

+* With the introduction of the `PENALTY`, it is always financially preferable for teams to participate in the auction. By bidding their true valuation, they maximize their chance of winning a core at the lowest possible price without incurring the penalty.

+* In this design, it is virtually impossible to "accidentally" lose cores, since renewals occur after the market phase and are guaranteed for current tenants.

+* Prices within a `BULK_PERIOD` are bounded above by the `opening_price`. That is, the maximum a renewer could ever pay within a round is `opening_price * (1 + PENALTY)`. This provides teams with ample time to prepare and secure the necessary funds in anticipation of potential price increases. By incorporating reserve price adjustment into their planning, teams can anticipate worst-case future price increases.

+

+## Appendix

+

+### Further Discussion Points

+

+- **Reintroduction of Candle Auctions**: Polkadot has gathered vast experience with candle auctions, with more than 200 auctions conducted over more than two years. [Our study](https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5109856), which analyzes the results in detail, reveals that the mechanism is both efficient and extracts (nearly) optimal revenue. This provides confidence to use it to determine the winners instead of a descending clock auction. Notably, this change solely affects the bidding process and winner determination. Core components, such as the k-th price, reserve price, and maximum price, remain unaffected.

+

+### Insights: Clearing Price Dutch Auctions

+Having all bidders pay the market clearing price offers some benefits and disadvantages.

+

+- Advantages:

+ - **Fairness**: All bidders pay the same price.

+ - **Active participation**: Because bidders are protected from overbidding (winner's curse), they are more likely to engage and reveal their true valuations.

+ - **Simplicity**: A single price is easier to work with for pricing renewals later.

+ - **Truthfulness**: There is no need to try to game the market by waiting with bidding. Bidders can just bid their valuations.

+ - **No sniping**: As prices are descending, a player cannot wait until the end to place a high bid. At the time of bidding, they can bid at most the current descending price.

+- Disadvantages:

+ - **(Potentially) Lower Revenue**: While the theory predicts revenue-equivalence between a uniform price and pay-as-bid type of auction, slightly lower revenue for the former type is observed empirically. Arguably, revenue maximization (i.e., squeezing out the maximum willingness to pay from bidders) is not the priority for Polkadot. Instead, it is interested in efficient allocation and the other benefits illustrated above.

+ - **(Technical) Complexity**: Instead of making a final purchase within the auction, the bid is only a deposit. Some refunds might happen after the auction is finished. This might pose additional challenges from the technical side (e.g., storage requirements).

+

+### Prior Art and References

+

+This RFC builds extensively on the available ideas put forward in [RFC-1](https://github.com/polkadot-fellows/RFCs/blob/6f29561a4747bbfd95307ce75cd949dfff359e39/text/0001-agile-coretime.md).

+

+Additionally, I want to express a special thanks to [Samuel Haefner](https://samuelhaefner.github.io/), [Shahar Dobzinski](https://sites.google.com/site/dobzin/), and Alistair Stewart for fruitful discussions and helping me structure my thoughts.

\ No newline at end of file

diff --git a/docs/Polkadot/economics/applied-research/rfc97.md b/docs/Polkadot/economics/applied-research/rfc97.md

new file mode 100644

index 00000000..7df1d94e

--- /dev/null

+++ b/docs/Polkadot/economics/applied-research/rfc97.md

@@ -0,0 +1,168 @@

+# RFC-0097: Unbonding Queue (accepted)

+

+| | |

+| --------------- | ------------------------------------------------------------------------------------------- |

+| **Date** | 19.06.2024 |

+| **Description** | This RFC proposes a safe mechanism to scale the unbonding time from staking on the Relay Chain proportionally to the overall unbonding stake. This approach significantly reduces the expected duration for unbonding, while ensuring that a substantial portion of the stake is always available for slashing validators that behave maliciously within a 28-day window. |

+| **Authors** | Jonas Gehrlein & Alistair Stewart |

+

+## Summary

+

+This RFC proposes a flexible unbonding mechanism for tokens that are locked from [staking](https://wiki.polkadot.network/docs/learn-staking) on the Relay Chain (DOT/KSM), aiming to enhance user convenience without compromising system security.

+

+Locking tokens for staking ensures that Polkadot is able to slash tokens backing misbehaving validators. When changing the locking period, we still need to make sure that Polkadot can slash enough tokens to deter misbehaviour. This means that not all tokens can be unbonded immediately. However, we can still allow some tokens to be unbonded quickly.

+

+The new mechanism leads to a significantly reduced unbonding time on average, by queuing up new unbonding requests and scaling their unbonding duration relative to the size of the queue. The unbonding duration of a new request ranges from a minimum of 2 days, when the queue is comparatively empty, to the conventional 28 days, if the sum of requests (in terms of stake) exceeds some threshold. In scenarios between these two bounds, the unbonding duration scales proportionately. The new mechanism will never be worse than the current fixed 28 days.

+

+In this document we also present an empirical analysis by retrospectively fitting the proposed mechanism to the historic unbonding timeline and show that the average unbonding duration would drastically reduce, while still being sensitive to large unbonding events. Additionally, we discuss implications for UI, UX, and conviction voting.

+

+Note: Our proposition solely focuses on the locks imposed from staking. Other locks, such as governance, remain unchanged. Also, this mechanism should not be confused with the already existing feature of [FastUnstake](https://wiki.polkadot.network/docs/learn-staking#fast-unstake), which lets users unstake tokens immediately that have not received rewards for 28 days or longer.

+

+As an initial step to gauge its effectiveness and stability, it is recommended to implement and test this model on Kusama before considering its integration into Polkadot, with appropriate adjustments to the parameters. In the following, however, we limit our discussion to Polkadot.

+

+## Motivation

+

+Polkadot has one of the longest unbonding periods among all Proof-of-Stake protocols, because security is the most important goal. Staking on Polkadot is still attractive compared to other protocols because of its above-average staking APY. However, the long unbonding period harms usability and deters potential participants who want to contribute to the security of the network.

+

+The current length of the unbonding period imposes significant costs for any entity that even wants to perform basic tasks such as a reorganization / consolidation of their stashes, or updating their private key infrastructure. It also limits participation of users that have a large preference for liquidity.

+

+The combination of long unbonding periods and high returns has led to the proliferation of [liquid staking](https://www.bitcoinsuisse.com/learn/what-is-liquid-staking), where parachains or centralised exchanges offer users their staked tokens before the 28-day unbonding period is over, either in the original DOT/KSM form or as derivative tokens. Liquid staking is harmless if few tokens are involved, but it could result in many validators being selected by a few entities if a large fraction of DOT were involved. This may lead to centralization (see [here](https://dexola.medium.com/is-ethereum-about-to-get-crushed-by-liquid-staking-30652df9ec46) for more discussion on threats of liquid staking) and an opportunity for attacks.

+

+The new mechanism greatly increases the competitiveness of Polkadot, while maintaining sufficient security.

+

+

+## Stakeholders

+

+- Every DOT/KSM token holder

+

+## Explanation

+

+Before diving into the details of how to implement the unbonding queue, we give readers context about why Polkadot has a 28-day unbonding period in the first place. The reason for it is to prevent long-range attacks (LRAs), which become theoretically possible if more than 1/3 of validators collude. In essence, an LRA describes the inability of users who disconnect from the consensus at time t0 and reconnect later to realize that validators which were legitimate at t0, but dropped out in the meantime, are not to be trusted anymore. That means, for example, that a user syncing the state could be fooled into trusting validators that fell outside the active set of validators after t0 and are building a competing, malicious chain (fork).

+

+LRAs of longer than 28 days are mitigated by the use of trusted checkpoints, which are assumed to be no more than 28 days old. A new node that syncs Polkadot will start at the checkpoint and look for proofs of finality of later blocks, signed by 2/3 of the validators. In an LRA fork, some of the validator sets may be different but only if 2/3 of some validator set in the last 28 days signed something incorrect.

+

+If we detect an LRA of no more than 28 days with the current unbonding period, then we should be able to detect misbehaviour from over 1/3 of validators whose nominators are still bonded. The stake backing these validators is a considerable fraction of the total stake (empirically, it is around 0.287). If we allowed more than this stake to unbond, without checking who it was backing, then the LRA might be free of cost for an attacker. The proposed mechanism allows up to half this stake to unbond within 28 days. This halves the amount of tokens that can be slashed, but this is still very high in absolute terms. For example, at the time of writing (19.06.2024) this would translate to around 120 million DOT.

+

+Attacks other than an LRA, such as backing incorrect parachain blocks, should be detected and slashed within 2 days. This is why the mechanism has a minimum unbonding period.

+

+In practice, an LRA does not affect clients who follow consensus more frequently than every 2 days, such as running nodes or bridges. However, any time a node syncs Polkadot, it could be misled if an attacker is able to connect to it first.

+

+In short, in light of the huge benefits obtained, we consider it acceptable to keep only a fraction of the total stake of validators slashable against LRAs at any given time.

+

+## Mechanism

+

+When a user ([nominator](https://wiki.polkadot.network/docs/learn-nominator) or validator) decides to unbond their tokens, the tokens do not become instantly available. Instead, they enter an *unbonding queue*. The following specification illustrates how the queue works, given a user wants to unbond some portion of their stake denoted as `new_unbonding_stake`. We also store a variable, `max_unstake`, that tracks how much stake we allow to unbond potentially earlier than 28 eras (28 days on Polkadot and 7 days on Kusama).

+

+To calculate `max_unstake`, we record for each era how much stake was used to back the lowest-backed 1/3 of validators. We store this information for the last 28 eras and let `min_lowest_third_stake` be the minimum of this over the last 28 eras.

+`max_unstake` is determined by `MIN_SLASHABLE_SHARE` x `min_lowest_third_stake`. In addition, we can use `UPPER_BOUND` and `LOWER_BOUND` as variables to scale the unbonding duration of the queue.

+

+At any time we store `back_of_unbonding_queue_block_number` which expresses the block number when all the existing unbonders have unbonded.

+

+Let's assume a user wants to unbond some of their stake, i.e., `new_unbonding_stake`, and issues the request at some arbitrary block number denoted as `current_block_number`. Then:

+

+```

+unbonding_time_delta = new_unbonding_stake / max_unstake * UPPER_BOUND

+```

+

+This number needs to be added to the `back_of_unbonding_queue_block_number` under the conditions that the resulting unbonding block does not undercut `current_block_number + LOWER_BOUND` or exceed `current_block_number + UPPER_BOUND`.

+

+```

+back_of_unbonding_queue_block_number = max(current_block_number, back_of_unbonding_queue_block_number) + unbonding_time_delta

+```

+

+This determines at which block the user has their tokens unbonded, making sure that it is in the limit of `LOWER_BOUND` and `UPPER_BOUND`.

+

+```

+unbonding_block_number = min(UPPER_BOUND, max(back_of_unbonding_queue_block_number - current_block_number, LOWER_BOUND)) + current_block_number

+```

+

+Ultimately, the user's tokens are unbonded at `unbonding_block_number`.

+
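The three formulas above can be combined into one small sketch. It is an illustration under stated assumptions, not the staking pallet's code: the function name `request_unbond` is hypothetical, the bounds use the block counts recommended in this RFC, and floating-point division is used for brevity where an on-chain implementation would use integer or fixed-point arithmetic.

```python
LOWER_BOUND = 28_800    # blocks, roughly 2 days
UPPER_BOUND = 403_200   # blocks, roughly 28 days


def request_unbond(new_unbonding_stake, max_unstake,
                   current_block_number, back_of_unbonding_queue_block_number):
    """Process one unbonding request.

    Returns the updated back-of-queue block number and the block at which
    the requester's tokens are unbonded.
    """
    # Queue time contributed by this request, proportional to its stake.
    unbonding_time_delta = new_unbonding_stake / max_unstake * UPPER_BOUND
    # An empty (stale) queue restarts at the current block.
    back = max(current_block_number, back_of_unbonding_queue_block_number)
    back += unbonding_time_delta
    # Clamp the waiting time between LOWER_BOUND and UPPER_BOUND.
    wait = min(UPPER_BOUND, max(back - current_block_number, LOWER_BOUND))
    return back, current_block_number + wait
```

For example, with `max_unstake` of 1,000 units and an empty queue at block 0, a request for 10 units waits the minimum of 28,800 blocks, a request for 500 units waits 201,600 blocks, and a request for 2,000 units is capped at 403,200 blocks.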

+### Proposed Parameters

+There are a few constants to be exogenously set. They are up for discussion, but we make the following recommendation:

+- `MIN_SLASHABLE_SHARE`: `1/2` - This is the share of stake backing the lowest 1/3 of validators that is slashable at any point in time. It offers a trade-off between security and unbonding time. Half is a sensible choice. Here, we have sufficient stake to slash while allowing for a short average unbonding time.

+- `LOWER_BOUND`: 28800 blocks (or 2 eras): This value represents a minimum unbonding time of 2 days for any stake.

+- `UPPER_BOUND`: 403200 blocks (or 28 eras): This value represents the maximum time a user faces when unbonding. It equals the current unbonding time and should be familiar to users.

+

+### Rebonding

+

+Users that chose to unbond might want to cancel their request and rebond. There is no security loss in doing this, but with the scheme above, it could imply that a large unbond increases the unbonding time for everyone else later in the queue. When the large stake is rebonded, however, the participants later in the queue move forward and can unbond more quickly than originally estimated. It would require an additional extrinsic by the user though.

+

+Thus, we should store the `unbonding_time_delta` with the unbonding account. If it rebonds when it is still unbonding, then this value should be subtracted from `back_of_unbonding_queue_block_number`. So unbonding and rebonding leaves this number unaffected. Note that we must store `unbonding_time_delta`, because in later eras `max_unstake` might have changed and we cannot recompute it.

+

+

+### Empirical Analysis

+We can use the proposed unbonding queue calculation, with the recommended parameters, and simulate the queue over the course of Polkadot's unbonding history. Instead of doing the analysis on a per-block basis, we calculate it on a daily basis. To simulate the unbonding queue, we require two inputs: the ratio between the daily total stake of the lowest-backed third of validators and the daily total stake (which determines `max_unstake`), and the daily sum of newly unbonded tokens. Due to the [NPoS algorithm](https://wiki.polkadot.network/docs/learn-phragmen), the first number has only small variations, so we used a constant as an approximation (0.287), determined by sampling a number of historical eras. At this point, we want to thank Parity's Data team for allowing us to leverage their data infrastructure in these analyses.

+

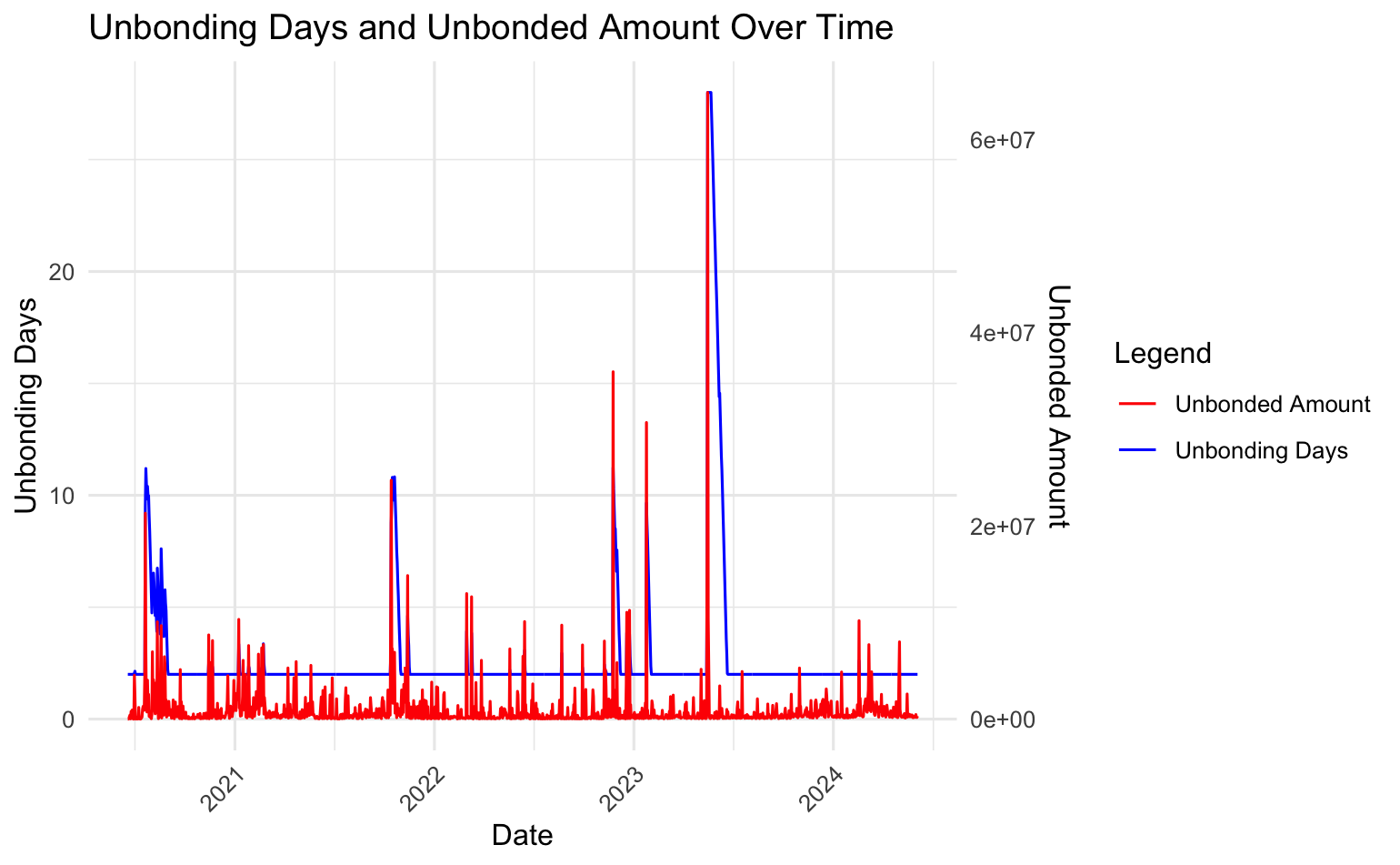

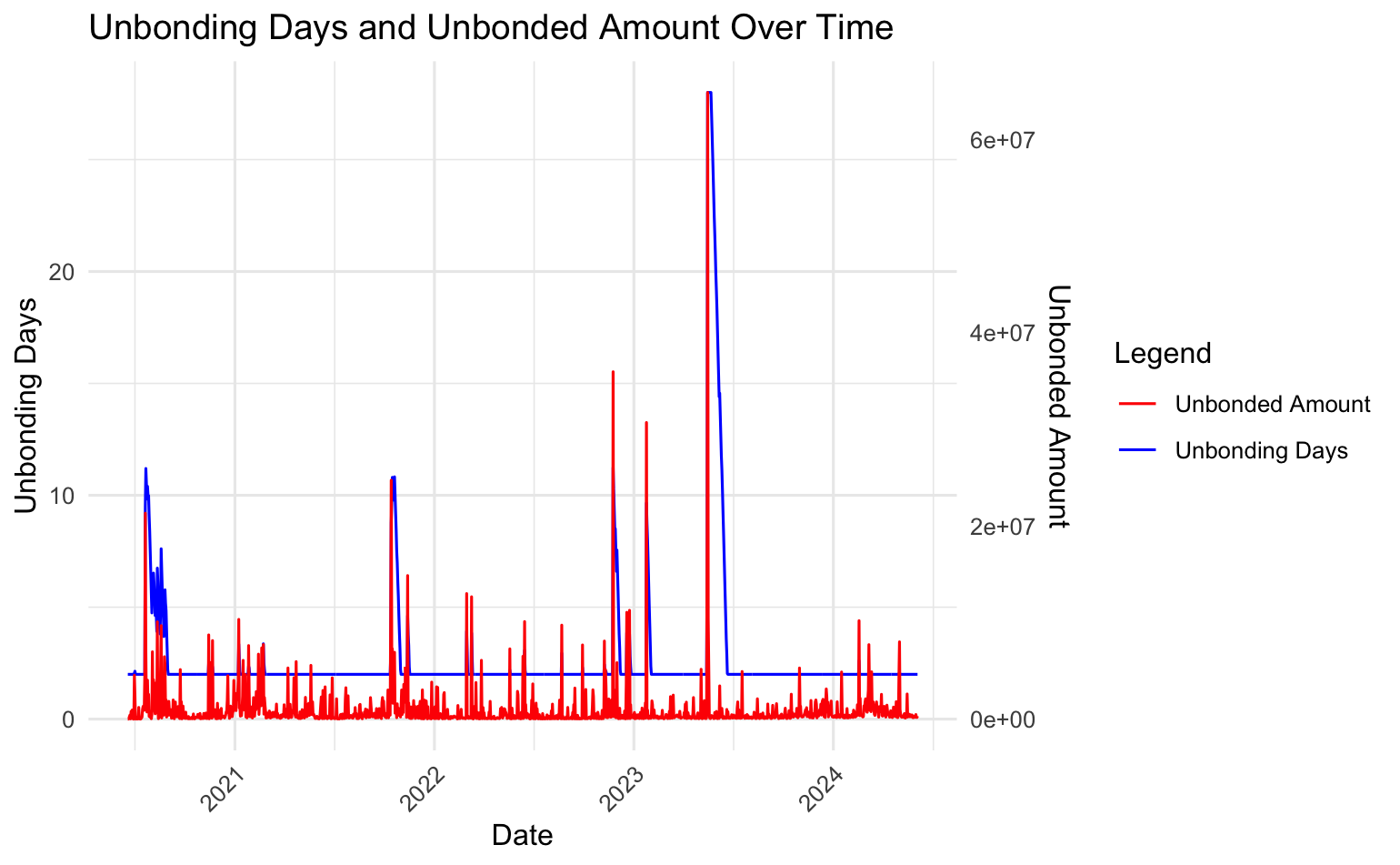

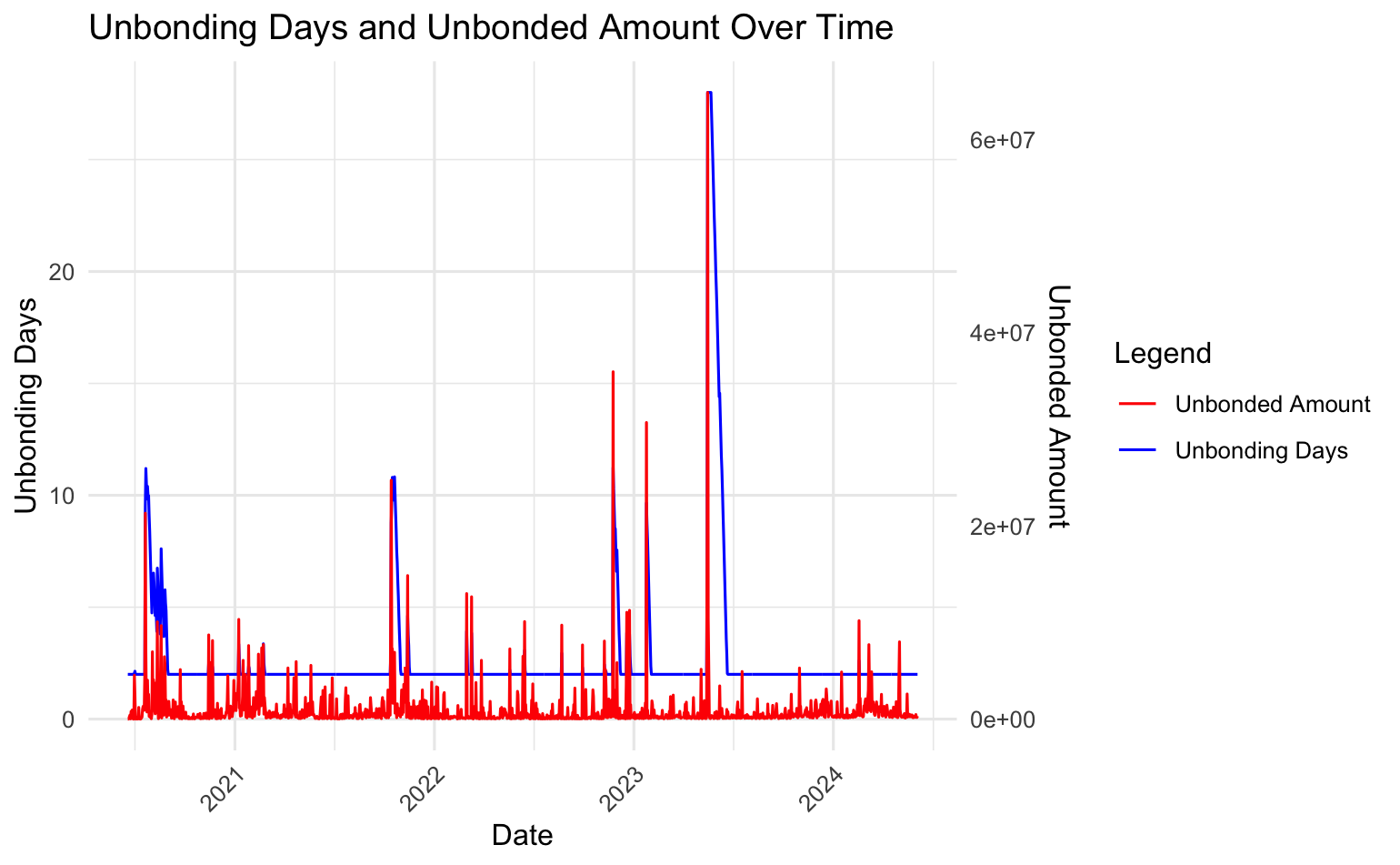

+The following graph plots said statistics.

+

+

+

+

-

-Note that the curves shift depending on the value of $\chi_{ideal}$. Alternative parameter configurations are available [here](https://www.desmos.com/calculator/2om7wkewhr).

-

-

-

-### Payment details

-

-Honest validators participate in several protocols, and the system either rewards their successful involvement or penalizes their absence, depending on which is easier to detect. From this perspective, rewards are directed specifically toward validators (and their nominators) for *validity checking* and *block production*, as these activities are the most reliably observable.

-

-In the validity checking branch, the system rewards:

-

-* A parachain validator for each validity statement it issues for a parachain block.

-

-In the block production branch, the system rewards:

-

-* A block producer for producing a non-uncle block on the relay chain

-* A block producer for referencing a previously unreferenced uncle block

-* The original producer of each referenced uncle block

-

-These are thus considered payable actions. The protocol defines a point system in which a validator earns a fixed number of points for each payable action executed. At the end of each era, validators receive compensation proportional to the total points they have earned.[^2]

-

-__Adjustable parameters:__ The proposal outlines the following point system:

-

-* 20 points for each validity statement issued,

-* 20 points for each (non-uncle) block produced,

-* 2 points awarded to the block producer for referencing a previously unreferenced uncle,

-* 1 point awarded to the original producer of each referenced uncle.[^3]

-

-In each era $e$, and for each validator $v$, the system maintains a counter $c_v^e$ that tracks the number of points earned by $v$. Let $c^e =

-\sum_{\text{validators } v} c_v^e$ be the total number of points earned by all validators in era $e$, and let $P^e_{NPoS}$ denote the target total payout to all validators and their nominators for that era (see the previous section on the inflation model for details on how $P^e_{NPoS}$ is determined). Then, at the end of era $e$, the payout to validator $v$ and their nominators is given by

-

-$$

-\frac{c_v^e}{c^e} \cdot P^e_{NPoS}

-$$

-

-The counters can also be used to discourage unresponsiveness: if a validator earns close to zero points from payable actions during an era, or any other defined time period, they may be removed from the active set. See the note on Slashings for further details.
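
As a worked illustration of the point system and the payout formula above, consider the following Python sketch. The function and key names are hypothetical; the point values are the ones listed in the proposal, and `total_payout` stands for $P^e_{NPoS}$.

```python
# Point values from the list above (adjustable by governance).
POINTS = {
    "validity_statement": 20,
    "block_produced": 20,
    "uncle_referenced": 2,
    "uncle_produced": 1,
}


def era_points(actions):
    """Sum the points for a validator's payable actions (action -> count)."""
    return sum(POINTS[action] * count for action, count in actions.items())


def era_payouts(points_by_validator, total_payout):
    """Split total_payout in proportion to each validator's share c_v^e / c^e."""
    total_points = sum(points_by_validator.values())
    if total_points == 0:
        # No payable actions were recorded in this era.
        return {v: 0.0 for v in points_by_validator}
    return {
        v: total_payout * c / total_points
        for v, c in points_by_validator.items()
    }
```

For example, a validator with 60 points in an era where all validators together earned 100 points would receive 60% of the era's target payout.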

-

-### Distribution of payment within a validator slot

-

-In any given era, the stake of a nominator $n$ is typically distributed across multiple validators, for example, 70% to validator 1, 20% to validator 2, and 10% to validator 3. This distribution is determined automatically by the NPoS validator election mechanism, which runs at the beginning of each era (see the notes on NPoS for further details).

-

-If there are $m$ validators, the stake distribution partitions the global stake pool into $m$ slots, one per validator. The stake in each validator slot consists of 100% of that validator's stake, along with a fraction (possibly zero) of the stake from each nominator who supported that validator. A validator's stake is sometimes referred to as "self-stake" to distinguish it from the *validator slot's stake*, which is typically much larger.

-

-The previous subsection describes how payouts are assigned to each validator slot during a given era, while this subsection explains how each slot's payout is further distributed among the validator and their nominators. Ultimately, a nominator's payout for a given era equals the sum of their payouts from all slots in which they hold a stake.

-

-Since neither nominators nor validators can individually control the stake partitioning into validator slots, which is determined automatically by the validator election mechanism, nor the exact payouts, which depend on global parameters such as the staking rate, participants cannot know in advance the exact reward they will receive during an era. In the future, nominators may be able to specify their desired interest rates. This feature is currently disabled to simplify the optimization problem solved by the validator election mechanism.

-

-The mechanism utilizes as much of a nominator's available stake as possible. That is, if at least one of their approved validators is elected, their entire available stake will be used. The rationale is that greater stake contributes to stronger security.

-

-In contrast, validator slots are compensated equally for equal work, and NOT in proportion to their stake levels. If a validator slot A has less stake than validator slot B, the participants in slot A receive higher rewards per staked DOT. This design encourages nominators to adjust their preferences in subsequent eras to support less popular validators, thereby promoting a more balanced stake distribution across validator slots, one of the core objectives of the validator election mechanism (see notes on NPoS for more details). This also increases the likelihood that new validator candidates can be elected, supporting decentralization within systems.

-

-Within a validator slot, payments are handled as follows: First, validator $v$ receives a "commission fee", an amount entirely set by $v$ and publicly announced prior to the era, before nominators submit their votes. This fee is intended to cover $v$'s operational costs. The remaining payout is then distributed among all participants in the slot, including $v$ and nominators, in proportion to their stake. In other words, validator $v$ is considered as two entities for the purpose of payment: a non-staked entity that receives a fixed commission, and a staked entity treated like any other nominator and rewarded pro rata based on stake. A higher commission fee increases $v$'s total payout while reducing returns for nominators; however, since the fee is announced in advance, nominators tend to support validators with lower fees (assuming other factors are equal).
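
The split within a slot can be illustrated with a short Python sketch. It assumes the commission is an absolute amount no larger than the slot's payout; the function and parameter names are hypothetical.

```python
def split_slot_payout(slot_payout, commission, stakes, validator):
    """Distribute a slot's payout among the validator and its nominators.

    stakes maps each participant (including the validator's self-stake)
    to the stake they hold in this slot.
    """
    # The commission is paid first; the rest is shared pro rata by stake.
    remainder = slot_payout - commission
    total_stake = sum(stakes.values())
    rewards = {
        who: remainder * stake / total_stake for who, stake in stakes.items()
    }
    # The validator is paid as two entities: commission plus staked share.
    rewards[validator] += commission
    return rewards
```

With a slot payout of 100, a commission of 10, and stakes of 10 (validator), 60, and 30, the validator receives 19 and the two nominators receive 54 and 27.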

-

-Under this scheme, the market regulates itself. A validator candidate who sets a high commission fee risks failing to attract sufficient votes for election, while validators with strong reputations for reliability and performance may justify charging higher fees, an outcome that is considered fair. For nominators, backing less popular or riskier validators may result in higher relative rewards, which aligns with expected risk-reward dynamics.

-

-:::note Additional notes

-

-Finality gadget [GRANDPA](https://github.com/w3f/consensus/blob/master/pdf/grandpa.pdf)

-

-Block production protocol [BABE](Polkadot/protocols/block-production/Babe.md)

-

-The [NPoS scheme](Polkadot/protocols/NPoS/index.md) for selecting validators

-:::

-

-**For any inquiries or questions, please contact** [Jonas Gehrlein](/team_members/Jonas.md)

-

-

-[^1]: The rewards perceived by block producers from transaction fees (and tips) do not come from minting, but from tx senders. Similarly, the rewards perceived by reporters and fishermen for detecting a misconduct do not come from minting but from the slashed party. This is why these terms do not appear in the formula above.

-

-[^2]: The exact DOT value of each point is not known in advance, as it depends on the total number of points earned by all validators during that era. This design ensures that the total payout per era aligns with the inflation model defined above, rather than being directly tied to the number of payable actions executed.

-

-[^3]: Note that what matters here is not the absolute number of points, but rather the point ratios, which determine the relative rewards for each payable action. These point values are parameters subject to adjustment by governance.

\ No newline at end of file

diff --git a/docs/Polkadot/token-economics/payments-and-inflation.png b/docs/Polkadot/token-economics/payments-and-inflation.png

deleted file mode 100644

index d00e36b7..00000000

Binary files a/docs/Polkadot/token-economics/payments-and-inflation.png and /dev/null differ

diff --git a/docs/Polkadot/token-economics/transaction-fees.md b/docs/Polkadot/token-economics/transaction-fees.md

deleted file mode 100644

index 33b26362..00000000

--- a/docs/Polkadot/token-economics/transaction-fees.md

+++ /dev/null

@@ -1,124 +0,0 @@

----

-title: Transaction Fees

----

-

-## Relay-chain transaction fees and per-block transaction limits

-

-With a clearer understanding of how payments and inflation occur, the next step is to discuss the desired properties of relay-chain transactions.

-

-

-

-1. Each relay-chain block should be processed efficiently, even on less powerful nodes, to prevent delays in block production.

-2. The growth rate of the relay-chain state is bounded. 2′. Ideally, the absolute size of the relay-chain state is also bounded.

-3. Each block *guarantees availability* for a fixed amount of operational, high-priority transactions, such as misconduct reports.

-4. Blocks are typically underfilled, which helps handle sudden spikes in activity and minimize long inclusion times.

-5. Fees evolve gradually enough, allowing the cost of a particular transaction to be predicted accurately within a few minutes.

-6. For any transaction, its fee must be strictly higher than the reward perceived by the block producer for processing it. Otherwise, the block producer may be incentivized to fill blocks with fake transactions.

-7. For any transaction, the processing reward perceived by the block producer should be high enough to incentivize its inclusion, yet low enough to discourage the creation of a fork to capture transactions from a previous block. In practice, this means the marginal reward for including an additional transaction must exceed its marginal processing cost, while the total reward for producing a full block remains only slightly greater than that for an empty block, even when tips are taken into account.

-

-For now, the focus is on satisfying properties 1 through 6 (excluding 2′), with plans to revisit properties 2′ and 7 in a future update. Further analysis of property 2 is also among upcoming steps.

-

-The number of transactions processed in a relay-chain block can be regulated in two ways: by imposing resource limits and by adjusting transaction fees. Properties 1 through 3 are satisfied through strict resource limits, while properties 4 through 6 are addressed via fee adjustments. The following subsections present these two techniques in detail.

-

-### Limits on resource usage

-

-When processing a transaction, four types of resources may be consumed: length, time, memory, and state. Length refers to the size of the transaction data in bytes within the relay-chain block. Time represents the duration required to import the transaction, including both I/O operations and CPU usage. Memory indicates the amount of memory utilized during transaction execution, while state refers to the increase in on-chain storage due to the transaction.

-

-Since state storage imposes a permanent cost on the network, unlike the other three resources consumed only once, it makes sense to apply rent or other Runtime mechanisms to better align fees with the true cost of a transaction and help keep the state size bounded. An alternative approach is to regulate state growth via fees rather than enforcing a hard limit. Still, implementing a strict cap remains a sensible safeguard against edge cases where the state might expand uncontrollably.

-

-**Adjustable parameters.** For now, the following limits on resource usage apply when processing a block. These parameters may be refined through governance based on real-world data or more advanced mechanisms.

-

-* Length: 5MB

-* Time: 2 seconds

-* Memory: 10 GB