TaskomaticDaemon - Failure occured during job recovery #5556

Comments

|

Can you please provide complete logs? |

|

@sysy69 can you please enable log level debug and provide the complete from |

|

We're seeing the same issue on 2022.05. However, I don't see a log4j.properties in that directory. Should this be the edit? from |

|

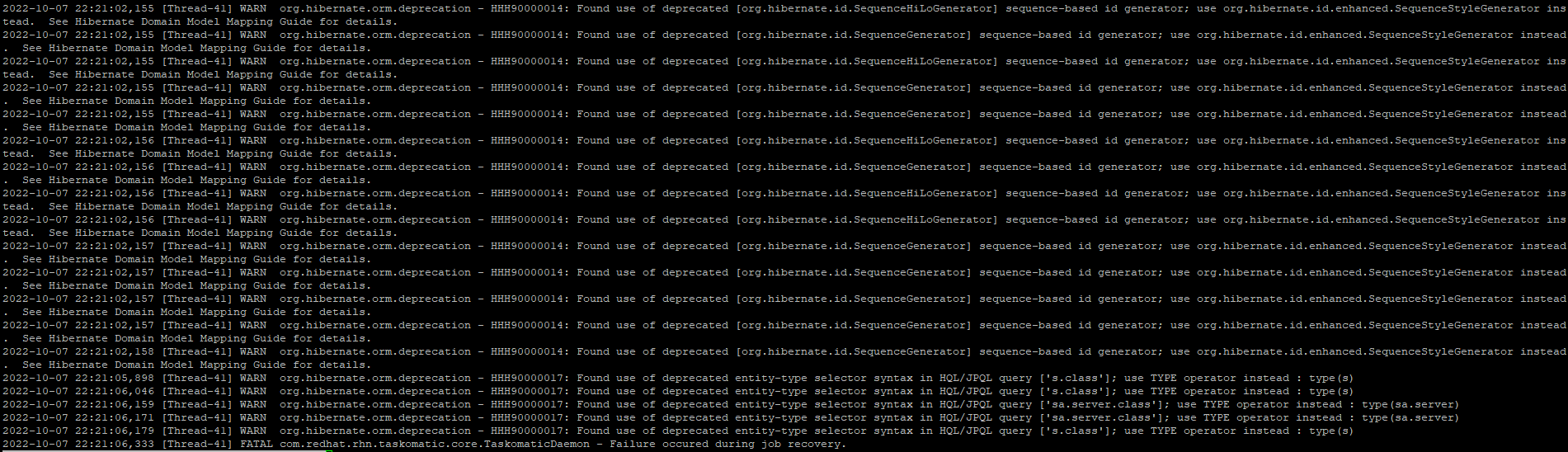

I see this leading up to the fatal entries: |

|

We updated to 2022.06 and the issue persists. We can get taskomatic to generate this error: by calling this api: |

|

The last successful action before this started failing for us was: as seen in https://uyuniserver/rhn/manager/admin/runtime-status |

@hbrown-uiowa if

Logs will be really verbose so after this test you may want to disable them, to save disk space (so remove |

|

I enabled the debug settings from above and restarted, but that didn't seem to add any additional logging for me: https://gist.github.com/megamaced/7eb5a7d4e6e825da5bebdee166d00243 |

|

Is there any workaround for this issue? We can't sync repodata. |

|

still broken in 2022.08 |

|

I suspect the issue is with the Quartz scheduler. Is there a way to clean out the jobs?

uyuni=# select * from qrtz_simple_triggers;

uyuni=# select trigger_name,trigger_group,job_name,trigger_state from qrtz_triggers WHERE trigger_name LIKE '%retry%'; |

|

Taskomatic started after running: We are waiting to see if it stops in the same part of the schedule when it gets back to it. |

|

We had the same issue... |

|

same problem... |

|

cc @mackdk |

|

If the problem recurs, can someone add the log info that was requested? Cleaning out the entries in the DB only deals with the symptoms (and we're not even sure that it's the right thing to do). Since clearing out those rows in the DB, we haven't had it recur and our test system is syncing well on 2022.08. |

|

Unfortunately, the statements from @ddholstad99 did not work for me. The entries were removed, but no tasks are running. Version 2022.08 |

|

Running Uyuni 2022.08, I have tried the statements shared by @ddholstad99, but I am still stuck with the same issue.

uyuni=# select * from qrtz_simple_triggers;

uyuni=# select trigger_name,trigger_group,job_name,trigger_state from qrtz_triggers WHERE trigger_name LIKE '%retry%';

In Uyuni when clicking on Admin, Task Schedules, I get this:

I see this in /var/log/rhn/rhn_taskomatic_daemon.log:

Please let me know if any other logs/information would help. |

|

@darrynl please follow the instructions here: #5556 (comment) . |

|

@darrynl @sysy69 please try to upgrade Uyuni and let me know if the issue is still present. If it is, please follow #5556 (comment) . Thanks! |

|

Unfortunately, I wasn't able to reproduce the bug, and the current logs are not enough to explain the cause of the issue. Since there has been no answer for a long time, I suppose the issue has been fixed, so I'll close the bug. Feel free to re-open it if you still see the error.

Problem description

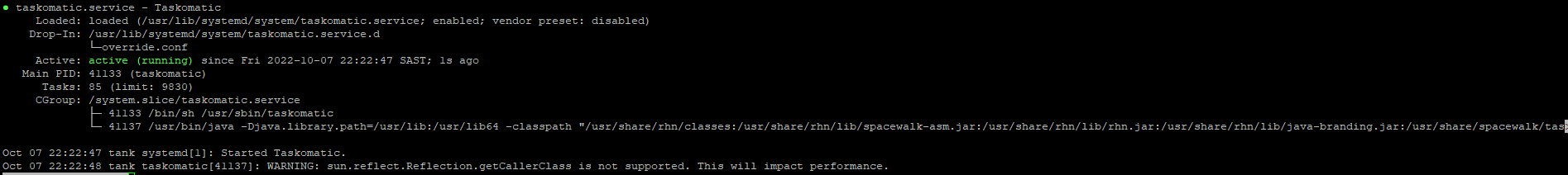

Taskomatic is crashing every 10 seconds.

Version of Uyuni Server and Proxy (if used)

Information for package Uyuni-Server-release:

Repository : uyuni-server-stable

Name : Uyuni-Server-release

Version : 2022.05-180.1.uyuni1

Arch : x86_64

Vendor : obs://build.opensuse.org/systemsmanagement:Uyuni

Support Level : Level 3

Installed Size : 1.4 KiB

Installed : Yes

Status : up-to-date

Source package : Uyuni-Server-release-2022.05-180.1.uyuni1.src

Summary : Uyuni Server

Description :

Uyuni lets you efficiently manage physical, virtual,

and cloud-based Linux systems. It provides automated and cost-effective

configuration and software management, asset management, and system

provisioning.

Details about the issue

Taskomatic stopped working; no updates or other changes had been made.

error: [Thread-43] FATAL com.redhat.rhn.taskomatic.core.TaskomaticDaemon - Failure occured during job recovery.

/var/log/rhn/rhn_taskomatic_daemon.log
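
To check how often the daemon hits this failure, a small script can count the matching FATAL entries in the log. This is only a minimal sketch and not part of Uyuni: the function name `count_recovery_failures` is hypothetical, and it assumes nothing about the log format beyond the message text quoted above appearing verbatim on each failing line.

```python
from pathlib import Path

# Message text copied from the FATAL entry quoted above (spelling as logged).
MARKER = "Failure occured during job recovery"


def count_recovery_failures(log_text: str) -> int:
    """Count log lines that are FATAL and contain the job-recovery message."""
    return sum(
        1
        for line in log_text.splitlines()
        if "FATAL" in line and MARKER in line
    )


if __name__ == "__main__":
    # Path taken from this report; adjust if your logs live elsewhere.
    log_path = Path("/var/log/rhn/rhn_taskomatic_daemon.log")
    if log_path.exists():
        text = log_path.read_text(errors="replace")
        print(count_recovery_failures(text))
```

Running it before and after a restart gives a rough idea of whether the failure is still recurring.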
