
Do not duplicate release download #375

Comments

Hi! A Discussion was started a couple of months ago to try to define what a duplicate is, because there were some different ideas: #294. It's not settled yet, but it should be given some priority in the near future.


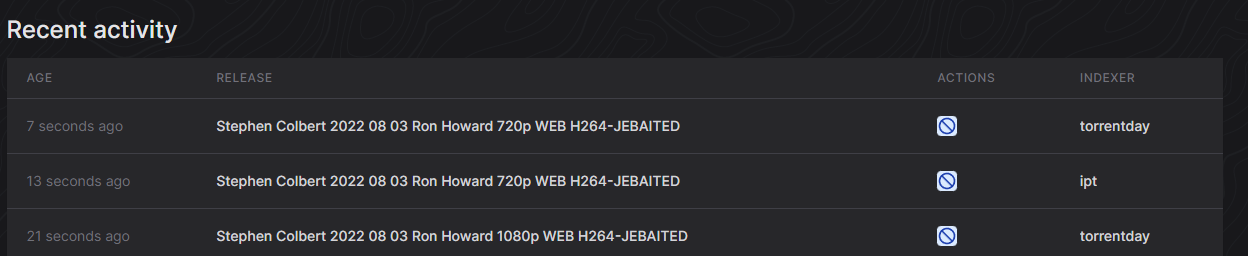

@zze0s I'd say this example is a good candidate for what a duplicate is (referring to the 720p release in the picture). One idea would be to ignore releases with the exact same release name as a previous release. I think that would catch 90% of all cases where this is relevant. EDIT: A "bigger" picture showing that the issue is indeed present.

Just to follow up on my last post (and seeing a lot of duplicates recently in my clients), what I meant was something simple like this (pseudocode):

    release_name = 'Cool Movie 2022 720p WEB h264-ReleaseGroup'
    dedup_releases = True  # config variable to enable or disable sending duplicates to the client

    # db and push_release are placeholders; the name is bound as a query parameter
    already_approved = db.query("SELECT 1 FROM approved_release WHERE name = ?", release_name)
    if dedup_releases and already_approved:
        pass  # drop this release: it already exists in the DB and dedup_releases is true
    else:
        push_release(release_name)  # push to client

I think with this rudimentary approach we can catch 98% of all these duplicates getting approved and pushed to clients. This approach obviously doesn't take into account things like quality, but IMO it's better than nothing.

Great indeed. I believe this could somehow be connected with cross-seed. I'm not certain how it would behave when the first torrent is still being downloaded and the next announce comes in. It should only happen once the first is completed, right? (Looking at the screenshots posted by @jkaberg.)

@stxmqa Fetching completion information from the download client adds complexity. What we are really asking for is that the next announce is ignored even if the first release has only just started downloading. Some indexers upload the same content at almost exactly the same time (e.g. within a second), so this would prevent the duplication.
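To illustrate the "ignore the later announce, even if the first has only just started" idea, here is a minimal Python sketch. It is not autobrr code: `SeenReleases`, `claim`, and `simulate_announces` are hypothetical names, and it assumes that a duplicate simply means an identical release name. The check and the registration happen under one lock, so announces that arrive within the same second cannot both pass.

```python
import threading


class SeenReleases:
    """In-memory registry of release names that were already accepted (hypothetical helper)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._seen = set()

    def claim(self, release_name: str) -> bool:
        """Return True only for the first caller that claims this exact name."""
        with self._lock:
            if release_name in self._seen:
                return False
            self._seen.add(release_name)
            return True


def simulate_announces(release_name, trackers):
    """Announce one release from several trackers concurrently.

    Returns the list of trackers whose announce was accepted.
    """
    seen = SeenReleases()
    accepted = []

    def handle(tracker):
        if seen.claim(release_name):
            accepted.append(tracker)

    threads = [threading.Thread(target=handle, args=(t,)) for t in trackers]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return accepted
```

Whichever tracker wins the race is arbitrary; the point is only that exactly one announce gets through, without asking the download client anything.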

Actually, what I'm referring to is looking up the autobrr database: if the incoming release has already been "registered" there and the autobrr config option (to not process "similar" releases) is checked, then don't send the release to the client (radarr/sonarr/torrent client etc.). So my suggestion doesn't involve fetching information from any client; everything is done "in house". Sure, that is not ideal in some cases, but it should bring down complexity quite a bit.
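A runnable sketch of that "in house" lookup, using Python's built-in `sqlite3` module. Everything here is an assumption for illustration: the `approved_release` table name is borrowed from the pseudocode earlier in the thread, and `open_db` and `should_push` are hypothetical helpers, not part of autobrr.

```python
import sqlite3


def open_db(path=":memory:"):
    """Open the database and make sure the (assumed) approved_release table exists."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS approved_release (name TEXT PRIMARY KEY)")
    return conn


def should_push(conn, release_name, dedup_releases=True):
    """Register the release and report whether it should be sent to the client.

    The primary key makes the insert a no-op for a name that is already stored,
    so the check and the registration are a single statement.
    """
    cur = conn.execute(
        "INSERT OR IGNORE INTO approved_release (name) VALUES (?)",
        (release_name,),
    )
    conn.commit()
    first_time = cur.rowcount == 1
    # With dedup disabled, every release is pushed, duplicates included.
    return first_time or not dedup_releases
```

Usage would look like `if should_push(conn, name): push_release(name)`, where `push_release` stands for whatever sends the release to the client.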

I'm currently testing this approach:

    # Get the list of the last 20 releases via the API
    last20releases=$(curl -s "http://${AUTOBRR_HOST}:7474/api/release" -H "X-API-Token: ${AUTOBRR_API_KEY}" | jq -r '.data[].name')

    # Check whether the new release is in that list and exit with the appropriate code.
    # -x matches whole lines only, -F treats the name as a literal string rather than a regex.
    if echo "${last20releases}" | grep -qxF -- "${1}"; then
        echo "$(date -u) |${1}| already in last 20 releases, stopping" >> preventMultigrab.log
        exit 1
    else
        echo "$(date -u) |${1}| is being downloaded" >> preventMultigrab.log
        exit 0
    fi

I have the same issue as you with multiple indexers grabbing the same torrents. How is this script working for you?

Just go old school if you're looking to skip exact matches: `tee -a` into the same file, `grep` it, and rotate it once it hits 50 MB or some similarly unfathomable size.
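The same append, search, and rotate idea can be sketched in Python for anyone who prefers it to a shell pipeline. `seen_before` is a hypothetical helper, the 50 MB default mirrors the figure above, and keeping a single rotated generation (`.1`) is an arbitrary choice.

```python
import os


def seen_before(release_name, log_path, max_bytes=50 * 1024 * 1024):
    """Return True if release_name is already logged; otherwise log it and return False."""
    if os.path.exists(log_path):
        if os.path.getsize(log_path) >= max_bytes:
            # Rotate: keep one old generation and start a fresh log.
            os.replace(log_path, log_path + ".1")
        else:
            with open(log_path, encoding="utf-8") as f:
                # Exact, whole-line match only.
                if any(line.rstrip("\n") == release_name for line in f):
                    return True
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(release_name + "\n")
    return False
```

Note that a rotation forgets the history, so a name seen just before the rotation would be accepted once more afterwards.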

I created a filter which downloads releases from 5 different trackers through IRC announce channels.

The problem is that if the same release is announced on every tracker within 1 minute, autobrr sends 5 torrents to the client and I end up with 5 items in the client.

Is it possible to specify in the filter that a release should be downloaded only once, no matter which tracker it comes from?
