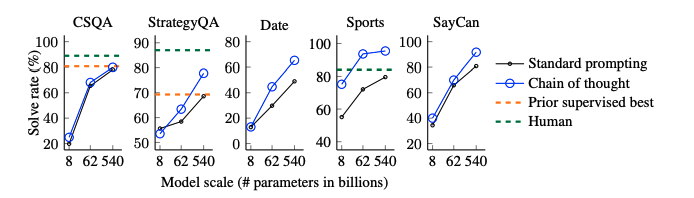

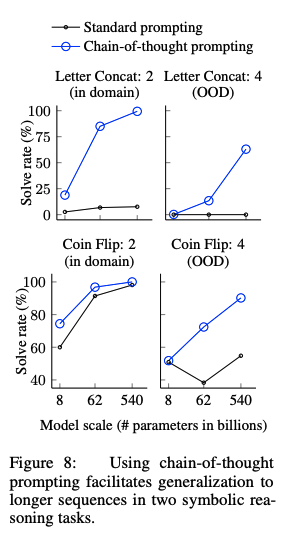

Chain of thought prompting elicits reasoning in large language models, Wei+, Google Research, arXiv'22 #551

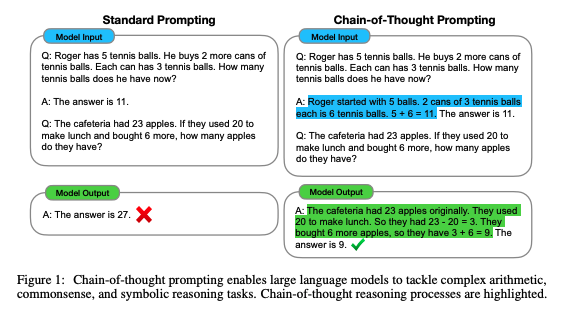

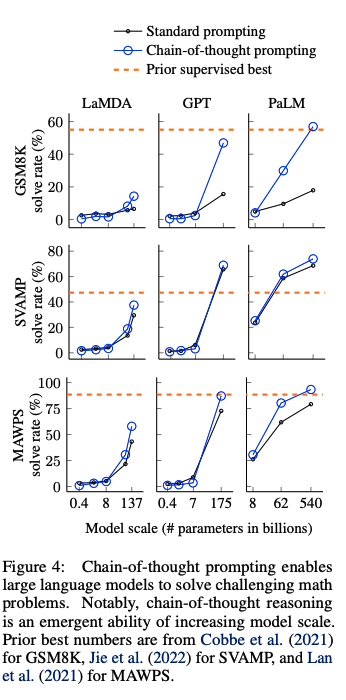

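Prior work addressed the poor performance on tasks that require reasoning by explicitly writing out intermediate steps and fine-tuning a pre-trained model on them. The drawback of this approach is that preparing a large-scale dataset with high-quality rationales for fine-tuning is very costly.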
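To make the cost concrete, here is a minimal sketch of what one rationale-annotated fine-tuning record might look like. The class name `RationaleExample`, its fields, the helper `to_finetuning_pair`, and the `Q:` / `A:` formatting are all assumptions for illustration, not the format of any particular prior work. The word problem is only an example in the style of arithmetic reasoning benchmarks.

```python
from dataclasses import dataclass


@dataclass
class RationaleExample:
    """One hypothetical training record for rationale-based fine-tuning."""
    question: str
    rationale: str  # hand-written intermediate steps (the expensive part to collect)
    answer: str


def to_finetuning_pair(ex: RationaleExample) -> tuple[str, str]:
    """Build an (input, target) pair whose target spells out the steps before the answer."""
    source = f"Q: {ex.question}\nA:"
    target = f" {ex.rationale} The answer is {ex.answer}."
    return source, target


example = RationaleExample(
    question=(
        "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
        "Each can has 3 tennis balls. How many tennis balls does he have now?"
    ),
    rationale=(
        "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
        "6 tennis balls. 5 + 6 = 11."
    ),
    answer="11",
)

source, target = to_finetuning_pair(example)
print(source)
print(target)
```

Every record needs a `rationale` written and checked by a person, so the annotation cost grows with the size of the dataset. That is the problem described above.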

https://arxiv.org/abs/2201.11903
