Some questions about self_attn #12

Comments

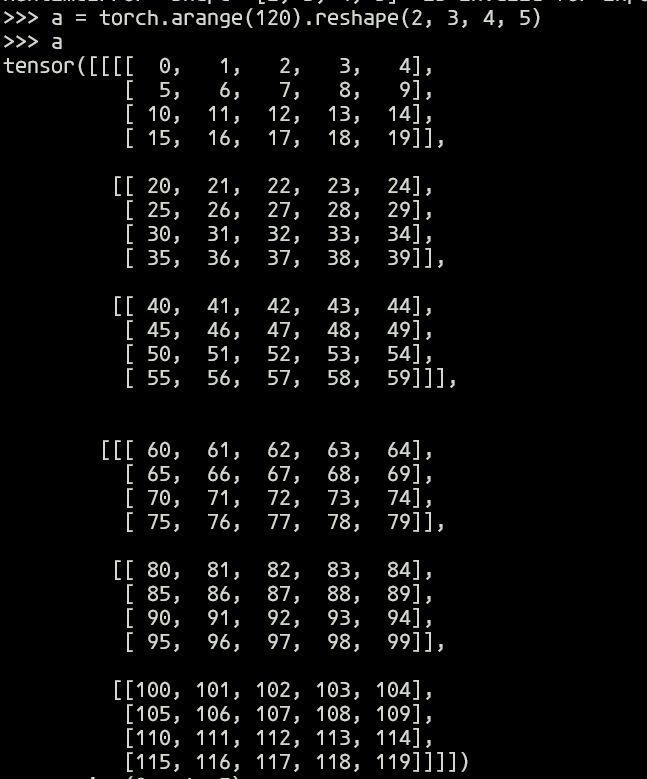

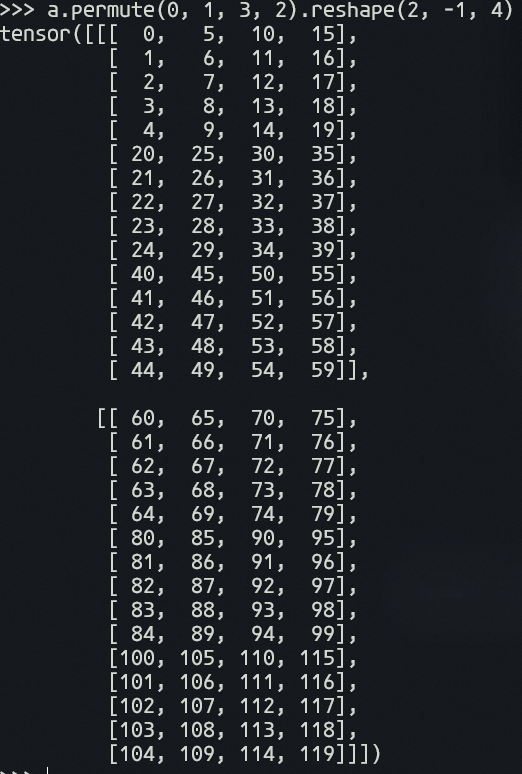

Hi, I do not think a permute is needed. For mode = 'h', the shape of projected_query is (batch_size, height, channel*width) and projected_key is (batch_size, channel*width, height). The attention_map is projected_query * projected_key, with shape (batch_size, height, height). The shape of projected_value is (batch_size, channel*width, height). The output is projected_value * attention_map, and we reshape it from (batch_size, channel*width, height) to (batch_size, channel, width, height). Mode = 'w' works the same way. You can also find other repositories that define self_attn in a similar way. As for the sigmoid, I found that in our network it gives better results.
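For anyone following the shapes in the reply above, here is a minimal sketch of the mode = 'h' bookkeeping. It is not the repository's code: it uses NumPy rather than PyTorch so it runs without a deep-learning install, random arrays stand in for the projected tensors, and the function name `axial_attention_shapes` and the default sizes are made up for illustration. It only checks shapes; it does not settle whether a reshape without a permute groups the elements the way the attention expects, which is the question in this thread.

```python
import numpy as np


def sigmoid(z):
    """Element-wise logistic function, used here in place of softmax."""
    return 1.0 / (1.0 + np.exp(-z))


def axial_attention_shapes(batch_size=2, channel=4, height=5, width=3, seed=0):
    """Return the shapes produced by the mode = 'h' computation (hypothetical helper)."""
    rng = np.random.default_rng(seed)

    # Random stand-ins for the projected tensors, in the shapes quoted in the reply.
    projected_query = rng.standard_normal((batch_size, height, channel * width))
    projected_key = rng.standard_normal((batch_size, channel * width, height))
    projected_value = rng.standard_normal((batch_size, channel * width, height))

    # Batched matrix product: (B, H, C*W) @ (B, C*W, H) -> (B, H, H)
    attention_map = sigmoid(projected_query @ projected_key)

    # (B, C*W, H) @ (B, H, H) -> (B, C*W, H)
    out = projected_value @ attention_map

    # Split the fused axis as described in the reply: (B, C*W, H) -> (B, C, W, H)
    out_4d = out.reshape(batch_size, channel, width, height)

    return attention_map.shape, out.shape, out_4d.shape


if __name__ == "__main__":
    for name, shape in zip(("attention_map", "out", "out_4d"), axial_attention_shapes()):
        print(name, shape)
```

The same check with `height` and `width` swapped covers mode = 'w'.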

see here

Hi.

view in mode h?