New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Regression in next11: resampling does not work with alsa #203

Comments

|

this is a bug in next11 . 1.0 release does not have the bug. |

|

Which commit are you on, before or after this was merged? |

|

latest. I just synced a moment ago |

|

If you have time, can you check if the problem already is there on next11 from just before the merge? |

|

here's the test file. This bug is not related to gadget. My USB audio interface has the same issue. here's 1.0 release here's e45d230 here's 519956e |

|

You don't really need gadget to repro the bug. any audio usb interface with inputs would do. |

|

Thanks for all the details! This saves me a ton of investigation time. |

|

EPIPE of snd_pcm_wait could be result of an xrun. Period 1024 at 192kHz is only 5ms, IMO quite susceptible to xruns. A debug with TRACE log would show the timing, the pcm_pcm_wait times etc. Thanks. |

|

I believed I tried 10240 and it failed too. while trying 1024 with no src succeeds. lemme find my setup and get the log. |

|

|

Please post the trace log, not just debug. It shows the actual timing. Thanks |

|

I did run it with '-l trace', and you can find 'TRACE' in the logs... |

|

If change trunk size to 2048. here's next11(519956e) 's log: here's 1.0.0 |

|

It looks like it fails on the first call to snd_pcm_wait. I'm guessing that something is wrong with the buffer size or threshold. Does it only happen with capture_samplerate > samplerate?? |

|

I would suggest to add a patch for tracing the snd_pcm_wait timeout: |

|

Maybe also tracing the buffer manager parameters at each capture/playback_buffer call: |

|

@HEnquist true. only happens when samplerate < capture sample rate @pavhofman here's the log based on your patch rebased to e45d230 |

|

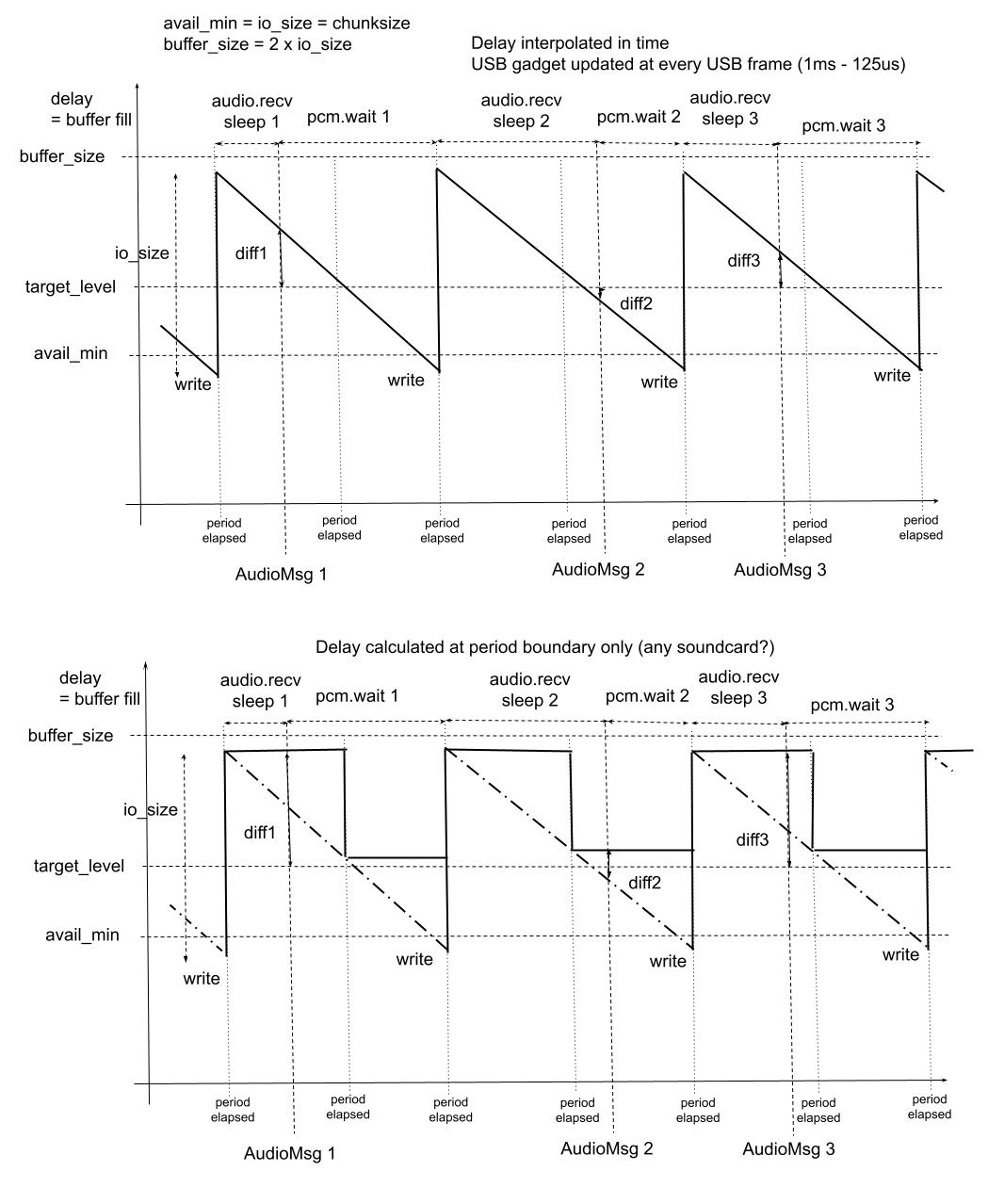

Thanks a lot. IMO the problem is this: The resampler radically changes the required capture_frames which gets outside of the pre-configured device parameters: device bufsize (calculated before the resampler kicks in) ends up lower than avail_min = io_size = capture bytes. @HEnquist please do you see any way out of this? Maybe the resampler should be consulted prior to setting the device hw params. Also I would suggest to include the two debug patches, I will send a PR. |

|

This should be pretty easy to fix. There is already a calculation to give a suitable buffer size: Line 1092 in e45d230

In 1.0.0 this value is used by open_pcm to set the buffer, but not (yet) in next11. Instead it uses values from the Buffer manager that doesn't know about the resampling settings. |

|

This simple fix seems to do the trick: b313257 |

|

Thanks, it really looks like a regression bugfix, considering the DeviceBufferManager just consolidated all device-related data and did not introduce any new functionality. |

|

@Wang-Yue can you check that it also works for you? |

|

It works for the above test config. however, it breaks the gadget code with no SRC |

|

This looks very odd: The avail_min should not be larger than the buffer_size. They should get the same value. It should have requested a buffer_size of 8192, but somehow got 2048 instead. |

|

Max buffer size is defined in https://elixir.bootlin.com/linux/v5.18-rc6/source/drivers/usb/gadget/function/u_audio.c#L26 - 16 * 4k page = 64kB -> 8192 frames at 2ch/32bit. And indeed it is the case on my RPi4 aarch64 : @Wang-Yue What BUFFER_SIZE range does your arecord --dump-hw-params report? @HEnquist Still I am afraid we cannot assume that soundcard will use whatever buffer size we request. That's why the set_xxx_near versions are being called. The code should respect the actual buffer_size value. It may have an impact on chunksize (chunksize = actual buffer_size /4). Also io_size (and subsequenty avail_min) should be adjusted AFTER the actual buffer_size is determined by the soundcard, probably directly in DeviceBufferManager::apply_buffer_size. Maybe the io_size param in the DeviceBufferManager constructor is wrong, and io_size/avail_min should be set in apply_buffer_size. Maybe the method apply_buffer_size should be renamed to sth like init_buffer_period(hw_params, requested io_size=chunksize (playback)/buffer_frames (capture)) which would:

My intention is to keep all actual device parameters and their calculations within the DeviceBufferManager objects so that they are not spread throughout the calling code and values can be monitored and calculations modified easily. |

|

@pavhofman I think we found out the cause: on my rpi4 the BUFFER_SIZE is correct for I'm using 32bit raspbian. here's the gadget setup: here's the dsnoop setup: |

|

I tested the following config with camilladsp. using device So how can I create the dsnoop device correctly? my asoundrc is in the end of the previous comment. |

|

You can try setting the buffer_size param in the slave config of https://www.alsa-project.org/alsa-doc/alsa-lib/pcm_plugins.html#pcm_plugins_dsnoop . Still the code should be able to self-adjust to the actual buffer size used, or maybe just fail with textual explanation. |

|

Yes it needs to a bit more clever when setting up buffer size and avail_min etc. I started by just breaking the alsadevice into several files, it had gotten too long so this should make it easier to work with. |

|

How is the possible values of avail_min connected to buffer and period sizes? The alsa documentation isn't helping much. |

|

@HEnquist Thanks a lot for the changes. Splitting the long file is very useful indeed. I am not sure about introducing the resampling ratio and the chunksize to the device manager, as it is not related to the device, and it would end up keeping it in two places, having to be updated etc. Or maybe I have missed something. How about moving the buffer_frames calculation 80dbb76#diff-97d4c24d5bc14dadbff0f198ddabe43bb729daea8df2988266a047a0a4f680caL17 to a separate function, used by both the device manager and alsadevice.rs? IMO proper naming the function would explain a lot about the purpose of the calculation, especially if it's used on two places in the source. IIUC this change 80dbb76#diff-97d4c24d5bc14dadbff0f198ddabe43bb729daea8df2988266a047a0a4f680caL22 changes the period size - originally it was io_size/4 which was bufsize/8, now it's bufsize/4 . Is that an intention? Thanks! |

IIUC at period-elapsed time the driver checks delay (i.e. buffer fill) and if bufsize - delay (i.e. the unfilled available part of the buffer) is larger than avail_min, it unblocks pcm_wait . IMO we want more periods to make the filling timing more granular. |

|

I am totally confused on how to set correct chunksize in the YML to make camilladsp able to run. Suppose I have let's say output device's sample rate is On Mac I just use 4096 and it works for all rates and devices, from 44.1khz to 768khz. On Linux I run into all kinds of issues ... sometimes bufsize is < available min. sometimes it's larger than the upper boundary of BUFFER_SIZE. even if i tune /etc/asoundrc to make gadget having size of 262,144 it fails as well... Is there a general rule to tune the chunksize depending on |

|

I made some updates to clean things up. I exposed the buffer size calculation as a function of the buffermanager trait, and use that in both places. @Wang-Yue things work a bit different in the Alsa and CoreAudio backends. In the CoreAudio device (and Wasapi), CamillaDSP doesn't change the device buffer and period sizes. Instead it uses what is given by the system, and buffers the data so it can be split into |

|

tried the latest alsa_init_fix. finally everything works, including gadgets and dsnoop. |

|

@Wang-Yue Did you set the buffer_size param to your gadget device prior to the test? @HEnquist Thanks for the changes! https://github.com/HEnquist/camilladsp/blob/alsa_init_fix/src/alsadevice_buffermanager.rs#L58 - that looks like a reason to exit with error, IMO an io operation larger than bufsize cannot work by principle, IIUC. https://github.com/HEnquist/camilladsp/blob/alsa_init_fix/src/alsadevice_buffermanager.rs#L53 + https://github.com/HEnquist/camilladsp/blob/alsa_init_fix/src/alsadevice_buffermanager.rs#L58 - since both the checks are against io_size, perhaps should the check be in update_io_size(requested io_size) instead of the subsequently called apply_avail_min? https://github.com/HEnquist/camilladsp/blob/alsa_init_fix/src/alsadevice.rs#L1006 - could buf_manager.get_data().bufsize be called instead of the buffer_frames calculated in https://github.com/HEnquist/camilladsp/blob/alsa_init_fix/src/alsadevice.rs#L953 ? IMO allocating a buffer for io operations sized as the actual device bufsize makes sense. The final device bufsize can end up being larger than buffer_frames (xx_near call). The variable buffer_frames is not used anywhere else and could be removed alltogether then. Thanks a lot for your great work. |

|

yes. i set it to 8192. |

|

@pavhofman All good points! Thanks! I'll update the PR. |

|

Updated!

I think it's better to keep the checks where they are. That way it also applies the checks when applying the avail_min during the device setup. |

|

You are right, let's keep the check in apply_avail_min. io_size is set in the device manager constructor too, not only in update_io_size() call. Moving the check to update_io_size() would be a bug. The check cannot be performed in the constructor because no period info is available at that moment. Thanks. |

|

I think we are done with this one, closing! |

tested with the following

log

turning src off works.

The text was updated successfully, but these errors were encountered: