
I have a custom array type where the size is stored as one of the type parameters. I hoped this would improve performance in tight loops, since the indexing limits would be known at compile time. However, I have noticed that this approach can result in variable performance, depending on the size.
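For illustration only, a type of this kind could look roughly like the sketch below. `CubeArray` and `cubesum` are hypothetical names chosen for this example; the actual custom type is not shown in this report and may differ in every detail. The sketch assumes a cubic array whose edge length `N` is carried as a type parameter.

```julia
# Hypothetical wrapper: the edge length N is a type parameter,
# so loops over 1:N have bounds that are known at compile time.
struct CubeArray{T,N} <: AbstractArray{T,3}
    data::Array{T,3}
    function CubeArray(data::Array{T,3}) where {T}
        N = size(data, 1)
        size(data) == (N, N, N) || throw(DimensionMismatch("expected a cubic array"))
        return new{T,N}(data)
    end
end

# Minimal AbstractArray interface: size comes from the type, not from the data.
Base.size(::CubeArray{T,N}) where {T,N} = (N, N, N)
Base.IndexStyle(::Type{<:CubeArray}) = IndexLinear()
Base.@propagate_inbounds Base.getindex(A::CubeArray, i::Int) = A.data[i]

# Sum over all elements using the compile-time bound N.
function cubesum(A::CubeArray{T,N}) where {T,N}
    S = zero(T)
    for k = 1:N, j = 1:N
        @simd for i = 1:N
            @inbounds S += A[i, j, k]
        end
    end
    return S
end

A = CubeArray(rand(8, 8, 8))
println(cubesum(A) ≈ sum(A.data))
```

Note that constructing the wrapper from a plain `Array` is itself type-unstable, since `N` is only known at runtime at that point; the benefit, if any, is inside functions that receive an already-constructed `CubeArray`.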

I managed to build an MWE that does not use my custom array type but shows the same symptoms. It consists of two mysum functions that loop over a 3D Julia array. In the first, mysum1, the indexing bounds are known at compile time; in the other, mysum2, they are obtained at runtime.

using BenchmarkTools

using Printf

functionmysum1(a, ::Val{N}) where {N}

S =zero(eltype(a))

for k =1:N

for j =1:N

@simdfor i =1:N

@inbounds S += a[i, j, k]

endendendreturn S

endfunctionmysum2(a)

S =zero(eltype(a))

for k =1:size(a, 3)

for j =1:size(a, 2)

@simdfor i =1:size(a, 1)

@inbounds S += a[i, j, k]

endendendreturn S

end

t1s = Float64[]

t2s = Float64[]

Ns =10:150for N = Ns

a =rand(N, N, N)

vn =Val(N)

t1 =minimum([@elapsedmysum1(a, vn) for i =1:1000])

t2 =minimum([@elapsedmysum2(a) for i =1:1000])

@printf"%d %.6f %.6f %.6f\n" N 1000*t1 1000*t2 t1/t2

push!(t1s, t1)

push!(t2s, t2)

endusing PyPlot; pygui(true)

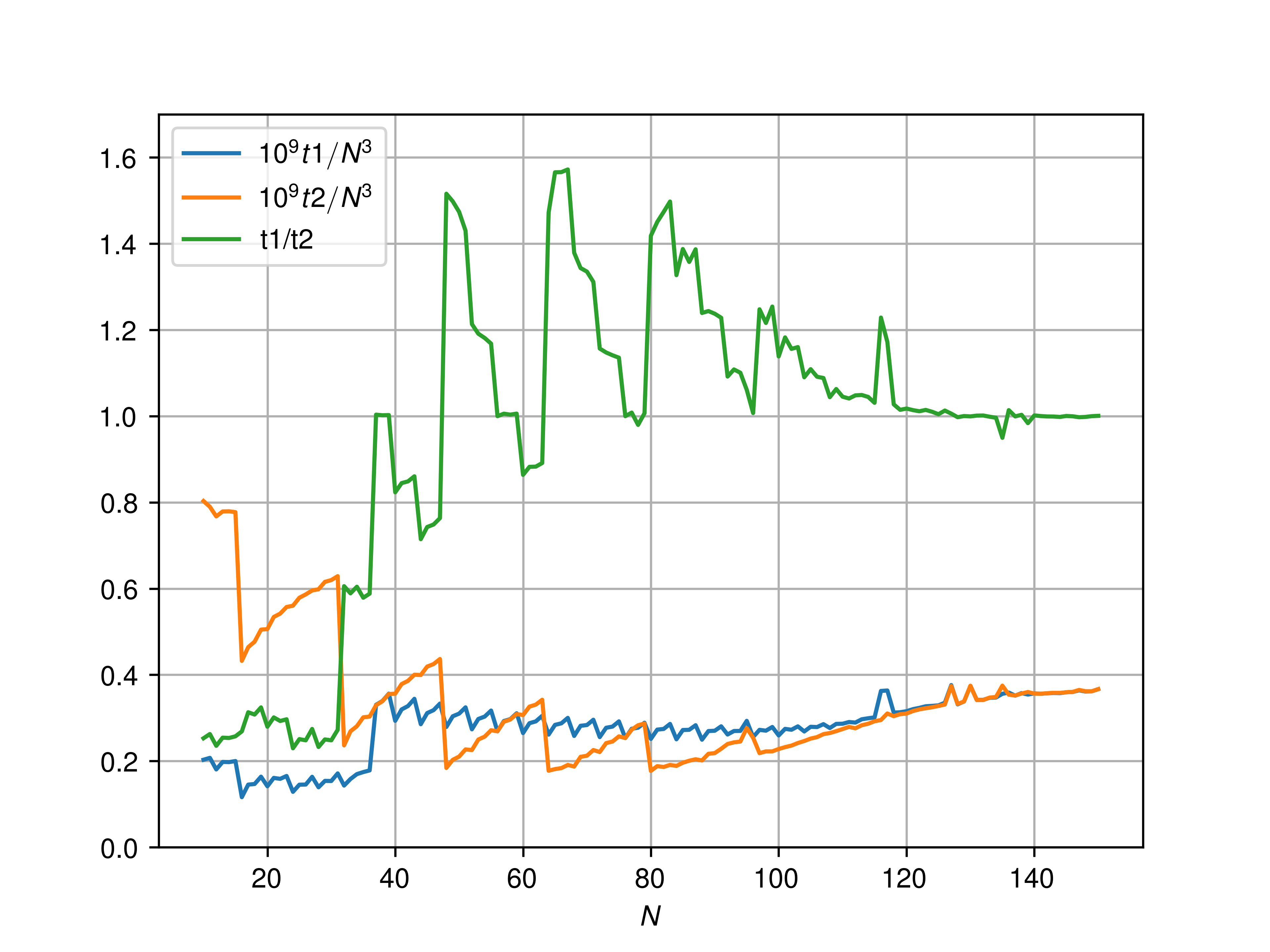

plot(Ns, 1e9.*t1s./(Ns.^3), label=L"10^9 t1 / N^3")

plot(Ns, 1e9.*t2s./(Ns.^3), label=L"10^9 t2 / N^3")

plot(Ns, t1s./t2s, label="t1/t2")

xlabel(L"N")

ylim(0, 1.7)

legend(loc=2)

grid()

savefig("figure.png", dpi=600)

The data is as follows:

There seems to be a threshold around N = 36 below which knowing the array size at compile time is always advantageous. Above that, the speedup relative to not knowing the size varies significantly.

I have examined the @code_llvm output, and both functions usually produce vectorised code. However, it appears that when mysum2 is faster than mysum1, its loop has been unrolled considerably more.
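A comparison along these lines can be reproduced with the sketch below, which captures the LLVM IR of both functions as strings and counts vector floating-point adds. The helper `count_vector_fadds` is a hypothetical name introduced here, and the count is only a rough proxy for the amount of unrolling; the actual numbers depend on the Julia version and the CPU, so none are quoted.

```julia
using InteractiveUtils  # provides code_llvm and @code_llvm

# Same two functions as in the MWE, repeated so this snippet runs on its own.
function mysum1(a, ::Val{N}) where {N}
    S = zero(eltype(a))
    for k = 1:N, j = 1:N
        @simd for i = 1:N
            @inbounds S += a[i, j, k]
        end
    end
    return S
end

function mysum2(a)
    S = zero(eltype(a))
    for k = 1:size(a, 3), j = 1:size(a, 2)
        @simd for i = 1:size(a, 1)
            @inbounds S += a[i, j, k]
        end
    end
    return S
end

a = rand(64, 64, 64)

# Capture the IR as text instead of printing it to the terminal.
ir1 = sprint(code_llvm, mysum1, Tuple{typeof(a), Val{64}})
ir2 = sprint(code_llvm, mysum2, Tuple{typeof(a)})

# Hypothetical helper: count lines containing a vector fadd on doubles.
# More such lines in the loop body usually means more unrolling.
count_vector_fadds(ir) =
    count(l -> occursin("fadd", l) && occursin(" x double>", l), split(ir, '\n'))

println("mysum1: ", count_vector_fadds(ir1), " vector fadd lines")
println("mysum2: ", count_vector_fadds(ir2), " vector fadd lines")
```

To read the IR directly, `@code_llvm mysum1(a, Val(64))` and `@code_llvm mysum2(a)` print the same output to the terminal.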

For instance, at N=64, we have from mysum1 (slower)

while for mysum2 (faster):

Note this is on
