NCG-Optimizer is a set of optimizer about nonlinear conjugate gradient in PyTorch.

Inspired by jettify and kozistr.

$ pip install ncg_optimizerThe theoretical analysis and implementation of all basic methods is based on the "Nonlinear Conjugate Gradient Method"1 , "Numerical Optimization" (23) and "Conjugate gradient algorithms in nonconvex optimization"4.

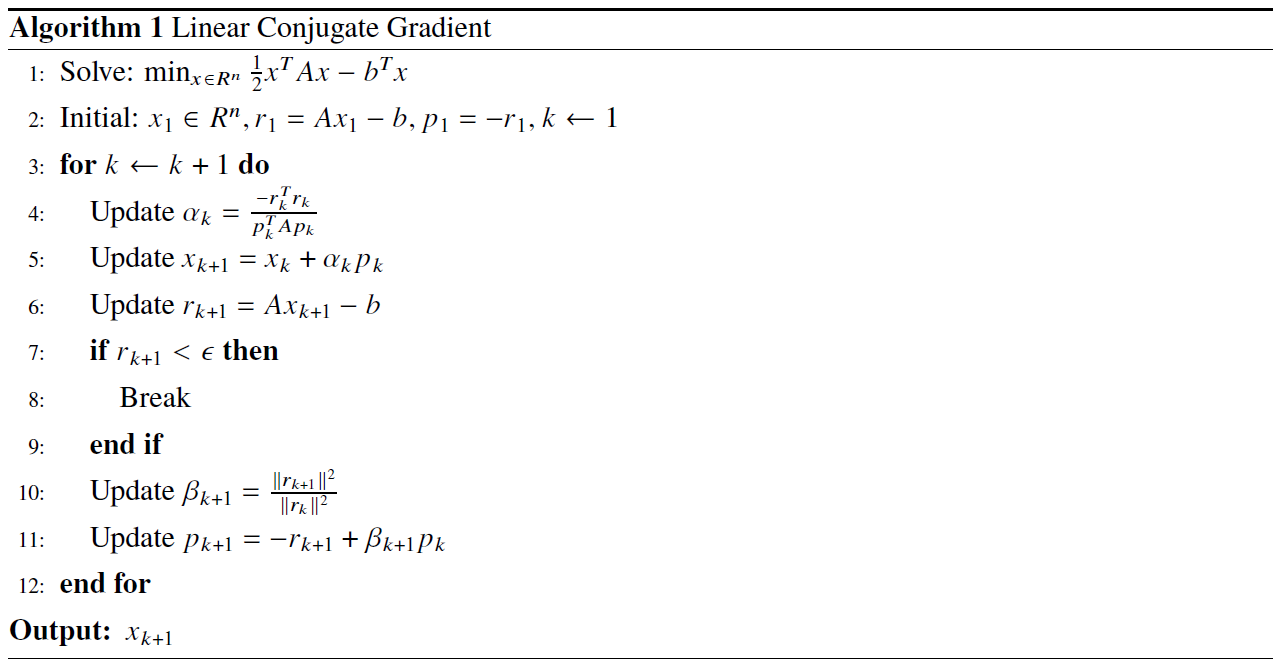

The Linear Conjugate Gradient(LCG) method is only applicable to linear equation solving problems. It converts linear equations into quadratic functions, so that the problem can be solved iteratively without inverting the coefficient matrix.

import ncg_optimizer as optim

# model = Your Model

optimizer = optim.LCG(model.parameters(), eps=1e-5)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

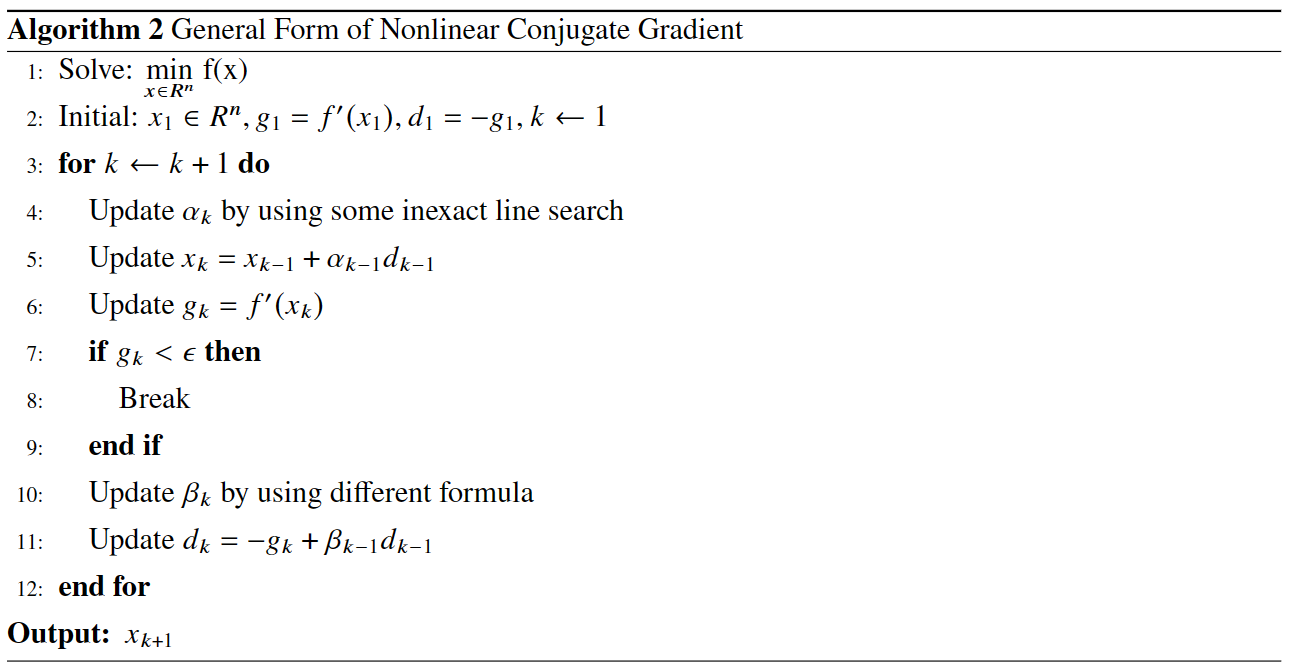

optimizer.step(closure)The Fletcher-Reeves conjugate gradient( FR ) method is the earliest nonlinear conjugate gradient method. It was obtained by Fletcher and Reeves in 1964 by extending the conjugate gradient method for solving linear equations to solve optimization problems.

The scalar parameter update formula of the FR method is as follows:

$$ \beta_k^{F R}=\frac{g{k+1}^T g{k+1}}{g_k^T g_k}$$

The convergence analysis of FR method is often closely related to its selected line search. The FR method of exact line search is used to converge the general nonconvex function. The FR method of strong Wolfe inexact line search method

optimizer = optim.BASIC(

model.parameters(), method = 'FR',

line_search = 'Strong_Wolfe', c1 = 1e-4,

c2 = 0.5, lr = 0.2, max_ls = 25)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)The Polak-Ribiere-Polyak(PRP) method is a nonlinear conjugate gradient method proposed independently by Polak, Ribiere and Polyak in 1969. The PRP method is one of the conjugate gradient methods with the best numerical performance. When the algorithm produces a small step, the search direction

The scalar parameter update formula of the PRP method is as follows:

$$ \beta_k^{PRP}=\frac{g{k}^{T}(g{k}-g{k-1})}{\lVert g{k-1}\rVert^2}$$

The convergence analysis of the PRP method is often closely related to the selected line search. When the step size $s_k = x{k+1} - x{k} \to 0$ is regarded as a measure of global convergence, the PRP method of exact line search is used to converge the uniformly convex function under this benchmark. The PRP method using Armijo-type inexact line search method converges globally for general nonconvex functions. The PRP

optimizer = optim.BASIC(

model.parameters(), method = 'PRP',

line_search = 'Armijo', c1 = 1e-4,

c2 = 0.9, lr = 1, rho = 0.5,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)Another famous conjugate gradient method Hestenes-Stiefel( HS ) method was proposed by Hestenes and Stiefel. The scalar parameter update formula of the HS method is as follows:

$$ \beta{k}^{HS}=\frac{g{k}^{T}(g{k}-g{k-1})}{(g{k}-g{k-1})^Td_{k-1}} $$

Compared with the PRP method, an important property of the HS method is that the conjugate relation $d_k^T(g{k}-g{k-1}) = 0$ always holds regardless of the exact of the line search. However, the theoretical properties and computational performance of the HS method are similar to those of the PRP method.

The convergence analysis of the HS method is often closely related to the selected line search. If the

optimizer = optim.BASIC(

model.parameters(), method = 'HS',

line_search = 'Strong_Wolfe', c1 = 1e-4,

c2 = 0.4, lr = 0.2, max_ls = 25,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)Conjugate Descent ( CD ) was first introduced by Fletcherl in 1987. It can avoid the phenomenon that a rising search direction may occur in each iteration such as the PRP method and the FR method under certain conditions.

The scalar parameter update formula of the CD method is as follows:

$$ \beta{k}^{CD}=\frac{g{k}^T g{k}}{-(g{k-1})^T d{k-1}} $$

The convergence analysis of the CD method is often closely related to the selected line search. The CD method using the strong Wolfe (

optimizer = optim.BASIC(

model.parameters(), method = 'CD',

line_search = 'Armijo', c1 = 1e-4,

c2 = 0.9, lr = 1, rho = 0.5,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)Liu-Storey ( LS ) conjugate gradient method is a nonlinear conjugate gradient method proposed by Liu and Storey in 1991, which has good numerical performance.

The scalar parameter update formula of the LS method is as follows:

$$ \beta{k}^{LS}=\frac{g{k}^T (g{k} - g{k-1})}{ - g{k-1}^T d{k-1}} $$

The convergence analysis of the LS method is often closely related to the selected line search. The LS method with strong Wolfe inexact line search method has global convergence property ( under Lipschitz condition ). The LS method using Armijo-type inexact line search method converges globally for general nonconvex functions.

optimizer = optim.BASIC(

model.parameters(), method = 'LS',

line_search = 'Armijo', c1 = 1e-4,

c2 = 0.9, lr = 1, rho = 0.5,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)The Dai-Yuan method ( DY ) was first proposed by Yuhong Dai and Yaxiang Yuan in 1995, which always produces a descent search direction under weaker line search conditions and is globally convergent. In addition, good convergence results can be obtained without using strong Wolfe inexact line search but only using Wolfe inexact line search.

The scalar parameter update formula of the DY method is as follows:

$$ \beta{k}^{DY}=\frac{g{k}^T g{k}}{(g{k} - g{k-1})^T d{k-1}} $$

The convergence analysis of the DY method is often closely related to the selected line search. The DY method using the strong Wolfe inexact line search method can guarantee sufficient descent and global convergence for general nonconvex functions. The DY method using the Wolfe inexact line search method converges globally for general nonconvex functions.

optimizer = optim.BASIC(

model.parameters(), method = 'DY',

line_search = 'Strong_Wolfe', c1 = 1e-4,

c2 = 0.9, lr = 0.2, max_ls = 25,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)Hager-Zhang Method5

The Hager-Zhang ( HZ ) method is a new nonlinear conjugate gradient method proposed by Hager and Zhang in 2005. It satisfies the sufficient descent condition and has global convergence for strongly convex functions, and the search direction approaches the direction of the memoryless BFGS quasi-Newton method.

The scalar parameter update formula of the HZ method is as follows:

$$ \beta_k^{HZ}=\frac{1}{d{k-1}^T (g{k} - g{k-1})}((g{k} - g{k-1})-2 d{k-1} \frac{\^2}{d{k-1}^T (g{k} - g{k-1})})^T{g}_{k} $$

The convergence analysis of the HZ method is often closely related to the selected line search. The HZ method with ( strong ) Wolfe inexact line search method converges globally for general nonconvex functions. The HZ

optimizer = optim.BASIC(

model.parameters(), method = 'HZ',

line_search = 'Strong_Wolfe', c1 = 1e-4,

c2 = 0.9, lr = 0.2, max_ls = 25,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

optimizer.step(closure)Dai and Yuan studied the HS-DY hybrid conjugate gradient method of. Compared with other hybrid conjugate gradient methods ( such as FR + PRP hybrid conjugate gradient method ), the advantage of this hybrid method is that it does not require the line search to satisfy the strong Wolfe condition, but only the Wolfe condition. Their numerical experiments show that the HS-DY hybrid conjugate gradient method performs very well on difficult problems.

The scalar parameter update formula of the HS-DY method is as follows:

Regarding the convergence analysis of the HS-DY method, the HS-DY method using the Wolfe inexact line search method is globally convergent for general non-convex functions, and the performance effect is also better than the PRP method.

optimizer = optim.BASIC(

model.parameters(), method = 'HS-DY',

line_search = 'Armijo', c1 = 1e-4,

c2 = 0.9 lr = 1, rho = 0.5,)

def closure():

optimizer.zero_grad()

loss(model(input), target).backward()

return loss

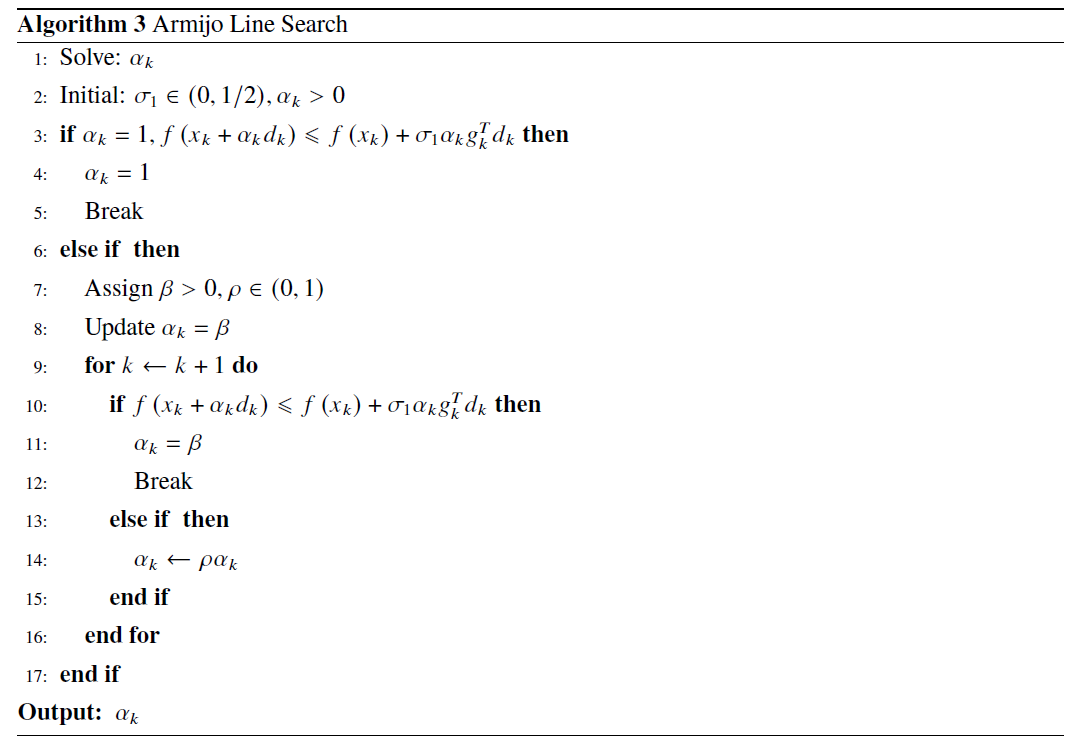

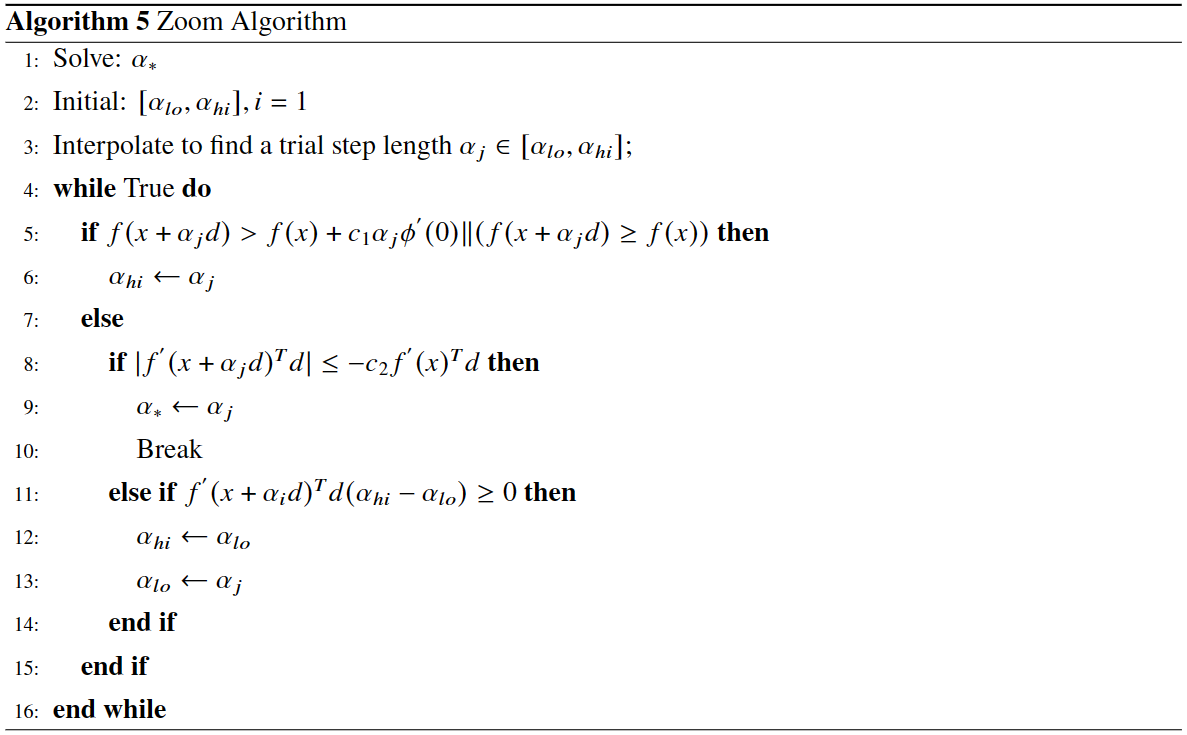

optimizer.step(closure)In order to satisfy the condition that the decrease of the function is at least proportional to the decrease of the tangent, there are:

Among them,

In the following two formulas, the first inequality is a overwrite of the Armijo criterion. In addition, in order to prevent the step size from being too small and ensure that the objective function decreases sufficiently, the second inequality is introduced, so there is:

where the

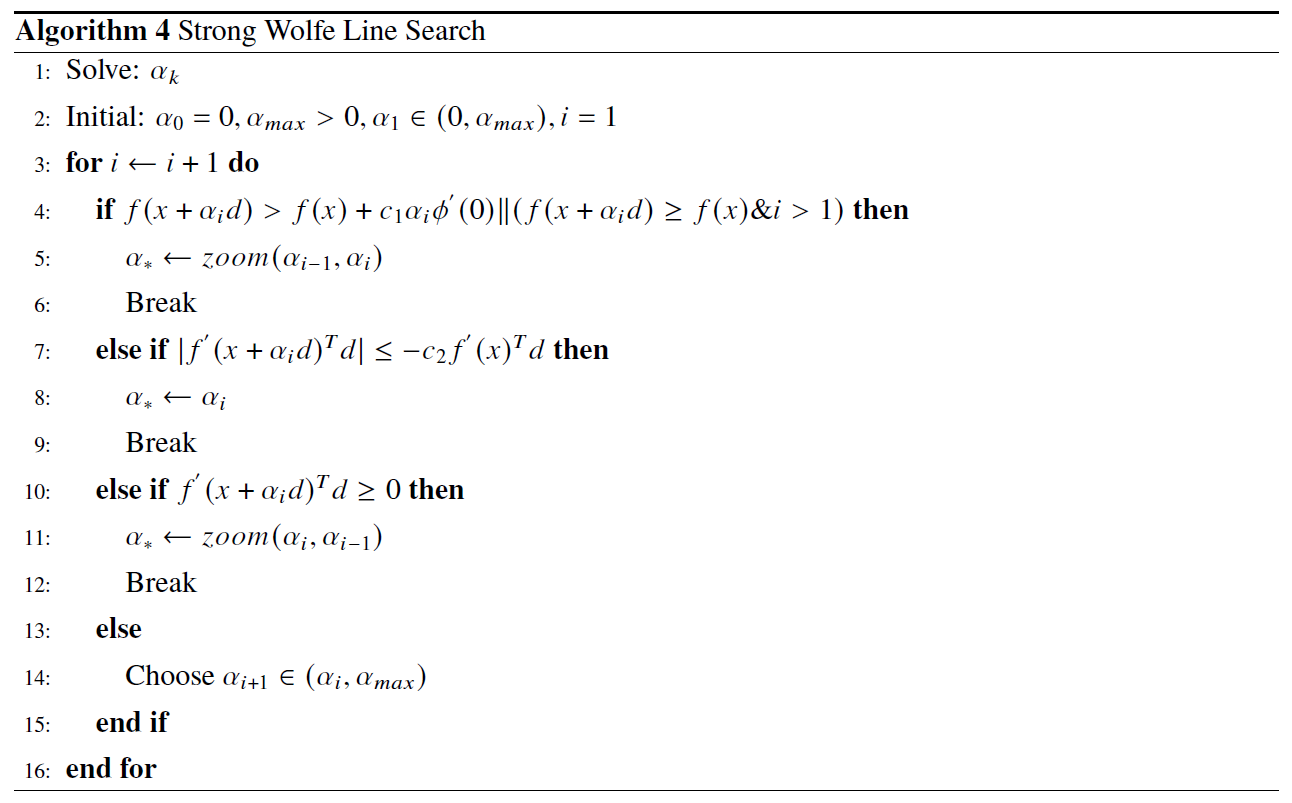

The Strong Wolfe criterion reduces the constraint to less than 0 on the basis of the original Wolfe criterion to ensure the true approximation of the exact line search :

Please cite original authors of optimization algorithms. If you like this software:

@software{Mi_ncgoptimizer,

title = {{NCG-Optimizer -- a set of optimizer about nonlinear conjugate gradient in PyTorch.}},

author = {Kerun Mi},

year = 2023,

month = 2,

version = {0.1.0}}Or you can get from "cite this repository" button.

Y.H. Dai and Y. Yuan (2000), Nonlinear Conjugate Gradient Methods, Shanghai Scientific and Technical Publishers, Shanghai. (in Chinese)↩

Nocedal J, Wright S J. Line search methods[J]. Numerical optimization, 2006: 30-65.↩

Nocedal J, Wright S J. Conjugate gradient methods[J]. Numerical optimization, 2006: 101-134.↩

Pytlak R. Conjugate gradient algorithms in nonconvex optimization[M]. Springer Science & Business Media, 2008.↩

Hager W W, Zhang H. A new conjugate gradient method with guaranteed descent and an efficient line search[J]. SIAM Journal on optimization, 2005, 16(1): 170-192.↩

Nocedal J, Wright S J. Line search methods[J]. Numerical optimization, 2006: 30-65.↩

Schmidt M. minFunc: unconstrained differentiable multivariate optimization in Matlab[J]. Software available at https://www.cs.ubc.ca/~schmidtm/Software/minFunc.html, 2005.↩