Error in validation data when training model on HRSC2016 dataset #16

Hi, the format of the validation data is not the same as the format of the training data. They take the following forms, respectively. The label of the validation data looks like this. This link contains the validation data that I have processed; unzip it into the corresponding folder and use it. Thanks.

These are the training labels.

Hello sir,

OK. Please check it.

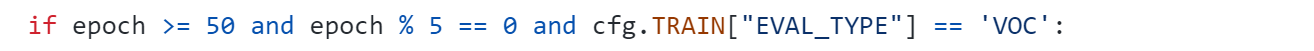

Also, sir, you have shared the test data used to test your model, not the validation data used during model training after 50 epochs?

Hi. Since we use both the training and validation sets for training, the test set is used for testing. So the validation set is included in trainHRSC2016.txt. I have also uploaded the separate validation set to Google Drive. The link is below.

First, use the following script to convert the .xml labels of the HRSC2016 dataset to txt labels. Then, use the script given in the repository to convert them to the format required by GGHL.

Sorry, I forgot, there is one more script. First use this script to convert the labels to .xml in VOC format, then use another to convert the .xml to txt. Give me a moment, I'll send you the other script later because I don't have a computer right now.

File "/content/drive/MyDrive/GGHL/evalR/evaluatorGGHL.py", line 237, in __calc_APs

use_07_metric) # call the function in voc_eval.py to perform the calculation

File "/content/drive/MyDrive/GGHL/evalR/voc_eval.py", line 122, in voc_eval

recs[imagename] = parse_poly(annopath.format(imagename))

File "/content/drive/MyDrive/GGHL/evalR/voc_eval.py", line 44, in parse_poly

object_struct['name'] = classes[int(splitlines[0])]

ValueError: invalid literal for int() with base 10: 'ship'
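For anyone hitting the same traceback: the error suggests that the first field of a validation label line holds the class name (`ship`), while `parse_poly` expects an integer class index there. Below is a minimal sketch of a tolerant lookup, not the GGHL code itself. The helper names `resolve_class_name` and `first_token`, the single-entry `classes` list, the whitespace-separated layout, and the sample coordinates are all assumptions made for illustration.

```python
def resolve_class_name(token, classes):
    """Map a label token to a class name.

    Accepts either an integer index into `classes` (e.g. "0")
    or the class name itself (e.g. "ship").
    """
    try:
        # Index-style label, which is what the traceback shows parse_poly expecting.
        return classes[int(token)]
    except ValueError:
        # Name-style label, which is what triggered the error in this issue.
        if token in classes:
            return token
        raise ValueError(f"unknown class token: {token!r}")


def first_token(line):
    """Return the first whitespace-separated field of a label line."""
    return line.strip().split()[0]


if __name__ == "__main__":
    classes = ["ship"]  # assumption: a single-class list
    # Hypothetical label lines; the numbers are placeholders, not real annotations.
    for line in ("0 10 20 30 20 30 40 10 40", "ship 10 20 30 20 30 40 10 40"):
        print(resolve_class_name(first_token(line), classes))
```

If the validation labels are meant to use indices, regenerating them with the same conversion script as the training labels is probably the cleaner fix; the lookup above only helps to confirm which lines carry names instead of indices.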
