matte_loss does not decrease much #84

Comments

|

Hello, and thanks for your interest. Regarding your questions: Q2: matte_loss does not decrease as much as detail_loss. Q3: the fused result loses a lot of detail.

|

Thanks for the reply. I'll visualize the network outputs and see how they look.

|

Same question here about loss convergence.

|

@YisuZhou

Is this part covered in the paper?

|

@AloneGu |

I have a few other questions I'd like to ask.

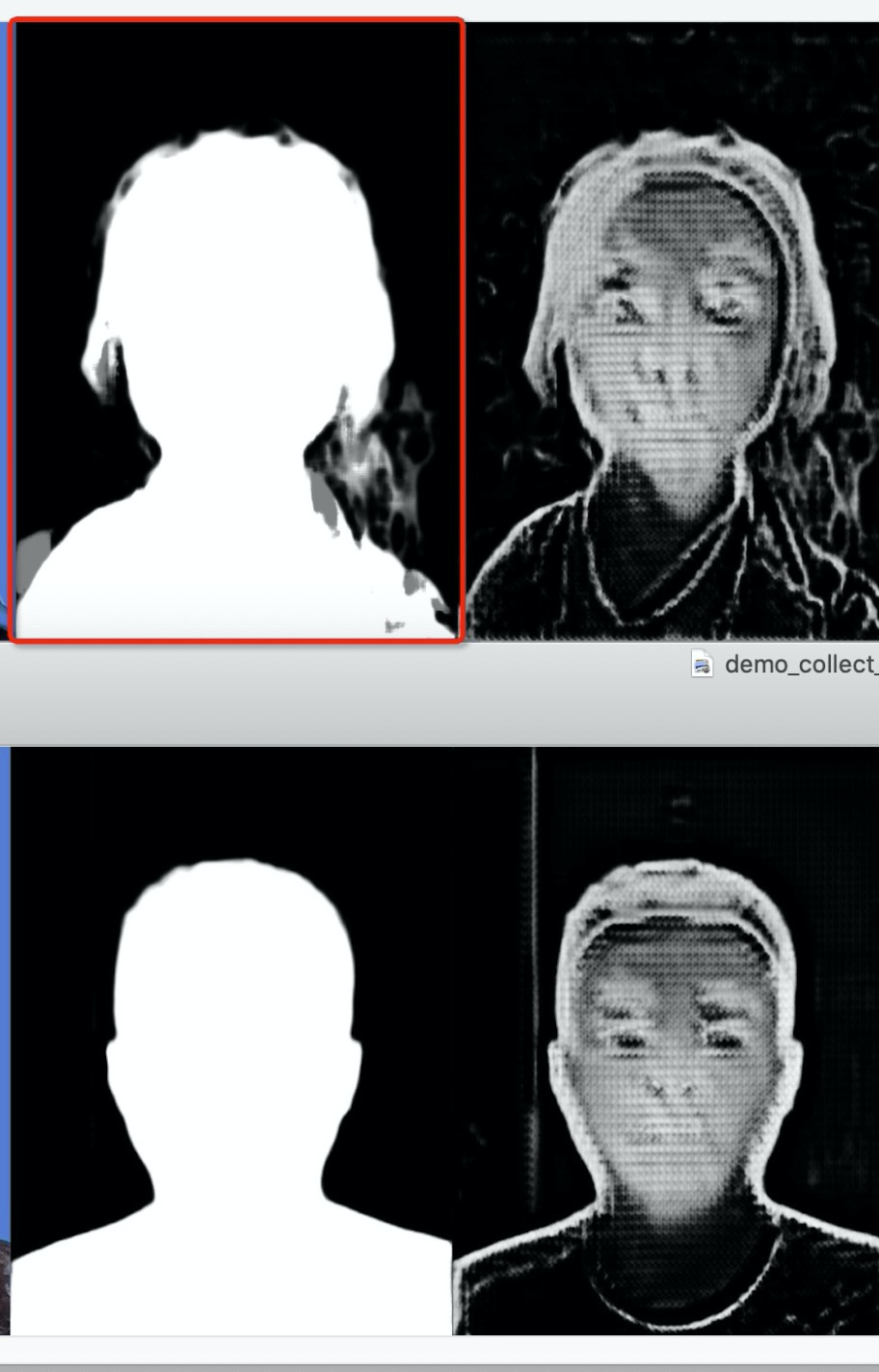

Have you run into this situation during training?

|

|

@AloneGu Q2: In the code the default semantic loss weight is 10, but in the paper it is 1. Which value was actually used in training?

|

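Q1: It is consistent with your code, but I normalize my RGB images to [-1, 1]. So if the RGB values themselves are close to 0, the composition loss is bound to be small regardless of the matte. Changing my RGB preprocessing should fix this. Q2: Yes, my experiments lead to the same conclusion: semantic_scale = 10 works better.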
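To illustrate the Q1 point, here is a minimal sketch in plain Python. It assumes the composition term is an L1 difference between `image * pred_matte` and `image * gt_matte`, which is my reading of this thread rather than something confirmed from the repository. The helper names and the sample pixel values are hypothetical.

```python
def normalize_signed(pixel):
    """Map an 8-bit pixel value to [-1, 1] (the preprocessing described above)."""
    return pixel / 127.5 - 1.0


def normalize_unit(pixel):
    """Map an 8-bit pixel value to [0, 1] (one possible alternative)."""
    return pixel / 255.0


def composition_l1(image, pred_matte, gt_matte):
    """Mean |image * pred - image * gt|; assumed form of the composition term."""
    terms = [abs(v * p - v * g) for v, p, g in zip(image, pred_matte, gt_matte)]
    return sum(terms) / len(terms)


# Hypothetical mid-gray pixels paired with a badly wrong predicted matte.
pixels = [120, 128, 135, 127]
pred_matte = [0.2, 0.9, 0.4, 0.1]
gt_matte = [1.0, 0.0, 1.0, 1.0]

signed_loss = composition_l1([normalize_signed(p) for p in pixels], pred_matte, gt_matte)
unit_loss = composition_l1([normalize_unit(p) for p in pixels], pred_matte, gt_matte)

print(f"[-1, 1] normalization: {signed_loss:.4f}")
print(f"[0, 1] normalization:  {unit_loss:.4f}")
```

Since |I * a_pred - I * a_gt| = |I| * |a_pred - a_gt|, mid-gray pixels that map to values near 0 contribute almost nothing to the loss under [-1, 1] normalization, however wrong the matte is.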

|

@AloneGu |

@ZHKKKe, hello!

I trained for 40 epochs, and matte_loss dropped from 3.587 to:

epoch = 39, semantic_loss=0.0039, detail_loss=0.0332, matte_loss=2.2689

epoch = 39, semantic_loss=0.0053, detail_loss=0.0327, matte_loss=2.4930

epoch = 39, semantic_loss=0.0067, detail_loss=0.0446, matte_loss=2.5472

epoch = 39, semantic_loss=0.0034, detail_loss=0.0272, matte_loss=2.2228

epoch = 39, semantic_loss=0.0050, detail_loss=0.0378, matte_loss=2.6175

epoch = 39, semantic_loss=0.0046, detail_loss=0.0338, matte_loss=2.3804

epoch = 39, semantic_loss=0.0061, detail_loss=0.0410, matte_loss=2.2642

epoch = 39, semantic_loss=0.0054, detail_loss=0.0399, matte_loss=2.4980

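I'm using the public dataset released by 爱分割 (AISegment), 34,000 images in total. matte_loss decreases much less than detail_loss, and values around 3.1 still show up in every epoch, more than once. My batch size is 16.

I'd like to ask whether this is caused by the samples. Also, for my first training run I drew 10,000 samples from the dataset, but the fused results lose a lot of detail. Thanks!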
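For anyone comparing numbers, here is a small sketch that averages the epoch-39 log lines above and reports how far matte_loss has dropped from the initial 3.587. The log format is taken from this post. The function name and regex are my own, and the eight lines are only a sample of the epoch, so treat the result as a rough indication.

```python
import re

LOG = """\
epoch = 39, semantic_loss=0.0039, detail_loss=0.0332, matte_loss=2.2689
epoch = 39, semantic_loss=0.0053, detail_loss=0.0327, matte_loss=2.4930
epoch = 39, semantic_loss=0.0067, detail_loss=0.0446, matte_loss=2.5472
epoch = 39, semantic_loss=0.0034, detail_loss=0.0272, matte_loss=2.2228
epoch = 39, semantic_loss=0.0050, detail_loss=0.0378, matte_loss=2.6175
epoch = 39, semantic_loss=0.0046, detail_loss=0.0338, matte_loss=2.3804
epoch = 39, semantic_loss=0.0061, detail_loss=0.0410, matte_loss=2.2642
epoch = 39, semantic_loss=0.0054, detail_loss=0.0399, matte_loss=2.4980
"""

# Matches pairs such as "matte_loss=2.2689".
LOSS_PATTERN = re.compile(r"(\w+_loss)=([0-9.]+)")


def mean_losses(log_text):
    """Return the mean of every *_loss value found in the log text."""
    totals, counts = {}, {}
    for line in log_text.splitlines():
        for name, value in LOSS_PATTERN.findall(line):
            totals[name] = totals.get(name, 0.0) + float(value)
            counts[name] = counts.get(name, 0) + 1
    return {name: totals[name] / counts[name] for name in totals}


means = mean_losses(LOG)
initial_matte_loss = 3.587  # starting value reported in this post
relative_drop = (initial_matte_loss - means["matte_loss"]) / initial_matte_loss

for name, value in means.items():
    print(f"mean {name}: {value:.4f}")
print(f"matte_loss relative drop: {relative_drop:.1%}")
```

The initial detail_loss is not given in the post, so the same comparison cannot be made for it here.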
