[SUPPORT]RejectedExecutionException FutureTask rejected from ThreadPoolExecutor[Terminated...] #2723

Comments

Hudi version : 0.6.0

Spark version : 2.4.0+cdh6.2.0

Hive version : 2.1.1+cdh6.2.0

Hadoop version : 3.0.0+cdh6.2.0

This does look like an exception coming from the cleaner. Can you look through the logs to see whether there are more stack traces related to this? Can you also try setting hoodie.clean.async=false and see whether it still occurs?
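For reference, a minimal Java sketch of the setting suggested above. Only the key `hoodie.clean.async` and the value `false` come from this thread; the class and method names are hypothetical, and it is an assumption that the map is handed to the Spark writer through its `options(...)` call alongside the job's existing Hudi options.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical helper: builds the extra writer option discussed in this thread.
public class HudiDebugOptions {

    // Returns the option that switches the cleaner from async to inline (synchronous).
    static Map<String, String> syncCleanOptions() {
        Map<String, String> opts = new HashMap<>();
        // Key and value taken from the suggestion above.
        opts.put("hoodie.clean.async", "false");
        return opts;
    }

    public static void main(String[] args) {
        // Print the options so they can be checked before being passed to the writer.
        syncCleanOptions().forEach((k, v) -> System.out.println(k + "=" + v));
    }
}
```

With the cleaner running inline, a cleaner failure should surface in the write path itself rather than in a background thread, which makes the original stack trace easier to find.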

@bvaradar: once you respond, can you please remove the "awaiting-user-response" label from the issue? If possible, add the "awaiting-community-help" label.

@liijiankang: No, my intention was to make the cleaner synchronous so that the Spark job fails immediately when it encounters the exception. This is purely to debug the issue, not a solution.

@bvaradar I set hoodie.clean.async to false and this exception did not occur again.

@liijiankang: This exception is also seen when shutting down a service that has async threads running. With async cleaning, a separate executor service is set up to handle cleaning. Is it possible that you pressed Ctrl-C and then saw this exception?
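The shutdown scenario described above can be reproduced with a minimal Java sketch that uses only `java.util.concurrent`. This is not Hudi's cleaner code; the class, method, and variable names are hypothetical, and the sketch only illustrates the general mechanism: a task submitted to a pool that has already been shut down is rejected.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.RejectedExecutionException;

// Hypothetical demo: submitting work to an executor after it has been shut down.
public class RejectedAfterShutdownDemo {

    // Returns true if the late submission was rejected.
    static boolean submitAfterShutdown() {
        // Stand-in for a dedicated pool that runs background cleaning.
        ExecutorService cleanerPool = Executors.newFixedThreadPool(1);

        // Simulates the service being stopped while more work is still to be scheduled.
        cleanerPool.shutdown();

        try {
            // The default rejection policy (AbortPolicy) throws for a shut-down pool.
            cleanerPool.submit(() -> System.out.println("cleaning..."));
            return false;
        } catch (RejectedExecutionException e) {
            // The message names the rejected task and the state of the pool.
            System.out.println("Rejected: " + e.getMessage());
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println("rejected = " + submitAfterShutdown());
    }
}
```

If something similar is happening in the streaming job, the useful question is what shut the executor down first, since the rejection itself is usually a follow-on symptom rather than the root cause.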

@bvaradar Our Spark streaming program is submitted to YARN and runs in cluster mode, and Ctrl-C was not pressed while it was running.

@liijiankang Does this issue come back when you turn async cleaning back on? If yes, can you file a JIRA ticket and post the ticket here so we can look into this?

Can you please update the JIRA with the stack trace as well?

Describe the problem you faced

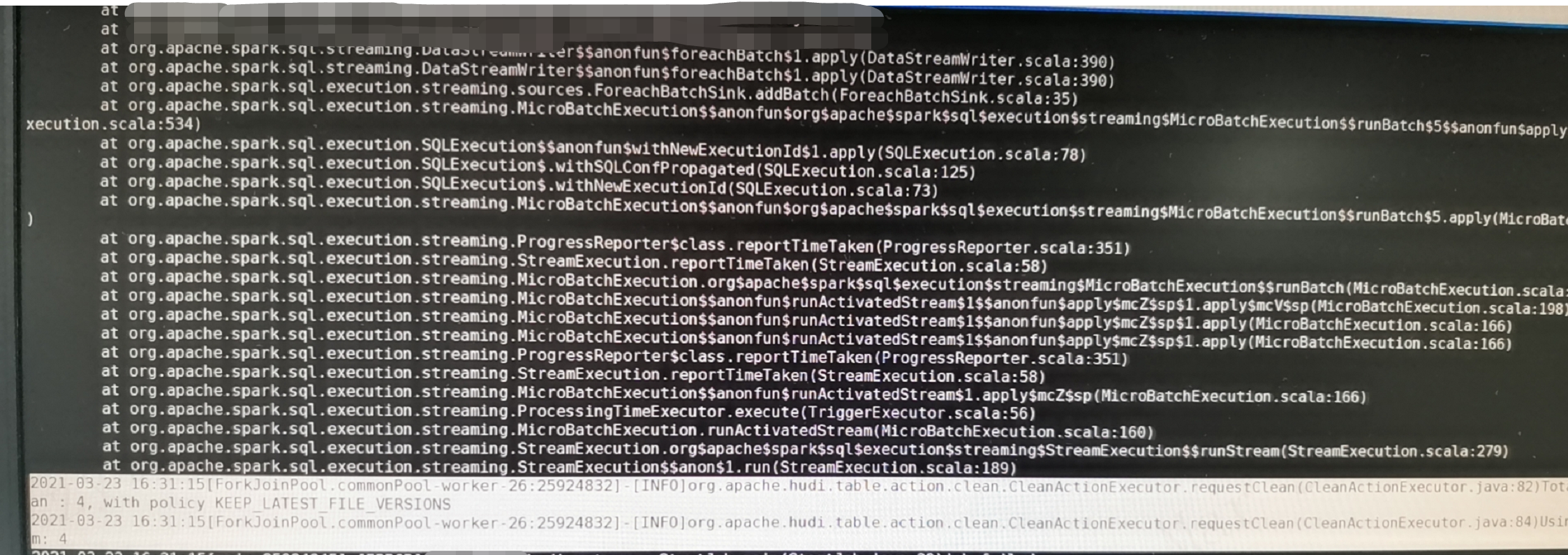

We use Structured Streaming to subscribe to data in Kafka and then write the data to Hudi. The program stops abnormally after running for a period of time.

Environment Description

Hudi version :

Spark version :

Hive version :

Hadoop version :

Storage (HDFS/S3/GCS..) :HDFS

Running on Docker? (yes/no) :no

Add the stacktrace of the error.