
[SUPPORT] Log files are not compacted #2771

Comments


I see you have enabled async compaction. If you enable inline compaction, does it work?
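A minimal sketch of what switching from async to inline compaction could look like with the Spark datasource writer, assuming PySpark and the standard Hudi option keys. Only the compaction-related keys are shown; the table name, record key, precombine field and other required options are omitted, and the dictionary name is illustrative.

```python
# Illustrative Hudi writer options for inline compaction (PySpark).
# Table-specific options (table name, record key, precombine field, ...) are omitted.
inline_compaction_opts = {
    "hoodie.datasource.write.table.type": "MERGE_ON_READ",
    # Run compaction synchronously as part of the write ...
    "hoodie.compact.inline": "true",
    # ... instead of asynchronously.
    "hoodie.datasource.compaction.async.enable": "false",
    # Schedule compaction after this many delta commits (16, as in this issue).
    "hoodie.compact.inline.max.delta.commits": "16",
}

# Usage (requires a SparkSession and a DataFrame `df`; not executed here):
# df.write.format("hudi").options(**inline_compaction_opts).mode("append").save(base_path)
```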


@stackfun I see that you have some conflicting configs: you have enabled … Additionally, I see that you have set the number of delta commits before compaction kicks in to 16. This means compaction will not kick in until you have done 16 delta commits. Even after that, the default CompactionPolicy is …, which will compact at most 500GB in a single compaction (https://github.com/apache/hudi/blob/master/hudi-client/hudi-client-common/src/main/java/org/apache/hudi/config/HoodieCompactionConfig.java#L94). Are you by any chance writing more than 500GB during your 16 delta commits? If yes, some log files may not get compacted.
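The two limits described above can be illustrated with a simplified Python model. It assumes that compaction is scheduled only once the delta-commit count reaches the configured maximum, and that file groups are then picked in descending order of log file size until an IO budget is used up, roughly how Hudi's bounded-IO, log-file-size-based strategy behaves. `FileGroup`, `should_schedule`, `select_for_compaction` and all sizes are hypothetical and not Hudi APIs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FileGroup:
    # Hypothetical summary of one file group with pending log files.
    file_id: str
    log_size_mb: int  # total size of its log files (used for ordering)
    io_mb: int        # estimated read + write IO needed to compact it


def should_schedule(delta_commits_since_last: int, max_delta_commits: int = 16) -> bool:
    """Compaction is only considered once enough delta commits have accumulated."""
    return delta_commits_since_last >= max_delta_commits


def select_for_compaction(groups: List[FileGroup], target_io_mb: int) -> List[str]:
    """Pick groups with the largest log files first until the IO budget is exhausted."""
    ordered = sorted(groups, key=lambda g: g.log_size_mb, reverse=True)
    remaining = target_io_mb
    chosen = []
    for g in ordered:
        chosen.append(g.file_id)
        remaining -= g.io_mb
        if remaining <= 0:
            break  # budget used up: the remaining groups wait for a later compaction
    return chosen


# Toy example: two groups with large logs and one with a few small upserts.
groups = [
    FileGroup("fg-1", log_size_mb=300, io_mb=300_000),
    FileGroup("fg-2", log_size_mb=200, io_mb=250_000),
    FileGroup("fg-3", log_size_mb=5, io_mb=10_000),
]

print(should_schedule(15))                                    # False
print(select_for_compaction(groups, target_io_mb=500 * 1024))  # ['fg-1', 'fg-2']
```

In this toy run `fg-3`, the group with only a few small log files, is left out because the larger groups consume the 500GB budget first; a larger budget lets it fit.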


Setting the "hoodie.compaction.target.io" config worked like a charm. Thanks a lot!
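For reference, a sketch of raising the compaction IO budget in the same writer-options style. The value is expressed in MB; 2048GB is only an illustrative figure (the issue does not say which value was used), and the variable names are hypothetical.

```python
# Hypothetical budget; choose a value larger than the data written between compactions.
TARGET_IO_GB = 2048

compaction_opts = {
    # hoodie.compaction.target.io is in MB; the default corresponds to 500GB (500 * 1024 MB).
    "hoodie.compaction.target.io": str(TARGET_IO_GB * 1024),
}

# Usage (not executed here): merge into the other writer options, e.g.
# df.write.format("hudi").options(**compaction_opts, **other_opts).mode("append").save(base_path)
```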

Describe the problem you faced

Sometimes, log files containing only upserts are not compacted in our MOR (merge-on-read) table.

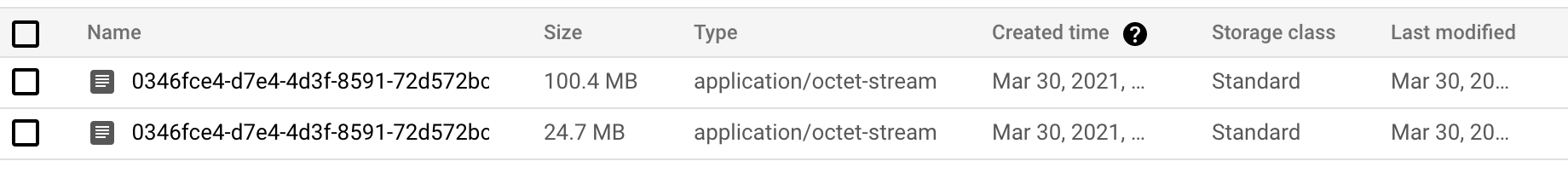

The first image shows the compacted parquet files; note that they were created on March 30th.

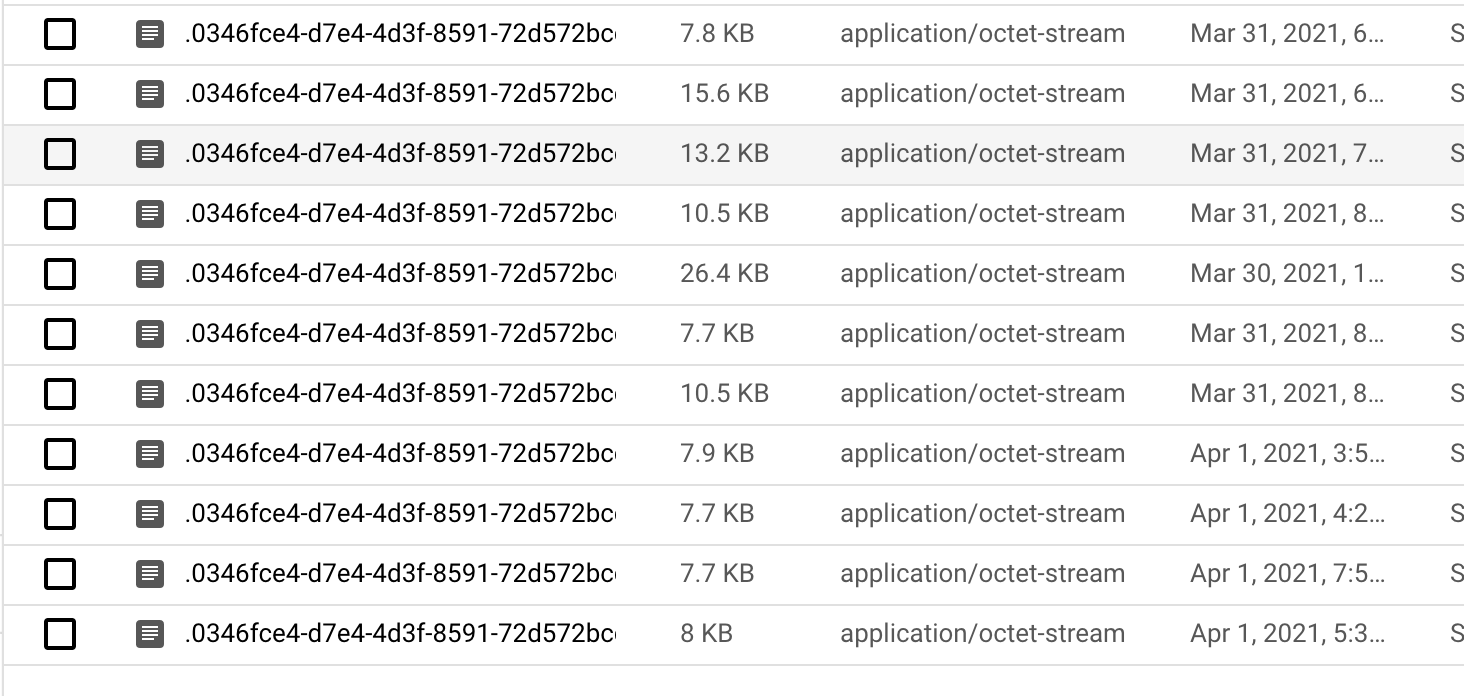

The second image shows the log files, which were created after March 30th but have never been compacted. In our use case, we have a lot of small random upserts.

Here's our Hudi configuration.

To Reproduce

We are currently trying to reproduce this with a small example, but have not succeeded yet.

Expected behavior

Compaction runs on this file group.

Environment Description

Hudi version : 0.7.0 (with [HUDI-1496] Fixing detection of GCS FileSystem #2500 merged)

Spark version : 2.4.7

Hive version : 2.3.7

Hadoop version : 2.9.2

Storage (HDFS/S3/GCS..) : GCS

Running on Docker? (yes/no) : no

GCP Dataproc: 1.4
