
After a period of stress testing, TM-side RPC calls to the server time out #2193

Comments

|

@ppj19891020 Is the server retry time configured? |

|

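@slievrly The version currently in use is the 1.1.0 snapshot package (sent by Qingming yesterday). The configuration file does not set these values, so the defaults should apply (CLIENT_LOCK_RETRY_TIMES: 30, CLIENT_LOCK_RETRY_INTERNAL: 10). Note: yesterday I tried version 1.0.0, where I had modified this configuration (the interval should be 500 ms, with 60 retries). Later Qingming sent me a 1.1.0 snapshot package in which I did not modify this configuration, and the problem still occurred last night. This afternoon I added a debug port on the server side and it has been running for a little over an hour; the problem has not appeared yet. |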

|

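@slievrly This afternoon, after running for about three and a half hours, yesterday's problem appeared again. This time I debugged the server-side RPC request dispatch code and found no errors; after stepping through breakpoints manually a few times, the system suddenly recovered. I mainly debugged the io.seata.core.rpc.netty.AbstractRpcRemoting#dispatch method. I just counted the business threads and there are exactly 100, and the business thread pool's maximum queue size is 20000. I suspect this value is too large, so requests pile up in the queue. Normally a single request needs about 6 RPCs (apply for an xid + register branch transactions + commit branch transactions + notify the TC to commit/roll back). We are currently testing with 100 threads calling concurrently. Following this reasoning, the next time the problem occurs I will wait a while and see whether it recovers on its own.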
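The backlog suspicion above can be illustrated with a small sketch. A pool whose queue is far larger than what its workers can drain accepts every task immediately, so callers only notice trouble when their own RPC timeout expires. The class name, pool size, queue capacity, and blocking tasks below are scaled-down assumptions for illustration, not Seata's actual thread pool settings.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

public class QueueBacklogSketch {

    /**
     * Submits `tasks` jobs that block until released to a fixed pool of
     * `workers` threads, and returns how many jobs are waiting in the queue.
     * Assumes tasks <= workers + queueCapacity, otherwise execute() rejects.
     */
    static int queuedAfterSubmit(int workers, int queueCapacity, int tasks) {
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                workers, workers, 0L, TimeUnit.MILLISECONDS,
                new ArrayBlockingQueue<>(queueCapacity));
        CountDownLatch release = new CountDownLatch(1);
        CountDownLatch started = new CountDownLatch(Math.min(workers, tasks));
        try {
            for (int i = 0; i < tasks; i++) {
                pool.execute(() -> {
                    started.countDown();   // signal that a worker picked this job up
                    awaitQuietly(release); // simulate a slow downstream call
                });
            }
            awaitQuietly(started);         // every worker is now busy
            return pool.getQueue().size(); // the rest is silently queued
        } finally {
            release.countDown();           // let all jobs finish
            pool.shutdown();
        }
    }

    private static void awaitQuietly(CountDownLatch latch) {
        try {
            latch.await();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
    }

    public static void main(String[] args) {
        // 4 workers, room for 100 queued jobs, 50 submitted: 46 wait in the queue.
        System.out.println(queuedAfterSubmit(4, 100, 50));
    }
}
```

None of the 50 submissions is rejected, which is why a very large queue can hide a slow consumer until client-side timeouts start to fire.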

|

@ppj19891020 As for the sample code, I looked at the relatively simple localTCC test. The stack above looks normal, and the threads in the WAITING state are also normal: they are thread pool workers waiting on the blockingQueue for tasks. Several questions need to be confirmed:

|

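Checking the connections between the server and the TM and RM sides shows that the connections are established. Seata server connection info for RM and TM: TM and RM connection info for the Seata server: Note: the "heartbeat=false" parameter means that communication heartbeat detection is off, which has nothing to do with the problem. Let me try! |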

|

@slievrly Today I hit the TM client timeout problem again; restarting the TM client made it recover. |

|

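The root cause has now been identified: the DBCP data source was in use. The project configured only maxConn and not minConn, so minConn fell back to its default value of 1. Because the data source initialization uses minConn for both the minimum idle count and the maximum idle count, under heavy concurrency the pool keeps only 1 idle database connection, and new connections are constantly created and destroyed. This lowers the server's TPS and eventually leaves all processing threads waiting until they time out. For DBCP data source performance tuning, see the article.

    public class DbcpDataSourceGenerator extends AbstractDataSourceGenerator {

        @Override
        public DataSource generateDataSource() {
            BasicDataSource ds = new BasicDataSource();
            ds.setDriverClassName(getDriverClassName());
            ds.setUrl(getUrl());
            ds.setUsername(getUser());
            ds.setPassword(getPassword());
            ds.setInitialSize(getMinConn());
            ds.setMaxActive(getMaxConn());
            // These two parameters are the main issue: both are set from minConn
            ds.setMinIdle(getMinConn());
            ds.setMaxIdle(getMinConn());
            ds.setMaxWait(5000);
            ds.setTimeBetweenEvictionRunsMillis(120000);
            ds.setNumTestsPerEvictionRun(1);
            ds.setTestWhileIdle(true);
            ds.setValidationQuery(getValidationQuery(getDBType()));
            ds.setConnectionProperties("useUnicode=yes;characterEncoding=utf8;socketTimeout=5000;connectTimeout=500");
            return ds;
        }
    }

Currently this part integrates both the Druid and DBCP data sources. Keeping the configuration uniform across them means the same settings behave differently for each data source. Consider adding data-source-specific parameter settings, or documenting the difference so that developers are aware of it. |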
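To make the churn described above concrete, here is a minimal toy simulation. It is not DBCP itself: `ToyPool`, `connectionsCreated`, and the round-based workload are hypothetical names and assumptions chosen only to show how a maximum idle count of 1 forces almost every borrow under load to open a new connection.

```java
import java.util.ArrayDeque;
import java.util.Deque;

public class IdleCapChurnSketch {

    /** Toy pool that only counts connection creations and destructions. */
    static final class ToyPool {
        private final int maxIdle;
        private final Deque<Object> idle = new ArrayDeque<>();
        int created;
        int destroyed;

        ToyPool(int maxIdle) {
            this.maxIdle = maxIdle;
        }

        Object borrow() {
            Object conn = idle.poll();
            if (conn == null) {      // nothing idle: open a new "connection"
                conn = new Object();
                created++;
            }
            return conn;
        }

        void giveBack(Object conn) {
            if (idle.size() < maxIdle) {
                idle.push(conn);     // keep it for reuse
            } else {
                destroyed++;         // idle cap reached: close it
            }
        }
    }

    /** Each round borrows `concurrency` connections at once, then returns them all. */
    static int connectionsCreated(int maxIdle, int concurrency, int rounds) {
        ToyPool pool = new ToyPool(maxIdle);
        Object[] inUse = new Object[concurrency];
        for (int r = 0; r < rounds; r++) {
            for (int i = 0; i < concurrency; i++) {
                inUse[i] = pool.borrow();
            }
            for (int i = 0; i < concurrency; i++) {
                pool.giveBack(inUse[i]);
            }
        }
        return pool.created;
    }

    public static void main(String[] args) {
        System.out.println(connectionsCreated(1, 100, 10));   // 991: nearly every borrow opens a connection
        System.out.println(connectionsCreated(100, 100, 10)); // 100: connections are reused after round 1
    }
}
```

Under these assumptions, one possible adjustment in the generator is to pass a larger value, such as `getMaxConn()`, to `setMaxIdle`, so that connections returned under load stay in the pool. Whether that matches the fix eventually adopted in Seata is not stated in this thread.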

Ⅰ. Issue Description

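With the Seata 1.0.0 release package, during a long-running stress test in TCC mode, RPC communication between the TM and the server suddenly starts timing out after a little over an hour of load, causing all requests to fail.

Test code is available at: https://github.com/ppj19891020/seata-tcc-feign-demo

Test scenario: simulated calls to the order and inventory services in Spring Cloud.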

Ⅱ. Describe what happened

If there is an exception, please attach the exception trace:

Error log on the TM side:

The server produces a large number of log entries like this:

Thread stack information

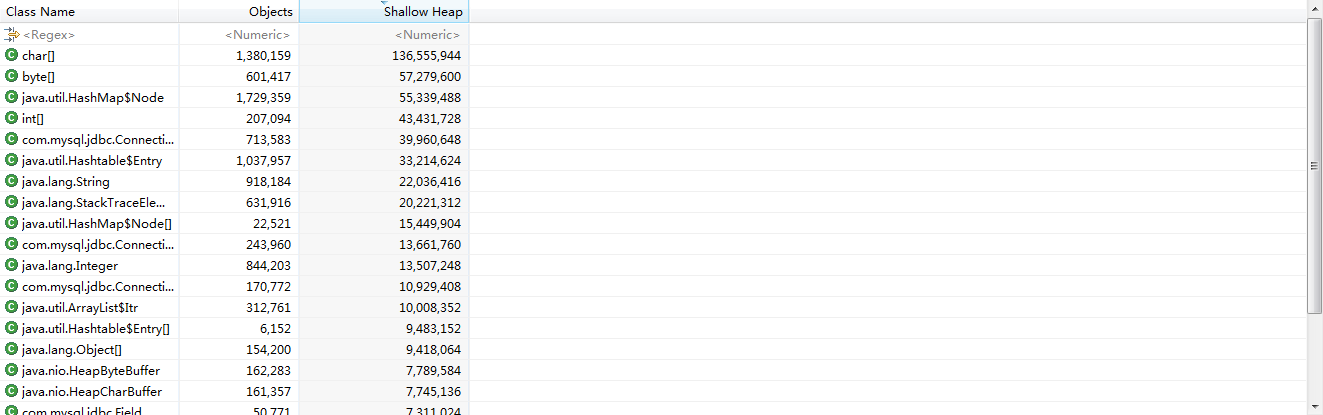

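Abnormal data in the server-side MySQL tables:

global_table contains 11 records that did not complete; none of them has branch transactions, and their statuses are 0 and 9.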

Ⅲ. Describe what you expected to happen

After a period of stress testing, TM-side RPC calls to the server time out.

Ⅳ. How to reproduce it (as minimally and precisely as possible)

Run a long stress test in TCC mode.

Ⅴ. Anything else we need to know?

Ⅵ. Environment:
