I have searched the existing feature requests and found no similar one.

Description

Summary

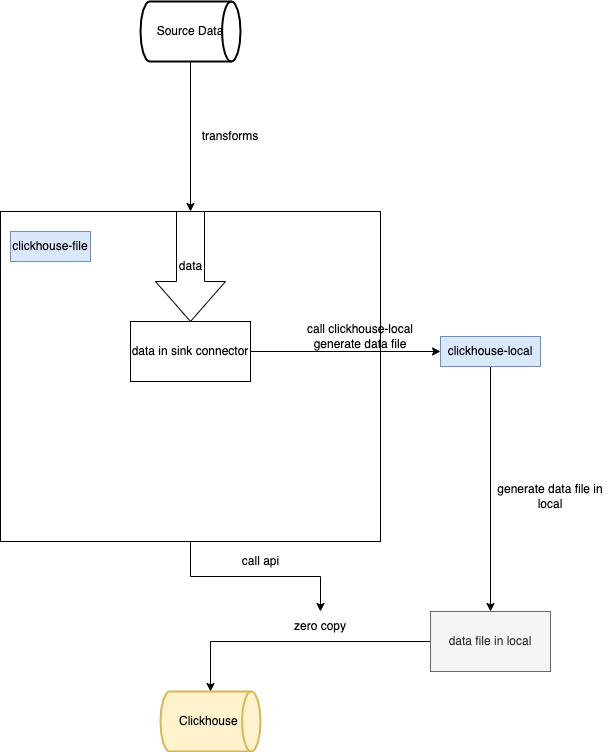

When massive amounts of data are written to ClickHouse, traditional JDBC cannot handle such a large volume. Similar to HBase's bulk load function, SeaTunnel could support writing data files directly to ClickHouse.

About the question of how to send files from the Spark node to the ClickHouse node:

I think we could use rsync, with a config file to store the password.

So I think we need a document describing how to use it.

rsync can run over the SSH protocol or against a configured rsync daemon. We could provide a generic rsync daemon config that is compatible with both the Ordinary and the Atomic database engines.
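To make the two transfer modes discussed above concrete, here is a minimal sketch of how the rsync command line could be assembled for each. It is not SeaTunnel code: the function name `build_rsync_command`, the host `ch-node-1`, the module `clickhouse_data`, and all paths are hypothetical placeholders. It only uses standard rsync options (`-a`, `--partial`, `-e ssh`, `--password-file`) and builds the argument list without running anything.

```python
import shlex
from typing import List, Optional


def build_rsync_command(
    local_path: str,
    host: str,
    remote_path: str,
    user: str,
    mode: str = "ssh",
    module: Optional[str] = None,
    password_file: Optional[str] = None,
) -> List[str]:
    """Return the argv list for an rsync transfer (nothing is executed)."""
    cmd = ["rsync", "-a", "--partial"]
    if mode == "ssh":
        # rsync over SSH: authentication is left to SSH (keys or agent).
        cmd += ["-e", "ssh"]
        dest = f"{user}@{host}:{remote_path}"
    elif mode == "daemon":
        # rsync daemon: files go to a named module from the daemon config.
        if module is None:
            raise ValueError("daemon mode needs an rsync module name")
        if password_file is not None:
            # The password is read from a local file instead of a prompt.
            cmd.append(f"--password-file={password_file}")
        dest = f"rsync://{user}@{host}/{module}/{remote_path.lstrip('/')}"
    else:
        raise ValueError(f"unknown mode: {mode}")
    cmd += [local_path, dest]
    return cmd


# Hypothetical values, for illustration only.
ssh_cmd = build_rsync_command(
    "/tmp/parts/", "ch-node-1", "/data/detached/", "clickhouse"
)
daemon_cmd = build_rsync_command(
    "/tmp/parts/", "ch-node-1", "detached/", "clickhouse",
    mode="daemon", module="clickhouse_data",
    password_file="/etc/seatunnel/rsync.secret",
)
print(shlex.join(ssh_cmd))
print(shlex.join(daemon_cmd))
```

With the daemon variant, the password file lives only on the sending node and should be readable by its owner alone. The SSH variant avoids a stored password by relying on key-based login.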

Plan

This is the original plan: ClickHouse/ClickHouse#10473

Our plan:

Details:

Options

Some problems

Usage Scenario

No response

Related issues

No response

Are you willing to submit a PR?

Code of Conduct
