Module 'quant_cuda' has no attribute 'vecquant4matmul' #53

Comments

|

Try this: |

I tried that and still ran into the same issue.

|

Are you sure you ran it exactly like I showed? This is wrong syntax: Please show the output of running: |

|

Sure I tried that and it worked. While trying the demo: The bug seems to be referenced here |

|

I think the reason for the error is that you have another version of quant-cuda already installed, likely from GPTQ-for-LLaMa. You should first do:

However, you can't use CUDA AutoGPTQ anyway. You just told auto-gptq to install without CUDA (BUILD_CUDA_EXT=0), and you need to do that because your CUDA Toolkit version doesn't match the version used to compile pytorch.

If you want to fix that, you could uninstall CUDA Toolkit 12.1 and install CUDA Toolkit 11.8 instead. Or you could build pytorch from source, so it can use CUDA Toolkit 12.1. There are no pre-built pytorch binaries for CUDA 12.x yet. Instructions for doing that are on the pytorch Github - it takes a while though, at least an hour to build.

Or forget about CUDA and use Triton instead. Here is simple example code to use Triton to load this GPTQ model: https://huggingface.co/TheBloke/stable-vicuna-13B-GPTQ

    from transformers import AutoTokenizer, pipeline, logging
    from auto_gptq import AutoGPTQForCausalLM, BaseQuantizeConfig

    quantized_model_dir = "/path/to/stable-vicuna-13B-GPTQ"

    tokenizer = AutoTokenizer.from_pretrained(quantized_model_dir, use_fast=True)

    def get_config(has_desc_act):
        return BaseQuantizeConfig(
            bits=4,  # quantize model to 4-bit
            group_size=128,  # it is recommended to set the value to 128
            desc_act=has_desc_act
        )

    def get_model(model_base, triton, model_has_desc_act):
        return AutoGPTQForCausalLM.from_quantized(
            quantized_model_dir,
            use_safetensors=True,
            model_basename=model_base,
            device="cuda:0",
            use_triton=triton,
            quantize_config=get_config(model_has_desc_act)
        )

    # Prevent printing spurious transformers error
    logging.set_verbosity(logging.CRITICAL)

    prompt='''### Human: Write a story about llamas
    ### Assistant:'''

    model = get_model("stable-vicuna-13B-GPTQ-4bit.compat.no-act-order", triton=True, model_has_desc_act=False)

    pipe = pipeline(
        "text-generation",
        model=model,
        tokenizer=tokenizer,
        max_length=512,
        temperature=0.7,
        top_p=0.95,
        repetition_penalty=1.15
    )

    print("### Inference:")
    print(pipe(prompt)[0]['generated_text'])
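The two causes suggested above (a stale `quant_cuda` build shadowing the expected one, and a CUDA Toolkit that differs from the one pytorch was built with) can be checked before reinstalling anything. The following is only a diagnostic sketch, not part of AutoGPTQ: the helper names `describe_extension` and `same_cuda_major` are made up for illustration, and comparing only the major CUDA version is an assumption about which mismatch matters.

```python
import importlib
import importlib.util


def describe_extension(module_name, attr_name):
    """Report where a module is loaded from and whether it exposes attr_name."""
    spec = importlib.util.find_spec(module_name)
    if spec is None:
        return f"{module_name}: not installed"
    try:
        module = importlib.import_module(module_name)
    except ImportError as exc:
        return f"{module_name}: found at {spec.origin} but failed to import ({exc})"
    status = "present" if hasattr(module, attr_name) else "MISSING"
    return f"{module_name}: loaded from {spec.origin}; {attr_name} is {status}"


def same_cuda_major(torch_cuda, toolkit_cuda):
    """Assumption: only the major version has to agree, e.g. '11.8' vs '12.1' differ."""
    return torch_cuda.split(".")[0] == toolkit_cuda.split(".")[0]


# Importing torch first matters: compiled torch extensions usually need it loaded.
try:
    import torch
    print("pytorch was built against CUDA:", torch.version.cuda)
except ImportError:
    print("pytorch is not installed in this environment")

print(describe_extension("quant_cuda", "vecquant4matmul"))
print("11.8 vs 12.1 same major:", same_cuda_major("11.8", "12.1"))
```

If the report shows `quant_cuda` loaded from an unexpected location with the attribute missing, that would be consistent with the leftover-install explanation given above.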

|

It seems the repo path is wrong: |

|

My example code assumes the model is downloaded locally. Download the model, then update the model path in the script (quantized_model_dir in my example). AutoGPTQ does not yet support loading a model directly from Hugging Face.

|

@TheBloke That's been my concern from the start. I was trying versions of Alpaca, GPT-J, Bloom, OPT, and Pegasus but was not able to load them from huggingface.

|

@TheBloke Is there an open PR to do this? |

|

No, no-one is looking at it yet to my knowledge. Remember that AutoGPTQ is still new and under active development. Such improvements will come over time. It's easy to download the model first. If you want a quick way to do that, clone In this example, using my example code you would then set quantized_model_dir = "/workspace/models/TheBloke_stable-vicuna-13B-GPTQ" |
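One possible way to download the model first is the `huggingface_hub` package. This is a substitute technique, not necessarily the method referred to in the comment above. The helper names `local_model_dir` and `download_model` are hypothetical, and the `owner_name` folder convention is only inferred from the example path `/workspace/models/TheBloke_stable-vicuna-13B-GPTQ`.

```python
def local_model_dir(repo_id, models_root):
    """Map 'owner/name' to '<models_root>/owner_name' (convention assumed from the thread)."""
    return f"{models_root.rstrip('/')}/{repo_id.replace('/', '_')}"


def download_model(repo_id, models_root):
    """Fetch every file of a Hub repo into a local folder and return that folder."""
    # Imported here so the path helper works even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    target = local_model_dir(repo_id, models_root)
    snapshot_download(repo_id=repo_id, local_dir=target)
    return target
```

Under those assumptions, `download_model("TheBloke/stable-vicuna-13B-GPTQ", "/workspace/models")` would return the directory to assign to `quantized_model_dir` in the example code.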

|

@TheBloke Sure will try that. While loading |

|

I don't think so, no. Are you trying to use the CPU only, or do you have a GPU for inference? |

|

@TheBloke I am trying with CPU only, but even with GPU only it does not work. I think it requires 28GB of GPU RAM to load?

|

CPU only will definitely be terribly slow. For a 13B 4bit model like stable-vicuna-13B you need around 9GB VRAM. Nothing like 28GB - that would be for an unquantised fp16 model. |
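A rough back-of-the-envelope check of those figures, counting weights only. The nominal parameter count of 13 billion is an assumption, and the gap between these numbers and the ~9GB and 28GB quoted in the thread is presumably runtime overhead (quantization metadata, activations, CUDA context), which this sketch does not model.

```python
def weight_gigabytes(n_params, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


n_params = 13e9  # assumed nominal size of a "13B" model

fp16_gb = weight_gigabytes(n_params, 16)
int4_gb = weight_gigabytes(n_params, 4)

print(f"fp16 weights:  {fp16_gb:.1f} GB")
print(f"4-bit weights: {int4_gb:.1f} GB")
```

The 4x ratio between the two results is the point: quantizing from 16 bits to 4 bits per weight is what brings a 13B model within reach of a card with around 14GB of VRAM.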

|

Last column is used VRAM |

|

I have 14GB of VRAM, but it still is not able to run?

|

Please show the full output of running the script |

|

Downloading quantized models from the HF Hub is a feature that I plan to add in v0.2.0. I'm now writing the feature plan for v0.2.0 and v0.3.0; anyone interested can see it in

|

After running the model I got this: |

|

This is wrong: It should be Please use the example code I provided as a base. |

|

@TheBloke I had taken it from the huggingface model card. Do all your models require AutoGPTQ, or do you also support huggingface transformers?

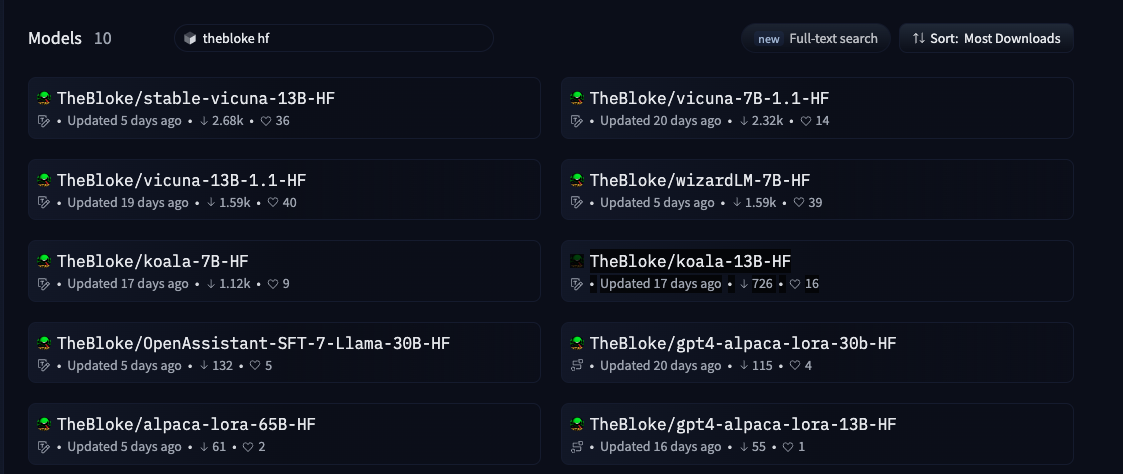

I have many models that support unquantised transformers inference. But they will need a lot more VRAM. I don't think you can load any of them in fp16 in 16GB, but you could load them in 8bit. Here are my HF models: https://huggingface.co/models?search=thebloke%20hf

A 7B fp16 model may load in 16GB VRAM. A 13B fp16 definitely will not. But either way I recommend you add

If you are loading unquantised HF models then that is not relevant to AutoGPTQ. For further support with that, please open a Discussion on the HF model page for whichever of my models you try to use.

|

Sure, I have used bitsandbytes and accelerate, and have experimented with Alpaca, OPT, and GPT-J using their 8bit versions.

Unable to load the package after:

Ran into this error:
