Regarding the ray color calculation from the MLP output. #171

Comments

I also have the same question; I hope someone can explain this.

I tried out NeRF training based on what I understood from the paper. It's just a three-line change compared to the provided implementation. Nevertheless, the NeRF model seems to converge regardless of this difference. Based on my limited testing, though, convergence seems to be a bit unstable in the summation-based implementation. Again, this needs to be tested properly; I only ran the code a few times to check that everything works. Other than that, I could not find any details regarding this. If you find an explanation, please let me know.

Thank you very much! Closing the issue since the question got answered.

Hi,

I was trying to rewrite NeRF from scratch to understand the technique better, and during the implementation I ran into a question I need help with. I am new to computer graphics, so there may be a misunderstanding on my end. Please let me know if that's the case.

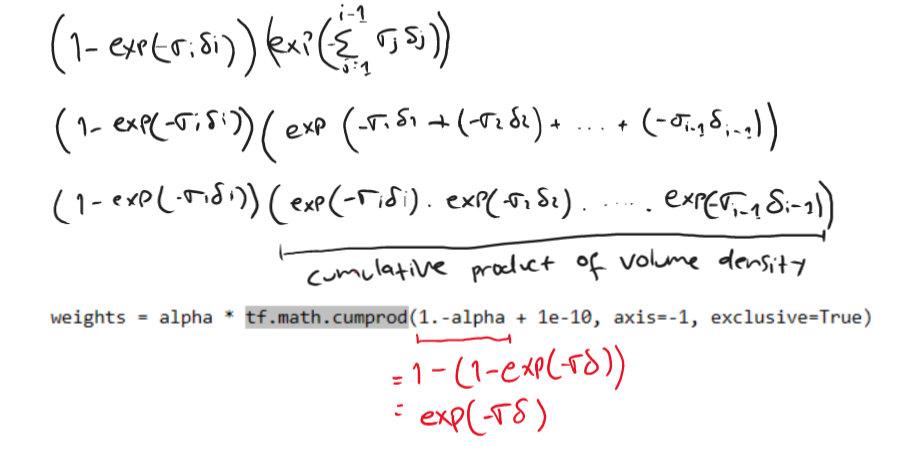

If I understood the paper correctly, in NeRF we are supposed to calculate the pixel colors of an image by integrating, along each ray, the sample point colors (c) and volume density values (σ) given by the MLP, using the formula provided in the paper.
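For reference, this is the volume rendering integral as I read it in the paper. The notation is an assumption on my part: r(t) is the point at depth t along the ray, d the viewing direction, and t_n, t_f the near and far bounds.

```latex
C(\mathbf{r}) = \int_{t_n}^{t_f} T(t)\,\sigma(\mathbf{r}(t))\,\mathbf{c}(\mathbf{r}(t), \mathbf{d})\,dt,
\qquad
T(t) = \exp\!\left(-\int_{t_n}^{t} \sigma(\mathbf{r}(s))\,ds\right)
```

Here T(t) is the accumulated transmittance, i.e. the probability that the ray travels from t_n to t without being absorbed.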

Since we can't evaluate the integral analytically, we use the formula below to estimate the ray color.
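As far as I can tell, the discrete estimate in the paper has the following form, where N is the number of samples along the ray and δ_i is the distance between adjacent samples:

```latex
\hat{C}(\mathbf{r}) = \sum_{i=1}^{N} T_i\,\bigl(1 - \exp(-\sigma_i \delta_i)\bigr)\,\mathbf{c}_i,
\qquad
T_i = \exp\!\Bigl(-\sum_{j=1}^{i-1} \sigma_j \delta_j\Bigr),
\qquad
\delta_i = t_{i+1} - t_i
```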

In the implementation provided in this repo, we first calculate the distances between adjacent sampled points (δ).
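A minimal sketch of that step in plain Python, for a single ray. The function name `sample_distances` and the `far_pad` parameter are hypothetical, and pairing the last sample with a very large distance is an assumption about how the final interval is usually handled, not something stated above.

```python
def sample_distances(z_vals, far_pad=1e10):
    """Return delta_i = t_{i+1} - t_i for sorted sample depths along one ray.

    The last sample has no successor, so it is given a very large
    distance (far_pad). This padding is an assumption, not the paper's text.
    """
    deltas = [b - a for a, b in zip(z_vals[:-1], z_vals[1:])]
    return deltas + [far_pad]


z_vals = [2.0, 2.5, 3.5, 6.0]  # illustrative depths along one ray
dists = sample_distances(z_vals)
print(dists)
```

The result has one distance per sample, so it can be multiplied elementwise with the per-sample densities.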

Then we calculate a value called alpha:

`alpha = 1.0 - tf.exp(-act_fn(raw) * dists)`

which I think is equivalent to the 1 − exp(−σ_i δ_i) part of the formula above.

Then comes my problem.

`weights = alpha * tf.math.cumprod(1.-alpha + 1e-10, axis=-1, exclusive=True)`

`rgb_map = tf.reduce_sum(weights[..., None] * rgb, axis=-2)  # [N_rays, 3]`

As per my understanding, the `weights` variable is equal to the T_i(1 − exp(−σ_i δ_i)) part of the formula (not exactly). But shouldn't it be implemented roughly like below, according to the paper?

Why does this difference exist? Is my understanding wrong, or am I missing something?
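One way to compare the two formulations is to compute both on a single ray. Since 1 − alpha_j = exp(−σ_j δ_j), the exclusive cumulative product of (1 − alpha) should equal exp(−Σ_{j<i} σ_j δ_j) = T_i up to the small `1e-10` term. The sketch below is plain Python rather than TensorFlow; the function names and the sample values are hypothetical and chosen only for illustration.

```python
import math


def weights_cumprod(sigmas, deltas, eps=1e-10):
    """Weights via an exclusive cumulative product of (1 - alpha + eps)."""
    weights, transmittance = [], 1.0
    for sigma, delta in zip(sigmas, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)
        weights.append(alpha * transmittance)  # uses the product over j < i only
        transmittance *= 1.0 - alpha + eps
    return weights


def weights_expsum(sigmas, deltas):
    """Weights via T_i = exp(-sum_{j<i} sigma_j * delta_j)."""
    weights, optical_depth = [], 0.0
    for sigma, delta in zip(sigmas, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)
        weights.append(math.exp(-optical_depth) * alpha)
        optical_depth += sigma * delta  # running sum over j < i for the next sample
    return weights


sigmas = [0.1, 0.5, 2.0, 0.3]    # illustrative densities
deltas = [0.5, 1.0, 2.5, 1e10]   # illustrative distances, last one padded
w_prod = weights_cumprod(sigmas, deltas)
w_sum = weights_expsum(sigmas, deltas)
max_diff = max(abs(a - b) for a, b in zip(w_prod, w_sum))
print(max_diff)
```

On these values the two sets of weights differ only by an amount on the order of the `eps` term, which suggests the product form is a rewriting of the same quantity rather than a different formula.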
