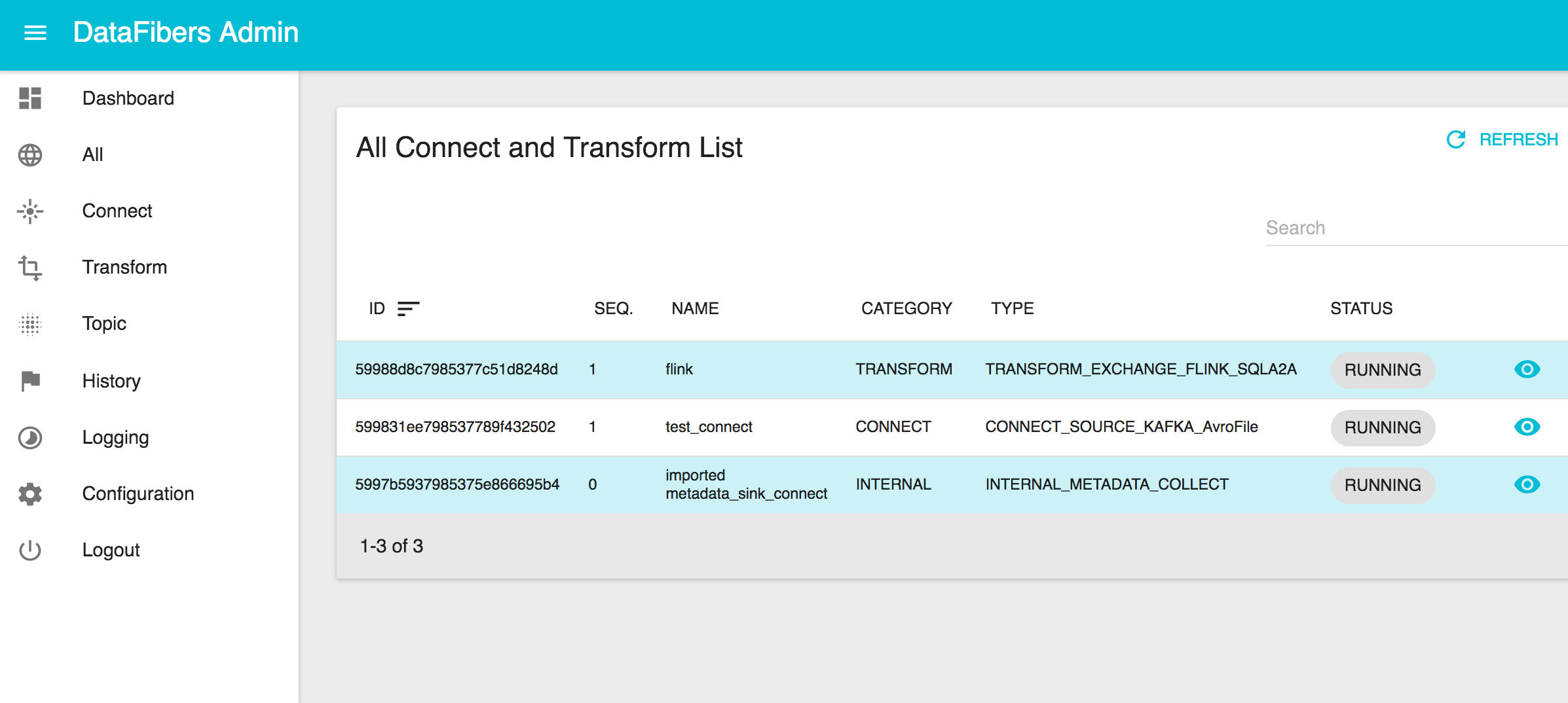

DataFibers (DF) is a pure stream processing application built on Kafka and Flink. The DF processor defines two components to handle streaming ETL (Extract, Transform, and Load):

- Connects leverages the Kafka Connect REST API on Confluent v3.0.0 to land data into, or publish data out of, Apache Kafka.

- Transforms leverages a stream processing engine, such as Apache Flink, for data transformation.
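As an illustration of the Connects side, the sketch below builds the kind of Kafka Connect REST requests such a component would issue: listing connectors, checking a connector's status, and creating a connector. It is a hedged sketch, not DataFibers code. It assumes Java 11+ (`java.net.http`), a Connect worker at `http://localhost:8083` (Kafka Connect's default REST port), and a hypothetical `file-source-demo` connector. The requests are only built here; sending them would go through `HttpClient.send`.

```java
import java.net.URI;
import java.net.http.HttpRequest;

/**
 * Sketch of Kafka Connect REST requests a "Connects" component could issue.
 * Assumption: a Connect worker reachable at the given base URL (e.g. http://localhost:8083).
 */
public class ConnectRestSketch {
    private final String baseUrl;

    public ConnectRestSketch(String baseUrl) {
        this.baseUrl = baseUrl;
    }

    /** GET /connectors lists the names of the active connectors. */
    public HttpRequest listConnectors() {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/connectors"))
                .GET()
                .build();
    }

    /** GET /connectors/{name}/status reports the state of a connector and its tasks. */
    public HttpRequest connectorStatus(String name) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/connectors/" + name + "/status"))
                .GET()
                .build();
    }

    /** POST /connectors creates a connector from a JSON body {"name": ..., "config": {...}}. */
    public HttpRequest createConnector(String jsonBody) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/connectors"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(jsonBody))
                .build();
    }

    public static void main(String[] args) {
        ConnectRestSketch connect = new ConnectRestSketch("http://localhost:8083");
        // Hypothetical file-source connector that lands a local file into the "demo" topic.
        String body = "{\"name\":\"file-source-demo\",\"config\":{"
                + "\"connector.class\":\"org.apache.kafka.connect.file.FileStreamSourceConnector\","
                + "\"file\":\"/tmp/input.txt\",\"topic\":\"demo\"}}";
        HttpRequest create = connect.createConnector(body);
        // prints: POST http://localhost:8083/connectors
        System.out.println(create.method() + " " + create.uri());
        // To actually send it: HttpClient.newHttpClient().send(create, HttpResponse.BodyHandlers.ofString())
    }
}
```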

Build the project with:

mvn clean package

The application is tested using vertx-unit.

The application is packaged as a fat jar, using the Maven Shade Plugin.

Once packaged, launch the fat jar in one of the following ways:

- Default: run with no parameters to launch in standalone mode with the web UI.

java -jar df-data-service-1.0-SNAPSHOT-fat.jar

- Full-parameter mode:

java -jar df-data-service-1.0-SNAPSHOT-fat.jar <DEPLOY_OPTION> <WEB_UI_OPTION>

<DEPLOY_OPTION> accepts the following values:

- "c": Deploy in cluster mode.

- "s": Deploy in standalone mode.

<WEB_UI_OPTION> accepts the following values:

- "ui": Deploy with the web UI.

- "no-ui": Deploy without the web UI.
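A minimal sketch of how the two launch arguments could be interpreted, following the defaults documented above (no arguments means standalone mode with the web UI). The class and enum names are hypothetical, and the actual DataFibers launcher may parse its arguments differently. Because only the zero- and two-argument forms are documented, this sketch rejects any other argument count.

```java
/** Hypothetical parser for: java -jar df-data-service-1.0-SNAPSHOT-fat.jar [<DEPLOY_OPTION> <WEB_UI_OPTION>] */
public class LaunchOptions {
    enum DeployMode { CLUSTER, STANDALONE }

    final DeployMode deployMode;
    final boolean webUi;

    LaunchOptions(DeployMode deployMode, boolean webUi) {
        this.deployMode = deployMode;
        this.webUi = webUi;
    }

    static LaunchOptions parse(String[] args) {
        // Documented default: no parameters -> standalone mode with the web UI.
        if (args.length == 0) {
            return new LaunchOptions(DeployMode.STANDALONE, true);
        }
        if (args.length != 2) {
            throw new IllegalArgumentException(
                    "Expected either no arguments or <DEPLOY_OPTION> <WEB_UI_OPTION>");
        }

        DeployMode mode;
        switch (args[0]) {
            case "c": mode = DeployMode.CLUSTER; break;
            case "s": mode = DeployMode.STANDALONE; break;
            default: throw new IllegalArgumentException("Unknown DEPLOY_OPTION: " + args[0]);
        }

        boolean ui;
        switch (args[1]) {
            case "ui": ui = true; break;
            case "no-ui": ui = false; break;
            default: throw new IllegalArgumentException("Unknown WEB_UI_OPTION: " + args[1]);
        }
        return new LaunchOptions(mode, ui);
    }

    public static void main(String[] args) {
        LaunchOptions opts = parse(args);
        System.out.println("mode=" + opts.deployMode + ", webUi=" + opts.webUi);
    }
}
```

For example, `parse(new String[]{"c", "no-ui"})` yields cluster mode with the web UI disabled.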

For more details, see the DataFibers complete guide: https://www.gitbook.com/read/book/datafibers/datafibers-complete-guide

To-do list:

- Fetch all installed connectors/plugins at a regular frequency

- Report connector and job status

- Add an initial method to import all available, paused, and running connectors from Kafka Connect

- Add Flink Table API engine

- Add an in-memory lookup (LKP)

- Add logging URLs for Connects and Transforms

- Add a generic function to validate connectors before creation

- Support submitting other job actions, such as start and hold

- Add Spark Structured Streaming

- Topic visualization

- Launch third-party jars

- Job-level control and scheduling

- Job metrics
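The first item in the list above, fetching installed connector plugins at a regular frequency, could be approached with a scheduled poller that keeps the last successful snapshot. This is a hypothetical sketch rather than existing DataFibers code: `fetchPlugins` stands in for a call such as Kafka Connect's `GET /connector-plugins`, the polling interval is left to the caller, and Java 11+ is assumed for `List.copyOf`.

```java
import java.util.List;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

/** Hypothetical poller that periodically refreshes a cached list of installed connector plugins. */
public class PluginPoller {
    // Hypothetical fetcher, e.g. a wrapper around Kafka Connect's GET /connector-plugins.
    private final Supplier<List<String>> fetchPlugins;
    // Last successful snapshot; volatile so readers on other threads see each update.
    private volatile List<String> snapshot = List.of();
    private final ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();

    public PluginPoller(Supplier<List<String>> fetchPlugins) {
        this.fetchPlugins = fetchPlugins;
    }

    /** Fetches once and replaces the snapshot; on failure the previous snapshot is kept. */
    public void refresh() {
        try {
            snapshot = List.copyOf(fetchPlugins.get());
        } catch (RuntimeException e) {
            // Swallow the error so a scheduled run never throws (that would cancel later runs).
            System.err.println("Plugin refresh failed: " + e.getMessage());
        }
    }

    /** Refreshes immediately, then once every period. */
    public void start(long period, TimeUnit unit) {
        scheduler.scheduleAtFixedRate(this::refresh, 0, period, unit);
    }

    public void stop() {
        scheduler.shutdownNow();
    }

    public List<String> plugins() {
        return snapshot;
    }

    public static void main(String[] args) throws InterruptedException {
        PluginPoller poller = new PluginPoller(() -> List.of("FileStreamSourceConnector"));
        poller.start(60, TimeUnit.SECONDS);
        Thread.sleep(200); // give the first (immediate) refresh time to run
        System.out.println(poller.plugins());
        poller.stop();
    }
}
```

Catching exceptions inside `refresh` matters here: if a task scheduled with `scheduleAtFixedRate` throws, the executor suppresses all of its subsequent runs.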