New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

(sqlite3.OperationalError) database is locked #4653

Comments

|

Also I mentioned "viewing only" to state the severity of the problem. It does not mean it should crash if the web ui was trying to edit trading while the rest of the software does the same. It's clear that there should be a transactional nature in these things and a lock of some sort that avoids this sort of thing is missing. |

|

This thread is interesting, though not just for the top answer, but also this answer: https://stackoverflow.com/a/53470179/277716 |

|

there can be many things that cause this - blaming frequi might be a quick assumption - which i don't see confirmed by the logs you provide. The same error will probably also happen if the sqlite database is locked at a file-system level. Please note: I will start closing issues without the issue template filled out. It is unclear which version you're using (stable, develop, an older stable version) - and i am sick of having to ask that all the time as people simply delete the issue template. |

|

I'm not lying: the only thing that was being done to the dbase was accessing the web-ui apart from the regular operation (the web-ui was being reloaded the moment it happened). Also it would be hard for users to prove with logging they're not doing anything else with the dbase anyway since you can't see their entire OS anyway don't you think? linux python 3.8 |

|

i didn't say you're lying - i said that you're concluding it's frequi - while in reality it might have other causes i see no reason to exclude at the moment. Obviously it's very difficult to prove (or disprove) if another process / app was using the file at that moment. another stackoverflow thread suggests multiple other reasons (for example acces via command line - or even a virus scanner)... If you're sure it was frequi, we'll need to know which request (which endpoint) was causing it - otherwise there'll be little way to have a chance of fixing this. |

|

It's an ubuntu server updated to the latest packages and the only thing that it was running apart from sshd is ft, so it's either very unlikely to be an OS issue or most users of ft on server environments have the bug. I wonder if this is more related: |

|

is that the same log - or a new one? i wonder as you're the first to ever report this - so if this would be a real issue, all users of frequi would encounter this - or at least more than one - considering that freqUI is inclued now fro more than one version ... |

|

It's the same log: I just hadn't posted the top of it with the full ft-related parts in it. It's not common (!): I encountered it only once after using the web UI for weeks. I was just about to say: since SQLAlchemy documentation appears to pass the blame to the underlying database technology (when they describe OperationalError) and since this might be rare for most users: then: an exception handling that just re-tries a moment later might be useful (even though I seriously suspect that the practical cause of this is reloading the web-ui the very moment of altering the dbase with orders (which should be something reproducible with a "spammy" dry-run configuration and spam-reloading/spam-using the web-ui)). |

|

I couldn't reproduce it with a spam-webpage-reloading during spam-buying in a dryrun (and the exchange hit a throttle too). I guess I'll just stop using the web UI when I suspect ordering may happen. I noticed flush() is used in a dozen of places so one couldn't wrap it with an exception retry that easily ..even though I don't know if the web UI ever uses it indirectly or directly. |

|

reloading the UI (pressing f5) is the wrong approach - that will only download the html / javascript files - not make the API calls (which - if any - are causing this). It's rather interresting as so i suspect that if an explicit |

|

The only problem that I was getting from the web UI (consistently and every time) was that it was complaining in the log "WARNING - Could not get rate for pair" for a couple of pairs but it does that every time with no further issue at all (and the log has nothing interesting before the sqlite3.OperationalError exception other than that warning and after it the order to buy (which it did at the exchange but never reached the point to save it to the db). Interesting what you say that flush() should do nothing at all which points to a bug with SQLAlchemy itself (and I had not touched any code related to it either). Unless perhaps the web server does some indirect double-usage of the dbase that I wouldn't know about because I don't really understand its code. |

|

which version of SQLAlchemy have you got? ( The webserver uses one of the |

|

SQLAlchemy==1.4.3 (when run from the ft virtual environment (outside of it it reports 1.3.23 but I doubt it affects it since ./setup -u finds it updated)). I get the vague impression from its descriptions that if scoped_session() is used: then all the threads should access it that way to be consistent. I wonder if the software has ways to access the dbase that are not just that thread-safe way. |

|

no, all queries run through |

|

I can't imagine how it could happen if ft or the OS do nothing wrong and sqlalchemy would only blame the underlying dbase tech. A bug with the OS sqlite library itself perhaps? A definite red flag is that you expect flush() to do nothing but it does if you're right about that. |

|

Wait a second. The most likely scenario here is that a flush() was called on its own (which is obvious on the trace) right before another access to the db which would call another flush() either automatically via the scoped_session() code or on its own again from ft. |

|

Some background on it to help analyzing it and a possible solution: ft has check_same_thread==false at create_engine(); it is done to allow it to work multithreaded; sqlalchemy docs warn "when using multiple threads with the same connection writing operations should be serialized by the user to avoid data corruption." Part of one of the solutions to that is scoped_session() which is based on threading.local(); under the hood threading.local() restricts usage to one thread; comprehensive usage documentation for scoped_session() is here https://docs.sqlalchemy.org/en/14/orm/contextual.html#unitofwork-contextual Later in the same page at "Thread-Local Scope" it mentions "The Session object is entirely designed to be used in a non-concurrent fashion, which in terms of multithreading means “only in one thread at a time”. So our above example of scoped_session usage, where the same Session object is maintained across multiple calls, suggests that some process needs to be in place such that multiple calls across many threads don’t actually get a handle to the same session. We call this notion thread local storage, which means, a special object is used that will maintain a distinct object per each application thread. Python provides this via the threading.local() construct. The scoped_session object by default uses this object as storage, so that a single Session is maintained for all who call upon the scoped_session registry, but only within the scope of a single thread. Callers who call upon the registry in a different thread get a Session instance that is local to that other thread." Verdict: they are partly cryptic in text but look into the strong possibility that scoped_session() is used well only if you know you have made session objects with scoped_session() for each thread that needs one and not by using the same object on multiple threads (because if that possibility is true and only one object is used then it might be thread-safe in the sense that it protects the dbase from corruption but it will lock the dbase and crash the software in the rare occasion of concurrency from multiple threads) and the strong indication pointing to that is that scoped_session is using threading.local() (by default) which is a restrict-to-one-thread method. |

|

that's not true - when we introduced This is also supported by the above / documentation - as the |

|

Are you saying that scoped_session() is called only once, and it still gives internally a new object for each thread for the purpose of multithreading and it never needs to be called again for the purpose of multithreading specifically? If yes where is that explicitly stated or done in SQLAlchemy (because I'm not sure that even if it returns a new ID it completes the needs for that use case)? |

|

Hi, |

|

^If it's an issue, I bet it's more of an issue on slow machines because race conditions are more likely. I also saw it on a slow machine (and when I tried to reproduce it and I couldn't it was on a fast machine). |

|

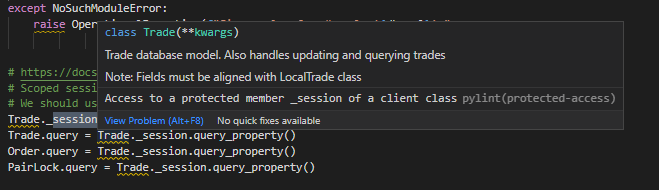

It's rather easy to prove that different sessions are used via debugger. Simply set a breakpoint "somewhere" in

In the screenshot above - the first 2 calls are made from the main thread - while the other 2 are made from an API invoked thread. Notice that they do have different ID's. |

|

The .session is same (not the query.session). I assume you believe .session is correct to stay the same because it represents scoped_session() but is that certainly the correct usage for this case? |

|

See this from https://docs.sqlalchemy.org/en/14/orm/contextual.html#using-thread-local-scope-with-web-applications The scoped_session.remove() method, as always, removes the current Session associated with the thread, if any. However, one advantage of the threading.local() object is that if the application thread itself ends, the “storage” for that thread is also garbage collected. So it is in fact “safe” to use thread local scope with an application that spawns and tears down threads, without the need to call scoped_session.remove(). However, the scope of transactions themselves, i.e. ending them via Session.commit() or Session.rollback(), will usually still be something that must be explicitly arranged for at the appropriate time, unless the application actually ties the lifespan of a thread to the lifespan of a transaction. That seems to state that even if everything is done perfectly with assigning scoped_session(): it still needs timing management of commit() or rollback(). Ft doesn't seem to use commit directly but it does autocommit with an implied flush() so I wonder if the whole problem is having explicit flush()es at all and just moving them out of the way would prevent the issue. |

|

Also it's not calling Trade.query.session.flush(), but Trade.session.flush(), so is it flushing them all at the same time without knowing if another flush is ongoing😱? |

|

not having Now it's been multiple years since we had to add this, so maybe it would not be necessary, however it's one of these subtle bugs which you only encounter every few 1000 times (IIRC, then the problem was that a trade can be closed / updated for the main loop, but telegram / api still got the "wrong" information). While removing it would not cause any immediate harm, it could still reintroduce these subtle bugs without noticing - as it's a "once in a few 1000 times" bug which you can't reliably reporduce (and therefore can't be sure it's gone either). I don't think using Please give the PR linked to this issue a try and let me know if it fixes the problem for you (it still does not reproduce for me - like - at all - not on fast, not on slow systems). |

|

Thanks: I will report if the issue with flush returns (or someone else will I guess since they can search for the flush backtrace in here). Regarding the rarity: on one hand it's probably more common on slower machines (as evidenced by the original report and the other person reporting it) when something 'heavier' like the web ui is produced: though on the other hand I didn't reproduce it more than once on the slow machine with regular operation either so it's exceptionally rare on most machines (maybe an exception to that are the most slow machines that run it (e.g. old raspberries or when they are under additional load by other servers)). Regarding the code: it does make more sense now since Trade.session had all ids under its wing while .query has a new id every time: it's only curious how it was working with .add etc. without having to call it only via query in the first place. |

|

well technically both are session objects - but the As it doesn't reproduce it's difficult to spot too ... and obviously, using FreqUI will expose this as otherwise we'd not be hammering the database from multiple threads on a regular basis. I suspect it's not so much a "power" issue than a "speed of IO" issue - where raspberries (with SD cards, anyway) are rather prone / affected - but it's a guess at best ... |

|

pylint is ... sometimes strange when it comes to certain things - too strickt in many instances (hence i'm not using it at all, but instead use mypy + flake8 - aligned to CI). |

|

^Thanks. Yeah it's kinda spammy, and half of the time I google about it people say "disable it manually" which defies the purpose. |

Well that's what i did - but globally in the editor - as i found too often that i'd need to "disable for this line" instead of getting helpful warnings or errors. |

|

Disclaimer: I'm not confident about what the solution here is so I'm just reporting what I know only and I do not claim to know the definitive solution to make a PR; at the same time I do not demand for any help so if anyone reading does not want to help then don't. I got a related error today but it's different (it appears it could be starting from the load from the UI again (because that's the only way those "could not get rate for pair" warnings are coming up here) though since it happened 5 seconds later it could be irrelevant): (if some line numbers in freqtradebot.py are out of order it's irrelevant since I have some personal comments in it (no actual different code) Because of the difference now being "raised as a result of Query-invoked autoflush; consider using a session.no_autoflush block if this flush is occurring prematurely": I'm reading in docs '''autoflush – When True, all query operations will issue a Session.flush() call to this Session before proceeding.''' so I wonder if that means it issues a flush to the session with the same id for all threads and not to the query.session of the last patch so I'm considering to disable autoflush in the code as an experiment and see what happens (and by the way: the docs report that autocommit is deprecated and it will be removed in 2.0 so I don't know if the general treatment of the software regarding sessionmaker instantiation is correct). |

|

So I got this again after the last comment's attempt with autoflush being off and the backtrace is from freqtradebot.py's process' Trade.query.session.flush(). It did not happen at saving a trade but after heavy usage of the web server so I guess that's progress since it didn't ruin the record. At this moment I suspect the sqlite lib itself is incapable in some cases at slow systems but I don't want to believe that too easily. |

|

is the database stored on a slow filesystem (like a sdcard on a raspberry)? considering how python handles threading (the "GIL") - i don't think it's possible to have "true" concurrency against the database from one freqtrade instance (hence i'd want to exclude the above), as we only use multiple threads, not multiple processes (which could be active at the same time). sqlite does not handle concurrent writes pretty well - but concurrent reads should be fine (especially as freqtrade isn't really concurrent due to the python GIL). it's also pretty strange - i'm running quite a few bots (with UI) - in some dry-run instances on pretty slow machines - and didn't experience this problem even once. How did you install freqtrade? docker? virtualenv? system python ... ? |

|

Yes it's on a slow card (directly on linux with ./setup on a venv of python 3.8.5) though the RAM is never filled so I didn't suspect the drive writes (though I probably should) and it never run two instances since I check with top (and always close it with ctrl-c after a stop anyway). I observe that a practical cause is heavy load from the web server because I kept reloading the ui at that time and top kept showing high CPU usage from processes like kworker/1:1-events. Looking at all three logs it appears it happens after arbitrary moments of freqtradebot.py; the three moments are: execute_buy()'s |

|

If you think a slow drive is an inevitable cause, it might be possible to be tested in a way that becomes faster than even the best nvme drives by using a ram disk (which can also become persistent). Though I find it hard to believe that a slow drive is an inevitable cause of these problems to be honest (even though I would not be surprised if a fast drive can workaround them). |

|

So even a ramdisk holding the sqlite file didn't help: failed after What I'm adding here which wasn't clearly stated before (but I was thinking about it for a while) is that there appears to be system load that persists for several seconds AFTER the web ui stops being used (the browser tab closed). It's very consistent and not a chance because I have never seen any of these problems if at least ~5 minutes have passed after using the ui. |

|

Back to the basics: I believe there's a very good chance the session handling is not safe especially when ui threads are involved in light of the fact not even a ram disk holding the database helped (even if of course a faster CPU might have workarounded the issue but that wouldn't be a practical solution here and it wouldn't solve the general issue either). I'm reading this person that insists even with scoped_session each thread should be closing their sessions derived from a separate call to that one scoped session individually https://stackoverflow.com/a/64828162/277716 and later claims that they should be removed too https://stackoverflow.com/a/64846206/277716 by citing the official docs about it https://docs.sqlalchemy.org/en/13/orm/contextual.html What I'm getting at: we might need to have each thread making a new session from _session() for each transaction (_session as it is currently derived from scoped session) and then remove()ing them/discarding them when that is done; right now it appears that instead all the threads get a query property from the one same _session from scoped_session and always use that (*I know debugging may show different ids but talking about the actual code conflicting with the methodology of the docs (they cite |

|

If you have the possibility, please test this on a different system (ideally using the official docker image). I run about 8+ bots on different systems (with different specs and performance) - and NONE of these has EVER had this problem in ~2 years of usage over the different versions. This makes me think that maybe it's some kind of specifity about your system / configuration. You also talk about "reloading the UI" - is this "clicking the "refresh" button - or pressing f5 reloading the whole UI html stuff? |

|

I believe it mainly happens with relatively heavy reloading with shift+f5 etc. and in general when the ui server is pummeled with some requests (and the server's system load appears to remain for seconds after the browser tab is closed); also I'm not sure if your systems have much better CPUs since after the ramdisk on fast RAM failed to fix it then I bet it can be workarounded only with a fast CPU; also it might depend on how much trading they do (e.g. if they do only 4-5 trades at the same time the situation might be much lighter than one doing 10 or more trades). I consider testing making a function that returns query objects from a new session that is = _session(). In general I think the docs are clear that they want people to do new_session = Session() (with Session = scoped_session()) |

|

using shift+f5 with freqUI does not make any sense whatsoever - the results you get from this is a static HTML page with a lot of Javascript. So instead of using shift+f5 (which will not touch the database whatsoever until the UI is fully started, by the way), you should simply use the "reload" button in the "botcontrols" section of FreqUI to refresh the content of the page. |

|

I bet you're right though I find it unimportant in the context of the problem since I first noticed the problem by using the page very normally without reloading it at all or reloading it with a single hit of a browser's reload button. I mentioned shift+f5 in the context of how I was trying to make it get higher load lately while testing its resilience to the problem but I bet you're right I should be using your controls instead. I want to see if I can follow the docs' example of new_session = Session() (when Session = scoped_session()) without messing up too much with the existing code. |

|

Oh sorry: it becomes clear to me you meant to not even use a single "normal" refresh of a browser in this case: since it may inflict heavy load to weak systems for no reason. I bet you're right that might alleviate the problem. I'd still want to avoid the chance since who knows what else may inflict load on it (an automated security update or whatever). |

|

when you reload (shift+f5 or f5) the page, you're causing additional disk load (uvicorn needs to get the files from disk - and send them to your browser) ... i wouldn't consider either of the 2 as "normal" load - but neither of the 2 will crash my bot either (not on my good VPS, neither on my shitty VPS with constantly failing disks, nor on my raspberry...). |

|

I bet I might have some additional conditions locally (e.g. more trades or an older/bigger database or whatever). I haven't made any changes to relevant code or use any weird OS so I don't think it's user error or even exceptionally rare (e.g. at least one other person gets it). Also sqlite is often accused "simplistic" but in practice this software is small enough for it so I would much easier look into sqlalchemy or FT causing the problem than such an ancient overused library. |

|

I just realized something very possible: what if FT is perfectly thread safe and it does nothing wrong at all it tems of SQLAlchemy handling, |

I was fiddling with the web ui (only viewing: reloading at the order tab: no actual trading or changes) and the 'regular' software crashed at that moment while it was trying to order something (it went through at the exchange but not at the database).

The text was updated successfully, but these errors were encountered: