Example content project for the tensorflow-ue4 plugin.

This repository also tracks changes required across all dependencies to make tensorflow work well with UE4.

See issues for current work and bug reports.

- (GPU only) Install the CUDA and cuDNN prerequisites if you're using a compatible NVIDIA GPU.

- Download the latest project release.

- Download the matching tensorflow plugin release. Choose the CPU download (or the GPU version if your hardware is supported). The matching plugin link is usually found under the project release.

- Browse to your extracted project folder.

- Copy the Plugins folder from your plugin download into your project root.

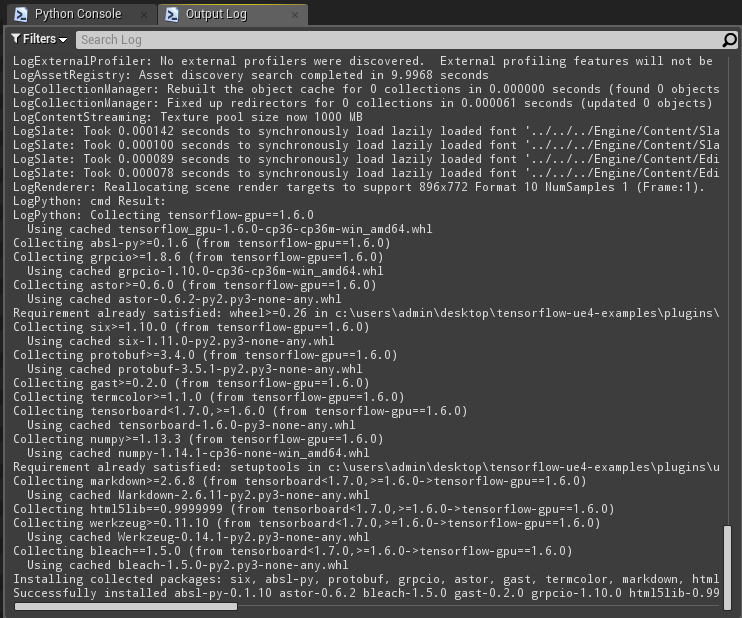

- Launch the project and wait for the tensorflow dependencies to be installed. The tensorflow plugin will auto-resolve any dependencies listed in Plugins/tensorflow-ue4/Content/Scripts/upymodule.json using pip. This step may take a few minutes depending on your internet connection speed, and the output log window will show no changes until the process has completed.

- Once you see output similar to the above in your console window, everything should be ready to go. Try the different examples, e.g. the ones under Content/ExampleAssets/Maps!
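To make the dependency step above more concrete, here is a minimal sketch of how a JSON dependency list could be turned into pip commands. It is not the plugin's actual code: the `pythonModules` key, the file contents, and the `pip_commands` helper are all assumptions made for illustration, and the real upymodule.json layout may differ.

```python
import json
import sys


def pip_commands(module_json_text):
    """Return one pip install command (as an argument list) per listed dependency."""
    config = json.loads(module_json_text)
    # ASSUMPTION: dependencies live under a "pythonModules" key that maps
    # package name -> version string; the real file layout may differ.
    modules = config.get("pythonModules", {})
    commands = []
    for package, version in sorted(modules.items()):
        # Pin the version when one is given, otherwise install the latest.
        requirement = f"{package}=={version}" if version else package
        commands.append([sys.executable, "-m", "pip", "install", requirement])
    return commands


# Hypothetical file contents, for illustration only.
example_json = '{"pythonModules": {"somepackage": "1.0.0", "otherpackage": ""}}'
for command in pip_commands(example_json):
    # Print the command instead of running it, so the sketch has no side effects.
    print(" ".join(command[1:]))
```

The sketch only prints the commands. Passing each list to `subprocess.run` would actually install the packages, which is roughly why the launch step can sit silent for a few minutes.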

If you're not using a release and instead wish to clone the repository using git, ensure you follow the TensorFlow-ue4 instructions on cloning.

The map is found under Content/ExampleAssets/Maps/Mnist.umap and should be the default map when the project launches.

Default mnist example script: mnistSpawnSamples.py

On map launch you'll have a basic example ready for play in editor. When you hit play, it should automatically train a very basic network and be ready to use in a few seconds. You can then press 'F' to send an input, e.g. an image of a 2, for prediction. Press the 0-9 number keys on your keyboard to change the input, then press 'F' again to send the updated input to the classifier. Note that this is a very basic classifier and it will struggle to classify digits above 4 in the current setup.
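For readers unfamiliar with what a basic softmax classifier does at prediction time, the following is a minimal sketch in plain Python. It is not the code in mnistSpawnSamples.py: the `softmax` and `predict` helpers, the toy 4-pixel input, and the hand-picked weights are assumptions chosen to keep the example small (a real MNIST input has 784 pixels and 10 classes).

```python
import math


def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    highest = max(logits)
    # Subtracting the maximum keeps exp() numerically stable.
    exps = [math.exp(value - highest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def predict(pixels, weights, biases):
    """Return (predicted class index, class probabilities) for one flat image.

    weights holds one row of per-pixel weights for each class.
    """
    logits = [
        sum(w * p for w, p in zip(row, pixels)) + bias
        for row, bias in zip(weights, biases)
    ]
    probabilities = softmax(logits)
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    return best, probabilities


# Toy setup (hypothetical values): 4 "pixels" and 3 classes.
toy_weights = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
toy_biases = [0.0, 0.0, 0.0]
label, probs = predict([0.0, 1.0, 0.0, 0.0], toy_weights, toy_biases)
print(label, [round(p, 3) for p in probs])
```

A model like this has only one linear layer, which is one reason a default classifier of this kind can confuse similar-looking digits.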

You can change the input to any UTexture2D you can access in your editor or game, but if the example is using a ConnectedTFMnistActor you can also use your mouse/fingers to draw shapes to classify. Simply go to http://qnova.io/e/mnist on your phone or browser after your training is complete, then draw shapes in your browser and it will send those drawn shapes to your UE4 editor for classification.

Note that only the most recently connected UE4 editor will receive these drawings. If it's not working, restart your play in editor session so that your editor becomes the latest one connected. You can also host your own server with the node.js server module found under: https://github.com/getnamo/TensorFlow-Unreal-Examples/tree/master/ServerExamples. If you want to connect to your own server, change the ConnectedTFMnistActor->SocketIOClient->Address and Port variables to e.g. localhost:3000.

If you want to try other mnist classifier models, change your ConnectedTFMnistActor->Python TFModule variable to that python script class. E.g. if you want to try the Keras Convolutional Neural Network classifier, change the module name to mnistKerasCNN and hit play. Note that this classifier may take around 18 min to train on a CPU, or around 45 seconds on a GPU. It should, however, be much more accurate than the basic softmax classifier used by default.

See available classifier models provided here: https://github.com/getnamo/TensorFlow-Unreal-Examples/tree/master/Content/Scripts

The mnistSaveLoad python script will train on the first run and then save the trained model. Each subsequent run will then use that trained model, skipping training. You can also copy this saved model to a new project; when it is used there with a compatible script, training will also be skipped. Use this as a guide for linking your own pre-trained network to your own use cases.

You can force retraining by either changing ConnectedTFMnistActor->ForceRetrain to true or deleting the model found under Content/Scripts/model/mnistSimple.
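The train-once, load-afterwards behaviour described above can be sketched as follows. This is not the mnistSaveLoad script itself: `load_or_train`, the JSON file format, and the stand-in training function are assumptions for illustration, whereas the real script saves a tensorflow model.

```python
import json
import os
import tempfile


def load_or_train(model_path, train_fn, force_retrain=False):
    """Return (model, trained), where trained is True if training ran in this call."""
    if os.path.exists(model_path) and not force_retrain:
        # A saved model exists and retraining was not requested: skip training.
        with open(model_path) as handle:
            return json.load(handle), False
    model = train_fn()
    # Create the model folder on first use, then save for later runs.
    os.makedirs(os.path.dirname(model_path) or ".", exist_ok=True)
    with open(model_path, "w") as handle:
        json.dump(model, handle)
    return model, True


def fake_training():
    """Hypothetical stand-in for a real (slow) training run."""
    return {"weights": [0.1, 0.2]}


with tempfile.TemporaryDirectory() as folder:
    path = os.path.join(folder, "model", "example.json")
    _, first = load_or_train(path, fake_training)
    _, second = load_or_train(path, fake_training)
    _, forced = load_or_train(path, fake_training, force_retrain=True)
    print(first, second, forced)
```

In this sketch the first call trains, the second loads the saved file, and the third trains again because `force_retrain` is set. Deleting the saved file would have the same effect as the flag.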

The map is found under Content/ExampleAssets/Maps/Basic.umap

Uses TFAddExampleActor to encapsulate addExample.py. This is a bare-bones example that uses tensorflow to add or subtract float array data. Press 'F' to send the current custom struct data, and press 'G' to change the operation via a custom function call. Change the a and b arrays of your default ExampleStruct to change the input sent to the tensorflow python script.
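The data flow of this example can be sketched in plain Python, without tensorflow or the plugin. The `AddExample` class, its method names, and the `c` result key are assumptions for illustration and do not reproduce addExample.py. Only the `a` and `b` array names come from the description above.

```python
class AddExample:
    """Adds or subtracts two equal-length float arrays, element by element."""

    def __init__(self):
        self.operation = "add"

    def change_operation(self):
        """Toggle between add and subtract (what pressing 'G' would trigger)."""
        self.operation = "subtract" if self.operation == "add" else "add"
        return self.operation

    def on_input(self, data):
        """Handle one struct-like input (what pressing 'F' would send)."""
        a, b = data["a"], data["b"]
        if len(a) != len(b):
            raise ValueError("a and b must have the same length")
        if self.operation == "add":
            result = [x + y for x, y in zip(a, b)]
        else:
            result = [x - y for x, y in zip(a, b)]
        # "c" is a hypothetical name for the result field.
        return {"c": result}


example = AddExample()
struct = {"a": [1.0, 2.0], "b": [0.5, 0.5]}
print(example.on_input(struct))
example.change_operation()
print(example.on_input(struct))
```

Keeping the operation as state on the object mirrors the two-key interaction: one call changes the mode, and a separate call sends the data.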

If you have other examples you want to implement, consider contributing or post an issue with a suggestion.

See https://github.com/getnamo/tensorflow-ue4 for latest documentation.

Depends on:

https://github.com/getnamo/tensorflow-ue4

https://github.com/getnamo/UnrealEnginePython

https://github.com/getnamo/socketio-client-ue4

If you're seeing something like

You did not follow step 3 in setup. Each release has a matching plugin that you need to download and drag into the project folder.

There's a video made by GitHub user Berranzan that walks through setting up the tensorflow examples for 4.18 with GPU support.

For issues not covered in the readme see:

https://github.com/getnamo/TensorFlow-Unreal-Examples/issues

and

https://github.com/getnamo/TensorFlow-Unreal/issues

Example project used in the presentation https://drive.google.com/open?id=1GiHmYJeZI6BKUKYfel6xc0YFhMbjSOoCY17nl98dihA is contained in the https://github.com/getnamo/TensorFlow-Unreal-Examples/tree/presentation branch.

Second presentation with 0.4 api: https://docs.google.com/presentation/d/1p5p6CjYYYfbflFpvr104U1GwrfArfl4Hkcb6N3SIBH8