JavaScript heap out of memory #339

Comments

|

What's your OS? Node.js version? UnCSS version? How many files, and how large, are we processing here? |

|

Windows 10 (8 GB of RAM), Node.js v6.11.2, UnCSS 0.15.0 |

|

Wow, that's a lot! How are you running uncss? Via the command line? As a postcss plugin? |

|

The same way it's done in https://github.com/giakki/uncss#within-nodejs, but with an options parameter. |

|

I was able to crash uncss by running it against a huge list of URLs (I was using the JS API). I think this is basically an inherent limitation of how Node and UnCSS work. UnCSS loads all the pages, then all the CSS, and only then begins its work. Node can't use all your system RAM; by default, the heap is capped at a certain size. My task manager showed over 1.6 GB of memory usage before Node crashed. Edit: By default, it can't use more than 1.7 GB. |

|

Honestly, you're just going to have to run this in chunks. Node just can't handle loading all that data into memory and processing it. |

|

Yeah I reckoned so. May need to run it on a server machine then, because running in chunks is no use to me. It adds duplicate css. |
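One possible way around the duplication, sketched under simplifying assumptions: treat each chunk's output as a list of rule strings copied verbatim from the original stylesheet, and keep every original rule exactly once if any chunk kept it. The function name `mergeChunkResults` and the string-based rule model are hypothetical, for illustration only; this is not something UnCSS provides.

```javascript
// Hypothetical helper: merge per-chunk results without duplicating rules.
// Assumption: every chunk returns the rules it kept as strings that match
// the original stylesheet's rules character for character.
function mergeChunkResults(originalRules, chunkResults) {
  const kept = new Set();
  for (const rules of chunkResults) {
    for (const rule of rules) {
      kept.add(rule);
    }
  }
  // Walk the original stylesheet so the cascade order is preserved
  // and each surviving rule is emitted once.
  return originalRules.filter((rule) => kept.has(rule));
}

const originalRules = ['.a{color:red}', '.b{color:blue}', '.c{margin:0}', '.d{padding:0}'];
const chunkResults = [
  ['.a{color:red}', '.c{margin:0}'],   // rules used by the first chunk of pages
  ['.a{color:red}', '.b{color:blue}'], // rules used by the second chunk of pages
];
const merged = mergeChunkResults(originalRules, chunkResults);
console.log(merged.join('\n'));
```

Concatenating the two chunk outputs would emit `.a{color:red}` twice; filtering the original rule list emits it once and drops `.d{padding:0}`, which no chunk used.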

|

Running it on a larger machine won't help by itself; Node caps its heap at a default size regardless of how much RAM is installed. |
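For context, the cap discussed here is V8's default heap limit, which a running process can inspect through Node's built-in `v8` module. A minimal sketch; the variable name `heapLimitMb` is just for illustration:

```javascript
const v8 = require('v8');

// heap_size_limit is reported in bytes; convert to megabytes for readability.
const heapLimitMb = v8.getHeapStatistics().heap_size_limit / (1024 * 1024);
console.log(`Heap limit for this process: ${heapLimitMb.toFixed(0)} MB`);
```

The limit can be raised at startup with the V8 flag `--max-old-space-size` (value in megabytes), for example `node --max-old-space-size=4096 build.js`, where `build.js` is a placeholder script name. Whether that is enough for a given set of pages is not something this thread establishes.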

|

Conceptually, we really only need to render one page at a time. We grab all the used CSS classes from that page, and then can continue to the next one. If we are O(number-of-pages) in memory usage, that sounds like a problem. At a high level, it should be possible to be O(number-of-CSS-classes + size-of-largest-page). Unfortunately, it looks like we run |

|

@mikelambert That's not how it works. We need to load each page and get its CSS; then, once we have all the CSS selectors, we need to check which selectors are used on each of the pages. So either we load them all at once, or we load them twice. |

|

Good point, I forgot the pages are used twice in the current algorithm. But again, I don't think it needs to load them all at once, or load them twice. Basic algorithm would be: Load a page, load the stylesheets (pulling from a cache of semi-processed stylesheets, if necessary). Then for each stylesheet, mark which css classes are actually used in the page. Unload the page (while keeping the semi-processed stylesheets around). Repeat for each page. I'm sure it's easier to build a simpler version of this (eating RAM by loading them all, or eating CPU by loading them twice), I'm just trying to point out that we actually can do better, here. As opposed to saying "oh yeah it's impossible to run on a bunch of pages", which I don't believe to be true. :) |
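The page-at-a-time idea above can be sketched as follows. This is a toy model, not UnCSS's implementation: a "page" is reduced to the set of class names found in its `class` attributes, a "selector" is a single class selector, and the names `loadPages` and `findUsedSelectors` are hypothetical. Real matching would run full selectors against a rendered DOM; the point here is only the memory shape, with one page alive at a time plus a running set of used selectors.

```javascript
// Lazily yields one page at a time, so only one page is held in memory at once.
function* loadPages(sources) {
  for (const source of sources) {
    // Stand-in for rendering: collect class names from class="..." attributes.
    const classes = new Set();
    const pattern = /class="([^"]*)"/g;
    let match;
    while ((match = pattern.exec(source)) !== null) {
      for (const name of match[1].split(/\s+/).filter(Boolean)) {
        classes.add(name);
      }
    }
    yield classes;
  }
}

function findUsedSelectors(selectors, sources) {
  const used = new Set();
  for (const pageClasses of loadPages(sources)) {
    for (const selector of selectors) {
      // selector.slice(1) drops the leading "." of a class selector.
      if (!used.has(selector) && pageClasses.has(selector.slice(1))) {
        used.add(selector);
      }
    }
    // pageClasses is not referenced after this iteration, so it can be garbage collected.
  }
  return used;
}

const selectors = ['.btn', '.card', '.unused'];
const sources = [
  '<div class="card"><a class="btn primary">Go</a></div>',
  '<p class="card">Text</p>',
];
const usedSelectors = [...findUsedSelectors(selectors, sources)];
console.log(usedSelectors);
```

In this model, memory grows with the number of selectors plus the size of the largest page, rather than with the number of pages.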

|

I just published this module a few days ago: My little brother says that this test page loads fast on his two-year-old Google Pixel phone, which surprised me because the CSS file for this page is 676,712 lines long. Anyway, running uncss against the CSS file and the single test page results in an infinite loop, as documented earlier. I'm starting to wonder, though: if an old Google Pixel phone renders the CSS and page fast, then surely uncss should be able to keep up. Running it on the above test case uses 90% of my memory (16 GB) and 30% of the CPU (8 cores). |

|

Yeah, memory can be utilized more efficiently, by unloading the pages that are processed. |

|

So I'm definitely not an expert here, but it seems like there has to be a really simple fast solution to this. Something like (And this is a totally captain obvious - very easily said thing - might even just be rephrasing what someone else has said):

Repeat until all of the CSS rules are examined. This should only take a few MB of memory at most and be fast. Anyway, like I said, I'm totally shooting from the hip here ... |

|

@oleersoy That's not how it works; we're using postcss, which doesn't really have support for parsing CSS bit by bit. Also, this would almost certainly make sourcemaps next to impossible. |

|

@RyanZim I understand - just trying to trigger some brainstorming around how this can be improved. I'm sure PostCSS or others will help us out if we can define a conceptual process that is more efficient and delivers the same end result - source maps included. I'll ask on SO as well. Perhaps there's some UnCSS genius out there who knows of a flux capacitor that we can just tweak a bit. |

|

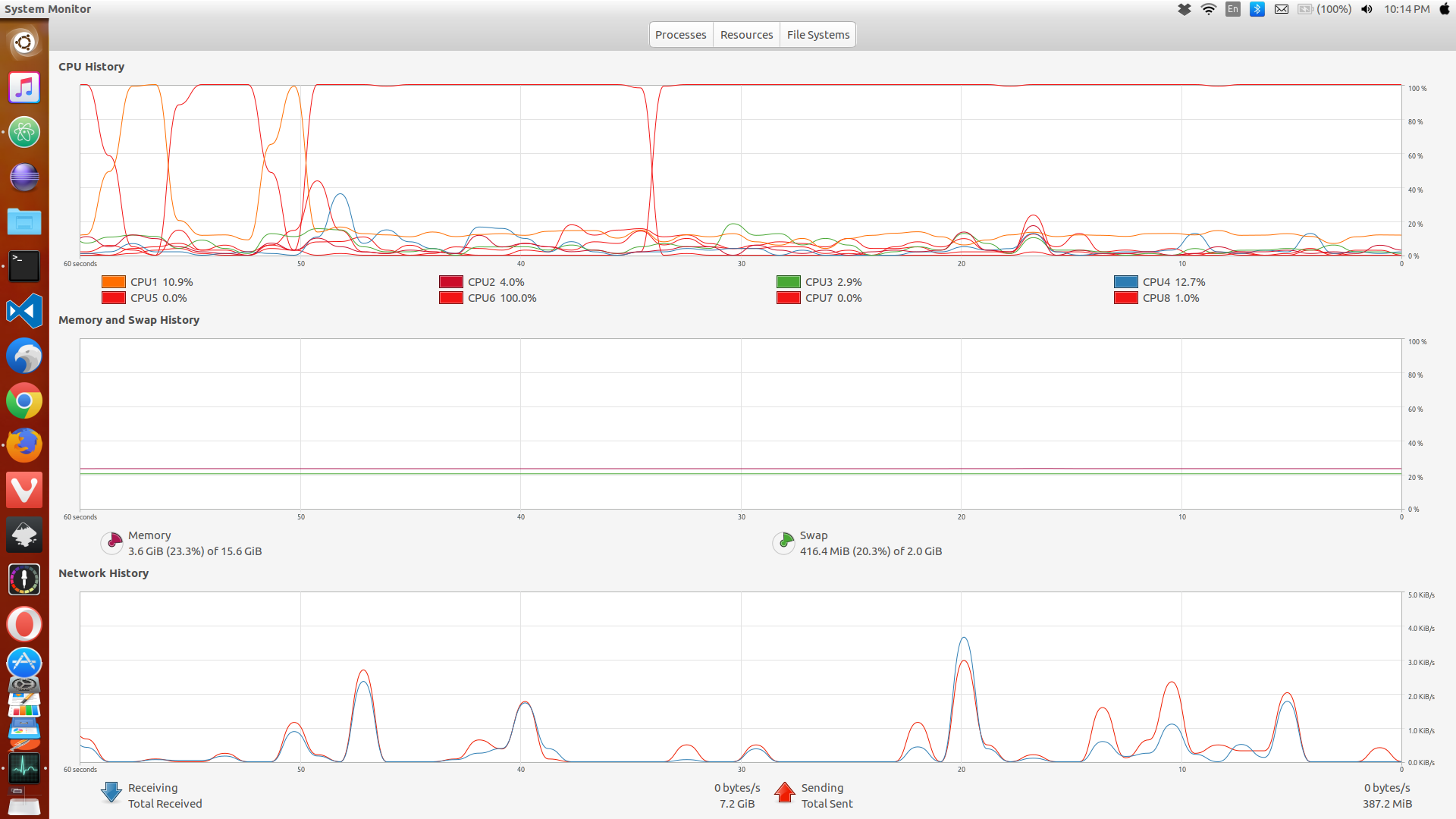

Just wanted to make a correction to my earlier memory utilization number. It's not using that much memory - that was Chrome with a lot of tabs open eating up my 16 GB. One core is running at 100% though ... forever. Attached a screenshot of what the system monitor looks like while running: |

|

You may want to have a look at: I just completed a test with over a million lines of CSS run against uncss, and it completes in 22 minutes. Before, I had Chrome open with a lot of tabs, and it was eating most of my laptop's memory. Without Chrome open (leaving about 10 GB of memory free), the build completes. |

UnCSS runs out of heap memory when a lot of files are processed. When I process the files in chunks, it works, but that adds duplicate CSS to the output. Is there any workaround, or do I need to increase my RAM?
