
Results are unusable - what am I doing wrong? #1

Comments


Hi Sebastian, Thanks for trying our project for pruning LLaMA. A pruned model must be post-trained before it is used for anything else, because pruning damages the model's structure. Skipping this step significantly reduces the model's quality, as your results and our own experiments both show; this is a well-known problem in model pruning. At present, our LLaMA library supports only structural pruning, not post-training of the pruned model. We are developing the post-training code, but it will take some time before we can release it in the repo.
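To illustrate why an untuned pruned model drifts from the original, here is a minimal sketch on a toy two-layer network. It is not the project's pruning code: the helper names (`make_mlp`, `prune_hidden_units`, `output_error`) are hypothetical, and the choice to drop hidden units with the smallest L2 weight norm is an assumption made only for illustration.

```python
import math
import random


def make_mlp(n_in, n_hidden, n_out, seed=0):
    """Random weights for a toy two-layer MLP (a stand-in for a real model)."""
    rng = random.Random(seed)
    w1 = [[rng.gauss(0, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    w2 = [[rng.gauss(0, 1) for _ in range(n_hidden)] for _ in range(n_out)]
    return w1, w2


def forward(w1, w2, x):
    """tanh hidden layer followed by a linear output layer."""
    hidden = [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w1]
    return [sum(w * h for w, h in zip(row, hidden)) for row in w2]


def prune_hidden_units(w1, w2, ratio):
    """Structurally remove the `ratio` fraction of hidden units with the
    smallest L2 norm of incoming weights (assumed importance criterion)."""
    n_hidden = len(w1)
    n_prune = int(n_hidden * ratio)
    norms = [math.sqrt(sum(w * w for w in row)) for row in w1]
    order = sorted(range(n_hidden), key=lambda i: norms[i])
    keep = sorted(order[n_prune:])  # preserve the original unit order
    new_w1 = [w1[i] for i in keep]                   # drop rows of layer 1
    new_w2 = [[row[i] for i in keep] for row in w2]  # drop matching columns of layer 2
    return new_w1, new_w2


def output_error(w1, w2, ratio, inputs):
    """Mean squared deviation between dense and pruned outputs, with no retraining."""
    p1, p2 = prune_hidden_units(w1, w2, ratio)
    total = 0.0
    for x in inputs:
        dense = forward(w1, w2, x)
        pruned = forward(p1, p2, x)
        total += sum((a - b) ** 2 for a, b in zip(dense, pruned))
    return total / len(inputs)


if __name__ == "__main__":
    w1, w2 = make_mlp(4, 16, 2)
    rng = random.Random(1)
    inputs = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(32)]
    for ratio in (0.0, 0.1, 0.5):
        print(ratio, output_error(w1, w2, ratio, inputs))
```

In this sketch the deviation is zero only when nothing is pruned; any removed unit changes the outputs, and closing that gap is the job of the post-training step described above.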


Hi Horsee, thanks for your quick reply. I apparently misunderstood the README, because the "Available Features" section lists "structural pruning" as available. The instruction steps in the README also do not mention the need for post-training/re-training; they only mention "fine tuning", which is usually considered optional. Would you be open to clarifying the README so it is clearer for other people who might be interested in the repository? Kind regards, PS: Do you have an ETA planned for the re-training/fine-tuning code?


Hi Sebastian, Thanks for your advice! We have updated the README to make this clear.


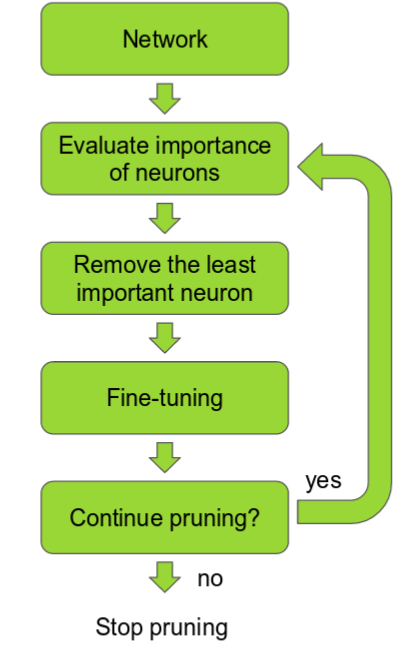

I found this image clarifying: |


Hi all, We found a serious bug in our pruning code. We are investigating its cause and how to fix it.


We have updated the code in https://github.com/horseee/LLM-Pruner. Please refer to the new repo-v-

Hi,

I've tried the code "out of the box" and the output is very bad / unusable.

I've tried all pruner_types with a ratio of 0.5, and I also tried to reduce the pruning_ratio to 0.1.

The result stays the same.

Am I doing something wrong? (How) have you been able to obtain different results?

Kind regards,

Sebastian
