
Leaving the program open for days--continues to consume memory to exhaustion. #40


If you do not follow the template, this issue can hardly be helpful.

|

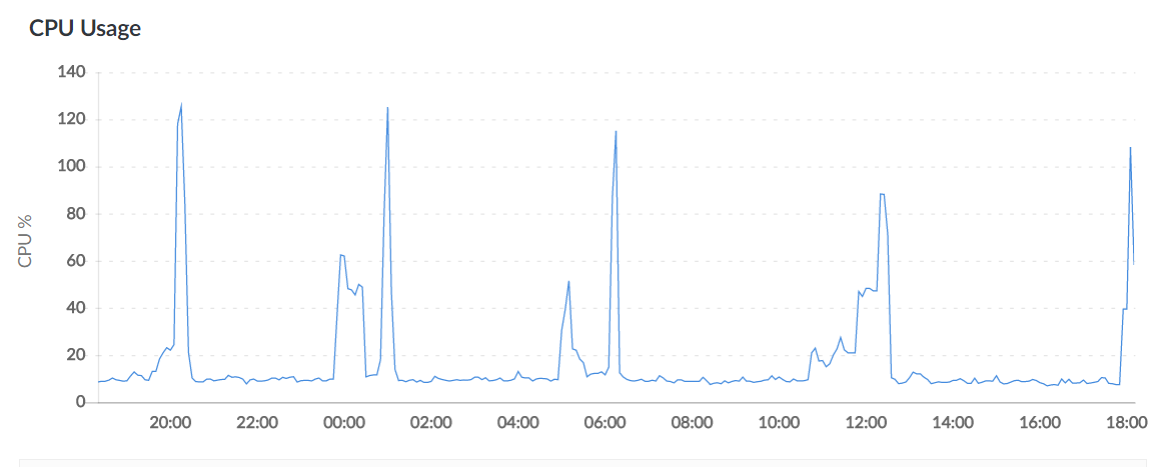

Hey. I apologize for not following the template. Here it is:

**Describe the bug**

After running scylla uninterrupted for a few days (say 5 days, or 120 hours), the OS memory usage goes to 99%.

**To Reproduce**

Open scylla and allow it to run uninterrupted. I usually ran into issues after a couple of days. This may or may not be part of the issue: I would run a scraper that used the scylla API every minute or so. I would also occasionally open the browser to view the scraped proxies and countries.

**Expected behavior**

**Screenshots**

**Desktop (please complete the following information):**

**Smartphone (please complete the following information):**

**Additional context**

I found that I can press Ctrl+C or close the program and re-open it, and the memory clears up completely. The scraped, working proxies are left in place, and scylla returns to scraping (and verifying that scraped proxies still work) as expected. After a restart, memory usage does not grow noticeably again until scylla has been running for many days.

@imWildCat I hope this is a little better. Again, my apologies.
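To put numbers on the growth described above, one option is to sample the resident memory of the running process at intervals. The following is a minimal sketch, assuming a Linux host where `/proc/<pid>/status` is available; the helper names `parse_vmrss_kb` and `sample_rss` are hypothetical and not part of scylla.

```python
import re
import time
from pathlib import Path


def parse_vmrss_kb(status_text):
    """Return the VmRSS value in kB from /proc/<pid>/status text, or None."""
    match = re.search(r"^VmRSS:\s+(\d+)\s+kB", status_text, re.MULTILINE)
    return int(match.group(1)) if match else None


def sample_rss(pid, interval_s=60.0, samples=5):
    """Yield (unix_time, rss_kb) pairs for a running process (Linux only)."""
    status_path = Path(f"/proc/{pid}/status")
    for i in range(samples):
        yield time.time(), parse_vmrss_kb(status_path.read_text())
        if i < samples - 1:
            time.sleep(interval_s)  # wait before taking the next sample
```

Calling `list(sample_rss(pid, interval_s=3600, samples=48))` with the PID of the scylla process would collect hourly readings over two days. If the program runs as several processes, each PID would need to be sampled separately.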


I can confirm this issue. After leaving it running for two days, the Linux OOM killer was triggered. There seems to be a memory leak somewhere, but I couldn't find it. My syslog:

[56917.949294] Out of memory: Kill process 2344 (scylla) score 670 or sacrifice child

[56917.952297] Killed process 2461 (scylla) total-vm:281812kB, anon-rss:61084kB, file-rss:24kB
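For anyone comparing several such events, the fields in the "Killed process" line can be pulled out with a small parser. This is a sketch only: the pattern matches the format of the line quoted above, the exact wording of OOM messages varies between kernel versions, and `parse_killed_line` is a hypothetical helper name.

```python
import re

# Pattern for the "Killed process" line as it appears in the syslog quoted above.
KILLED_RE = re.compile(
    r"Killed process (?P<pid>\d+) \((?P<name>[^)]+)\) "
    r"total-vm:(?P<total_vm_kb>\d+)kB, anon-rss:(?P<anon_rss_kb>\d+)kB, "
    r"file-rss:(?P<file_rss_kb>\d+)kB"
)


def parse_killed_line(line):
    """Return the fields of an OOM 'Killed process' line as a dict, or None."""
    match = KILLED_RE.search(line)
    if match is None:
        return None
    # Every captured field except the process name is an integer.
    return {
        key: (value if key == "name" else int(value))
        for key, value in match.groupdict().items()
    }


line = (
    "[56917.952297] Killed process 2461 (scylla) "
    "total-vm:281812kB, anon-rss:61084kB, file-rss:24kB"
)
info = parse_killed_line(line)
print(info["pid"], info["name"], round(info["anon_rss_kb"] / 1024, 1), "MiB")
```

Note that the two log lines name different PIDs (2344 and 2461), so the figures here describe only the process that was actually killed.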


Found the culprit! Lines 55 to 62 in 19c8a2e

The OOM was not caused by a memory leak, but by resource exhaustion.


Fixes I could think of are:

What do you guys think?

The program seems to keep consuming memory until it runs out. Pressing Ctrl+C and restarting clears it, and the proxies already found stay in place...
