-

-

Notifications

You must be signed in to change notification settings - Fork 149

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

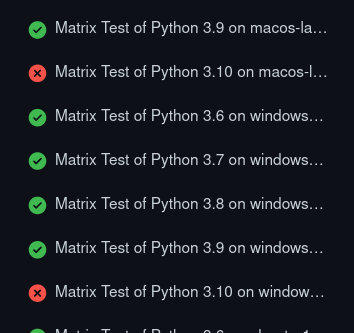

python3.10 compatibility #1136

Comments

I've started a draft #1137 to see what obvious failures pop out of CI.

https://github.com/ivadomed/ivadomed/runs/6658529084?check_suite_focus=true#step:4:68

This is being tracked in #1057, though it looks like it's going to be a bit of a dead end at the moment. I've tweaked ivadomed to use the nightly build. I expect it to fail pretty hard but we'll see: https://github.com/ivadomed/ivadomed/actions/runs/2410383291

Another blocker: torch 1.8.x doesn't have 3.10 builds. Reviewing our research, torch needs to be I've bumped it: b21e77c and results will be https://github.com/ivadomed/ivadomed/actions/runs/2410415666

EDIT: needed some work, attempt 2: https://github.com/ivadomed/ivadomed/actions/runs/2410465467

Hot diggity, python3.10 on Linux passed all the tests: but macOS is completely broken: :P

EDIT: I see the problem 3b944f9: attempt 3: https://github.com/ivadomed/ivadomed/actions/runs/2410531735

onnxruntime 1.12, which supports Python 3.10, is scheduled to be released by mid-July. Also, microsoft/onnxruntime#9782 suggests downgrading to Python 3.9.x. I was wondering if we could wait till mid-July and in the meantime bump up torch and drop support for Python 3.6.

I bumped torch to 1.11 for this, but @kanishk16 pointed out that that is a big jump and maybe not necessary. In https://download.pytorch.org/whl/ there's these

But if I try to use one of them: Notice that it says "from versions: 1.11.0 ....". There's no 1.10.x that it considered. For some reason it's ignoring https://download.pytorch.org/whl/cpu/torch-1.10.2-cp310-cp310-manylinux2014_aarch64.whl. Maybe this platform (which is Ubuntu 22.04) doesn't count as manylinux2014?

I'll try to pin it down to 1.10 but I don't think it'll work: 8b988d0 -> https://github.com/ivadomed/ivadomed/actions/runs/2410969434

EDIT: indeed, no bueno: log:
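One thing that may be worth checking here: the wheel linked above carries an `aarch64` platform tag, and pip only considers wheels whose tags match the running interpreter and machine. The sketch below is a rough, standard-library-only approximation of that matching, aimed at Linux-style tags. It is not pip's actual resolver, `parse_wheel_tags` and `rough_mismatches` are hypothetical helper names, and the assumption that the CI runner is `x86_64` is mine rather than something confirmed in this thread.

```python
import platform
import sys


def parse_wheel_tags(filename):
    """Split a wheel filename into (name, version, python_tag, abi_tag, platform_tag)."""
    stem = filename[:-len(".whl")] if filename.endswith(".whl") else filename
    parts = stem.split("-")
    # A wheel filename has 5 dash-separated fields, or 6 with an optional build tag.
    if len(parts) not in (5, 6):
        raise ValueError(f"not a wheel filename: {filename!r}")
    python_tag, abi_tag, platform_tag = parts[-3:]
    return parts[0], parts[1], python_tag, abi_tag, platform_tag


def rough_mismatches(filename, machine=None, py=None):
    """Return human-readable reasons why a wheel probably does not match.

    machine and py default to the current machine and interpreter version.
    This is a coarse check only: it ignores ABI tags and glibc versions.
    """
    machine = machine or platform.machine()
    py = py or sys.version_info[:2]
    _, _, python_tag, _, platform_tag = parse_wheel_tags(filename)

    reasons = []
    wanted_python = f"cp{py[0]}{py[1]}"
    if python_tag.startswith("cp") and python_tag != wanted_python:
        reasons.append(f"python tag {python_tag} != {wanted_python}")
    if platform_tag != "any" and not platform_tag.endswith(machine):
        reasons.append(f"platform tag {platform_tag} is not for {machine}")
    return reasons


# Assumed scenario: CPython 3.10 on an x86_64 machine.
print(rough_mismatches(
    "torch-1.10.2-cp310-cp310-manylinux2014_aarch64.whl",
    machine="x86_64",
    py=(3, 10),
))
```

If the list comes back non-empty for a given runner, the wheel would be skipped for that reason, regardless of whether the distribution counts as manylinux2014.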

Information on where to download onnxruntime 1.12 is embedded within the notebook. The notebook's [updated ~1 month ago] requirement for onnxruntime 1.12 is mandatory:

    from packaging import version
    from onnxruntime import __version__ as ort_version

    if version.parse(ort_version) >= version.parse("1.12.0"):
        from onnxruntime.transformers.models.gpt2.gpt2_helper import Gpt2Helper, MyGPT2LMHeadModel
    else:
        from onnxruntime.transformers.gpt2_helper import Gpt2Helper, MyGPT2LMHeadModel
        raise RuntimeError("Please install onnxruntime 1.12.0 or later to run this notebook")

Onnxruntime 1.12 needs to be provided as a nightly (not just for Python, but also for C#, C++, etc.).

@kousu looks like if we want to support python3.10, torch 1.11.0 is the only way to go pytorch/pytorch#67669 (comment)

ONNXRuntime v1.12 is out! https://github.com/microsoft/onnxruntime/releases/tag/v1.12.0

Motivation for the feature

Python 3.10 is standard now: Ubuntu 22.04 came out last month and uses it as its default. But ivadomed is only marked compatible up to 3.9, meaning it cannot be installed on Ubuntu 22.04, or by anyone else using an up-to-date system.

This follows #770.

Description of the feature

Change

ivadomed/setup.py

Line 35 in 1da019b

to read

and fix anything this breaks.

Additional context

Due to https://github.com/neuropoly/computers/issues/338, ivadomed now has to hide inside of conda envs set up like `conda create -n my_env python==3.8` when we are using it internally on `romane.neuro.polymtl.ca` or `rosenberg.neuro.polymtl.ca` to do research. And come to think of it, this would affect SCT too, which we usually run on `joplin.neuro.polymtl.ca` -- except SCT bundles its own conda.

It would be nice to have the flexibility to install ivadomed with the faster `pip install --user` or `python -m venv` again.
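For reference, the `python -m venv` route mentioned above can also be driven from Python through the standard-library `venv` module. This is only a minimal sketch: `make_env` is a hypothetical helper name, the environment directory is up to the caller, and installing ivadomed into the result would still depend on Python 3.10 compatible wheels being available for its dependencies.

```python
import venv
from pathlib import Path


def make_env(env_dir, with_pip=True):
    """Create a virtual environment, roughly what `python -m venv env_dir` does."""
    env_dir = Path(env_dir)
    # with_pip=True bootstraps pip into the new environment via ensurepip.
    venv.create(env_dir, with_pip=with_pip)
    # pyvenv.cfg records which base interpreter the environment was built from.
    return env_dir / "pyvenv.cfg"
```

Calling `make_env("my_env")` and then running that environment's own `pip install ivadomed` would be the conda-free equivalent of the setup described above.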