k3s doesn't work behind corporate proxy #194

Comments

|

I see the following after installing k3s in a VM behind a corporate firewall:

-bash-4.2$ k3s kubectl get pods -n kube-system |

|

This doesn't seem to be a k3s issue at all. References: |

Does it work? |

|

I tried adding the HTTP_PROXY/HTTPS_PROXY environment variables to the k3s service, but that does not work. I also tried adding them to the docker service and running the agent with the "--docker" flag, with no luck.

#> cat /etc/systemd/system/docker.service.d/http-proxy.conf

[Service]

Environment="HTTP_PROXY=http://corporate.proxy:10754"

Environment="HTTPS_PROXY=http://corporate.proxy:10754"

Environment="NO_PROXY=localhost,127.0.0.1"

#> systemctl daemon-reload && systemctl restart docker && systemctl show --property=Environment docker

Environment=HTTP_PROXY=http://corporate.proxy:10754 HTTPS_PROXY=http://corporate.proxy:10754 NO_PROXY=10.43.0.1

#> docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

ab939ee831ca 2ee68ed074c6 "/coredns -conf /etc…" 50 seconds ago Up 49 seconds k8s_coredns_coredns-7748f7f6df-dwm9n_kube-system_2cd74aaf-4956-11e9-b107-000c2928d9f1_367

[...]

#> docker logs ab939ee831ca

E0319 10:20:07.777680 1 reflector.go:205] github.com/coredns/coredns/plugin/kubernetes/controller.go:317: Failed to list *v1.Endpoints: Get https://10.43.0.1:443/api/v1/endpoints?limit=500&resourceVersion=0: dial tcp 10.43.0.1:443: connect: no route to host

E0319 10:20:09.781958 1 reflector.go:205] github.com/coredns/coredns/plugin/kubernetes/controller.go:322: Failed to list *v1.Namespace: Get https://10.43.0.1:443/api/v1/namespaces?limit=500&resourceVersion=0: dial tcp 10.43.0.1:443: connect: no route to host

.:53

2019-03-19T10:20:11.775Z [INFO] CoreDNS-1.3.0

2019-03-19T10:20:11.775Z [INFO] linux/amd64, go1.11.4, c8f0e94

CoreDNS-1.3.0

linux/amd64, go1.11.4, c8f0e94

2019-03-19T10:20:11.775Z [INFO] plugin/reload: Running configuration MD5 = 3ef0d797df417f2c0375a4d1531511fb

E0319 10:20:11.785788 1 reflector.go:205] github.com/coredns/coredns/plugin/kubernetes/controller.go:322: Failed to list *v1.Namespace: Get https://10.43.0.1:443/api/v1/namespaces?limit=500&resourceVersion=0: dial tcp 10.43.0.1:443: connect: no route to host

I don't know where the 10.43.0.1 IP comes from, though...

#> ip addr

3: docker0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:a8:c0:7f:1d brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:a8ff:fec0:7f1d/64 scope link

valid_lft forever preferred_lft forever

4: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether d6:64:f6:18:3f:25 brd ff:ff:ff:ff:ff:ff

inet 10.42.0.0/32 brd 10.42.0.0 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::d464:f6ff:fe18:3f25/64 scope link

valid_lft forever preferred_lft forever |

|

Also see /issues/152 |

|

Make sure the node can resolve DNS against the IPv4 address 1.1.1.1. On the host, run: host www.cnn.com 1.1.1.1 If that does not work, your network is blocked from reaching the DNS server at 1.1.1.1, and coredns/flannel/traefik will not install properly. |
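The DNS check described in this comment can be wrapped in a small helper. This is a minimal sketch, assuming the `host` utility is installed; the `HOST_CMD` override is a hypothetical convenience that is not part of the thread, and a failure can also mean that the lookup tool itself is missing rather than that the resolver is blocked.

```shell
#!/bin/sh
# Sketch: report whether a name can be resolved through a given DNS server.
# HOST_CMD is a hypothetical override (not from the thread) so the lookup
# tool can be swapped out; it defaults to the `host` utility.
check_dns() {
    name="$1"       # hostname to resolve, e.g. www.cnn.com
    resolver="$2"   # DNS server to query, e.g. 1.1.1.1
    if "${HOST_CMD:-host}" "$name" "$resolver" >/dev/null 2>&1; then
        echo "reachable"
    else
        # Either the resolver is blocked or the lookup tool failed.
        echo "unreachable"
    fi
}

# Usage: check_dns www.cnn.com 1.1.1.1
```

If the helper prints "unreachable" for 1.1.1.1 but "reachable" for a company DNS server, that would be consistent with the blocked-resolver explanation given in this comment.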

|

Had the same problem. I installed k3s without deploying coredns and traefik, got the plans from git and manually deployed them with my company's DNS server IPs instead of 1.1.1.1. Would be nice if the DNS servers to be used were configurable. Perhaps in the same way as mentioned here: #166 |

|

Thanks @ThomasADavis & @fuero, this info saved me a lot of time trying to reproduce the issue. We have created a release candidate v0.3.0-rc3 which will hopefully fix these DNS issues. Please try it out and let me know if it helps with the proxy settings! The settings are configurable in that we will either take a |

|

@erikwilson Nice - I'll try it next week when I'm back at work. Keep up the good work! |

|

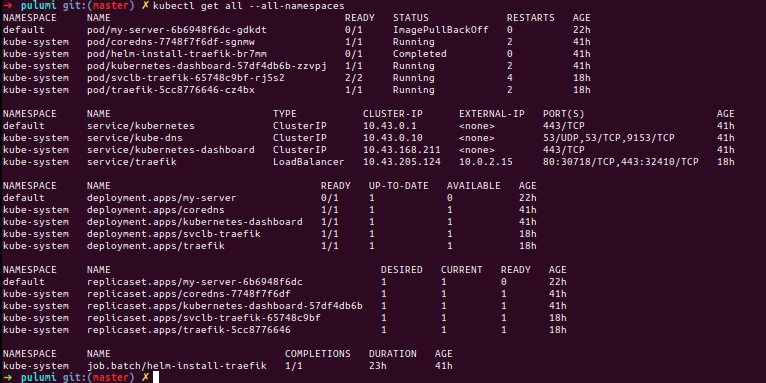

With v0.3.0, after adding proxy settings to .

$ kubectl get pods --namespace kube-system

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-7748f7f6df-dbszl 1/1 Running 0 90m

kube-system helm-install-traefik-nsbmc 0/1 Completed 0 90m

kube-system svclb-traefik-6d68977fbc-tdw6j 2/2 Running 0 5m51s

kube-system traefik-5cc8776646-rghgj 1/1 Running 0 5m51s |

|

The following works when running with vagrant behind a proxy on alpine. Vagrant manages /etc/environment through the proxy plugin, so the advantage is that I have just one place to manage the proxy. |

|

Can you test it out? @AWDDude @ankurshah7 @tfabien @fuero With v0.3.0, we've added support for air-gap installs (https://github.com/rancher/k3s/#air-gap-support) and introduced the ability to configure DNS. Per @erikwilson

|

|

Shouldn't need docker @jeusdi, it looks like the other images pulled down okay unless you used the air-gap tar? What is the result of |

|

Are you using air-gap images @jeusdi? How are you configuring your proxy? Might be best to create a new issue or hop on the rancher/k3s slack channel for more help. |

|

I don't know which type of images I'm requesting right now. I'm deploying using I tested the behavior without being behind a proxy, and the images were pulled down correctly. It seems to be a proxy-related issue. |

|

I've just added proxy configuration at It seems k3s is able to pull down images now. Which container runtime does k3s use? |

I am also facing the same issue. How did you resolve it in your workspace?

|

When is edit than execute |

|

We have the same issue. The configuration file UPDATED ON 7 June 2020: I tried reinstalling k3s with the environment variables below set. After that, my k3s cluster is running correctly. |

I am having the same problem. Can you please clarify how you reinstalled K3s and passed these environment variables to it at the same time?

|

If there are any HTTP proxy related variables in the user's environment during installation, they will be inherited and written by the installer to the file Example k3s.service.env: |
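The inheritance behaviour described in this comment can be pictured with a short sketch. It only mimics the idea (copy proxy variables from the current environment into a `KEY=value` file) and is not the installer's actual code; the function name and the target path argument are hypothetical.

```shell
#!/bin/sh
# Sketch only: mimics the behaviour described in the comment, it is not the
# installer's actual code. Copies any proxy variables found in the current
# environment into an env file in KEY=value form.
write_proxy_env() {
    env_file="$1"    # hypothetical target path, chosen by the caller
    : > "$env_file"  # start from an empty file
    for var in HTTP_PROXY HTTPS_PROXY NO_PROXY http_proxy https_proxy no_proxy; do
        # Indirect variable lookup that works in plain POSIX sh.
        eval "value=\${$var:-}"
        if [ -n "$value" ]; then
            printf '%s=%s\n' "$var" "$value" >> "$env_file"
        fi
    done
}

# Usage (scratch path, so nothing on the system is touched):
#   export HTTP_PROXY=http://proxy.corp.com:8080/
#   write_proxy_env /tmp/k3s.service.env.example
```

In practice this means the proxy variables have to be exported in the shell that runs the installer, otherwise there is nothing to inherit.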

|

Hi guys, it seems k3s itself works, BUT none of the containers created through kubectl (whether containerd or docker is the runtime) are initialized with the http_proxy variable set. So, depending on the complexity of the deployed application, it is hard to understand where and why the deployment fails. I have tried for 3 days to have k3s automatically inject the proxy into every container upon creation, but did not manage to find a solution. Note that with plain docker it is possible to have every container configured with the proxy on startup: https://docs.docker.com/network/proxy. However, this configuration seems to be ignored by k3s even when using docker as the runtime. |

|

@gabrielecastellano There is no comparable feature in Kubernetes or K3s to pass HTTP proxy settings into user application pods. The canonical way to achieve this is by running a mutating webhook to inject environment variables when pods are created. This may just do the job: |
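For a single workload, a simpler (and different) route than a webhook is to set the variables on the Deployment directly with `kubectl set env`. The sketch below only prints the command so it can be reviewed first. The deployment name `my-app`, the proxy URL, and the NO_PROXY list are hypothetical placeholders; the 10.42.0.0/16 and 10.43.0.0/16 ranges assume the default k3s pod and service CIDRs, and not every client library honours CIDR entries in NO_PROXY.

```shell
#!/bin/sh
# Sketch: print a `kubectl set env` command that adds proxy variables to one
# Deployment. This is a per-workload alternative, not the webhook approach.
# All names and values passed in are placeholders chosen by the caller.
proxy_env_command() {
    deployment="$1"   # hypothetical Deployment name
    proxy_url="$2"    # proxy used for both HTTP and HTTPS traffic
    no_proxy="$3"     # comma-separated hosts/ranges that bypass the proxy
    printf 'kubectl set env deployment/%s HTTP_PROXY=%s HTTPS_PROXY=%s NO_PROXY=%s\n' \
        "$deployment" "$proxy_url" "$proxy_url" "$no_proxy"
}

# Prints the command for review; nothing is sent to the cluster here.
proxy_env_command my-app http://proxy.corp.com:8080/ \
    localhost,127.0.0.1,10.42.0.0/16,10.43.0.0/16
```

This has to be repeated per workload, which is exactly the overhead a mutating webhook avoids.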

|

@janeczku Thank you for your reply and the solution you provided. I am going to try this injector! |

Describe the bug

I have 2 Ubuntu VMs running a k3s cluster: vm1 is the master and vm2 only runs the agent. Both VMs have to route through a proxy to access the internet. I have set both the HTTP_PROXY and NO_PROXY environment variables, in my user context as well as in /etc/systemd/system/k3s.service.

The problem is almost all pods are stuck in a crash loop:

k3s kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7748f7f6df-d54td 1/1 Running 0 17m

coredns-7748f7f6df-gmjbk 1/1 Terminating 0 16h

helm-install-traefik-jg4v5 0/1 Terminating 173 16h

helm-install-traefik-p6ld7 0/1 CrashLoopBackOff 8 17m

Here are the logs from one of the traefik pods:

k3s kubectl logs helm-install-traefik-p6ld7 -n kube-system

--listen=127.0.0.1:44134 --storage=secret

Creating /root/.helm

Creating /root/.helm/repository

Creating /root/.helm/repository/cache

Creating /root/.helm/repository/local

Creating /root/.helm/plugins

Creating /root/.helm/starters

Creating /root/.helm/cache/archive

Creating /root/.helm/repository/repositories.yaml

Adding stable repo with URL: https://kubernetes-charts.storage.googleapis.com

[main] 2019/03/08 17:09:24 Starting Tiller v2.12.3 (tls=false)

[main] 2019/03/08 17:09:24 GRPC listening on 127.0.0.1:44134

[main] 2019/03/08 17:09:24 Probes listening on :44135

[main] 2019/03/08 17:09:24 Storage driver is Secret

[main] 2019/03/08 17:09:24 Max history per release is 0

Error: Looks like "https://kubernetes-charts.storage.googleapis.com" is not a valid chart repository or cannot be reached: Get https://kubernetes-charts.storage.googleapis.com/index.yaml: dial tcp 216.58.193.80:443: connect: connection refused

Here is the k3s.service file, which contains the proxy environment vars (NO_PROXY is not on its own line; it's a word-wrap issue):

cat /etc/systemd/system/k3s.service

[Unit]

Description=Lightweight Kubernetes

Documentation=https://k3s.io

After=network.target

[Service]

Environment="HTTP_PROXY=http://proxy.corp.com:8080/" "NO_PROXY=localhost,127.0.0.1,0.0.0.0,localaddress,corp.com,10.0.0.0/8,vm1,vm2"

ExecStartPre=-/sbin/modprobe br_netfilter

ExecStartPre=-/sbin/modprobe overlay

ExecStart=/usr/local/bin/k3s server

KillMode=process

Delegate=yes

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TasksMax=infinity

[Install]

WantedBy=multi-user.target

To Reproduce

Steps to reproduce the behavior:

Run k3s cluster behind corp firewall

Expected behavior

k3s to respect proxy environment vars or some other way to specify proxy configuration
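One way to keep proxy settings out of the main unit file is a systemd drop-in, in the same style as the docker http-proxy.conf shown in the comments. The helper below is a minimal sketch: the function name, the file name http-proxy.conf, and the example directory are assumptions, and it sets HTTPS_PROXY in addition to the HTTP_PROXY and NO_PROXY used in this report. It only affects the k3s service process, not the environment of pods such as helm-install-traefik.

```shell
#!/bin/sh
# Sketch: write a systemd drop-in that sets proxy variables for a unit.
# The drop-in directory is passed in so the sketch can be tried in a scratch
# directory first; the file name http-proxy.conf is an arbitrary choice.
write_proxy_dropin() {
    dropin_dir="$1"   # e.g. /etc/systemd/system/k3s.service.d (assumed path)
    proxy_url="$2"    # proxy used for both HTTP and HTTPS traffic
    no_proxy="$3"     # comma-separated hosts/ranges that bypass the proxy
    mkdir -p "$dropin_dir"
    cat > "$dropin_dir/http-proxy.conf" <<EOF
[Service]
Environment="HTTP_PROXY=$proxy_url"
Environment="HTTPS_PROXY=$proxy_url"
Environment="NO_PROXY=$no_proxy"
EOF
}

# Usage (scratch directory, so nothing on the system is touched):
#   write_proxy_dropin /tmp/k3s.service.d http://proxy.corp.com:8080/ localhost,127.0.0.1
```

After writing a real drop-in, `systemctl daemon-reload` followed by a restart of the service is needed for the new environment to take effect.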
