New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

PID controllers get messed up after a while #671

Comments

|

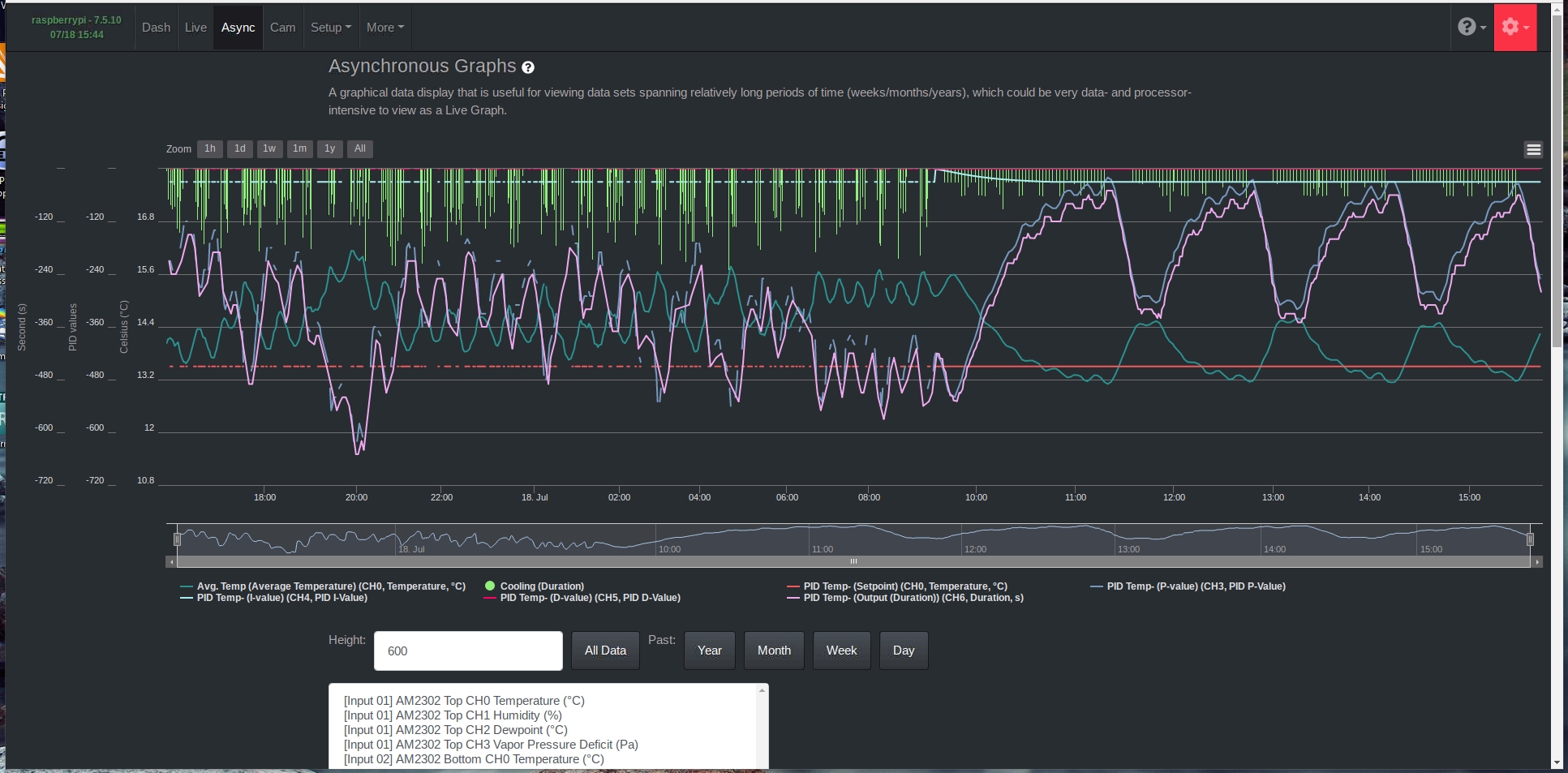

You can go to the asynchronous graph page and, for the offending PID, view the P, I, and D values for the PID, in addition to the PID duration output. This is the first bit of data that's needed to understand how the PID output is calculated. I would also include on this graph the Output duration itself to make sure it matches the PID output. Once we see that data for a period of time comprising good PID operation and bad PID operation, we can begin to understand what's happening. |

|

Also check the daemon log (ALL button) for any errors or strange lines at the time the PID(s) began acting strangely. Was it all PIDs that started doing this or one? |

|

Another data series to include on the asynchronous graph I mentioned previously is the measurement the PID uses as input and the setpoint. |

|

3 out of five PID controllers where acting up, the other 2 haven't produced any output the past time because no heating or humidifying where needed. Here are the graphs: In the log I see a lot of these errors during the period things where messed up: "RPyC timed out. To prevent this error, increase the RPyC Timeout value in the configuration menu" These errors stopped after restarting the backend. |

|

I just noticed that I used the average RV over 60 minutes as input in the 2 RV graphs, this actually should be a different input, average RV "live". I hope this won't be an issue for interpreting what went wrong? |

|

Can you run one of the PID controllers that suffers from the issue in debug mode (Input option Log Level Debug)? |

|

In fact, this will help the most. In addition to enabling debug mode on one of the affected PID controllers (and activating it), could you also do this:

This will start the daemon in debug mode, which will provide a lot of potentially useful information when the error occurs. Upgrading to master is needed because I just pushed code that's not available in a release that includes debug logging lines about the RPyC process. Keep in mind this will generate a lot of log information. You may want to add the Free Space Input to monitor your micro SD card free space and notify you if it starts to run low. Logrotate will take care of keeping the log sizes manageable, but you may have to go to where they're stored if you don't notice soon after the error occurs and logrotate has moved the pertinent log info to an archive (if the length of time from the error occurring and you getting to the logs being so long that logrotate has archived the log in the meantime). Let me know if you can do this and if you have any questions. When in debug mode, the daemon will function exactly as it would normally, but there will just be more log lines. |

|

How long does it typically take for the error to occur? |

|

Since my setup is currently in use I'd rather not update at the moment. I'll put onde of the PID controllers in debug mode for now. There's no typical period after which the errors start occuring. It can be days, hours or weeks. |

|

Did you have the Mycodo RAM Input running at the time and can look at what the RAM usage was at the time the issue began? |

|

Unfotunately not. At the moment the temperature PID looks like its keeping the cooling output almost constantly high however the temperature never reaches the Band Min setpoint. Instead the temperature is regulating just above the Band Max setpoint within a span of 0.5 degrees C. I guess my next step is to check if the fridge is working properly, maybe the fridge thermostat is switching. This would be unwanted behaviour and also would be strange because I measured the min temperature at the current thermostat setting and that was almost 5 degrees below the currently set temperature in the PID controler. Another thing I noticed atm is that the output duration of each PID period with a min on time of 60 seconds gets split up in 49 seconds and 11 seconds instead of 60 seconds per PID period. |

|

In the meantime I found the problem with the continuous cooling. It was indeed the fridge thermostat switching too soon. I hope that all of the excess switching during the strange behaviour of the PID controller hasn't lead to unwanted wear of the compressor. At least now I can get back to trying to figure out why the PID controllers act up sometimes. |

|

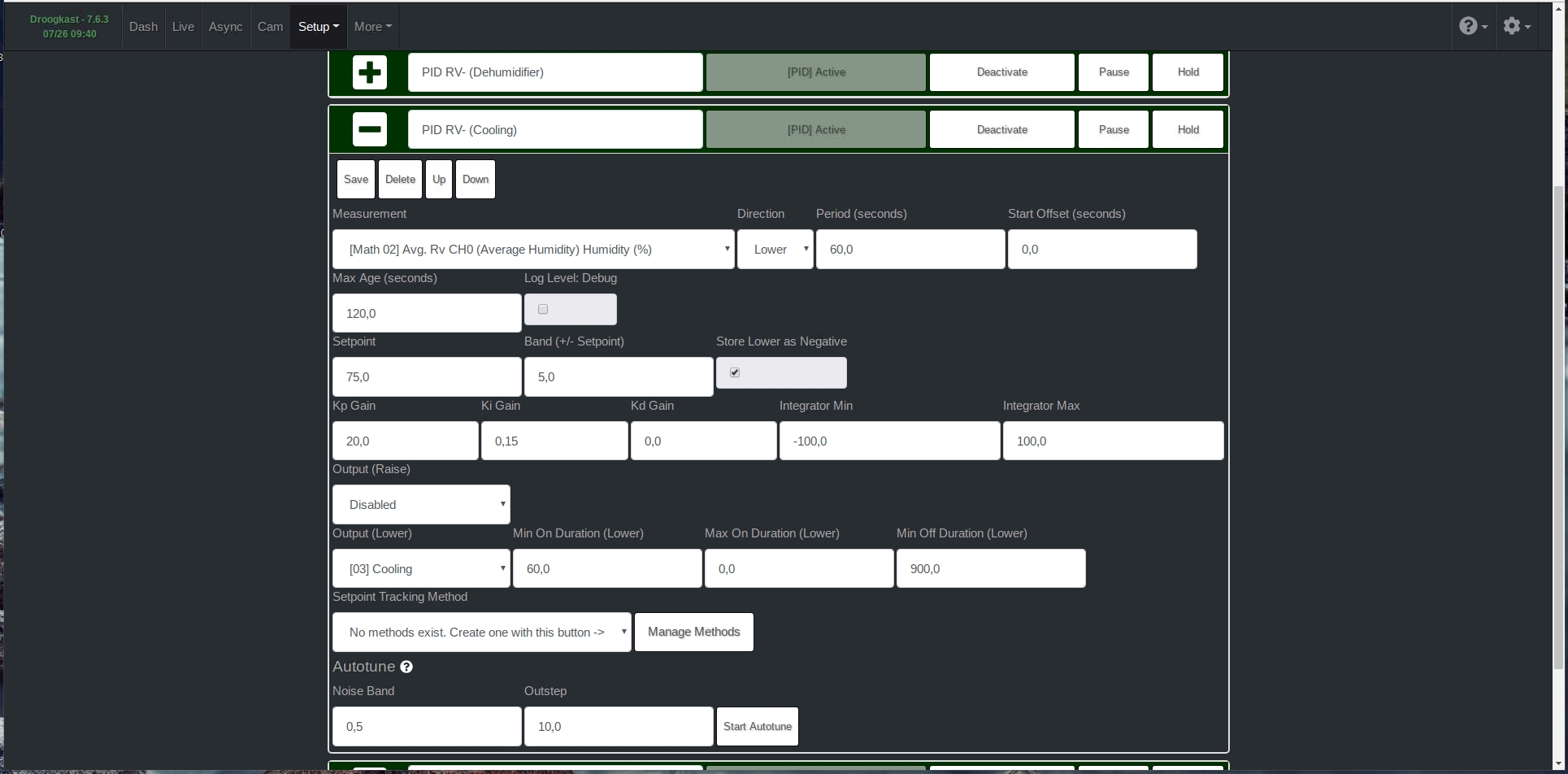

Can you take a screenshot of the configuration options for your PIDs that cause the issue? |

|

Something I'm confused about is your PID periods are all 60 seconds, which means no output used by any of these PIDs can turn on for more than 60 seconds (by these PIDs). However, I see in your initial post the outputs are turning on for far longer than 60 seconds (prior to the 'issue' starting). Do you have something else turning on these outputs besides the PIDs? |

|

Sorry, I wrote that before realizing how the PIDs actually work (excuse my just-woken-up brain). The PIDs can keep an output on continually (past the PID period), though I now have an idea for what's causing your PID behavior. I'll have to look at the code to determine where exactly it's occurring. |

|

Sorry for the late reply, I've been "offline" (read: vacation) for a while. Do you mean you think you can fix the issues? |

|

I added a debugging line to the PID controller, but I'm not sure if that's going to catch the issue. I had asked previously if you could look at the daemon log at the exact time the issue began. Can you try to bring those log lines up and paste the contents here, for just a few minutes before the issue started until a few minutes after the issue began? |

|

Unfortunately there is no specific time it begins, it just gradually does. All I can see in my Daemon error log is that I get a lot of "RPyC timed out .." errors Is it possible that this has something to do with the added ability to use the same output on 2 different PID controllers? I'm using 1 output on 2 PID controllers in my case |

|

What's more important than the error itself is what part of the system the error is coming from. Each log line has a string that identifies where the error originates. Therefore, it is crucial to have the exact log lines to analyze. Just knowing there is an error without knowing where it came from is not that useful.

An easy way to test would be to disable one of the PIDs. I don't recommend using the same output for more than one PID because there can be unforeseen issues. |

|

Here's a few lines from the past days: As you can see it involves multiple PID controllers and one conditional statement (I only have 1) Using the same output for 2 PID controllers is a must have in my case but I can test what happens if I disable one of them. Edit: This is the code of the conditional statement, the actions are set PWM's. I'm running my fans at very low duty cycle so this is just to make sure they keep running. #measurements

inlet_rpm = measure("{4aa60edd}")

outlet_rpm = measure("{948428bf}")

#Conditional Inlet Fan RPM

if inlet_rpm < 100:

run_action("{3ad209a7}")

else:

run_action("{0c5ed710}")

#Conditional Outlet Fan RPM

if outlet_rpm < 100:

run_action("{ecc3b38e}")

else:

run_action("{4d2f3b63}") |

|

I had one of my production systems experience this issue twice in the past 2 weeks (RPyC: "result timeout"). So, I'm exploring other RPC options, such as Pyro4. I've successfully replaced the code, and am currently running a stability test. |

|

"Good" to hear that it's not just me. |

|

I haven't seen any issues with the new communication system. If you would like to try it and see if your issues persist, you can upgrade to master. |

|

Thanks! I will upgrade to master asap. In the meantime I've raised the RPyC timout to 60 and this seems to delay the occurance of the issues but after a few days they still turn up slowly. |

|

I get the following error when trying to upgrade to master. I'm currently in production so I restored back to 7.6.3 for now because I don't want too much down time. Edit: |

|

This may be a result of previously upgrading to master, then now upgrading to master again. The master branch changes, so repeated upgrades to master may cause issues. Can you restore to the backup that was made of your system just prior to upgrading to master the first time, then upgrade to master? All settings are preserved when upgrading to master, and a backup is also made just as if you were upgrading to a release. |

|

Can you look at the full upgrade log for the error that caused the error you pasted to be returned? |

|

I didn't upgrade to master earlier, this was my first try. Here's the full log of yesterdays attempt: Thank you so much for all the effort you are putting into this! |

Exactly the error I suspected, which was likely the result of previously upgrading to master. If you follow the instructions in my previous comment, you should be able to upgrade successfully:

|

|

unfortunately I'm still getting the same error |

|

The latest release, 7.7.1, has switched to Pyro4. If you upgrade, let me know if your issue persists. |

|

Thank you, I just updated and will let you know how it goes. |

|

Oh wow. I took a closer look at the graphs and that is a lot better. I take it by no new messages since you updated that you haven't had any problems. When I refactored Mycodo to use Pyro4 instead of RPyC, I didn't know there was a Pyro5 that existed. So, this latest version release (7.7.3) I've upgraded Pyro4 to Pyro5. I'm not sure if there will be any further noticeable performance improvements, but maybe ;) |

|

I think the performance improvement you saw was related to the messages being transmitted faster. For whatever reason, with RPyC, sometimes communication between process could take several seconds! Definitely not good for things like turning on outputs from PIDs. Seeing your data makes all that work worth it. I rarely see such an obvious performance improvement. |

|

Had some issues with the PID controllers not working at all in version 7.7.3 but after upgrading to 7.7.4 it was all working again and it's still looking good so far. There is some offset in the resulting values but I reckon I need to re-tweak the PID controllers for that. |

|

How has Pyro been treating your Mycodo? |

|

I don't mean to be a pain, but I'm eager to know if your issue has reemerged since the RPC change from RPyC to Pyro. If all has been good, I think we can finally close this issue. |

|

Sorry for the late reply. I've been busy with a lot of things except for my curing chamber. On top of that I'm currently having VNC issues with my r-pi but I had a good chance to test it after you implemented it. After switching to Pyro4 it got a lot better, as you have seen on the screenshots I posted earlier. After the update to Pyro5 I was under the impression that it got a bit less stable than with version 4 but still a lot better. The lesser results might also have something to do with lesser products in the fridge. |

|

Time to close this one! Finally got to sorting my VNC issues and monitored the behaviour for a short while. Looking good! Thank you for all the effort that went into this! |

Mycodo Issue Report:

Problem Description

PID issues

Errors

Once in a while I have to restart the Mycodo backend because my PID controllers get messed up

Steps to Reproduce the issue:

I can't reproduce it in a specific way, it just happens once in a while.

After rebooting the backend it starts running properly again.

Screenshot:

You can clearly see the strange behaviour before rebooting the backend (just after 9.30) and correct behaviour afterwards

p.s. I don't know if this info is relevant for the issues but have 5 PID controllers in total:

Cooling (Fridge)

Heating (Heater, seperate from cooling because it needs very different settings)

RV- 1 (Cooling to lower humidity)

RV -2 (Dehumidifier to lower humidity)

RV+ (Humidifier, seperate from both RV- because it needs very different settings)

I use both the dehumidifier as well as the fridge for lowering RV because it produces an overall better result.

The text was updated successfully, but these errors were encountered: