How to use read multiple / write multiple #319

Comments

|

New version 0.2.227 is now available |

|

Hi @mikakaraila What should be put in the payload to use READ MULTIPLE? An array of NodeId? |

|

Please look at the example in OPCUA-TEST-NODES.json; there should be an example on one of the tabs. |

|

Hello, thanks for this useful node. I was checking the example, and I managed to trigger a multiple read. The output, however, is a series of separate messages for each parameter, as if I read them sequentially, but with no index that can be used with the Join node afterwards. Is this the normal behaviour? |

|

Did you check the topic of the msg? |

|

I tested injecting "readAll" twice to read them again. No problems. Do you inject anything else into the client node? |

|

Something like this? The function node uses context to save each variable and then combines them into newmsg. var variableName = msg.topic.nodeId.toString(); // Use # as delimiter names = variables.split("#"); console.log("Names:" + JSON.stringify(names)); if (names.length == 2) {
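A minimal sketch of the collect-and-combine idea described above, written as plain JavaScript so it can be run outside Node-RED. It assumes each incoming message carries the nodeId string in `msg.topic` and the value in `msg.payload`. The names `expectedTopics`, `collectReading`, and `machine_speed`, and the `Map` standing in for the function node's context, are hypothetical and not taken from the original flow.

```javascript
// Hypothetical list of nodeIds to wait for before emitting a combined message.
const expectedTopics = ["ns=1;s=machine_started", "ns=1;s=machine_speed"];

// Stores one reading and returns a combined message once every expected
// topic has been seen; otherwise returns null (a function node that
// returns null sends nothing).
function collectReading(store, msg) {
  store.set(String(msg.topic), msg.payload);
  const complete = expectedTopics.every((topic) => store.has(topic));
  if (!complete) {
    return null;
  }
  const combined = {};
  for (const topic of expectedTopics) {
    combined[topic] = store.get(topic);
  }
  store.clear(); // start collecting the next round
  return { topic: "combined", payload: combined };
}

// Usage: a Map plays the role of the function node's context here.
const store = new Map();
const first = collectReading(store, { topic: "ns=1;s=machine_started", payload: true });
const second = collectReading(store, { topic: "ns=1;s=machine_speed", payload: 42 });
console.log(first, second);
```

Keying the store by topic means the order in which the individual readings arrive does not matter.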

My Clear node array is injected once upon deploying/restarting the flows. Then the inject nodes inject the variables once, automatically. I didn't know if you had an internal queue, so I gave them 500 ms between injects to make sure the node can process each message before sending the next one. My server currently is down due to power issues, but I'll share the flow when it's up again. |

|

OK, I will check whether clearing the array causes a problem. |

|

If I clear the reading queue every time, add all the nodes, and trigger the multiple read, it works. Otherwise, it doesn't. |

|

But the error seems to be in the mssql interface?

ConnectionError: Connection is closed.
    at Request._query (/root/.node-red/node_modules/mssql/lib/base/request.js:495:37)
    at Request._query (/root/.node-red/node_modules/mssql/lib/tedious/request.js:367:11)
    at /root/.node-red/node_modules/mssql/lib/base/request.js:459:12
    at new Promise ()
    at Request.query (/root/.node-red/node_modules/mssql/lib/base/request.js:458:12)
    at connection.node.execSql (/root/.node-red/node_modules/node-red-contrib-mssql-plus/src/mssql.js:423:40)
    at doSQL (/root/.node-red/node_modules/node-red-contrib-mssql-plus/src/mssql.js:779:25)
    at /root/.node-red/node_modules/node-red-contrib-mssql-plus/src/mssql.js:765:17
    at runMicrotasks ()
    at runNextTicks (internal/process/task_queues.js:60:5)
    at processImmediate (internal/timers.js:437:9) { code: 'ECONNCLOSED' } |

|

My OPC-UA flow is completely unrelated to the mssql interface, it is not connected to it and it does not depend on it. If there is a problem with the MSSQL, it is from another group of machines, that supplier made a mess with their DB. In any case, I still can read it. The problem I have now is that I do not have an efficient way of reading multiple variables from the OPC-UA interface for these other production lines. In order to do so, I still have to clear and initialize the variables every single time. |

|

I mean, after my second inject with "readmultiple", my catch error node focused on the OPCUA client node generates an output where the error message is: |

|

Hmm, I cannot check the code line because you are running an older version (102-opcuaclient.js: 730). The input msg.topic should be a nodeId string; machine_started is not a valid nodeId because it doesn't have ns=1;s= in front. Can you install the latest version and rerun, so that the error message shows the correct code line? |

|

machine_started is the name of the node where the error occurs: It is also the first variable in the list. I already updated to the last version and same thing happens. This is the output of the catch error node in node-red: Stands to reason that if I had configured the OPC-UA node tag in the wrong way, it would never be able to read the values. As it is now, it works just ONCE after adding the variables. If I then clear the list and add the variables again, I can read them another time, but only once. So in order to work, my flow should be like this:

|

|

It looks to me as if the flow mixes msg.topic and msg.name. Can you check your flow? |

|

Can you add a debug node to the flow that shows everything injected into the client node? The readmultiple action stores every msg with a topic in the array, so the actual session.read(items) call will crash if anything other than a valid nodeId is stored in the items. I can add an extra check there to validate that each msg.topic is a valid nodeId. I will do this later today together with another fix. |
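The kind of check mentioned above could be approximated in a function node placed before the client node. This is only a sketch under assumptions: the regular expression is a simplified approximation of the textual NodeId form (`ns=<index>;` followed by `i=`, `s=`, `g=` or `b=`), not the parser the client node itself uses, and `isLikelyNodeId` and `partitionTopics` are hypothetical helper names.

```javascript
// Simplified pattern for the textual NodeId form: an optional "ns=<index>;"
// prefix followed by i= (numeric), s= (string), g= (GUID) or b= (opaque).
const NODE_ID_PATTERN = /^(ns=\d+;)?(i=\d+|s=.+|g=[0-9a-fA-F-]{36}|b=.+)$/;

// Returns true only for strings that look like a NodeId.
function isLikelyNodeId(topic) {
  return typeof topic === "string" && NODE_ID_PATTERN.test(topic);
}

// Splits queued topics into those that look readable and those to reject.
function partitionTopics(topics) {
  const valid = [];
  const invalid = [];
  for (const topic of topics) {
    if (isLikelyNodeId(topic)) {
      valid.push(topic);
    } else {
      invalid.push(topic);
    }
  }
  return { valid, invalid };
}

const result = partitionTopics(["ns=1;s=machine_started", "machine_started", "readmultiple"]);
console.log(result);
```

Filtering before the read call keeps a stray topic such as a bare variable name from reaching the array of items that is read in one request.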

|

Ack, good that you found it. I have Digital Twin work in progress. I will make only the needed fixes to node-red-contrib-opcua. |

|

OK, I will take a look at that node to see whether I could add a similar node to handle read/write multiple. Question: which S7 package contains the "Variable list" feature? |

node-red-contrib-s7 p.s. thanks for your work <3 |

|

@OriolFM can you explain / give some coordinates for the "Variable list"? I could implement something similar with the node crawler. Then it would be easier to select a nodeId from the list to read/write.

The S7 node endpoints have a list where you can already specify which variables you want to read. It looks like this:

In the past, you had to define them yourself one by one, but in more recent versions they added the Import/export buttons, allowing the endpoint node to read or write CSV files where the memory addresses, variable type, and offset are stored, as well as a variable name, so the PLC node will already produce an output with a human-readable variable name. This is an example of a CSV file I'm currently using:

This saves a lot of work, being able to define the variables you read from each endpoint and the meaningful variable name you want to use (as opposed to the address + variable type + offset). I estimate that about 75% of the time I spent working with the OPC-UA nodes went into getting the output from the node, looping through the items array, comparing it to a whole list of nodes and variable names, assigning the variable name if the item name string contained a specific substring, and then taking those output arrays with variable names and converting them to a JSON object sorted by modules (the machine is split into different modules, so I separate the variables for each one of them).

The only issue I have with the S7 node is that the variables you read from an endpoint are fixed. You can choose to read all of them at once, or only produce the values that changed since the last update (similar to a subscription). You can set up a regular interval for reading the variables, or you can use an S7 Control node to trigger an asynchronous read if you want, but each time you'll get either all variables or just the ones that have changed since last time, depending on which mode you selected. You can't choose to read the value of one specific variable, and only that one, if you configured 40 entries.

Your OPC-UA node is more versatile in that you can dynamically alter the variables you want to read each time, but sometimes it would be useful to just configure everything in the endpoint node and trigger the reads whenever you want (or put it in subscription mode and let it generate the output when a value from the list changes). The option of sending a manual request at the node input could still be there if the variable list was empty, so it would keep backwards compatibility, for instance. |
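The renaming work described above (matching each item against a list of substrings, then grouping the named values by machine module) can be sketched as follows. Everything here is illustrative: the `variableList` entries, the `{ nodeId, value }` item shape, and the nodeIds are assumptions, not the actual output format of the OPC-UA client node or the S7 CSV layout.

```javascript
// Hypothetical lookup table: substring of the nodeId -> module and friendly name.
const variableList = [
  { match: "Mixer.Temperature", module: "mixer", name: "temperature" },
  { match: "Mixer.Speed", module: "mixer", name: "speed" },
  { match: "Filler.Level", module: "filler", name: "level" },
];

// Turns an array of { nodeId, value } items into { module: { name: value } }.
function groupByModule(items) {
  const grouped = {};
  for (const item of items) {
    const entry = variableList.find((v) => item.nodeId.includes(v.match));
    if (!entry) continue; // skip items that are not in the list
    if (!grouped[entry.module]) grouped[entry.module] = {};
    grouped[entry.module][entry.name] = item.value;
  }
  return grouped;
}

const grouped = groupByModule([
  { nodeId: "ns=2;s=Line1.Mixer.Temperature", value: 71.5 },
  { nodeId: "ns=2;s=Line1.Filler.Level", value: 0.8 },
  { nodeId: "ns=2;s=Line1.Unknown", value: 1 },
]);
console.log(JSON.stringify(grouped));
```

Keeping the table in one place is the same idea as the S7 variable list: the mapping from address to readable name is configured once instead of being repeated in the flow logic.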

|

Hi @mikakaraila, looking at the examples, I cannot find a way to pass an array of nodeIds to be read. Is it possible? |

|

I found this example, which subscribes to multiple items by sending a nodeId array. |

|

I'm on the latest version of the library, but it does not work. I get the following error: it seems that |

|

I KNOW, as I wrote "I found this example which subscribes multiple by sending nodeId array." I just don't have time to check the read multiple example / interface... |

For subscription, is there any way to receive all the variables that have changed in a single array, instead of receiving n messages? I am reading several OPC-UA servers with over 200 nodes each, and the message overhead is taxing on my node-red server. Thanks! |

|

As far as I know, only read multiple returns all values in one array. A subscription is triggered only on value changes, so notifications arrive for individual items. This is normal OPC UA functionality. Only when the subscription is created is it possible to add all the items as an array. |

|

Then, what about adding the nodeIds in an array for Read Multiple, like in the Subscription example? Is it possible? I've been trying to add all the nodeIDs in a single array for my readmultiple application, but it is not working. At the moment, I have a lot of opc-ua item nodes (between 180-300) to read the data from several chemical production lines. Having so many forked wires increases the memory consumption of node-red and creates a lot of overhead. The main problem with this line is that almost all of the OPC-UA parameters are analog readings from sensors that change almost every time, so I get about 80-90% of all my parameters every time I read in subscription mode. That generates a lot of messages, and it is actually less costly (regarding memory consumption and speed of processing) to get a single array with read multiple in a single message than getting the subscribed parameters one by one in separate messages. Also, processing the parameters is easier if you have all of them in a single array and convert it to a JSON object with named properties, that you can use with a simple change node to overwrite the context object that holds the information and you display on the dashboard. With subscription, the code is more complex, it is necessary to update each single property of the context object separately, requiring more logic, processing power and steps. |

|

OK, I'm just back home from SPS. I will add an option to use the payload as an array of nodeIds. It is a "missing" feature of readmultiple. |

|

Thank you very much! I was wondering about that :) I'll give it a try when it's ready and let you know how it goes. |

|

And it works :-) I will publish the new v0.2.292 in a few minutes. |

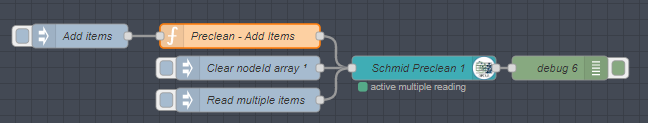

I tested it, followed these steps:

So actually, I'd say it works fine, except for returning all these individual messages when the array is processed. I think it should either send nothing when the array is processed, or send the normal array once all the nodeIDs have been added. Thank you for your help! Do you have a Ko-Fi page or equivalent? :) |

|

What do you mean by this comment? "except for returning all these individual messages when the array is processed" I don't have a Ko-Fi page; I have done everything for free :-) I just enjoy helping people and solving problems. It is a kind of hobby for me. The code will always clear the items when an array of nodeIds is provided in the payload. I actually added only 8 lines of code for this feature, in a way that is still compatible with earlier releases. |

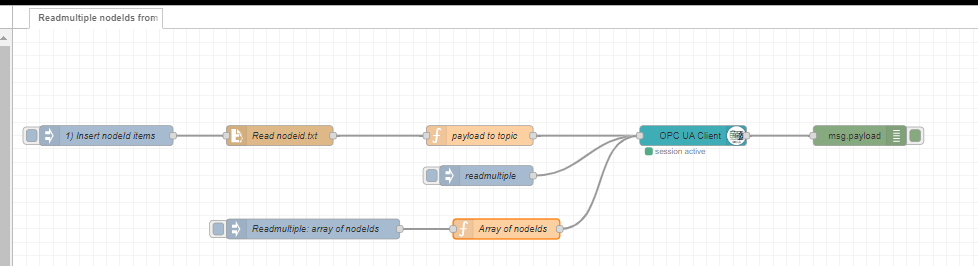

I added an example; look at this:

Flow:

Items in normal way:

Client action:

And import inject with Topic: readmultiple

Write multiple works the same way.
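Based on the description in this thread (topic `readmultiple`, payload as an array of nodeIds), a function node feeding the client node might build its message roughly like this. It is a sketch under assumptions: `buildReadMultiple` is a hypothetical helper, the nodeIds are made up, and whether the client node's action also has to be set a particular way is not shown here, so check the published example flow for the exact configuration.

```javascript
// Builds the message to send to the client node. Per the thread, the topic is
// "readmultiple" and the payload is an array of nodeId strings.
function buildReadMultiple(nodeIds) {
  if (!Array.isArray(nodeIds) || nodeIds.length === 0) {
    throw new Error("Expected a non-empty array of nodeId strings");
  }
  return { topic: "readmultiple", payload: nodeIds.map(String) };
}

// Hypothetical nodeIds; inside a function node you would `return` this message.
const readMsg = buildReadMultiple(["ns=1;s=machine_started", "ns=1;s=machine_speed"]);
console.log(readMsg);
```

Because the items are cleared whenever an array is provided, each such message fully defines the set of nodes to read, with no separate clear step.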
