Norm Matloff, Prof. of Computer Science, UC Davis; my bio

The site is for those who know nothing of R or even of programming, and seek a quick, painless entree to the world of R.

-

FAST: You'll already be doing good stuff -- useful data analysis --- in R in your very first lesson.

-

No prerequisites: If you're comfortable with navigating the Web, you're fine. This tutorial is aimed at you, not experienced C or Python coders.

-

Motivating: Every lesson centers around a real problem to be solved, on real data. The lessons do not consist of a few toy examples, unrelated to the real world. The material is presented in a conversational, story-telling manner.

-

Just the basics, no frills or polemics: Notably, no Integrated Development Environments (IDEs). RStudio, ESS etc. are great, but you shouldn't be burdened with learning R and learning an IDE at the same time. This can come later, optionally. Coverage is mainly limited to base R (the gplot2 graphics package will be an exception), so for instance the popular but self-described "opinionated" Tidyverse is not treated, partly due to its controversial nature but mainly because it would be a burden on the user to learn both base R and the Tidyverse at the same time.

-

Nonpassive approach: Passive learning, just watching the screen, is NO learning. There will be occasional Your Turn sections, in which you the learner must devise and try your own variants on what has been presented. Sometimes the tutorial will be give you some suggestions, but even then, you should cook up your own variants to try.

- Overview and Getting Started

- First R Steps

- Lesson 2: More on Vectors

- Lesson 3: On to Data Frames!

- Lesson 4: R Factor Class

- Lesson 5: Data Cleaning

- Lesson 6: R List Class

- Lesson 7: Another Look at the Nile Data

- Pause to Reflect

- Lesson 8: Introduction to Base R Graphics

- Lesson 9: More on Graphics

- Lesson 10: Writing Your Own Functions

- Lesson 11: 'For' Loops

- Lesson 12: If-Else

- To Learn More

More lessons coming soon!

For the time being, the main part of this online course will be this README.md file. It is set up as a potential R package, though, and I may implement that later.

GPL-3: Permission granted to copy/modify, provided the source (N. Matloff) is stated. No warranties.

The color figure at the top of this file was generated by our prVis package, run on a famous dataset called Swiss Roll.

-

You will just be using R from the command line here. Most tutorials, say the excellent one by R-Ladies Sydney, start with RStudio. It is a very attractive, powerful IDE. But even the R-Ladies tutorial laments that RStudio can be "overwhelming." Here we stick to the R command line, and focus on data analysis, not advanced tools. At some point in your evolution as a programmer, you'll need to start using either an IDE or external text editor; this will be discussed in a later lesson.

-

Nonpassive learning is absolutely key! So even if the output of an R command is shown here, run the command yourself in your R console, by copy-and-pasting from this document.

-

Similarly, the Your Turn sections are absolutely crucial. Devise your own little examples, and try them out! "When in doubt, Try it out!" This is a motto I devised for teaching. If you are unclear or curious about something, try it out! Just devise a little experiment, and type in the code. Don't worry -- you won't "break" things.

-

Also similarly: I cannot teach you how to program. I can merely give you the tools, e.g. R vectors, and some examples. For a given desired programming task, then, you must creatively put these tools together to attain the goal. Treat it like a puzzle! I think you'll find that if you stick with it, you'll find you're pretty good at it. After all, we can all work puzzles.

You'll need to install R, from the R Project site. Start up R, either by clicking an icon or typing 'R' in a terminal window.

As noted, this tutorial will be "bare bones." In particular, there is no script to type your command for you. Instead, you will either copy-and-paste from the test here, or type by hand. (Note that the code lines here will all begin with the R interactive prompt, '>'; that should not be typed.)

This is a Markdown file. You can read it right there on GitHub, which has its own Markdown renderer, or on your own machine in Chrome using the Markdown Reader extension (be sure to enable Allow Access to File URLs). RStudio also has a built-in Markdown reader, though again I suggest holding off on IDEs for now.

The R command prompt is '>'. It will be shown here, but you don't type it.

So, just type '1+1' then hit Enter. Sure enough, it prints out 2:

> 1 + 1

[1] 2But what is that '[1]' here? It's just a row label. We'll go into that later, not needed yet.

R includes a number of built-in datasets, mainly for illustration purposes. One of them is Nile, 100 years of annual flow data on the Nile River.

Let's find the mean flow:

> mean(Nile)

[1] 919.35Here mean is an example of an R function, and in this case Nile is an argument -- fancy way of saying "input" -- to that function. That output, 919.35, is called the return value or simply value. The act of running the function is termed calling the function.

Another point to note is that we didn't need to call R's print function. We could have,

> print(mean(Nile))but whenever we are at the R '>' prompt, any expression we type will be printed out.

Since there are only 100 data points here, it's not unwieldy to print them out:

> Nile

Time Series:

Start = 1871

End = 1970

Frequency = 1

[1] 1120 1160 963 1210 1160 1160 813 1230 1370 1140 995 935 1110 994 1020

[16] 960 1180 799 958 1140 1100 1210 1150 1250 1260 1220 1030 1100 774 840

[31] 874 694 940 833 701 916 692 1020 1050 969 831 726 456 824 702

[46] 1120 1100 832 764 821 768 845 864 862 698 845 744 796 1040 759

[61] 781 865 845 944 984 897 822 1010 771 676 649 846 812 742 801

[76] 1040 860 874 848 890 744 749 838 1050 918 986 797 923 975 815

[91] 1020 906 901 1170 912 746 919 718 714 740Now you can see how the row labels work. There are 15 numbers per row, here, so the second row starts with the 16th, indicated by '[16]'.

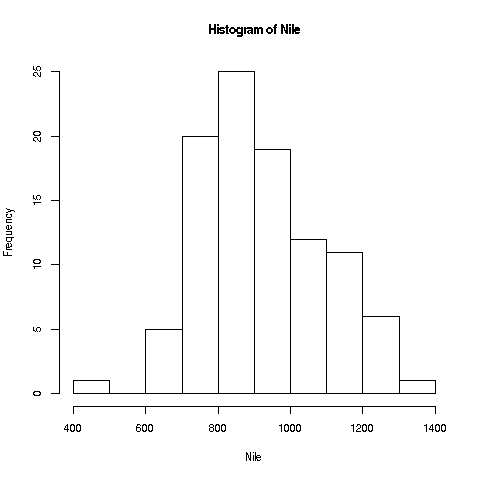

R has great graphics, not only in base R but also in user-contributed packages, such as ggplot2 and lattice. But we'll start with a very simple, non-dazzling one, a no-frills histogram:

> hist(Nile)No return value for the hist function (there is one, but it is seldom used, and we won't go into it here), but it does create the graph.

Your Turn: The hist function draws 10 bins in the histogram by default, but you can choose other values, by specifying a second argument to the function, named breaks. E.g.

> hist(Nile,breaks=20)would draw the histogram with 20 bins. Try plotting using several different large and small values of the number of bins.

Note: The hist function, as with many R functions, has many different options, specifiable via various arguments. For now, we'll just keep things simple.

R has lots of online help, which you can access via '?'. E.g. typing

> ?histwill tell you to full story on all the options available for the hist function. Note, there are far too many for you to digest for now (most users don't ever find a need for the more esoteric ones), but it's a vital resource to know.

Your Turn: Look at the online help for mean and Nile.

Now, one more new thing for this first lesson. Say we want to find the mean flow after year 1950. Since 1971 is the 100th year, 1951 is the 80th. How do we designate the 80th through 100th elements in the Nile data?

First, note that a set of numbers such as Nile is called a vector. Individual elements can be accessed using subscripts or indices (singular is index), which are specified using brackets, e.g.

> Nile[2]

[1] 1160for the second element (which we see above is indeed 1160).

The value 2 here is the index.

The c ("concatenate") function builds a vector, stringing several numbers together. E.g. we can get the 2nd, 5th and 6th:

> Nile[c(2,5,6)]

[1] 1160 1160 1160And 80:100 means all the numbers from 80 to 100. So, to answer the above question on the mean flow during 1951-1971, we can do

> mean(Nile[80:100])

[1] 877.6667If we plan to do more with that time period, we should make a copy of it:

> n80100 <- Nile[80:100]

> mean(n80100)

[1] 877.6667

> sd(n80100)

[1] 122.4117The function sd finds the standard deviation.

Note that we used R's assignment operator here to copy ("assign") those particular Nile elements to n80100. (In most situations, you can use "=" instead of "<-", but why worry about what the exceptions might be? They are arcane, so it's easier just to always use "<-". And though "keyboard shortcuts" for this are possible, again let's just stick to the basics for now.)

We can pretty much choose any name we want; "n80100" just was chosen to easily remember this new vector's provenance. (But names can't include spaces, and must start with a letter.)

Note that n80100 now is a 21-element vector. Its first element is now element 80 of Nile:

> n80100[1]

[1] 890

> Nile[80]

[1] 890Keep in mind that although Nile and n80100 now have identical contents, they are separate vectors; if one changes, the other will not.

Your Turn: Devise and try variants of the above, say finding the mean over the years 1945-1960.

Another oft-used function is length, which gives the length of the vector, e.g.

> length(Nile)

[1] 100Leave R by typing 'q()' or ctrl-d. (Answer no to saving the workspace.)

Continuing along the Nile, say we would like to know in how many years the level exceeded 1200. Let's first introduce R's sum function:

> sum(c(5,12,13))

[1] 30Here the c function ("concatenate") built a vector consisting of 5, 12 and 13. That vector was then fed into the sum function, returning 5+12+13 = 30.

By the way, the above is our first example of function composition, where the output of one function, c here, is fed as input into another, sum in this case.

We can now use this to answer our question on the Nile data:

> sum(Nile > 1200)

[1] 7But how in the world did that work? Bear with me a bit here. Let's look at a small example first:

> x <- c(5,12,13)

> x > 8

[1] FALSE TRUE TRUE

> sum(x > 8)

[1] 2First, R recycled that 8 into 3 8s, i.e. the vector (8,8,8), in order to have the same length as x. This sets up an element-by-element comparison. Then, the 5 was compared to the first 8, yielding FALSE i.e. 5 is NOT greater than 8. Then 12 was compared to the second 8, then 13 with the third. So, we got the vector (FALSE,TRUE,TRUE).

Fine, but how will sum add up some TRUEs and FALSEs? The answer is that R, like most computer languages, treats TRUE and FALSE as 1 and 0, respectively. So we summed the vector (0,1,1), yielding 2.

Your Turn: Try a few other experiments of your choice using sum. I'd suggest starting with finding the sum of the first 25 elements in Nile. You may wish to start with experiments on a small vector, say (2,1,1,6,8,5), so you will know that your answers are correct. Remember, you'll learn best nonpassively. Code away!

Right after vectors, the next major workhorse of R is the data frame. It's a rectangular table consisting of one row for each data point.

Say we have height, weight and age on each of 100 people. Our data frame would have 100 rows and 3 columns. The entry in, e.g., the second row and third column would be the age of the second person in our data. The second row as a whole would be all the data for that second person, i.e. the height, weight and age of that person.

As our first example, consider the ToothGrowth dataset built-in to R. Again, you can read about it in the online help (the data turn out to be on guinea pigs, with orange juice or Vitamin C as growth supplements). Let's take a quick look from the command line.

> head(ToothGrowth)

len supp dose

1 4.2 VC 0.5

2 11.5 VC 0.5

3 7.3 VC 0.5

4 5.8 VC 0.5

5 6.4 VC 0.5

6 10.0 VC 0.5R's head function displays (by default) the first 6 rows of the given dataframe. We see there are length, supplement and dosage columns; each column is an R vector.

The head function works on vectors too:

> head(ToothGrowth$len)

[1] 4.2 11.5 7.3 5.8 6.4 10.0Like many R functions, head has an optional second argument, specifying how many elements to print:

> head(ToothGrowth$len,10)

[1] 4.2 11.5 7.3 5.8 6.4 10.0 11.2 11.2 5.2 7.0To avoid writing out the long word "ToothGrowth" repeatedly, let's make a copy.

> tg <- ToothGrowthHow large is the dataset?

> dim(tg)

[1] 60 3Ah, 60 rows and 3 columns (60 guinea pigs, 3 measurements each).

Dollar signs are used to denote the individual columns. E.g. we can print out the mean length; tg$len is the tooth length column, so

> mean(tg$len)

[1] 18.81333Subscripts in data frames are pairs, specifying row and column numbers. To get the element in row 3, column 1:

> tg[3,1]

[1] 7.3which matches what we saw above. Or, use the fact that tg$len is a vector:

> tg$len[3]

[1] 7.3The element in row 3, column 1 in the data frame tg is element 3 in the vector tg$len.

Some data frames don't have column names, but that is no obstacle. We can use column numbers, e.g.

> mean(tg[,1])

[1] 18.81333Note the expression '[,1]'. Since there is a 1 in the second position, we are talking about column 1. And since the first position, before the comma, is empty, no rows are specified -- so all rows are included. That boils down to: all of column 1.

One can extract subsets of data frames, using either some of the rows or some of the columns, e.g.:

> z <- tg[2:5,c(1,3)]

> z

len dose

2 11.5 0.5

3 7.3 0.5

4 5.8 0.5

5 6.4 0.5Here we extracted rows 2 through 5, and columns 1 and 3, assigning the result to z. To extract those columns but keep all rows, do

> y <- tg[ ,c(1,3)]i.e. leave the row specification field empty.

Your Turn: Devise your own little examples with the ToothGrowth data. For instance, write code that finds the number of cases in which the length was less than 16. Also, try some examples with another built-in R dataset, faithful. This one involves the Old Faithful geyser in Yellowstone National Park in the US. The first column gives duration of the eruption, and the second has the waiting time since the last eruption.

Each object in R has a class. The number 3 is of the 'numeric' class, the character string 'abc' is of the 'character' class, and so on. (In R, class names are quoted; one can use single or double quotation marks.) Note that vectors of numbers are of 'numeric' class too; actually, a single number is considered to be a vector of length 1. So, c('abc','xw'), for instance, is 'character' as well.

What about tg and tg$supp?

> class(tg)

[1] "data.frame"

> class(tg$supp)

[1] "factor"R factors are used when we have categorical variables. If in a genetics study, say, we have a variable for hair color, that might comprise four categories: black, brown, red, blond. We can find the list of categories for tg$supp as follows:

> levels(tg$supp)

[1] "OJ" "VC"The supplements were either orange juice or Vitamin C.

Factors can sometimes be a bit tricky to work with, but the above is enough for now. Let's see how to apply the notion in the current dataset.

Let's compare mean tooth length for the two types of supplements:

> tgoj <- tg[tg$supp == 'OJ',]

> tgvc <- tg[tg$supp == 'VC',]

> mean(tgoj$len)

[1] 20.66333

> mean(tgvc$len)

[1] 16.96333What did that first line do? First, tg$supp == 'OJ' produces a vector of TRUEs and FALSEs. The lines in which supplement = OJ produce TRUE, the rest FALSE. We saw above that TRUEs and FALSEs can be interpreted as 1s and 0s in a context like that of the sum function, but here they are used to select indices.

In the context here, tg$supp == 'OJ' appeared in a row-indices context. R takes this to mean that we want just those rows of tg that produced the TRUEs. (The column-index field, after the comma, was blank, so we want all columns of those rows.)

So tgoj does become those rows of tg. In other words, we extracted the rows of tg for which the supplement was orange juice, with no restriction on columns, and assigned the result to tgoj. (Once again, we can choose any name; we chose this one to help remember what we put into that variable.)

Often in R there is a shorter, more compact way of doing things. That's the case here; we can use the magical tapply function:

> tapply(tg$len,tg$supp,mean)

OJ VC

20.66333 16.96333 In English: "Split tg$len into two groups, according to the value of tg$supp, then apply mean to each group." Note that the result was returned as a vector, which we could save by assigning it to, say z:

> z <- tapply(tg$len,tg$supp,mean)

> z[1]

OJ

20.66333

> z[2]

VC

16.96333 That can be quite handy, because we can use that result in subsequent code.

Instead of mean, we can use any function as that third argument in tapply. Here is another example, using the built-in dataset PlantGrowth:

> tapply(PlantGrowth$weight,PlantGrowth$group,length)

ctrl trt1 trt2

10 10 10 Here tapply split the weight vector into subsets according to the group variable, then called the length function on each subset. We see that each subset had length 10, i.e. the experiment had assigned 10 plants to the control, 10 to treatment 1 and 10 to treatment 2.

Your Turn: One of the most famous built-in R datasets is mtcars, which has various measurements on cars from the 60s and 70s. Lots of opportunties for you to cook up little experiments here! You may wish to start by comparing the mean miles-per-gallon values for 4-, 6- and 8-cylinder cars. Another suggestion would be to find how many cars there are in each cylinder category, using tapply.

By the way, the mtcars data frame has a "phantom" column.

> head(mtcars)

mpg cyl disp hp drat wt qsec vs am gear carb

Mazda RX4 21.0 6 160 110 3.90 2.620 16.46 0 1 4 4

Mazda RX4 Wag 21.0 6 160 110 3.90 2.875 17.02 0 1 4 4

Datsun 710 22.8 4 108 93 3.85 2.320 18.61 1 1 4 1

Hornet 4 Drive 21.4 6 258 110 3.08 3.215 19.44 1 0 3 1

Hornet Sportabout 18.7 8 360 175 3.15 3.440 17.02 0 0 3 2

Valiant 18.1 6 225 105 2.76 3.460 20.22 1 0 3 1That first column seems to give the make (brand) and model of the car. Yes, it does -- but it's not a column. Behold:

> head(mtcars[,1])

[1] 21.0 21.0 22.8 21.4 18.7 18.1Sure enough, column 1 is the mpg data, not the car names. But we see the names there on the far left! The resolution of this seeming contradiction is that those car names are the row names of this data frame:

> row.names(mtcars)

[1] "Mazda RX4" "Mazda RX4 Wag" "Datsun 710"

[4] "Hornet 4 Drive" "Hornet Sportabout" "Valiant"

[7] "Duster 360" "Merc 240D" "Merc 230"

[10] "Merc 280" "Merc 280C" "Merc 450SE"

[13] "Merc 450SL" "Merc 450SLC" "Cadillac Fleetwood"

[16] "Lincoln Continental" "Chrysler Imperial" "Fiat 128"

[19] "Honda Civic" "Toyota Corolla" "Toyota Corona"

[22] "Dodge Challenger" "AMC Javelin" "Camaro Z28"

[25] "Pontiac Firebird" "Fiat X1-9" "Porsche 914-2"

[28] "Lotus Europa" "Ford Pantera L" "Ferrari Dino"

[31] "Maserati Bora" "Volvo 142E" As with everything else, row.names is a function, and as you can see above, its return value is a 32-element vector. The elements of that vector are of type character.

You can even assign to that vector:

> row.names(mtcars)[7]

[1] "Duster 360"

> row.names(mtcars)[7] <- 'Dustpan'

> row.names(mtcars)[7]

[1] "Dustpan"(If you have some background in programming, it may appear odd to you to have a function call on the left side of an assignment. This is actually common in R.)

Your Turn: Try some experiments with the mtcars data, e.g. finding the mean MPG for 6-cylinder cars.

Most real-world data is "dirty," i.e. filled with errors. The famous New York taxi trip dataset, for instance, has one trip destination whose lattitude and longitude place it in Antartica! The impact of such erroneous data on one's statistical analysis can be anywhere from mild to disabling. Let's see below how one might ferret out bad data. And along the way, we'll cover several new R concepts.

We'll use the famous Pima Diabetes dataset. Various versions exist, but we'll use the one included in faraway, an R package compiled by Julian Faraway, author of several popular books on statistical regression analysis in R.

I've placed the data file, Pima.csv, on my Web site. Here is how you can read it into R:

> pima <- read.csv('http://heather.cs.ucdavis.edu/FasteR/data/Pima.csv',header=TRUE)The dataset is in a CSV ("comma-separated values") file. Here we read it, and assigned the resulting data frame to a variable we chose to name pima.

Note that second argument, 'header=TRUE'. A header in a file, if one exists, is in the first line in the file. It states what names the columns in the data frame are to have. If the file doesn't have one, set header to FALSE. You can always add names to your data frame later (future lesson).

It's always good to take a quick look at a new data frame:

> head(pima)

pregnant glucose diastolic triceps insulin bmi diabetes age test

1 6 148 72 35 0 33.6 0.627 50 1

2 1 85 66 29 0 26.6 0.351 31 0

3 8 183 64 0 0 23.3 0.672 32 1

4 1 89 66 23 94 28.1 0.167 21 0

5 0 137 40 35 168 43.1 2.288 33 1

6 5 116 74 0 0 25.6 0.201 30 0

> dim(pima)

[1] 768 9768 people in the study, 9 variables measured on each.

Since this is a study of diabetes, let's take a look at the glucose variable.

> table(pima$glucose)

0 44 56 57 61 62 65 67 68 71 72 73 74 75 76 77 78 79 80 81

5 1 1 2 1 1 1 1 3 4 1 3 4 2 2 2 4 3 6 6

82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101

3 6 10 7 3 7 9 6 11 9 9 7 7 13 8 9 3 17 17 9

102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121

13 9 6 13 14 11 13 12 6 14 13 5 11 10 7 11 6 11 11 6

122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141

12 9 11 14 9 5 11 14 7 5 5 5 6 4 8 8 5 8 5 5

142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161

5 6 7 5 9 7 4 1 3 6 4 2 6 5 3 2 8 2 1 3

162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181

6 3 3 4 3 3 4 1 2 3 1 6 2 2 2 1 1 5 5 5

182 183 184 186 187 188 189 190 191 193 194 195 196 197 198 199

1 3 3 1 4 2 4 1 1 2 3 2 3 4 1 1 Uh, oh! 5 women in the study had glucose level 0. And 44 had level 1, etc. Presumably this is not physiologically possible.

Let's consider a version of the glucose data that excludes these 0s.

> pg <- pima$glucose

> pg1 <- pg[pg > 0]

> length(pg1)

[1] 763As before, the expression "pg > 0" creates a vector of TRUEs and FALSEs. The filtering "pg[pg > 0]" will only pick up the TRUE cases, and sure enough, we see that pg1 has only 763 cases, as opposed to the original 768.

Did removing the 0s make much difference? Turns out it doesn't:

> mean(pg)

[1] 120.8945

> mean(pg1)

[1] 121.6868But still, these things can in fact have major impact in many statistical analyses.

R has a special code for missing values, NA, for situations like this. Rather than removing the 0s, it's better to recode them as NAs. Let's do this, back in the original dataset so we keep all the data in one object:

> pima$glucose[pima$glucose == 0] <- NAOr, broken up into smaller steps (I recommend this especially for beginners),

> z <- pima$glucose == 0

> pima$glucose[z] <- NASo z will be a vector of TRUEs and FALSEs, which we then use to select the desired elements in pima$glucose and set them to NA.

Note again the double-equal sign! If we wish to test whether, say, a and b are equal, the expression must be "a == b", not "a = b"; the latter would do "a <- b".

As a check, let's verify that we now have 5 NAs in the glucose variable:

> sum(is.na(pima$glucose))

[1] 5Here the built-in R function is.na will return a vector of TRUEs and FALSEs. Recall that those values can always be treated as 1s and 0s. Thus we got our count, 5.

Let's also check that the mean comes out right:

> mean(pima$glucose)

[1] NAWhat went wrong? By default, the mean function will not skip over NA values; thus the mean was reported as NA too. But we can instruct the function to skip the NAs:

> mean(pima$glucose,na.rm=TRUE)

[1] 121.6868Your Turn: Determine which other columns in pima have suspicious 0s, and replace them with NA values.

Now, look again at the plot we made earlier of the Nile flow histogram. There seems to be a gap between the numbers at the low end and the rest. What years did these correspond to? Find the mean of the data, excluding these cases.

We saw earlier how handy the tapply function can be. Let's look at a related one, split.

Earlier we mentioned the built-in dataset mtcars, a data frame. Consider mtcars$mpg, the column containing the miles-per-gallon data. Again, to save typing, let's make a copy first:

> mtmpg <- mtcars$mpgSuppose we wish to split the original vector into three vectors, one for 4-cylinder cars, one for 6 and one for 8. We could do

> mt4 <- mtmpg[mtcars$cyl == 4]and so on for mt6 and mt8..

Your Turn: In order to keep up, make sure you understand how that line of code works, with the TRUEs and FALSEs etc. First print out the value of mtcars$cyl == 4, and go from there.

But there is a cleaner way:

> mtl <- split(mtmpg,mtcars$cyl)

> mtl

$`4`

[1] 22.8 24.4 22.8 32.4 30.4 33.9 21.5 27.3 26.0 30.4 21.4

$`6`

[1] 21.0 21.0 21.4 18.1 19.2 17.8 19.7

$`8`

[1] 18.7 14.3 16.4 17.3 15.2 10.4 10.4 14.7 15.5 15.2 13.3 19.2 15.8 15.0

> class(mtl)

[1] "list"In English, the call to split said, "Split mtmpg into multiple vectors, with the splitting criterion being the values in mtcars$cyl."

Now mtl, an object of R class "list", contains the 3 vectors. We can access them individually with the dollar sign notation:

> mtl$`4`

[1] 22.8 24.4 22.8 32.4 30.4 33.9 21.5 27.3 26.0 30.4 21.4Or, we can use indices, though now with double brackets:

> mtl[[1]]

[1] 22.8 24.4 22.8 32.4 30.4 33.9 21.5 27.3 26.0 30.4 21.4Looking a little closer:

> head(mtcars$cyl)

[1] 6 6 4 6 8 6 We see that the first car had 6 cylinders, so the

first element of mtmpg, 21.0, was thrown into the 6 pile, i.e.

mtl[[2]] (see above printout of mtl), and so on.

And of course we can make copies for later convenience:

> m4 <- mtl[[1]]

> m6 <- mtl[[2]]

> m8 <- mtl[[3]]Lists are especially good for mixing types together in one package:

> l <- list(a = c(2,5), b = 'sky')

> l

$a

[1] 2 5

$b

[1] "sky"Note that here we can names to the list elements, 'a' and 'b'. In forming mtl using split above, the names were assigned according to the values of the vector beiing split. If we don't like those default names, we can change them:

> names(mtl) <- c('four','six','eight')

> mtl

$four

[1] 22.8 24.4 22.8 32.4 30.4 33.9 21.5 27.3 26.0 30.4 21.4

$six

[1] 21.0 21.0 21.4 18.1 19.2 17.8 19.7

$eight

[1] 18.7 14.3 16.4 17.3 15.2 10.4 10.4 14.7 15.5 15.2 13.3 19.2 15.8

15.0By the way, it's no coincidence that a dollar sign is used for delineation in both data frames and lists; data frames are lists. Each column is one element of the list. So for instance,

> mtcars[[1]]

[1] 21.0 21.0 22.8 21.4 18.7 18.1 14.3 24.4 22.8 19.2 17.8 16.4 17.3 15.2 10.4

[16] 10.4 14.7 32.4 30.4 33.9 21.5 15.5 15.2 13.3 19.2 27.3 26.0 30.4 15.8 19.7

[31] 15.0 21.4Here we used the double-brackets list notation to get the first element of the list, which is the first column of the data frame.

Here we'll learn several new concepts, using the Nile data as our starting point.

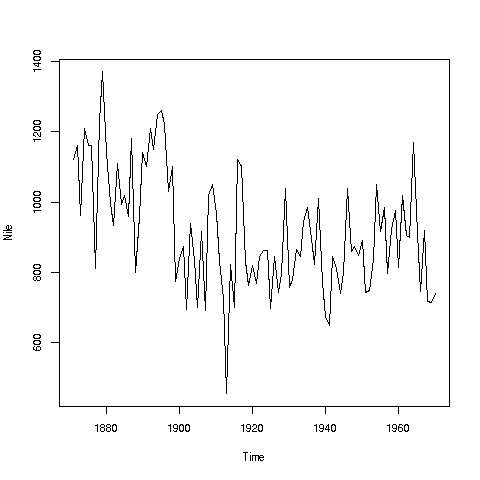

If you look again at the histogram of the Nile we generated, you'll see a gap between the lowest numbers and the rest. In what year(s) did those really low values occur? Let's plot the data:

> plot(Nile)Looks like maybe 1912 or so. Is this an error? Or was there some big historical event then? This would require more than R to track down, but at least R can tell us which year or years correspond to the unusually low flow. Here is how:

We see from the graph that the unusually low value was below 600. We can use R's which function to see when that occurred:

> which(Nile < 600)

[1] 43As before, make sure to understand what happened in this code. The expression "Nile < 600" yields 100 TRUEs and FALSEs. The which then tells us which of those were TRUEs.

So, element 43 is the culprit here, corresponding to year 1871+42=1913. Again, we would have to find supplementary information in order to decide whether this is a genuine value or an error.

Of course, since this is a small dataset, we could have just printed out the entire data and visually scanned it for a low number. But what if the length of the data vector had been 100,000 instead of 100? Then the visual approach wouldn't work. Moreover, a goal of programming is to automate tasks, rather than doing them by hand.

Your Turn: There appear to be some unusually high values as well, e.g. one around 1875. Determine which year this was, using the techniques presented here.

Also, try some similar analysis on the built-in AirPassengers data. Can you guess why those peaks are occurring?

Here is another point: That function plot is not quite so innocuous as it may seem.

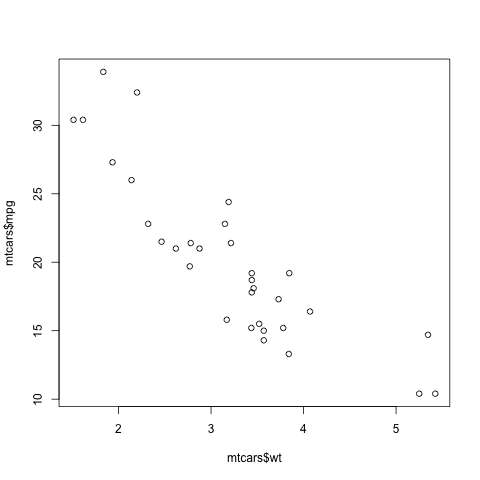

> plot(mtcars$wt,mtcars$mpg)In contrast to the previous plot, in which our data were on the vertical axis and time was on the horizontal, now we are plotting two datasets, against each other. This enables us to explore the relation between weight and gas mileage.

There are a couple of important points here. First, as we might guess, we see that the heavier cars tended to get poorer gas mileage. But here's more: That plot function is pretty smart!

Why? Well, plot knew to take different actions for different input types. When we fed it a single vector, it plotted those numbers against time. When we fed it two vectors, it knew to do a scatter plot.

In fact, plot was even smarter than that. It noticed that Nile is not just of 'numeric' type, but also of another class, 'ts' ("time series"):

> is.numeric(Nile)

[1] TRUE

> class(Nile)

[1] "ts"So, it put years on the horizontal axis, instead of indices 1,2,3,...

And one more thing: Say we wanted to know the flow in the year 1925. The data start at 1871, so 1925 is 1925 - 1871 = 54 years later. This means the flow for that year is in element 1+44 = 55.

> Nile[55]

[1] 698OK, but why did we do this arithmetic ourselves? We should have R do it:

> Nile[1925 - 1871 + 1]

[1] 698R did the computation 1925 - 1871 + 1 itself, yielding 55, then looked up the value of Nile[55]. This is the start of your path to programming.

How does one build a house? There of course is no set formula. One has various tools and materials, and the goal is to put these together in a creative way to produce the end result, the house.

It's the same with R. The tools here are the various functions, e.g. mean and which, and the materials are one's data. One then must creatively put them together to achieve one's goal, say ferreting out patterns in ridership in a public transportation system. Again, it is a creative process; there is no formula for anything. But that is what makes it fun, like solving a puzzle.

And...we can combine various functions in order to build our own functions. This will come in future lessons.

One of the greatest things about R is its graphics capabilities. There are excellent graphics features in base R, and then many contributed packages, with the best known being ggplot2 and lattice. These latter two are extremely powerful, and will be the subjects of future lessons, but for now we'll concentrate on the base.

As our example here, we'll use a dataset I compiled on Silicon Valley programmers and engineers, from the US 2000 census. Let's read in the data and take a look at the first records:

> pe <- read.table('https://raw.githubusercontent.com/matloff/polyreg/master/data/prgeng.txt',header=TRUE)

> head(pe)

age educ occ sex wageinc wkswrkd

1 50.30082 13 102 2 75000 52

2 41.10139 9 101 1 12300 20

3 24.67374 9 102 2 15400 52

4 50.19951 11 100 1 0 52

5 51.18112 11 100 2 160 1

6 57.70413 11 100 1 0 0We used read.table here because the file is not of the CSV type. It uses blank spaces rather than commas as its delineator between fields.

Here educ and occ are codes, for levels of education and different occupations. For now, let's not worry about the specific codes. (You can find them in the Census Bureau document. For instance, search for "Educational Attainment for the educ variable.)

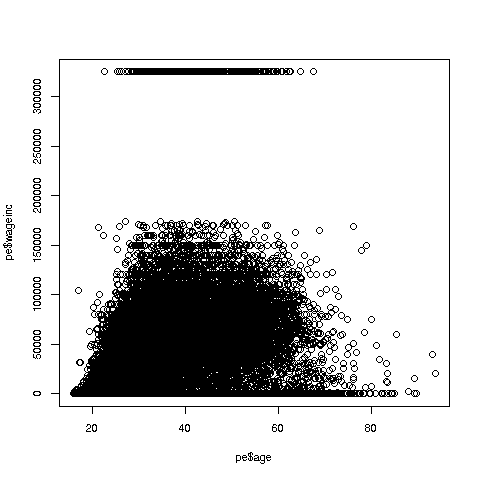

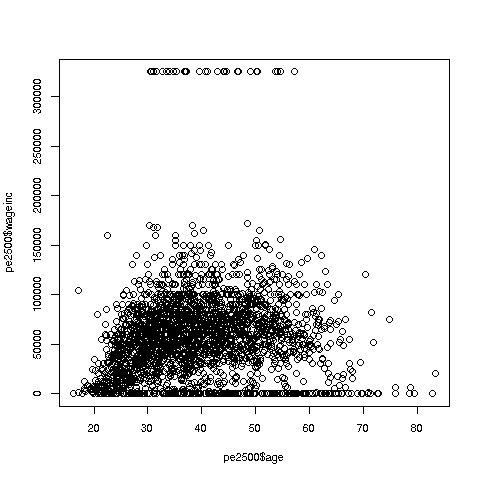

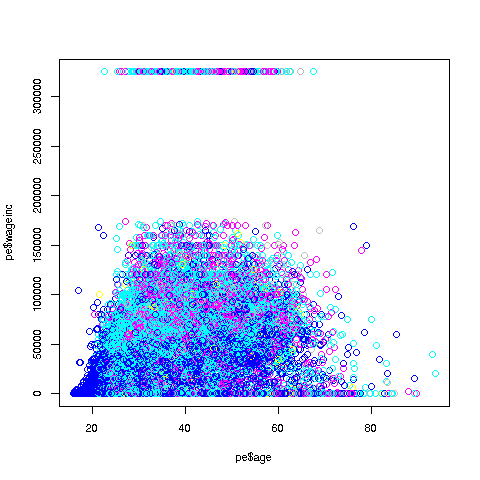

Let's start with a scatter plot of wage vs. age:

> plot(pe$age,pe$wageinc)Oh no, the dreaded Black Screen Problem! There are about 20,000 data points, thus filling certain parts of the screen. So, let's just plot a random sample, say 2500. (There are other ways of handling the problem, say with smaller dots or alpha blending.)

> indxs <- sample(1:nrow(pe),2500)

> pe2500 <- pe[indxs,]The nrow() function returns the number of rows in the argument, which in this case is 20090, the number of rows in pe.

R's sample function does what its name implies. Here it randomly samples 2500 of the numbers from 1 to 20090. We then extracted those rows of pe, in a new data frame pe2500.

Note again that I could have written the more companct

> pe2500 <- pe[sample(1:nrow(pe),2500),]but it would be hard to read that way. I also use direct function composition sparingly, preferring to break

h(g(f(x),3)into

y <- f(x)

z <- g(y,3)

h(z) So, here is the new plot:

> plot(pe2500$age,pe2500$wageinc)OK, now we are in business. A few things worth noting:

-

The relation between wage and age is not linear, indeed not even monotonic. After the early 40s, one's wage tends to decrease. As with any observational dataset, the underlying factors are complex, but it does seem there is an age discrimination problem in Silicon Valley. (And it is well documented in various studies and litigation.)

-

Note the horizontal streaks. Some people in the census had 0 income (or close to it), as they were not working. And the census imposed a top wage limit of $350,000 (probably out of privacy concerns).

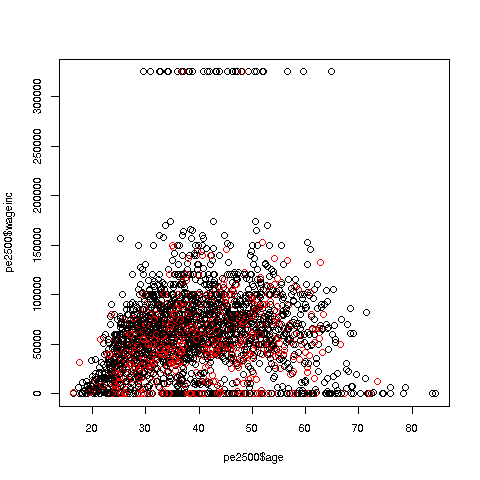

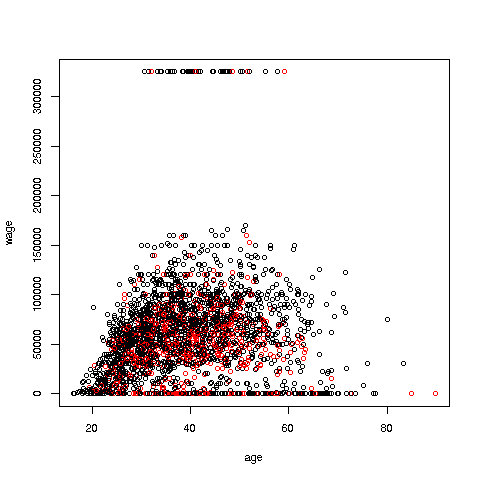

We can break things down by gender, via color coding:

> plot(pe2500$age,pe2500$wageinc,col=as.factor(pe2500$sex))The col argument indicates we wish to color code, in this case by gender. Note that pe2500$sex is a numeric vector, but col requires an R factor; the function as.factor does the conversion.

The red dots are the women. (Details below.) Are they generally paid less than men? There seems to be a hint of that, but detailed statistical analysis is needed (a future lesson).

It would be good to have better labels on the axes, and maybe smaller dots:

> plot(pe2500$age,pe2500$wageinc,col=as.factor(pe2500$sex),xlab='age',ylab='wage',cex=0.6)Here 'xlab' meant "X label" and similarly for 'ylab'. The argument 'cex = 0.6' means "Draw the dots at 60% of default size."

Now, how did the men's dots come out black and the women's red? The first record in the data was for a man, so black was assigned to men. And why black and red? They are chosen from a list of default colors; again, details in a future lesson.

There are many, many other features. More in a future lesson.

Your Turn: Try some scatter plots on various datasets. I suggest first using the above data with wage against age again, but this time color-coding by education level. (By the way, 1-9 codes no college; 10-12 means some college; 13 is a bachelor's degree, 14 a master's, 15 a professional degree and 16 is a doctorate.)

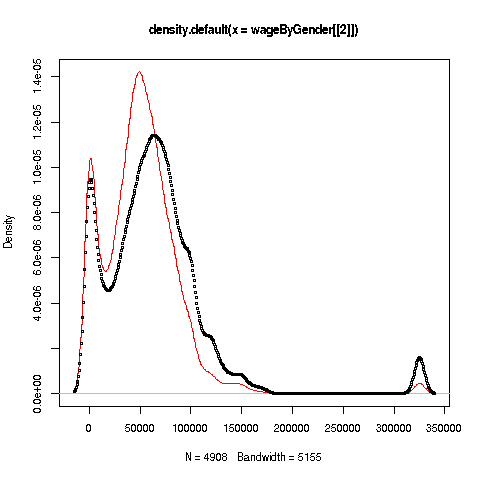

We can also plot multiple histograms on the same graph. But the pictures are more effective using a smoothed version of histograms, available in R's density function. Let's compare men's and women's wages in the census data.

First we use split to separate the data by gender:

> wageByGender <- split(pe$wageinc,pe$sex)

> dm <- density(wageByGender[[1]])

> dw <- density(wageByGender[[2]])Remember, wageByGender[[1]] will now be the vector of men's wages, and similarly wageByGender[[1]] will have the women's wages.

The density function does not automatically plot; it has the plot in a return value, which we've assigned to dm and dw here. We can now plot the graph:

> plot(dw,col='red')

> points(dm,cex=0.2)Why did we call the points function instead of plot in that second line? The issue is that calling plot again would destroy the first plot; we merely want to add points to the existing graph.

And why did we plot the women's data first? As you can see, the women's curve is taller, so if we plotted the men first, part of the women's curve would be cut off. Of course, we didn't know that ahead of time, but graphics often is a matter of trial-and-error to get to the picture we really want. (In the case of ggplot2, this is handled automatically by the software.)

Well, then, what does the graph tell us? The peak for women, a little less than $50,000, seems to be at a lower wage than that for men, at something like $60,000. At salaries around, say, $125,000, there seem to be more men than women. (Black curve higher than red curve. Remember, the curves are just smoothed histograms, so, if a curve is really high at, say 168.0, that means that 168.0 is very frequently-occurring value..)

Your Turn: Try plotting multiple such curves on the same graph, for other data.

Recall a line we had in Lesson 2:

> sum(Nile > 1200)This gave us the count of the elements in the Nile data larger than 1200.

Now, say we want the mean of those elements:

> mean(Nile[Nile > 1200])

[1] 1250Let's review how this works. The expression 'Nile > 1200' gives us a bunch of TRUEs and FALSEs, one for each element of Nile. Then 'Nile[Nile > 1200]' gives us the subvector of Nile corresponding to the TRUEs, i.e. the stated condition. We then take the mean of that.

Say we want to do this again, but with 1350 instead of 1200. Or, with the tg$len vector from Lesson 3, with 10.2 as our lower bound. We could keep typing the same pattern as above, but if we're going to do this a lot, it's better to write a function for it:

> mgd <- function(x,d) mean(x[x > d])I named it 'mgd' for "mean of elements greater than d," but any name is fine.

Let's try it out, then explain:

> mgd(Nile,1200)

[1] 1250

> mgd(tg$len,10.2)

[1] 21.58125This saved me typing. In the second call, I would have had to type

mean(tg$len[tg$len > 10.2])considerably longer.

So, how does all this work? Again, look at the code:

> mgd <- function(x,d) mean(x[x > d])Odd to say, but there is a built-in function in R itself named 'function'! We're calling it here. And its job is to build a function, which we assigned to mgd. We can then call the latter, as we saw above.

The above line of code says that mgd will have two arguments, x and d. These are known as formal arguments, as they are just placeholders. For example, in

> mgd(Nile,1200)we said, "R, please execute mgd with Nile playing the role of x, and 1200 playing the role of d. (Here Nile and 1200 are known as the actual arguments.)

As you have seen with R's built-in functions, a function will typically have a return value. In our case here, we could arrange that by writing

> mgd <- function(x,d) return(mean(x[x > d]))But it's not needed, because in any function, R will return the last value computed, in this case the requested mean.

And we can save the function for later use, by using R's save function, which can be used to save any R object:

> save(mgd,file='mean_greater_than_d')The function has now been saved in the indicated file, which will be in whatever folder R is running in right now. We can leave R, and say, come back tomorrow. If we then start R from that same folder, we then run

> load('mean_greater_than_d')and then mgd will be restored, ready for us to use again.

Your Turn: Try your hand at writing some simple functions along the lines seen here. You might start with a function n0(x), that returns the number of 0s in the vector x. Another suggestion would be a function hld(x,d), which draws a histogram for those elements in the vector x that are less than d.

Recall that in Lesson 5, we found that there were several columns in the Pima dataset that contained values of 0, which were physiologically impossible. These should be coded NA. We saw how to do that recoding for the glucose variable:

> pima$glucose[pima$glucose == 0] <- NABut there are several columns like this, and we'd like to avoid doing this all repeatedly by hand. (What if there were several hundred such columns?) Instead, we'd like to do this programmatically. This can be done with R's for loop construct.

Let's first check which columns seem appropriate for recoding.

> for (i in 1:9) print(sum(pima[,i] == 0))

[1] 111

[1] 5

[1] 35

[1] 227

[1] 374

[1] 11

[1] 0

[1] 0

[1] 500This is known in the programming world as a for loop.

The 'print(etc.)' is called the body of the loop. The 'for (i in 1:9)' part says, "Execute the body of the loop with i = 1, then execute it with i = 2, then i = 3, etc. up through i = 9."

In other words, the above code instructs R to do the following:

print(sum(pima[,1] == 0))

print(sum(pima[,2] == 0))

print(sum(pima[,3] == 0))

print(sum(pima[,4] == 0))

print(sum(pima[,5] == 0))

print(sum(pima[,6] == 0))

print(sum(pima[,7] == 0))

print(sum(pima[,8] == 0))

print(sum(pima[,9] == 0))Now, it's worth reviewing what those statements do, say the first. Once again, pima[,1] == 0 yields a vector of TRUEs and FALSEs, each indicating whether the corresponding element of column 1 is 0. When we call sum, TRUEs and FALSEs are treated as 1s and 0s, so we get the total number of 1s -- which is a count of the number of elements in that column that are 0.

We probably have forgotten which column is which, so let's see:

> colnames(pima)

[1] "pregnant" "glucose" "diastolic" "triceps" "insulin" "bmi"

[7] "diabetes" "age" "test" Since some women will indeed have had 0 pregnancies, that column should not be recoded. And the last column states whether the test for diabetes came out positive, 1 for yes, 0 for no, so those 0s are legitimate too.

But 0s in columns 2 through 6 ought to be recoded as NAs. And the fact that it's a repetitive action suggests that a for loop can be used there too:

> for (i in 2:6) pima[pima[,i] == 0,i] <- NAYou'll probably find this line quite challenging, but be patient and, as with everything in R, you'll find you can master it.

First, let's write it in more easily digestible (though a bit more involved) form:

> for (i in 2:6) {

+ zeroIndices <- which(pima[,i] == 0)

+ pima[zeroIndices,i] <- NA

+ }Here I intended the body of the loop to consist of a block of two statements, not one, so I needed to tell R that, by typing '{' before writing my two statements, then letting R know I was finished with the block, by typing '}'. Meanwhile R was helpfully using its '+' prompt to remind me that I was still in the midst of typing the block.

So, the block (two lines here) will be executed with i = 2, then 3, 4, 5 and 6. The line

zeroIndices <- which(pima[,i] == 0)determines where the 0s are in column i, and then the line

pima[zeroIndices,i] <- NAreplaces those 0s by NAs.

Note that I have indented the two lines in the block. This is not required but is considered good for clear code.

Your Turn: Write a function with call form countNAs(dfr), which prints the numbers of NAs in each column of the data frame dfr.

If our Census data example above, it was stated that education codes 0-9 all corresponded to having no college education at all. For instance, 9 means high school graduate, while 6 means schooling through the 10th grade. (Of course, few if any programmers and engineers have educational attainment level below college, but this dataset was extracted from the general data.) 13 means a bachelor's degree.

Suppose we wish to color-code the wage-age graph by educational attainment, and amalgamate all codes under 13, giving them the code 12.

The straightforward but overly complicated potentiall slower way would be this:

> head(pe$educ,15)

[1] 13 9 9 11 11 11 12 11 14 9 12 13 12 13 6

> for (i in 1:nrow(pe)) {

+ if (pe$educ[i] < 13) pe$educ[i] <- 12

+ }

> head(pe$educ,15)

[1] 13 12 12 12 12 12 12 12 14 12 12 13 12 13 12For pedagogical clarity, I've inserted "before and after" code, to show the educ did indeed change where it should.

The if statement works pretty much like the word "if" in English. First i will be set to 1, so R will test whether pe$educ[1] is less than 13. If so, it will reset that element to 12; otherwise, do nothing. Then it will do the same for i equal to 2, and so on. You can see above that, for instance, pe$educ[2] did indeed change from 9 to 12.

But there is a slicker way to do this (re-read the data file before running this, so as to be sure the code worked):

> edu <- pe$educ

> pe$educ <- ifelse(edu < 13,12,edu)(Again, we've broken what could have been one line into two, for clarity.)

Now how did that work? As you see above, R's ifelse function has three arguments, and its return value is a new vector, that in this case we've reassigned to pe$educ. Here, edu < 12 produces a vector of TRUEs and FALSEs. For each TRUE, we set the corresponding element of the output to 12; for each FALSE, we set the corresponding element of the output to the corresponding element of edu. That's exactly what we want to happen.

The if can be paired with else (not ifelse). For example, say we need to set y to either -1 or 1, depending on whether x is less than 3. We could write

if (x < 3) y <- -1 else y <- 1So, we can now produce the desired graph:

> plot(pe$age,pe$wageinc,col=edu1)One more important point: Using ifelse instead of a loop in the above example is termed vectorization. The name comes from the fact that ifelse operates on vectors, while in the loop we operate on one individual element at a time.

Vectorized code is typically much more compact than loop-based code, as was the case here. In some cases, though certainly not all, the vectorized will be much faster.

Well, congratulations! With for and now ifelse, you've really gotten into the programming business. We'll be using them a lot in the coming lessons.

Your Turn: Recode the Nile data to a new vector nile, with the values 1, 2 and 3, according to whether the corresponding number in Nile is less than 800, between 800 and 1150, or greater than 1150.

These are books and other resources that I myself consult a lot. (Yes, I do consult my own books; can't keep it all in my head. :-) )

R Programming and Language

-

John Chambers, Software for Data Analysis: Programming with R, Springer

-

Norm Matloff, The Art of R Programming, NSP

-

Norm Matloff, Parallel Computation for Data Science, CRC

-

Hadley Wickham, Advanced R, CRC

Data Science with R

-

John Mount and Nina Zumel, Practical Data Science with R, Manning

-

Hadley Wickham and Garrett Grolemund, R for Data Science: Import, Tidy, Transform, Visualize, and Model Data, O'Reilly

Graphics in R

-

Winston Chang, R Graphics Cookbook: Practical Recipes for Visualizing Data, O'Reilly

-

Paul Murrell, R Graphics (third ed.), CRC

-

Deepayan Sarkar, Lattice: Multivariate Data Visualization with R, Springer

-

Hadley Wickham, ggplot2: Elegant Graphics for Data Analysis, Springer

Regression Analysis and Machine Learning, Using R

-

Francis Chollet and JJ Allaire, Deep Learning in R, Manning

-

Julian Faraway, Linear Models with R, CRC

-

Julian Faraway, Extending the Linear Model with R, CRC

-

John Fox and Sanford Weisberg, An R Companion to Applied Regression, SAGE

-

Frank Harrell, Regression Modeling Strategies: With Applications to Linear Models, Logistic and Ordinal Regression, and Survival Analysis, Springer

-

Max Kuhn, Applied Predictive Modeling, Springer

-

Max Kuhn and Kjell Johnson, Feature Engineering and Selection: A Practical Approach for Predictive Models, CRC

-

Norm Matloff, Statistical Regression and Classification: from Linear Models to Machine Learning, CRC

I also would recommend various Web tutorials:

-

Szilard Palka, CEU Business Analytics program: Use Case Seminar 2 with Szilard Pafka (2019- 05-08)

-

Hadley Wickham, the Tidyverse