
Alink is a machine learning algorithm platform based on Flink, developed by the PAI team of the Alibaba computing platform. Everyone is welcome to join the Alink open source user group to communicate.

About package names and versions:

- PyAlink provides different Python packages for the Flink versions that Alink supports: the package `pyalink` always maintains the Alink Python API against the latest Flink version, which is 1.10, while `pyalink-flink-***` supports older Flink versions, which is `pyalink-flink-1.9` for now.

- The version of the Python packages always follows the Alink Java version, like `1.1.0`.

Installation steps:

- Make sure the Python 3 version on your computer is 3.6 or 3.7.

- Make sure Java 8 is installed on your computer.

- Use pip to install: `pip install pyalink` or `pip install pyalink-flink-1.9` (Note: for now, `pyalink-flink-1.9` is not available; use the following links instead).
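The first two installation steps can be checked from Python before running pip. The following is a minimal sketch using only the standard library; the helper names `python_supported` and `java_on_path` are made up for this illustration, and the Java check only confirms that a `java` executable is on `PATH`, not that it is Java 8.

```python
import shutil
import sys

# Python versions named in the installation steps above.
SUPPORTED_PYTHON = {(3, 6), (3, 7)}


def python_supported(version_info=sys.version_info):
    """Return True if the (major, minor) pair is 3.6 or 3.7."""
    return (version_info[0], version_info[1]) in SUPPORTED_PYTHON


def java_on_path():
    """Return True if a `java` executable can be found on PATH.

    This does not verify the Java version; run `java -version` for that.
    """
    return shutil.which("java") is not None


if __name__ == "__main__":
    print("Python version supported:", python_supported())
    print("java found on PATH:", java_on_path())
```

If either check prints `False`, fix the environment first, since the pip installation itself will not report these problems.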

Potential issues:

- `pyalink` and `pyalink-flink-***` cannot be installed at the same time, and multiple versions are not allowed. If `pyalink` or `pyalink-flink-***` is already installed, use `pip uninstall pyalink` or `pip uninstall pyalink-flink-***` to remove it.

- If `pip install` is slow or fails, refer to this article to change the pip source, or use the following download links:

- If multiple versions of Python exist, you may need to use a specific version of `pip`, such as `pip3`. If Anaconda is used, run the command in the Anaconda prompt.
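The first issue above, more than one PyAlink package installed at once, can be detected with a short script. This is a sketch under stated assumptions: the function names are invented for illustration, and it relies on `pip list --format=freeze` printing one `name==version` line per installed distribution.

```python
import subprocess
import sys


def conflicting_pyalink_packages(installed_names):
    """Return PyAlink distributions that clash, or [] if at most one is installed."""
    normalized = {name.lower().replace("_", "-") for name in installed_names}
    found = sorted(
        name for name in normalized
        if name == "pyalink" or name.startswith("pyalink-flink-")
    )
    return found if len(found) > 1 else []


def installed_distribution_names():
    """List installed distribution names by asking pip for this interpreter."""
    result = subprocess.run(
        [sys.executable, "-m", "pip", "list", "--format=freeze"],
        stdout=subprocess.PIPE,
        universal_newlines=True,
        check=True,
    )
    return [line.split("==")[0] for line in result.stdout.splitlines() if line]


# Hard-coded names keep the demonstration independent of the local environment.
print(conflicting_pyalink_packages(["numpy", "pyalink"]))              # []
print(conflicting_pyalink_packages(["pyalink", "pyalink-flink-1.9"]))  # ['pyalink', 'pyalink-flink-1.9']
```

To check a real environment, call `conflicting_pyalink_packages(installed_distribution_names())` and uninstall every package it reports before installing the one you need.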

We recommend using PyAlink in Jupyter Notebook for a better experience.

Steps for usage:

- Start Jupyter: run `jupyter notebook` in a terminal, and create a Python 3 notebook.

- Import the pyalink package: `from pyalink.alink import *`.

- Use this command to create a local runtime environment: `useLocalEnv(parallism, flinkHome=None, config=None)`.

Here, the parameter `parallism` indicates the degree of parallelism used for execution; `flinkHome` is the full path of Flink, and by default PyAlink's flink-1.9.0 path is used; `config` is the configuration parameter accepted by Flink. After running, the following output appears, indicating that the running environment was initialized successfully.

JVM listening on ***

Python listening on ***

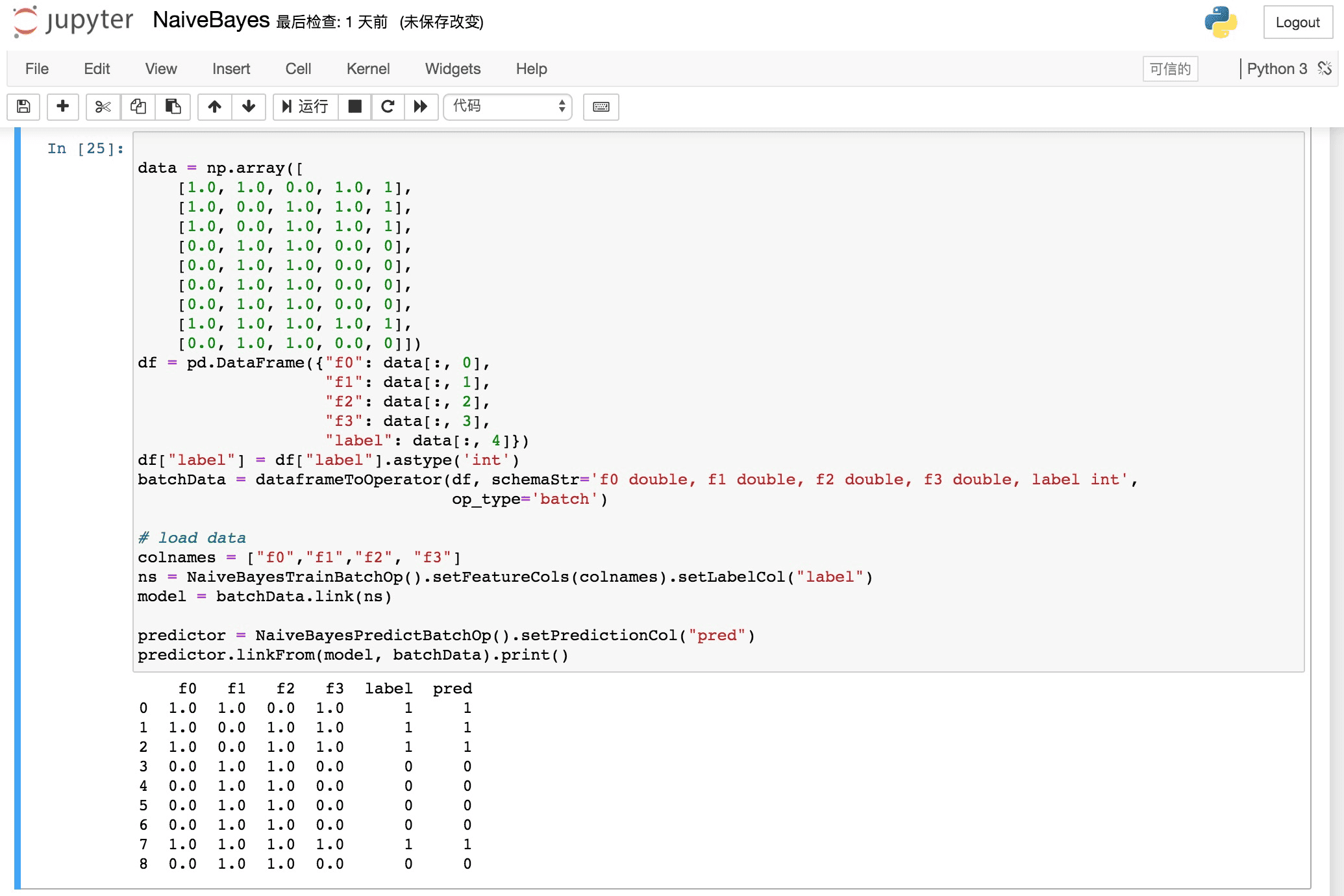

- Start writing PyAlink code, for example:

# Read the iris data set from a remote CSV file with an explicit schema.
source = CsvSourceBatchOp()\
    .setSchemaStr("sepal_length double, sepal_width double, petal_length double, petal_width double, category string")\
    .setFilePath("https://alink-release.oss-cn-beijing.aliyuncs.com/data-files/iris.csv")
# Keep two columns, then collect the result into a DataFrame and print it.
res = source.select(["sepal_length", "sepal_width"])
df = res.collectToDataframe()
print(df)

In PyAlink, the interface provided by an algorithm component is basically the same as in the Java API: an algorithm component is created through its default constructor, its parameters are set through setXXX methods, and it is connected to other components through link / linkTo / linkFrom.

Jupyter's auto-completion can make writing this code more convenient.

For batch jobs, you can trigger execution through methods such as print / collectToDataframe / collectToDataframes of batch components, or through BatchOperator.execute(); for streaming jobs, start the job with StreamOperator.execute().
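The setXXX and link / linkTo / linkFrom calling convention described above can be pictured with a small stand-alone sketch. `ToyOp` is a hypothetical class written for this illustration; it is not part of PyAlink and does not run any Flink job. It assumes that `link` returns the downstream component, that `linkFrom` returns the component it is called on, and that `linkTo` behaves like `link`.

```python
class ToyOp:
    """Hypothetical stand-in for an algorithm component; not part of PyAlink."""

    def __init__(self, name):
        self.name = name
        self.params = {}
        self.inputs = []

    def setParam(self, key, value):
        # Setters return self so that calls can be chained, like setXXX.
        self.params[key] = value
        return self

    def linkFrom(self, *upstream):
        # Record the upstream components and return this component.
        self.inputs = list(upstream)
        return self

    def link(self, downstream):
        # a.link(b) wires a into b and returns b, so chains read left to right.
        return downstream.linkFrom(self)

    linkTo = link  # assumed in this sketch to behave exactly like link

    def describe(self):
        # Walk back through the recorded inputs to show the assembled chain.
        parts = [up.describe() for up in self.inputs]
        prefix = " + ".join(parts) + " -> " if parts else ""
        return prefix + self.name


source = ToyOp("source").setParam("filePath", "iris.csv")
select = ToyOp("select").setParam("cols", ["sepal_length", "sepal_width"])
result = source.link(select)
print(result.describe())  # source -> select
```

Because `link` hands back the downstream component, `source.link(select)` and `select.linkFrom(source)` describe the same connection; which one reads better depends on whether the chain is written from the source or from the consumer.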

- PyAlink Tutorial

- Interchange between DataFrame and Operator

- StreamOperator data preview

- UDF/UDTF/SQL usage

- Use with PyFlink

A KMeans example using the Java API:

// Location and schema of the iris data set.
String URL = "https://alink-release.oss-cn-beijing.aliyuncs.com/data-files/iris.csv";
String SCHEMA_STR = "sepal_length double, sepal_width double, petal_length double, petal_width double, category string";

// Read the CSV file as a batch source.
BatchOperator data = new CsvSourceBatchOp()
    .setFilePath(URL)
    .setSchemaStr(SCHEMA_STR);

// Assemble the four numeric columns into a single feature vector.
VectorAssembler va = new VectorAssembler()
    .setSelectedCols(new String[]{"sepal_length", "sepal_width", "petal_length", "petal_width"})
    .setOutputCol("features");

// Cluster the feature vectors into three groups.
KMeans kMeans = new KMeans().setVectorCol("features").setK(3)
    .setPredictionCol("prediction_result")
    .setPredictionDetailCol("prediction_detail")
    .setReservedCols("category")
    .setMaxIter(100);

// Chain both stages into one pipeline.
Pipeline pipeline = new Pipeline().add(va).add(kMeans);

pipeline.fit(data).transform(data).print();

With Flink 1.10:

<dependency>
    <groupId>com.alibaba.alink</groupId>
    <artifactId>alink_core_flink-1.10_2.11</artifactId>
    <version>1.1.1</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-scala_2.11</artifactId>
    <version>1.10.0</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-table-planner_2.11</artifactId>
    <version>1.10.0</version>
</dependency>

With Flink 1.9:

<dependency>
    <groupId>com.alibaba.alink</groupId>
    <artifactId>alink_core_flink-1.9_2.11</artifactId>
    <version>1.1.1</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-scala_2.11</artifactId>
    <version>1.9.0</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-table-planner_2.11</artifactId>
    <version>1.9.0</version>
</dependency>

- Prepare a Flink Cluster:

wget https://archive.apache.org/dist/flink/flink-1.10.0/flink-1.10.0-bin-scala_2.11.tgz
tar -xf flink-1.10.0-bin-scala_2.11.tgz && cd flink-1.10.0
./bin/start-cluster.sh

- Build Alink jar from the source:

git clone https://github.com/alibaba/Alink.git
cd Alink && mvn -Dmaven.test.skip=true clean package shade:shade

- Run Java examples:

./bin/flink run -p 1 -c com.alibaba.alink.ALSExample [path_to_Alink]/examples/target/alink_examples-1.1-SNAPSHOT.jar
# ./bin/flink run -p 2 -c com.alibaba.alink.GBDTExample [path_to_Alink]/examples/target/alink_examples-1.1-SNAPSHOT.jar
# ./bin/flink run -p 2 -c com.alibaba.alink.KMeansExample [path_to_Alink]/examples/target/alink_examples-1.1-SNAPSHOT.jar
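The three `flink run` commands above differ only in the main class and the parallelism, so they can be generated rather than typed. The sketch below builds the argument lists in Python; `flink_run_command` is a helper invented for this illustration, and `/path/to/Alink` is a placeholder for `[path_to_Alink]` that you must replace with your own checkout location.

```python
import shlex


def flink_run_command(main_class, jar_path, parallelism=1):
    """Build the argument list for `./bin/flink run` as used in the examples above."""
    return ["./bin/flink", "run", "-p", str(parallelism), "-c", main_class, jar_path]


# Placeholder for [path_to_Alink]; replace with the location of your checkout.
ALINK_HOME = "/path/to/Alink"
JAR = ALINK_HOME + "/examples/target/alink_examples-1.1-SNAPSHOT.jar"

# Class names and parallelism values are taken from the commands above.
for cls, parallelism in [("ALSExample", 1), ("GBDTExample", 2), ("KMeansExample", 2)]:
    cmd = flink_run_command("com.alibaba.alink." + cls, JAR, parallelism)
    print(" ".join(shlex.quote(part) for part in cmd))
```

Each list can be passed to `subprocess.run(cmd, cwd=...)` with `cwd` set to the unpacked Flink directory, which avoids shell quoting problems if the jar path contains spaces.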