Bug? - Error in processor permanently breaks entire queue #956

Comments

Ok, so you say all subsequent jobs fail too; with what error exactly?

Wait, actually this is not reproducible with the example I gave. I'll give you a working example, one moment.

Okay, so I updated my comments with a piece of code that actually triggers the issue. Interestingly, when I add the attachment to the queue as `[{ filename: 'test.txt', content: Buffer.from('hello world!', 'utf-8') }]` and then read it back via `job.data.attachments`, I get:

`[{ filename: 'test.txt', content: { type: 'Buffer', data: [Array] } }]`

So, to me it looks like it could be an issue with queueing.
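The output above is what you would expect if the job data goes through `JSON.stringify`/`JSON.parse` on its way into Redis: Node's `Buffer#toJSON` turns a Buffer into a plain `{ type: 'Buffer', data: [...] }` object, and nothing turns it back into a Buffer. A plain object reaching Nodemailer would explain the "chunk" error. Below is a minimal sketch, assuming the queue stores job data as JSON; `jsonRoundTrip` and `reviveAttachments` are hypothetical helpers, not BullMQ or Nodemailer APIs.

```typescript
import { Buffer } from "node:buffer";

// Shape a Buffer takes after Buffer.prototype.toJSON + JSON.parse.
interface SerializedBuffer {
  type: "Buffer";
  data: number[];
}

interface Attachment {
  filename: string;
  content: string | Buffer | SerializedBuffer;
}

// Hypothetical stand-in for what happens to job data in the queue,
// assuming it is stored as a JSON string.
function jsonRoundTrip<T>(value: T): unknown {
  return JSON.parse(JSON.stringify(value));
}

function isSerializedBuffer(value: unknown): value is SerializedBuffer {
  return (
    typeof value === "object" &&
    value !== null &&
    (value as { type?: unknown }).type === "Buffer" &&
    Array.isArray((value as { data?: unknown }).data)
  );
}

// Hypothetical helper: convert serialized buffers back into real Buffers
// in the worker, before the attachments are handed to Nodemailer.
function reviveAttachments(attachments: Attachment[]): Attachment[] {
  return attachments.map((a) =>
    isSerializedBuffer(a.content)
      ? { ...a, content: Buffer.from(a.content.data) }
      : a,
  );
}

const original: Attachment[] = [
  { filename: "test.txt", content: Buffer.from("hello world!", "utf-8") },
];

const received = jsonRoundTrip(original) as Attachment[];
console.log(Buffer.isBuffer(received[0].content)); // false: a plain object

const revived = reviveAttachments(received);
console.log(Buffer.isBuffer(revived[0].content)); // true
console.log((revived[0].content as Buffer).toString("utf-8")); // hello world!
```

An alternative that avoids reviving altogether is to enqueue the content as a base64 string and set `encoding: 'base64'` on the attachment, which Nodemailer accepts and which survives JSON unchanged.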

Have you tried

I found a way to fix the application, but the issue with BullMQ remains: it should not fail all subsequent jobs once this type of error happens. This is quite concerning for production use.

You should probably add a try/catch around the problematic line of code. I personally haven't seen one error force all other jobs to fail, unless all the jobs in the screenshot were running concurrently on the same worker. If not, judging from the console logs, they probably fail due to the same issue. I have witnessed, however, a worker stop accepting any more jobs and go offline when an unhandled exception occurred.
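A minimal sketch of the try/catch suggestion, assuming a processor is an async function that receives a job and rejects to mark it as failed. `JobLike`, `withErrorLogging` and `doubleOrFail` are hypothetical names, not BullMQ APIs. Note that try/catch only sees errors thrown synchronously or from awaited promises; an error raised later from a detached callback or stream event escapes it and becomes an uncaught exception, which is the kind of failure that can take a whole worker offline.

```typescript
// Minimal stand-in for a queue job; a real BullMQ Job has many more fields.
interface JobLike<T> {
  id?: string;
  data: T;
}

type Processor<T, R> = (job: JobLike<T>) => Promise<R>;

// Wraps a processor so every failure is logged with the job id and then
// rethrown, so the queue still records the job as failed (and may retry it).
function withErrorLogging<T, R>(
  processor: Processor<T, R>,
  log: (message: string) => void,
): Processor<T, R> {
  return async (job) => {
    try {
      return await processor(job);
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      log(`Failed job ${job.id}, because: ${message}`);
      throw err instanceof Error ? err : new Error(message);
    }
  };
}

const logs: string[] = [];

// Hypothetical processor that rejects for negative input.
const doubleOrFail = withErrorLogging<number, number>(
  async (job) => {
    if (job.data < 0) throw new Error("negative input");
    return job.data * 2;
  },
  (message) => logs.push(message),
);

// Job 1 fails and is logged; job 2 is unaffected by that failure.
void doubleOrFail({ id: "1", data: -1 }).catch(() => undefined);
void doubleOrFail({ id: "2", data: 21 }).then((result) => console.log(result)); // 42
```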

Yeah, I tried

BullMQ does not behave this way; a failing job cannot break the queue. However, I see you are not using a QueueScheduler instance in your setup. Since you want to use retries, you need one; otherwise the delayed jobs will never be promoted. Have you checked the tutorials for exactly this use case? https://blog.taskforce.sh/implementing-mail-microservice-with-bullmq/

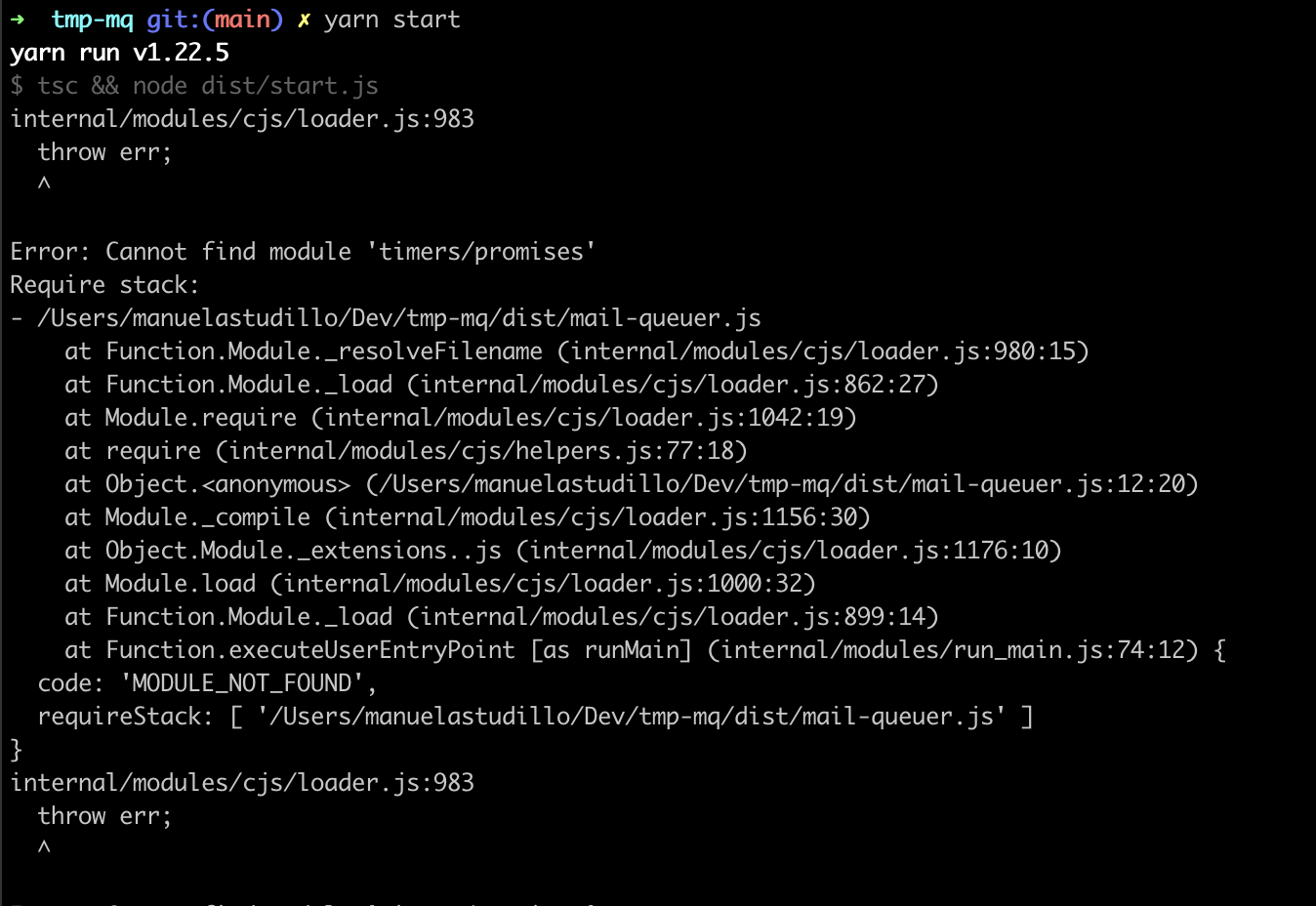

When starting the project, I took your tutorial as a template, so yes (and thanks for the tutorial) :) Could you please try to run this newly created repo (…)? At least on my machine, this reproduces the issue. However, I'm using Node v16.12.0 on an M1 (Apple Silicon) Mac with the latest Redis inside Docker, so maybe that's part of the problem in case you cannot reproduce the issue on your machine. Edit: Merry Christmas btw 🎄

You are probably not using Node v16: https://stackoverflow.com/a/69063138/974647

If you don't want to update to v16 permanently, you can temporarily install and use it with NVM: https://www.digitalocean.com/community/tutorials/nodejs-node-version-manager

@manast Have you been able to test it with v16 on your machine?

I haven't dug into it yet, but if I had to guess, this is a bug in NodeJS. Maybe you could try other versions? This code also reproduces the issue, which makes absolutely no sense: the jobs are not even failing, yet it still breaks the queue, so there must be some weird intra-process side effect at play here.

If there were no bug in Node, then one of those console.log calls would be printed, but none are; it is as if the Node.js process itself crashes or something...

I found that the call stack for the error looks like this:

So this adds to the assumption that it is a bug in Node, since the error is produced in the core and probably breaks V8. Now the question is whether a sandboxed processor in BullMQ could somehow detect this and spawn a new child process; I am spending some time investigating whether this is possible.
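The idea of isolating the processor in a child process can be illustrated in plain Node.js. This is a sketch of the general technique, not how BullMQ's sandboxed processors are implemented: each hypothetical "job" (a source string in `jobs`) runs in its own child via `child_process.spawn`, so a crash ends only that child. The parent sees the crash as a non-zero exit code (or a null code plus a signal) and simply starts a fresh child for the next job. `runInChild` and `jobs` are illustrative names.

```typescript
import { spawn } from "node:child_process";

// Runs a snippet of JavaScript in a fresh Node.js child process and resolves
// with its exit code. An uncaught exception or hard crash inside the child
// ends only that child; the parent process keeps running.
function runInChild(source: string): Promise<number | null> {
  return new Promise<number | null>((resolve, reject) => {
    const child = spawn(process.execPath, ["-e", source], { stdio: "ignore" });
    child.on("error", reject); // the child could not be started at all
    child.on("exit", (code) => resolve(code)); // code is null if killed by a signal
  });
}

// Hypothetical "jobs": the second one crashes its own process.
const jobs: string[] = [
  "console.log('job 1 ok')",
  "throw new Error('job 2 crashes its process')",
  "console.log('job 3 ok')",
];

async function main(): Promise<void> {
  for (let i = 0; i < jobs.length; i++) {
    // Each job gets a new child, so job 3 still runs after job 2 crashes.
    const code = await runInChild(jobs[i]);
    console.log(`job ${i + 1} exited with code ${code}`);
  }
}

void main();
```

Job 2 should report a non-zero exit code while jobs 1 and 3 exit normally, which is the signal a supervisor would use to decide that a child must be replaced.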

🎉 This issue has been resolved in version 1.64.1 🎉

The release is available on:

Your semantic-release bot 📦🚀

Great, thanks for following up and making BullMQ even more robust!

Consider the following setup:

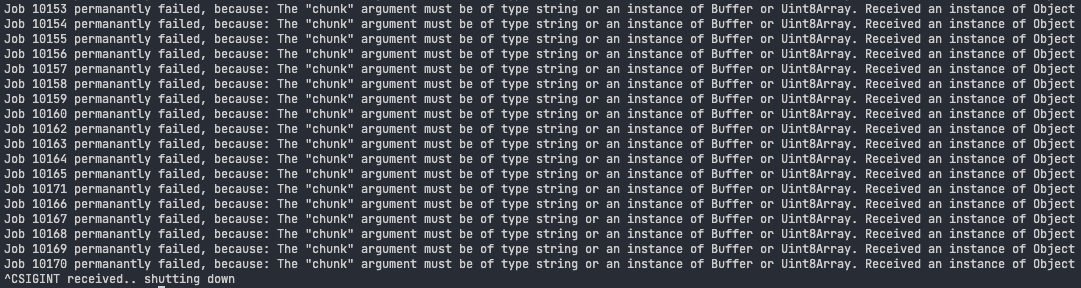

When running this, the queue breaks as soon as the first erroneous mail is processed. The "logger" will console.log something like

`Failed job 9880, because: The "chunk" argument must be of type string or an instance of Buffer or Uint8Array. Received an instance of Object`

which is correct and expected, but all subsequent jobs fail too, even though they are correct and Nodemailer doesn't throw an error for them. Am I missing something?
