
This issue was moved to a discussion.


How can I return a 202 Accepted response for a long-running REST call? #3

Comments


@danieljfarrell from fastapi import FastAPI

from pydantic import BaseModel

from uuid import uuid4

from collections import deque

from starlette.status import HTTP_201_CREATED, HTTP_202_ACCEPTED

# see https://github.com/encode/starlette/blob/master/starlette/status.py

queues = dict(

pending=deque(),

working=deque(),

finished=deque()

)

def get_job(id: str, queue: str = None, order=['finished', 'working', 'pending']):

_id = str(id)

# if queue:

# order = [queue]

for queue in order:

for job in queues[queue]:

# print(job['id'])

if str(job['id']) == _id:

return job, queue

return 'not found', None #this will fail horribly

app = FastAPI()

class BaseJob(BaseModel):

data: bytes = None

class JobStatus(BaseModel):

status: str

id: str

data: bytes = None

result: int = -1

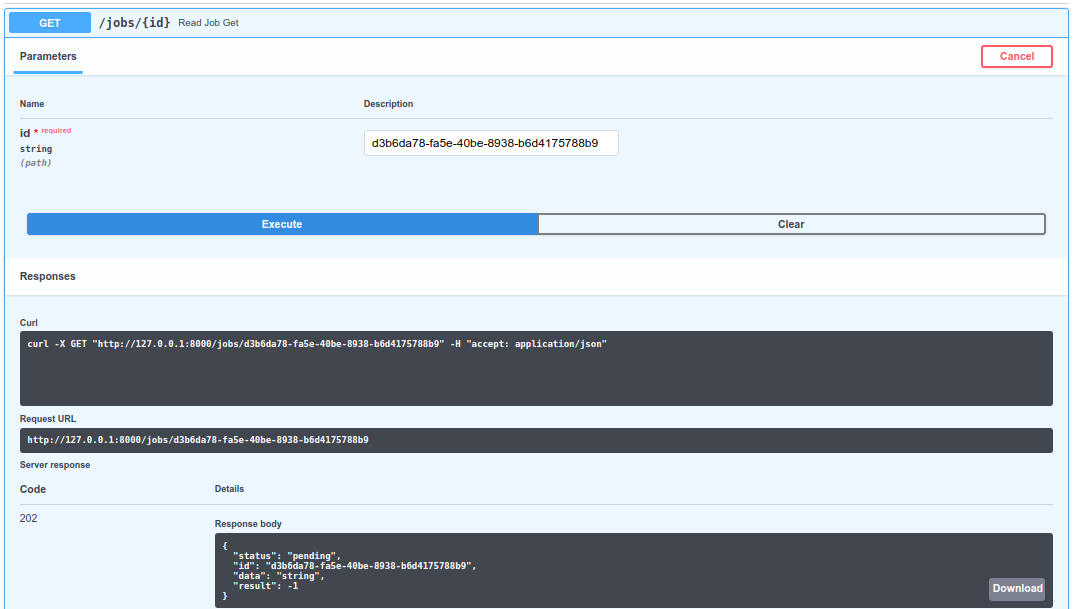

@app.get("/jobs/{id}", response_model=JobStatus, status_code=HTTP_202_ACCEPTED)

async def read_job(id: str):

job, status = get_job(id)

d = dict(

id=job['id'],

status=status,

data=job['data'],

result=job.get('result', -1)

)

return d

@app.post("/jobs/", response_model=JobStatus, status_code=HTTP_201_CREATED)

async def create_job(*, job: BaseJob):

_job = dict(

id=str(uuid4()),

status='pending',

data=job.data

)

queues['pending'].append(_job)

return _job |
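rcox771's example enqueues jobs but nothing ever moves them out of `pending`, so `GET /jobs/{id}` would keep reporting `pending`. A minimal worker step could look like the sketch below, assuming some loop or background task calls it repeatedly. `process_next_job` and `crunch` are hypothetical names, and `crunch` only stands in for the real computation.

```python
from collections import deque

# Assumed: the same three-queue layout as in the example above.
queues = dict(pending=deque(), working=deque(), finished=deque())


def crunch(data):
    # Hypothetical stand-in for the real computation: counts the payload bytes.
    return len(data or b"")


def process_next_job():
    """Move the oldest pending job through working to finished.

    Returns the processed job dict, or None when nothing is pending.
    """
    if not queues["pending"]:
        return None
    job = queues["pending"].popleft()
    job["status"] = "working"
    queues["working"].append(job)
    job["result"] = crunch(job["data"])
    queues["working"].remove(job)
    job["status"] = "finished"
    queues["finished"].append(job)
    return job


# Usage: enqueue a job shaped like the ones create_job() builds, then process it.
queues["pending"].append({"id": "abc", "status": "pending", "data": b"hello"})
done = process_next_job()
print(done["status"], done["result"])  # finished 5
```

This step is not thread-safe: if several workers share the deques, they need a lock or a real task queue such as the Celery setup mentioned later in the thread.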


Thanks @rcox771 for the thorough response! Sorry for the delay, @danieljfarrell. I had planned to create a tutorial in the docs for these cases (it will come in the next few days nevertheless).

First, to customize the status code in general, you can do as @rcox771 says: in the path operation decorator, use the `status_code` parameter. Copying from @rcox771's example:

```python
...

@app.get("/jobs/{id}", response_model=JobStatus, status_code=HTTP_202_ACCEPTED)
async def read_job(id: str):
    # your code here
    pass
```

The `status_code` parameter takes the numeric HTTP status code. But when you don't remember exactly which status code is for what (as frequently happens to me), you can import the named constants from `starlette.status`, such as `HTTP_202_ACCEPTED`.

About background jobs: as FastAPI is fully based on Starlette and extends it, you can use Starlette's integrated background tasks. They are not in FastAPI's docs yet, but will come soon. For Starlette's background tasks to work in FastAPI, you need to return a Starlette `Response` directly, with the task passed in its `background` parameter.

In more complex scenarios, when you need distributed task workers, possibly on several servers, with different dependencies (for example, one worker might need …), you can use a task queue like Celery. I hope to add that to the tutorials too, but meanwhile, you can see how to set it all up with the full-stack project generator, which includes Celery: https://github.com/tiangolo/full-stack-fastapi-couchbase


Thanks so much! This project is so cool and the documentation is amazing.


That's great to hear! Thanks, @danieljfarrell! The status code docs are live now: https://fastapi.tiangolo.com/tutorial/response-status-code/

I still owe you the docs for background tasks.


Thanks! What’s the history of this project? It seems to have come from nowhere to awesome in a few weeks from viewing the commit history.


@danieljfarrell here's a bit of history, now in the docs: https://fastapi.tiangolo.com/history-design-future/

And I quoted you 😁


Assuming the original need was handled, this will be automatically closed now. But feel free to add more comments or create new issues or PRs.


Hope this is the right place for a question.

I have a REST API POST request, `/crunch`, that does a lot of computation. Rather than block the event loop, I would like `/crunch` to return a `202 Accepted` status code along with a token string. Then the user of the API can make a GET request to `/result/{token}` to check on the status of the computation. This is outlined nicely here, for example.

Is it possible to modify the response status code, for example, similar to this approach in Sanic?
