
RGB color #37


|

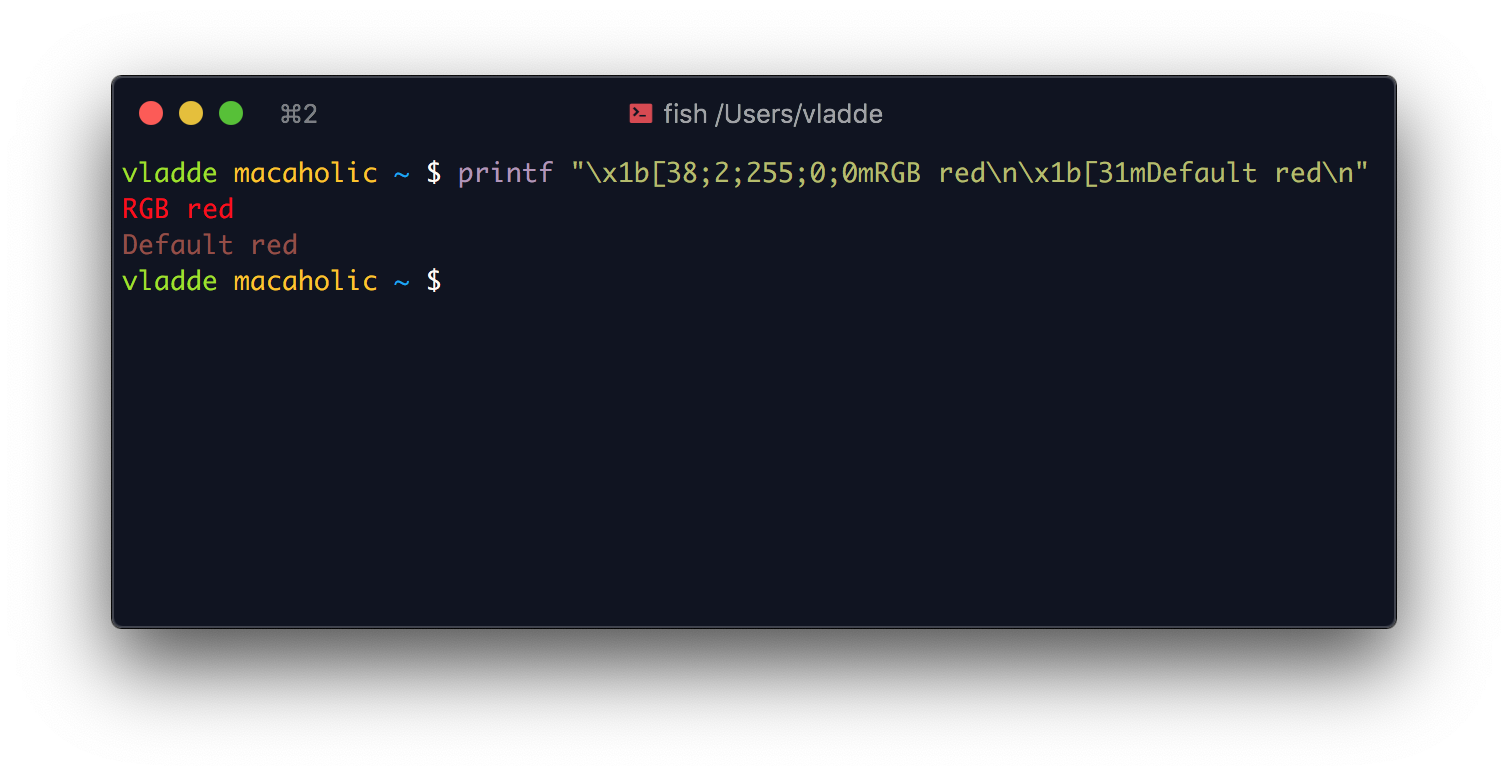

There is a fundamental difference between the ANSI color red and the RGB color red. Most terminal emulators let you specify which RGB color each ANSI color represents. In iTerm on macOS, for example, the red color code can be set to mean any RGB color. In this case, red is set to a washed-out red, but the RGB color code still shows up as the "true" red.
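To make the difference concrete: SGR parameter 31 asks the terminal for palette entry 1 ("red"), whose RGB value the user's theme decides, while the 24-bit form `38;2;R;G;B` names an exact color. A minimal D sketch that prints both; the helper `trueColorFg` is a hypothetical name, not part of scone:

```d
import std.format : format;
import std.stdio : writeln;

// SGR 31: foreground = ANSI palette entry 1 ("red"). The terminal decides
// which RGB value this is, and iTerm lets the user remap it.
enum ansiRedFg = "\x1b[31m";

// SGR 38;2;R;G;B: 24-bit ("truecolor") foreground. The program fixes the
// RGB value, so the terminal's color scheme does not change it.
string trueColorFg(ubyte r, ubyte g, ubyte b)
{
    return format("\x1b[38;2;%d;%d;%dm", r, g, b);
}

enum reset = "\x1b[0m";

void main()
{
    writeln(ansiRedFg, "palette red (theme-dependent)", reset);
    writeln(trueColorFg(255, 0, 0), "RGB red (always #FF0000)", reset);
}
```

In the screenshot's setup, the first line would render in the washed-out theme red and the second in pure red.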

|

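I'm looking into RGB colors. It turns out it is a little bit more complicated than I thought. There seem to be three levels of ANSI colors:

- 16 colors (the basic ANSI colors, whose RGB values can usually be customised in the terminal settings)
- 256 colors (ANSI 256)
- 24-bit RGB colors (Truecolor)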

I really don't know how to handle this. Maybe each level of color depth should have its own type that can be used interchangeably in the application. I'm not sure how ANSI colors (which can usually be customised in the terminal settings) should be treated when combined with actual colors (ANSI 256/Truecolor).
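One way to give each color depth its own type while still passing colors around as a single value is a tagged union. The sketch below uses Phobos' `std.sumtype`; the type names `Ansi16`, `Ansi256`, `Rgb`, `TermColor` and the function `foregroundSgr` are hypothetical, not scone's API. Each depth maps to its own SGR form: 30-37/90-97 for the 16 colors, `38;5;n` for 256 colors, `38;2;r;g;b` for truecolor.

```d
import std.format : format;
import std.stdio : writeln;
import std.sumtype : SumType, match;

// Hypothetical types, one per color depth (illustrative names only).
struct Ansi16  { ubyte index; }   // 0-15; RGB value is chosen by the user's theme
struct Ansi256 { ubyte index; }   // 0-255; xterm 256-color palette
struct Rgb     { ubyte r, g, b; } // 24-bit "truecolor"

// One value that can hold a color of any depth.
alias TermColor = SumType!(Ansi16, Ansi256, Rgb);

// Returns the SGR escape sequence that sets this color as the foreground.
string foregroundSgr(TermColor color)
{
    return color.match!(
        (Ansi16 c) {
            assert(c.index < 16, "ANSI 16-color index out of range");
            // 0-7 map to SGR 30-37, 8-15 to the bright range SGR 90-97.
            return c.index < 8 ? format("\x1b[%dm", 30 + c.index)
                               : format("\x1b[%dm", 90 + c.index - 8);
        },
        (Ansi256 c) => format("\x1b[38;5;%dm", c.index),
        (Rgb c) => format("\x1b[38;2;%d;%d;%dm", c.r, c.g, c.b)
    );
}

void main()
{
    // Every depth goes through the same function.
    writeln(foregroundSgr(TermColor(Ansi16(1))), "palette red", "\x1b[0m");
    writeln(foregroundSgr(TermColor(Ansi256(196))), "256-color red", "\x1b[0m");
    writeln(foregroundSgr(TermColor(Rgb(255, 0, 0))), "RGB red", "\x1b[0m");
}
```

A design benefit of `match` is that adding a new depth forces every handler site to cover it. The open question from this comment (how theme-dependent `Ansi16` values should mix with fixed RGB ones) is not solved by the types alone.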

|

I would like to keep as much backwards compatibility as possible, but I have a hard time seeing how I could implement colors internally and still have them work seamlessly. Should scone just try to print RGB colors on systems that do not advertise RGB support? Maybe I should just drop compatibility completely, since most terminals today handle at least 256 colors.


|

Thank you for your input @kiith-sa!

I'll take a better look at your post a bit later :)


On 15 May 2020, at 01:59, Ferdinand Majerech <notifications@github.com> wrote:

Sorry for the incoherent text, it's 2AM. None of the code was tested and probably does not compile.

I think best-effort compatibility can be handled by approximation, as long as you assume the user has a mostly sane 16-color color scheme:

Define each color as an RGB vector:

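```d
struct Color
{
    ubyte r;
    ubyte g;
    ubyte b;

    // Squared Euclidean distance between two colors in RGB space.
    // The parameter is named `other` so it does not shadow the field `b`.
    // ubyte operands are promoted to int, so the differences may be negative;
    // squaring them makes abs() unnecessary.
    uint squaredDistanceTo( Color other ) const
    {
        const dr = r - other.r;
        const dg = g - other.g;
        const db = b - other.b;
        return cast(uint)( dr * dr + dg * dg + db * db );
    }
}
```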

Define the ANSI colors as constants (I'm not thinking about style here, just how it would work):

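```d
enum Red = Color(255,0,0);
enum DarkRed = Color(128,0,0);
// same for the rest of the colors

// some kind of function so we can also get ANSI colors by index
// (0 = black, 1 = red, ..., 8-15 = the bright variants)
Color getANSI16Color( in ubyte index )
{
    switch(index)
    {
        case 1: return DarkRed;
        case 9: return Red;
        // etc.
        // D requires a default case in a non-final switch.
        default: assert(0, "not an ANSI 16-color index");
    }
}
```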

Equivalent code could also be written for the 256-color palette (an array may be better than a switch).
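As a sketch of the array idea, the whole 256-color table can be computed once at compile time through CTFE. Entries 16-255 follow xterm's fixed 6×6×6 cube and 24-step gray ramp; entries 0-15 are theme-dependent, so the values used for them here are an assumption chosen to match the `Red` and `DarkRed` constants. `makePalette256` and `palette256` are hypothetical names.

```d
import std.stdio : writeln;

struct Color { ubyte r, g, b; }

// Builds the 256-color palette (hypothetical helper).
Color[256] makePalette256()
{
    Color[256] p;

    // 0-15: the user-configurable ANSI colors. These values are an ASSUMED
    // default (dark variants at 128, bright at 255); real terminals differ.
    immutable Color[16] base = [
        Color(0, 0, 0),       Color(128, 0, 0),   Color(0, 128, 0),   Color(128, 128, 0),
        Color(0, 0, 128),     Color(128, 0, 128), Color(0, 128, 128), Color(192, 192, 192),
        Color(128, 128, 128), Color(255, 0, 0),   Color(0, 255, 0),   Color(255, 255, 0),
        Color(0, 0, 255),     Color(255, 0, 255), Color(0, 255, 255), Color(255, 255, 255),
    ];
    p[0 .. 16] = base[];

    // 16-231: 6x6x6 color cube, index = 16 + 36*r + 6*g + b.
    immutable ubyte[6] levels = [0, 95, 135, 175, 215, 255];
    foreach (r; 0 .. 6)
        foreach (g; 0 .. 6)
            foreach (b; 0 .. 6)
                p[16 + 36 * r + 6 * g + b] = Color(levels[r], levels[g], levels[b]);

    // 232-255: 24 grays from 8 to 238 in steps of 10.
    foreach (i; 0 .. 24)
    {
        const v = cast(ubyte)(8 + 10 * i);
        p[232 + i] = Color(v, v, v);
    }
    return p;
}

// Initialized by CTFE, so the table is built at compile time.
immutable Color[256] palette256 = makePalette256();

void main()
{
    writeln(palette256[196]);
}
```

Looking up a color by index is then a plain array access, `palette256[index]`, with no branches.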

The programmer could choose to use the base set of 16 constants, the extended 256 constants, or just plain RGB with the Color() constructor.

Then for compatibility: if running on a 16-color terminal, you'd approximate to the nearest color:

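```d
// Returns the index (0-15) of the ANSI color closest to `base`.
uint approximateTo16( in Color base )
{
    auto bestSquaredDistance = uint.max;
    uint bestColor;

    // `ubyte c` so the loop variable can be passed to getANSI16Color.
    foreach (ubyte c; 0 .. 16)
    {
        const candidate = getANSI16Color( c );
        // `base` is const (`in`), so squaredDistanceTo must be a const method.
        const distance = base.squaredDistanceTo( candidate );
        if ( distance < bestSquaredDistance )
        {
            bestSquaredDistance = distance;
            bestColor = c;
        }
    }
    return bestColor;
}
```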

With that you will know which color's sequence to emit.

This should be fast enough to run for every cell of a terminal filling a 4K screen, 100 times per second. If it is not, it can be optimized to execute in a few cycles (ideas: unroll the loop, get rid of branches where possible, use D array expressions).
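A rough sanity check of that estimate, assuming (hypothetically) a 3840 × 2160 screen with 8 × 16-pixel character cells and the 16-candidate loop above:

$$
\frac{3840}{8} \times \frac{2160}{16} = 480 \times 135 = 64\,800 \ \text{cells}
$$

$$
64\,800 \ \text{cells} \times 100 \ \text{frames/s} \times 16 \ \text{candidates} \approx 1.04 \times 10^{8} \ \text{distance computations/s}
$$

Each distance is a few integer subtractions, multiplications and additions, so this is on the order of $10^{9}$ simple operations per second: demanding for one core but plausible, which fits the suggestion to optimize only if needed.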

For 256 colors the approximation code above may be too slow, but it can be sped up with a better algorithm: generate an octree of the 256 colors (this can probably be done at compile time) and run a loop that cuts off half of the color space on each iteration, which should approximate most colors in 8 iterations. An octree is probably overkill, though; a simple 3D grid should work.
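Because entries 16-231 of the 256-color palette form a regular 6×6×6 grid, the "simple 3D grid" idea can run in constant time: snap each channel to its nearest cube level, snap the channel average to the nearest gray in the 232-255 ramp, and keep whichever is closer. This is a swapped-in technique, not the octree described above, and it assumes xterm's standard cube and gray levels. All names are hypothetical.

```d
import std.stdio : writeln;

struct Color { ubyte r, g, b; }

// Channel levels of the 6x6x6 color cube (palette entries 16-231).
immutable ubyte[6] cubeLevels = [0, 95, 135, 175, 215, 255];

// Index (0-5) of the cube level nearest to a channel value (0-255).
int nearestCubeStep(int v)
{
    if (v < 48) return 0;   // nearer to 0 than to 95
    if (v < 115) return 1;  // nearer to 95 than to 135
    return (v - 35) / 40;   // 135, 175, 215 and 255 are 40 apart
}

int squaredDistance(Color a, Color b)
{
    const dr = a.r - b.r;
    const dg = a.g - b.g;
    const db = a.b - b.b;
    return dr * dr + dg * dg + db * db;
}

// Approximates an RGB color with a 256-color palette index in constant time.
// Entries 0-15 are never returned because their RGB values depend on the
// user's theme.
ubyte approximateTo256(Color c)
{
    const ri = nearestCubeStep(c.r);
    const gi = nearestCubeStep(c.g);
    const bi = nearestCubeStep(c.b);
    const cube = Color(cubeLevels[ri], cubeLevels[gi], cubeLevels[bi]);

    // Gray ramp (entries 232-255): levels 8, 18, ..., 238.
    const avg = (c.r + c.g + c.b) / 3;
    const grayIndex = avg > 238 ? 23 : (avg < 3 ? 0 : (avg - 3) / 10);
    const level = cast(ubyte)(8 + 10 * grayIndex);
    const gray = Color(level, level, level);

    if (squaredDistance(c, gray) < squaredDistance(c, cube))
        return cast(ubyte)(232 + grayIndex);
    return cast(ubyte)(16 + 36 * ri + 6 * gi + bi);
}

void main()
{
    writeln(approximateTo256(Color(255, 128, 0)));
}
```

Checking the gray ramp matters for near-neutral colors: mid gray (128, 128, 128) falls between cube levels 95 and 135 but matches gray entry 244 exactly.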

This is probably a solved problem with a better solution than what I wrote (search for color approximation, color quantization, etc.).

TLDR:

- The programmer can always use RGB colors.
- They can also get the "16-color programmer experience" through RGB constants, and the same for 256 colors.
- Detect whether the terminal supports RGB, 256 or 16 colors.
- When drawing colored output:
  - If the terminal supports RGB, output that.
  - If it supports 256 colors, approximate to the closest of the 256 colors and output that.
  - If it supports 16 colors, approximate to the closest of the 16 colors and output that.


|

|

Detecting whether a terminal supports 256 colors or more is not that easy (so far I've only looked at POSIX, macOS specifically). The only way I've figured out to detect color support is … I currently see no proper way of actually detecting a terminal's color support (only assuming it), but maybe … Maybe a middle way would be to still support ANSI 16 colors (currently …

|

From my experience, I think this can be done on a case-by-case basis to cover most cases on most platforms, but there is probably no "perfect" way. Source: https://gist.github.com/XVilka/8346728

TLDR: I think querying … Note: I did not find a reliable way to detect 256-color support, but 256 colors do seem to work everywhere 24-bit colors are supported.
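A minimal heuristic along these lines, assuming the widely used conventions that `COLORTERM=truecolor` (or `24bit`) advertises RGB support and that `TERM` values such as `xterm-256color` advertise 256 colors. It only guesses; it does not query the terminal. `ColorDepth` and `guessColorDepth` are hypothetical names, and the function takes the variable values as arguments so it can be tested without touching the real environment.

```d
import std.algorithm.searching : canFind;
import std.process : environment;
import std.stdio : writeln;

enum ColorDepth { ansi16, ansi256, trueColor }

// Guesses the color depth from the values of COLORTERM and TERM.
// Heuristic only: a terminal may support more than it advertises.
ColorDepth guessColorDepth(string colorTerm, string term)
{
    // Assumed convention: COLORTERM=truecolor or COLORTERM=24bit means RGB.
    if (colorTerm == "truecolor" || colorTerm == "24bit")
        return ColorDepth.trueColor;
    // Assumed convention: TERM names containing "256color" mean 256 colors.
    if (term.canFind("256color"))
        return ColorDepth.ansi256;
    // Fall back to the 16 basic ANSI colors.
    return ColorDepth.ansi16;
}

void main()
{
    const depth = guessColorDepth(environment.get("COLORTERM", ""),
                                  environment.get("TERM", ""));
    writeln(depth);
}
```

Following the observation above, a `trueColor` result can also be taken to mean that 256-color sequences are safe.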

|

Thank you for your input @kiith-sa! 🙏 I'll read it more thoroughly later tonight.

|

I believe that to detect (guess) how many colors the terminal supports, we could check in the following order (I would prefer not to check the emulator's name, but I might still do that):

The info you provided is very useful, and once I get to implement this I'll revisit this thread. Once again, thank you very much @kiith-sa for the help you've provided ❤️

|

6 months later, and I would like to add that …

Modern terminal emulators (POSIX/Windows) support RGB ANSI color codes. This would be great to have as a feature.
