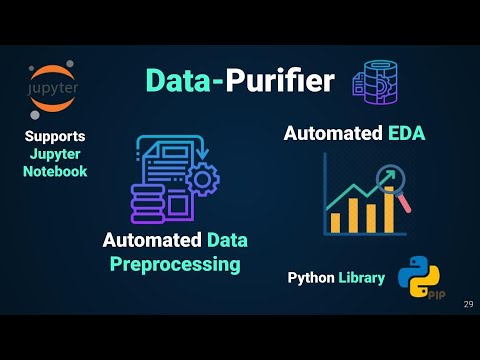

A Python library for automated exploratory data analysis, automated data cleaning, and automated data preprocessing for machine learning and natural language processing applications.


Prerequisites

To use Data-Purifier, it is recommended that you create a new environment and install the required dependencies.

To install from PyPI:

```bash
conda create -n <your_env_name> python=3.6 anaconda
conda activate <your_env_name>  # older conda versions: `source activate <your_env_name>` (Linux/macOS) or `activate <your_env_name>` (Windows)
pip install data-purifier
python -m spacy download en_core_web_sm
```

To install from source:

```bash
cd <Data-Purifier_Destination>
git clone https://github.com/Elysian01/Data-Purifier.git
# or download and unzip https://github.com/Elysian01/Data-Purifier/archive/master.zip
conda create -n <your_env_name> python=3.6 anaconda
conda activate <your_env_name>  # older conda versions: `source activate <your_env_name>` (Linux/macOS) or `activate <your_env_name>` (Windows)
cd Data-Purifier
pip install -r requirements.txt
python -m spacy download en_core_web_sm
```

Load the module:

```python
import pandas as pd  # used below to read the CSV datasets
import datapurifier as dp
from datapurifier import Mleda, Nlpeda, Nlpurifier, NLAutoPurifier, MlReport

print(dp.__version__)
```

Get the list of example datasets:

```python
print(dp.get_dataset_names())       # all example dataset names
print(dp.get_text_dataset_names())  # all example text dataset names
```

Load an example dataset by passing one of the dataset names from the list as an argument:

```python
df = dp.load_dataset("womens_clothing_e-commerce_reviews")
```

Automated NLP Pre-Processing using Data-Purifier Library Blog

Basic NLP

- It checks for null rows and drops them (if any), then performs the following analyses row by row and returns a dataframe containing the results:

- Word Count

- Character Count

- Average Word Length

- Stop Word Count

- Uppercase Word Count

You can then view the distribution of any of these metrics by selecting its column from the dropdown list; the plot is generated automatically.

- It can also perform sentiment analysis on the dataframe row by row, giving the polarity of each sentence (or row); you can then view the distribution of polarity.
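The per-row metrics listed above can be sketched in plain Python. This is an illustration, not Data-Purifier's implementation: `basic_stats` and `analyse_rows` are hypothetical helpers, words are split on whitespace, and the tiny `STOP_WORDS` set is an assumption (a real pipeline would use a full list such as spaCy's or NLTK's). Sentiment polarity is left out because it needs a trained model or a lexicon.

```python
# Assumed, minimal stop-word list for illustration only.
STOP_WORDS = {"a", "an", "the", "is", "and", "of", "to", "in", "it"}

def basic_stats(text: str) -> dict:
    """Per-row metrics: word/char counts, average word length, stop and uppercase words."""
    words = text.split()  # whitespace tokenization
    word_count = len(words)
    return {
        "word_count": word_count,
        "char_count": len(text),  # includes spaces
        "avg_word_length": sum(len(w) for w in words) / word_count if word_count else 0.0,
        "stop_word_count": sum(1 for w in words if w.lower() in STOP_WORDS),
        "upper_word_count": sum(1 for w in words if w.isupper()),
    }

def analyse_rows(rows):
    """Drop null rows, then compute the metrics for each remaining row."""
    return [basic_stats(r) for r in rows if r is not None]

for stats in analyse_rows(["The cat sat on the MAT", None, "I LOVE it"]):
    print(stats)
```

Here `char_count` includes spaces; other tools sometimes count only non-space characters, so numbers may differ from the library's output.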

Word Analysis

- Counts occurrences of a specific word entered by the user in the textbox.

- Plots a word cloud.

- Performs unigram, bigram, and trigram analysis, returning a dataframe for each along with its distribution plot.

Code Implementation

For automated EDA and automated data cleaning of an NLP dataset, load the dataset and pass the dataframe along with the name of the column containing the text data.

```python
import pandas as pd

# The CSV has no header row and is latin-1 encoded
nlp_df = pd.read_csv("./datasets/twitter16m.csv", header=None, encoding="latin-1")
nlp_df.columns = ["tweets", "sentiment"]
```

Basic Analysis

For basic EDA, pass `analyse="basic"` to the constructor:

```python
eda = Nlpeda(nlp_df, "tweets", analyse="basic")
eda.df  # view the resulting dataframe
```

Word Analysis

For word-based EDA, pass `analyse="word"` to the constructor:

```python
eda = Nlpeda(nlp_df, "tweets", analyse="word")
eda.unigram_df  # view the unigram dataframe
```

- In automated data preprocessing, the data goes through the following pipeline and the cleaned dataframe is returned:

- Drops null rows

- Converts everything to lowercase

- Removes digits/numbers

- Removes HTML tags

- Converts accented characters to plain letters

- Removes special and punctuation characters

- Removes stop words

- Removes multiple spaces
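The pipeline above can be approximated with the standard library's `re` and `unicodedata` modules. This sketch reflects common practice for each step, not the library's code; `clean_text`, `clean_rows`, and the small `STOP_WORDS` set are hypothetical. Note that the ASCII round-trip used to strip accents also drops any other non-Latin characters.

```python
import re
import unicodedata

# Assumed, minimal stop-word list for illustration only.
STOP_WORDS = {"a", "an", "the", "is", "and", "of", "to", "in", "it", "this"}

def clean_text(text: str) -> str:
    """Apply the preprocessing steps, in the order listed above, to one string."""
    text = text.lower()                                    # lowercase
    text = re.sub(r"\d+", "", text)                        # remove digits
    text = re.sub(r"<[^>]+>", " ", text)                   # remove HTML tags (naive regex)
    text = unicodedata.normalize("NFKD", text)             # split accents from base letters
    text = text.encode("ascii", "ignore").decode("ascii")  # drop the accent marks
    text = re.sub(r"[^a-z\s]", " ", text)                  # remove special chars and punctuation
    words = [w for w in text.split() if w not in STOP_WORDS]  # remove stop words
    return " ".join(words)                                 # rejoining collapses multiple spaces

def clean_rows(rows):
    """Drop null rows, then clean each remaining row."""
    return [clean_text(r) for r in rows if r is not None]

print(clean_rows(["<p>The Café opened in 2021!!   It is GREAT.</p>", None]))
```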

Code Implementation

Pass in the dataframe along with the name of the column you want to clean:

```python
cleaned_df = NLAutoPurifier(nlp_df, target="tweets")
```

- Here you can choose the preprocessing methods from the GUI.

- It provides the following cleaning techniques: just tick a checkbox and the system performs the operation for you automatically.

| Features | Features | Features |
|---|---|---|
| Drop Null Rows | Lower all Words | Contraction to Expansion |
| Removal of emojis | Removal of emoticons | Conversion of emoticons to words |
| Count Urls | Get Word Count | Count Mails |
| Conversion of emojis to words | Remove Numbers and Alphanumeric words | Remove Stop Words |
| Remove Special Characters and Punctuations | Remove Mails | Remove Html Tags |
| Remove Urls | Remove Multiple Spaces | Remove Accented Characters |
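Several of these options (counting and removing URLs and emails, removing multiple spaces) are typically implemented with regular expressions. A rough sketch with deliberately simplified, assumed patterns; the helper names are hypothetical and real-world URL and email matching needs more care:

```python
import re

# Simplified patterns (assumptions): fine for a demo, not for every edge case.
URL_RE = re.compile(r"https?://\S+|www\.\S+")
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")

def count_urls(text: str) -> int:
    return len(URL_RE.findall(text))

def count_mails(text: str) -> int:
    return len(EMAIL_RE.findall(text))

def remove_urls_and_mails(text: str) -> str:
    text = URL_RE.sub(" ", text)
    text = EMAIL_RE.sub(" ", text)
    return " ".join(text.split())  # also removes multiple spaces

tweet = "Mail me at jane.doe@example.com or visit https://example.com and www.test.org"
print(count_urls(tweet), count_mails(tweet))
print(remove_urls_and_mails(tweet))
```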

- You can convert words to their base form by selecting either the stemming or the lemmatization option.

- Remove Top Common Words: by giving a range of words, you can remove the most common words.

- Remove Top Rare Words: by giving a range of words, you can remove the rarest words.
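Removing the top common or rare words amounts to ranking words by corpus frequency and filtering them out. A sketch assuming the user supplies two counts, `n_common` and `n_rare` (hypothetical parameters; the GUI's range input may work differently), with ties broken by first occurrence:

```python
from collections import Counter

def remove_top_common_and_rare(texts, n_common=0, n_rare=0):
    """Drop the n_common most frequent and n_rare least frequent words across all texts."""
    counts = Counter(w for t in texts for w in t.split())
    ranked = [w for w, _ in counts.most_common()]  # most to least frequent
    to_drop = set(ranked[:n_common])
    if n_rare > 0:  # guard: ranked[-0:] would select every word
        to_drop |= set(ranked[-n_rare:])
    return [" ".join(w for w in t.split() if w not in to_drop) for t in texts]

reviews = ["good dress good fit", "good price", "bad zipper"]
print(remove_top_common_and_rare(reviews, n_common=1, n_rare=1))
```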

When you are done selecting your cleaning techniques, click the Start Purifying button to let the magic begin. Once it completes, you can access the cleaned dataframe via `<obj>.df`.

Code Implementation

```python
pure = Nlpurifier(nlp_df, "tweets")
```

View the processed and purified dataframe:

```python
pure.df
```

- It reports the shape, the number of categorical and numerical features, a description of the dataset, and the number of null values along with their percentages.

- To help you understand the distribution of the dataset and gain useful insights, it generates interactive plots: select the desired column and the system plots it automatically. The plots include:

- Count plot

- Correlation plot

- Joint plot

- Pair plot

- Pie plot
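The summary statistics and the correlation plot rest on simple computations. Below is a stdlib-only sketch on a list of row dictionaries (a stand-in for a dataframe, with `None` as null); `dataset_overview`, `column_pair`, and `pearson` are hypothetical helpers, and Pearson correlation is assumed as the coefficient behind the correlation plot. With pandas you would typically use `df.shape`, `df.isnull().sum()`, `df.isnull().mean() * 100`, and `df.select_dtypes("number").corr()` instead.

```python
import math
from numbers import Number

def dataset_overview(rows, columns):
    """Shape, numerical/categorical split, and null counts with percentages."""
    n_rows = len(rows)
    numerical, categorical, nulls = [], [], {}
    for col in columns:
        present = [row.get(col) for row in rows if row.get(col) is not None]
        # Treat a column as numerical if every non-null value is a number (bools excluded).
        if present and all(isinstance(v, Number) and not isinstance(v, bool) for v in present):
            numerical.append(col)
        else:
            categorical.append(col)
        n_null = n_rows - len(present)
        nulls[col] = (n_null, 100.0 * n_null / n_rows if n_rows else 0.0)
    return {"shape": (n_rows, len(columns)), "numerical": numerical,
            "categorical": categorical, "nulls": nulls}

def column_pair(rows, a, b):
    """Values of columns a and b from rows where both are non-null (pairwise deletion)."""
    pairs = [(r[a], r[b]) for r in rows if r.get(a) is not None and r.get(b) is not None]
    return [p[0] for p in pairs], [p[1] for p in pairs]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        return float("nan")  # undefined for a constant column
    return cov / (sx * sy)

# Toy rows for illustration.
rows = [
    {"x": 1.0, "y": 2.0, "label": "p"},
    {"x": 2.0, "y": None, "label": "q"},
    {"x": 3.0, "y": 6.0, "label": "p"},
    {"x": 4.0, "y": 8.0, "label": None},
]
print(dataset_overview(rows, ["x", "y", "label"]))
print(pearson(*column_pair(rows, "x", "y")))
```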

Code Implementation

Load the dataset and let the magic of automated EDA begin:

```python
import pandas as pd
from datapurifier import Mleda

df = pd.read_csv("./datasets/iris.csv")
ae = Mleda(df)
ae
```

The report contains a sample of the data, its shape, the number of numerical and categorical features, data uniqueness information, a description of the data, and null-value information.

```python
import pandas as pd
from datapurifier import MlReport

df = pd.read_csv("./datasets/iris.csv")
report = MlReport(df)
```

Official Documentation: https://cutt.ly/CbFT5Dw

Python Package: https://pypi.org/project/data-purifier/