Modeling and simulating experiments on communication and computing costs.

For small transfers, latencies dominate; more participants increase latency.

For large transfers, bandwidth is key; bottlenecks are easily exposed.

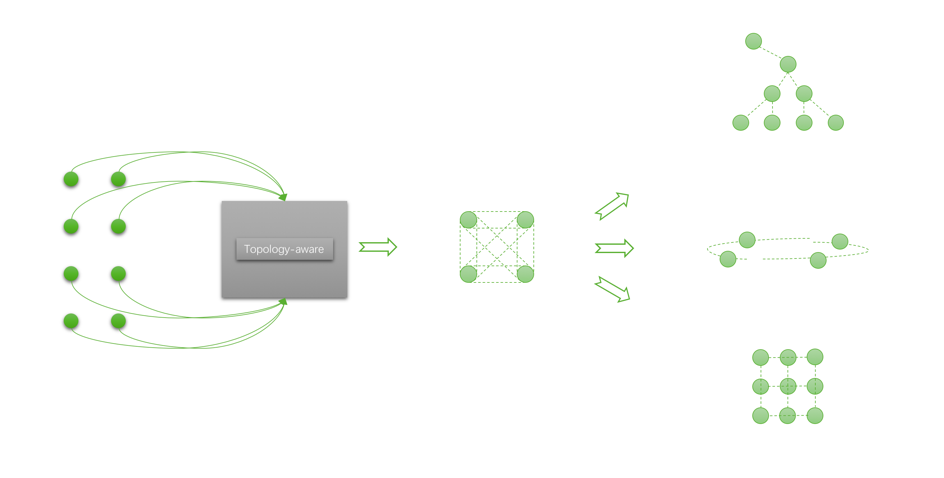

Thus, topology-aware implementation for high performance is necessary.

Btw, collectives are often non-overlapped, but computation and communication are not.

Topologies can be complex, where not every system is a fat tree, there are ring topology and mesh topology as well.

Communication algorithms are topology-dependent.

Most collectives amenable to bandwidth-optimal implementation on rings, and many topologies can be interpreted as one or more rings [P. Patarasuk and X. Yuan].

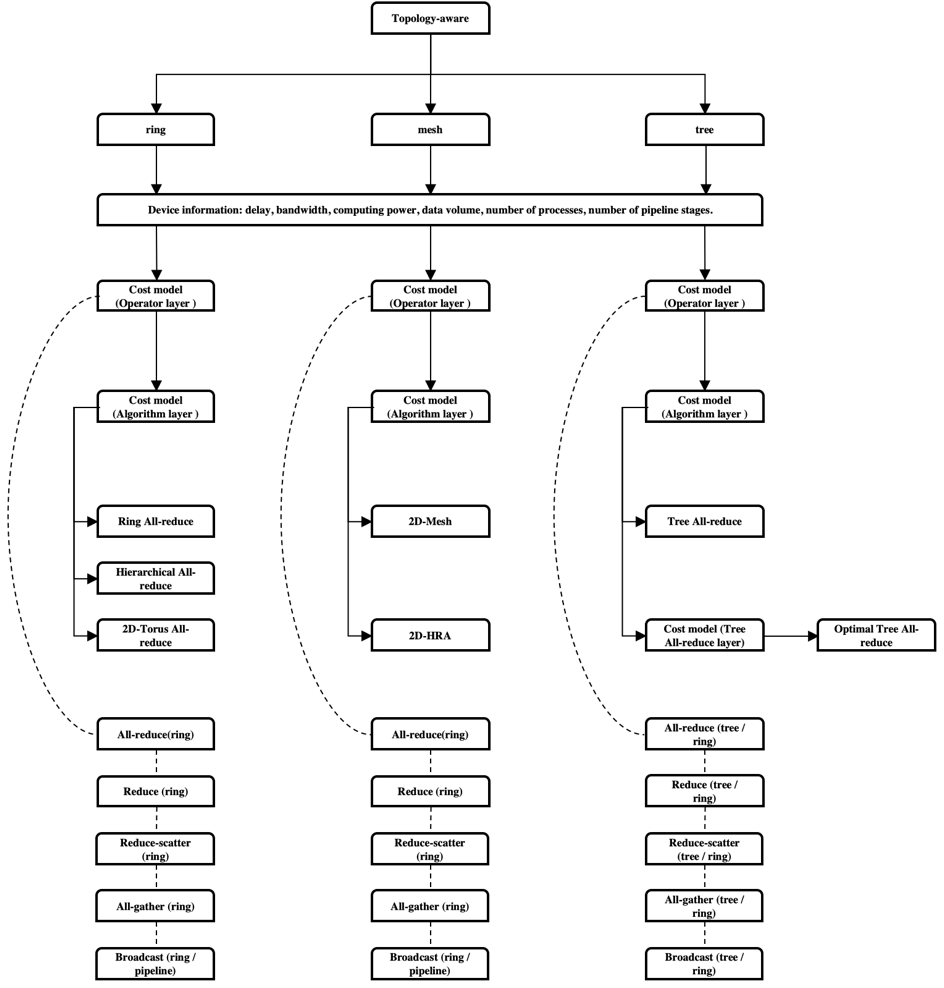

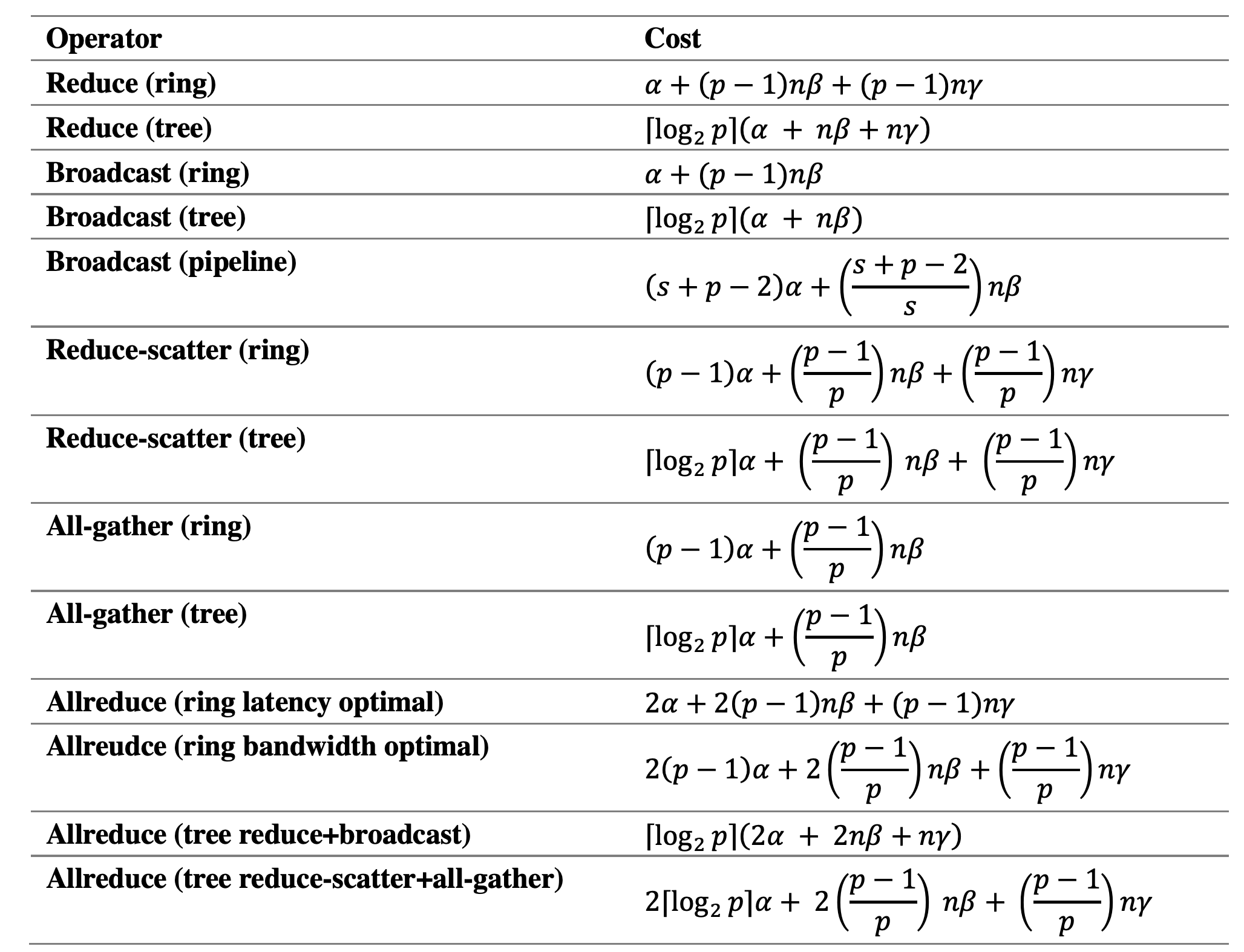

In this experiment, the delay, bandwidth, and calculation of multiple communication operators are modelled, including reduce, broadcast, reduce-scatter, all-gather, all-reduce and etc.

On the basis, several factors have been considered:

The bandwidth difference between intra machine and inter machine.

The operator complexity of different topological structures.

The overlap of communication and computation.

The experimental process is shown in the figures below.

α: latency

β: bandwidth

γ: computation cost

n: number of bytes transferred

p: number of process

s: split data into s messages)

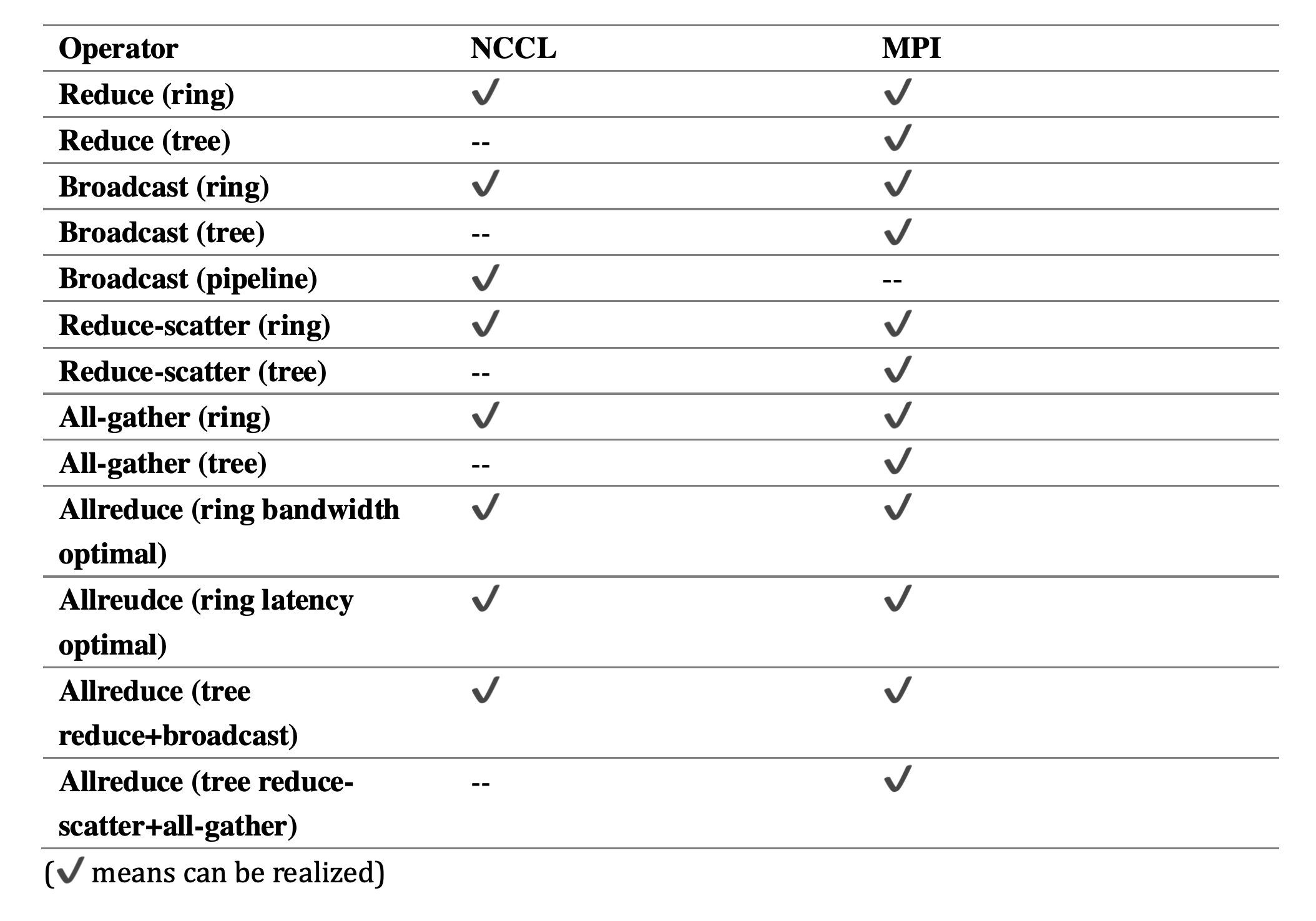

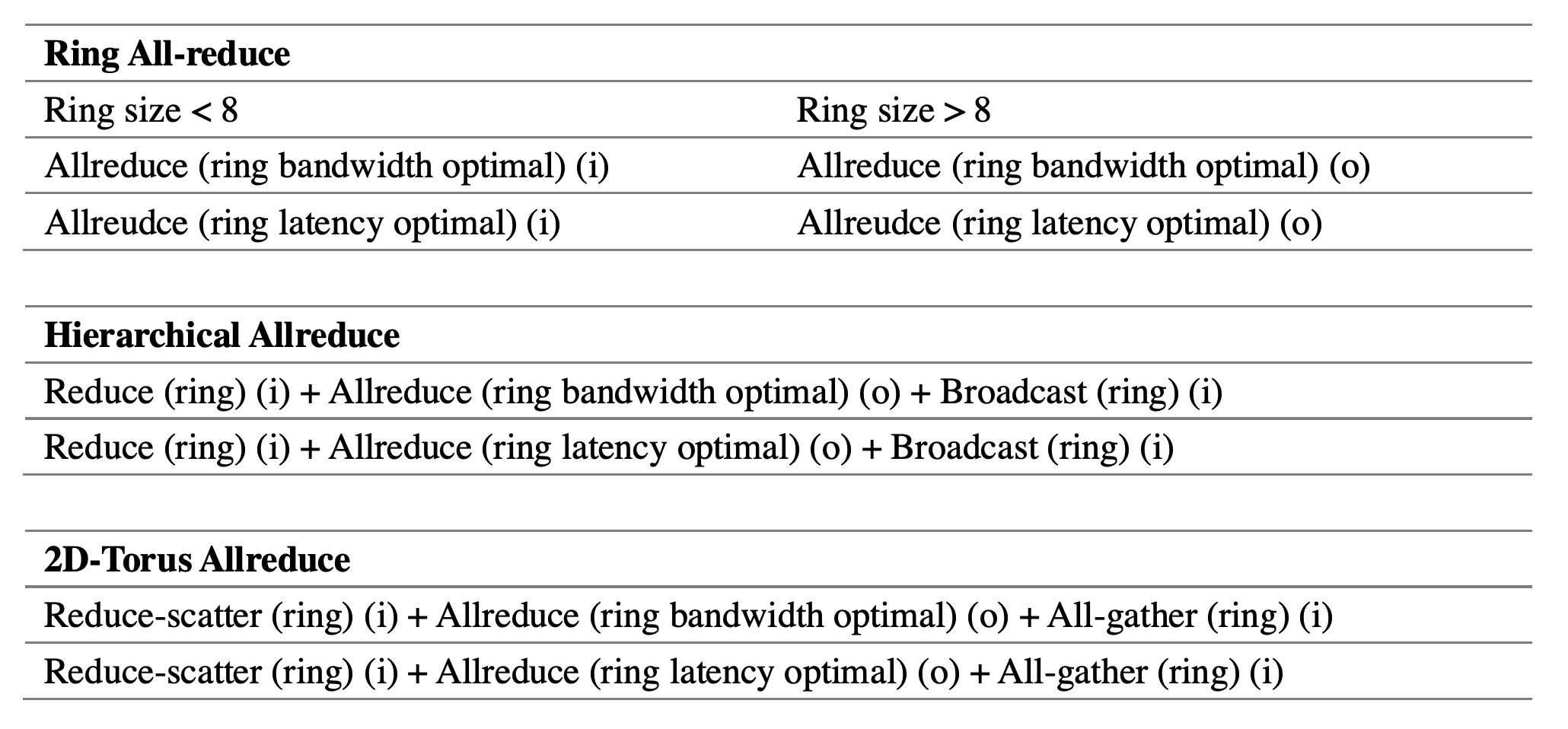

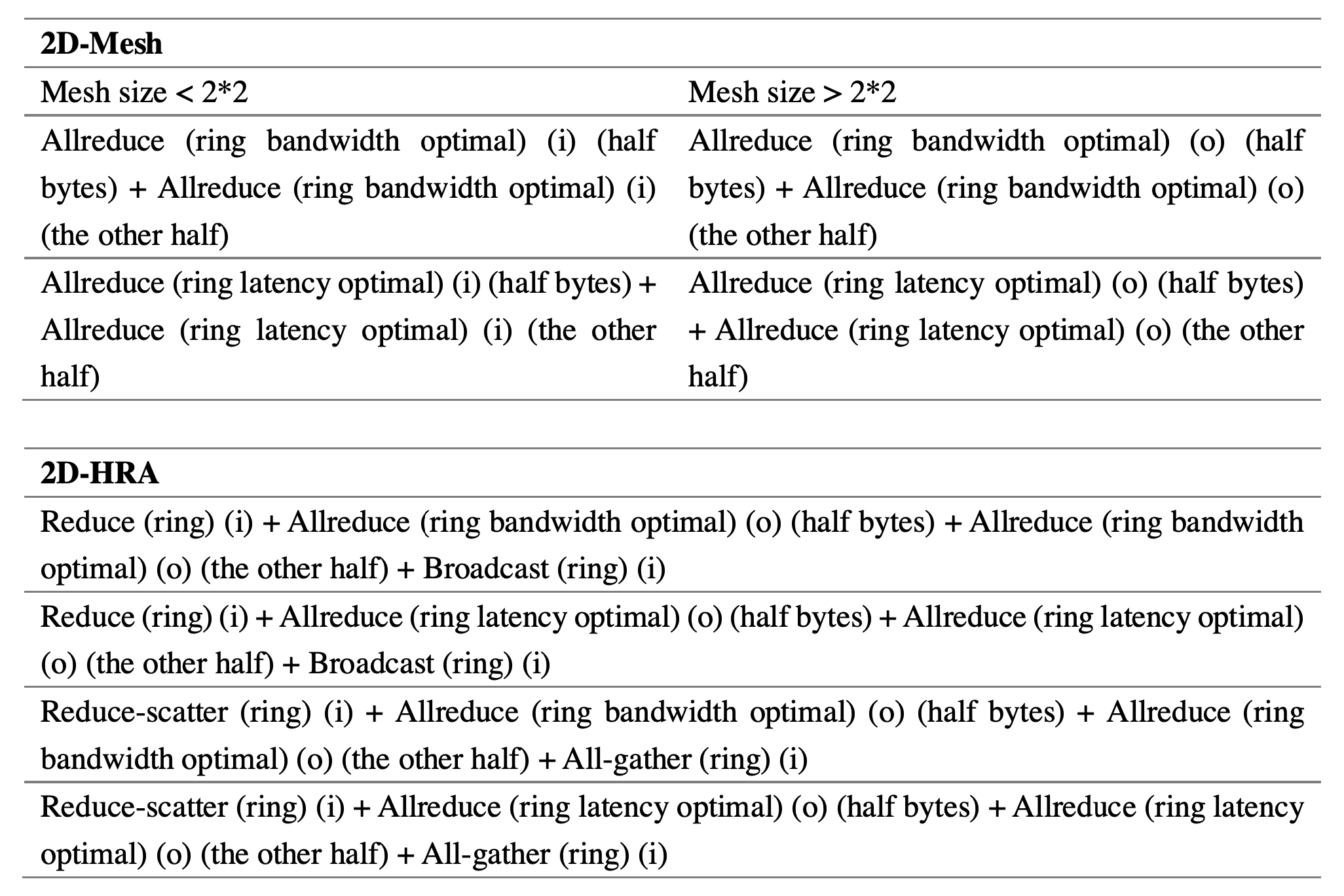

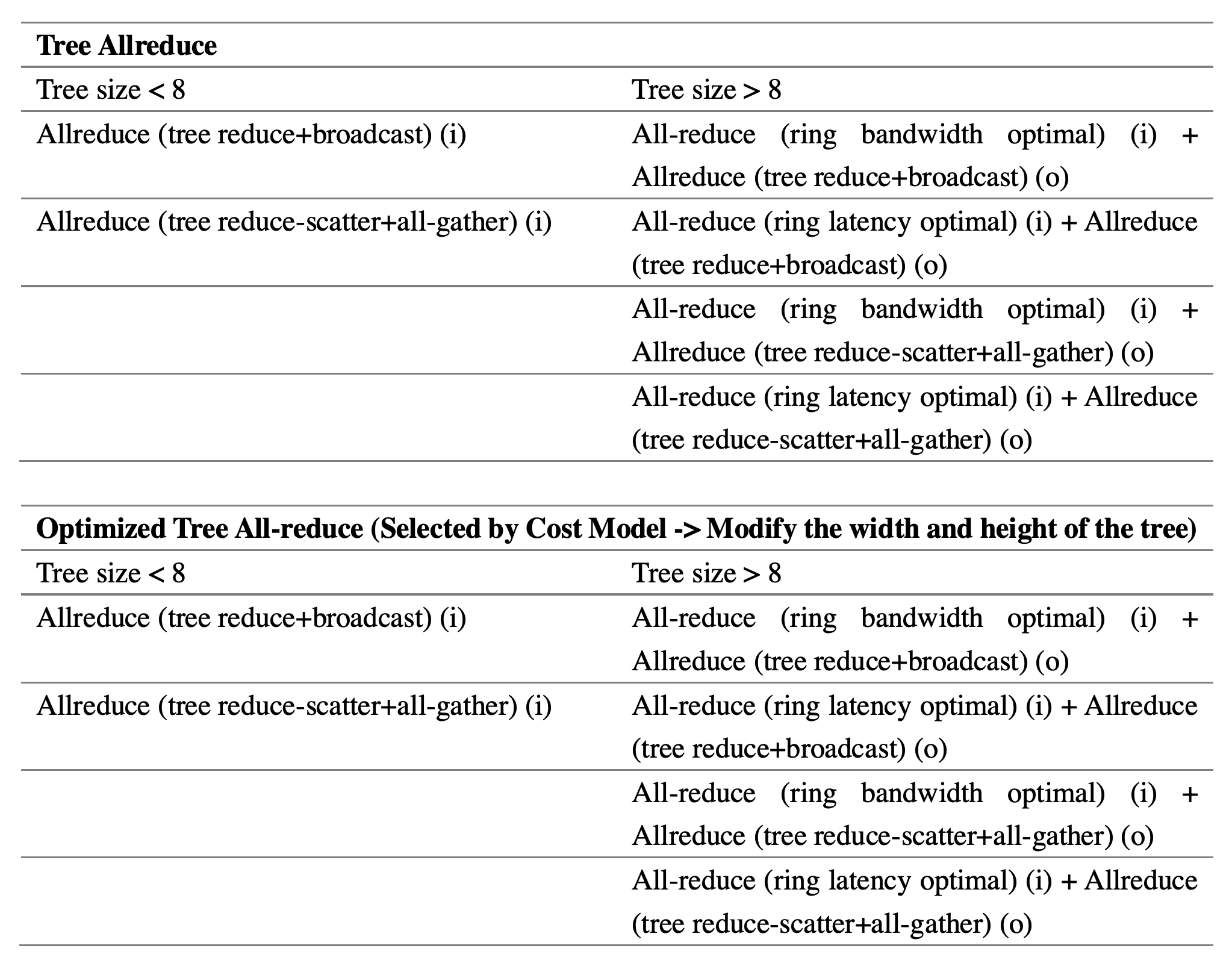

The types of operators that constitute different synchronization algorithms are different, as shown in the table.

i: intra group -> bandwidth β

o: inter group -> bandwidth β'

If the overlapping of communication and computation is considered, the optimal situation is that the communication time is less than the computation time (completely overlapping), then the cost model of each formula can be rewrite as:

cost=max((α,β),γ)

If not ideal, an overlap factor δ is required:

δ=(α,β)⊓γ

cost=(α,β)+γ-δ

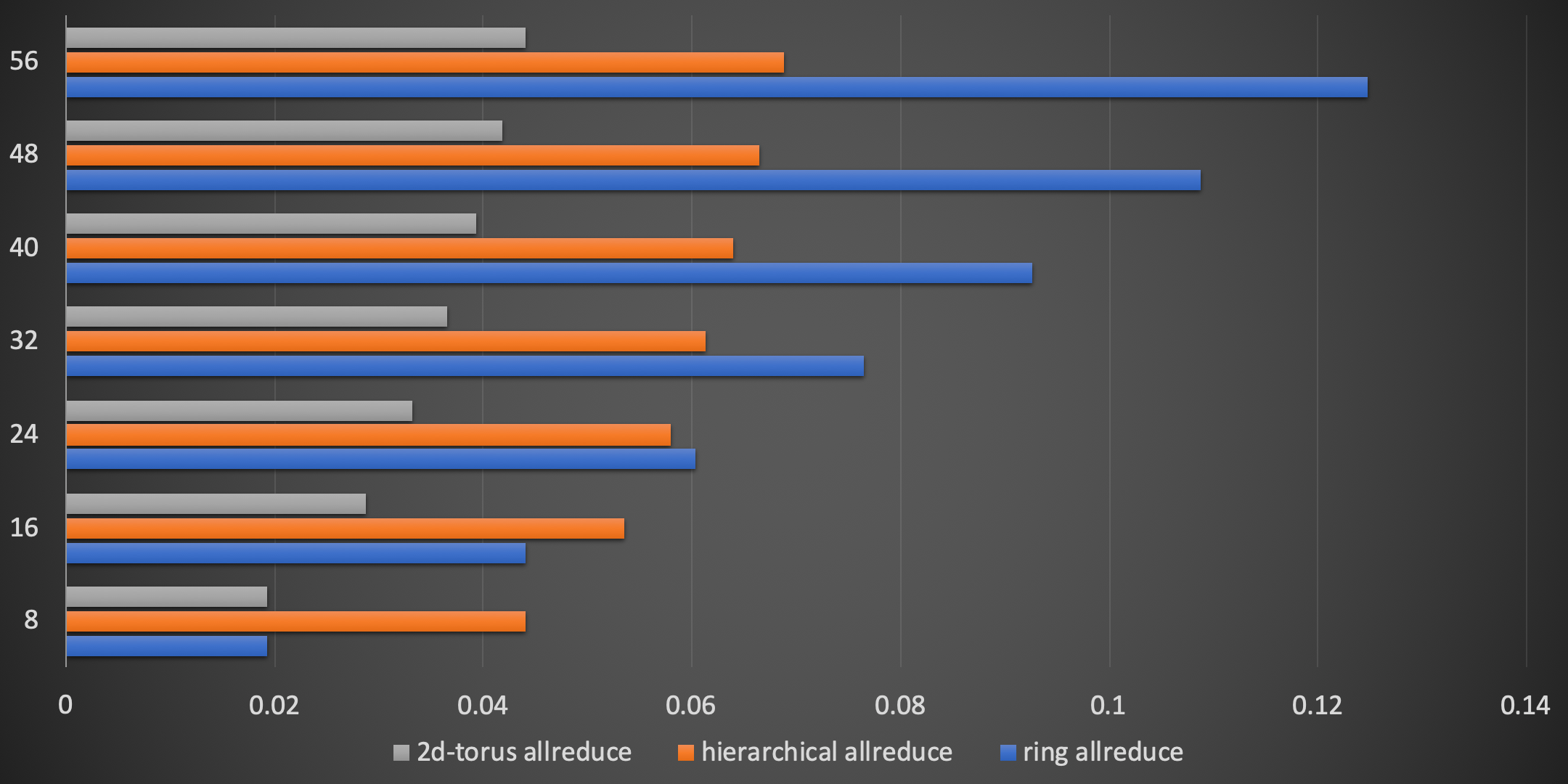

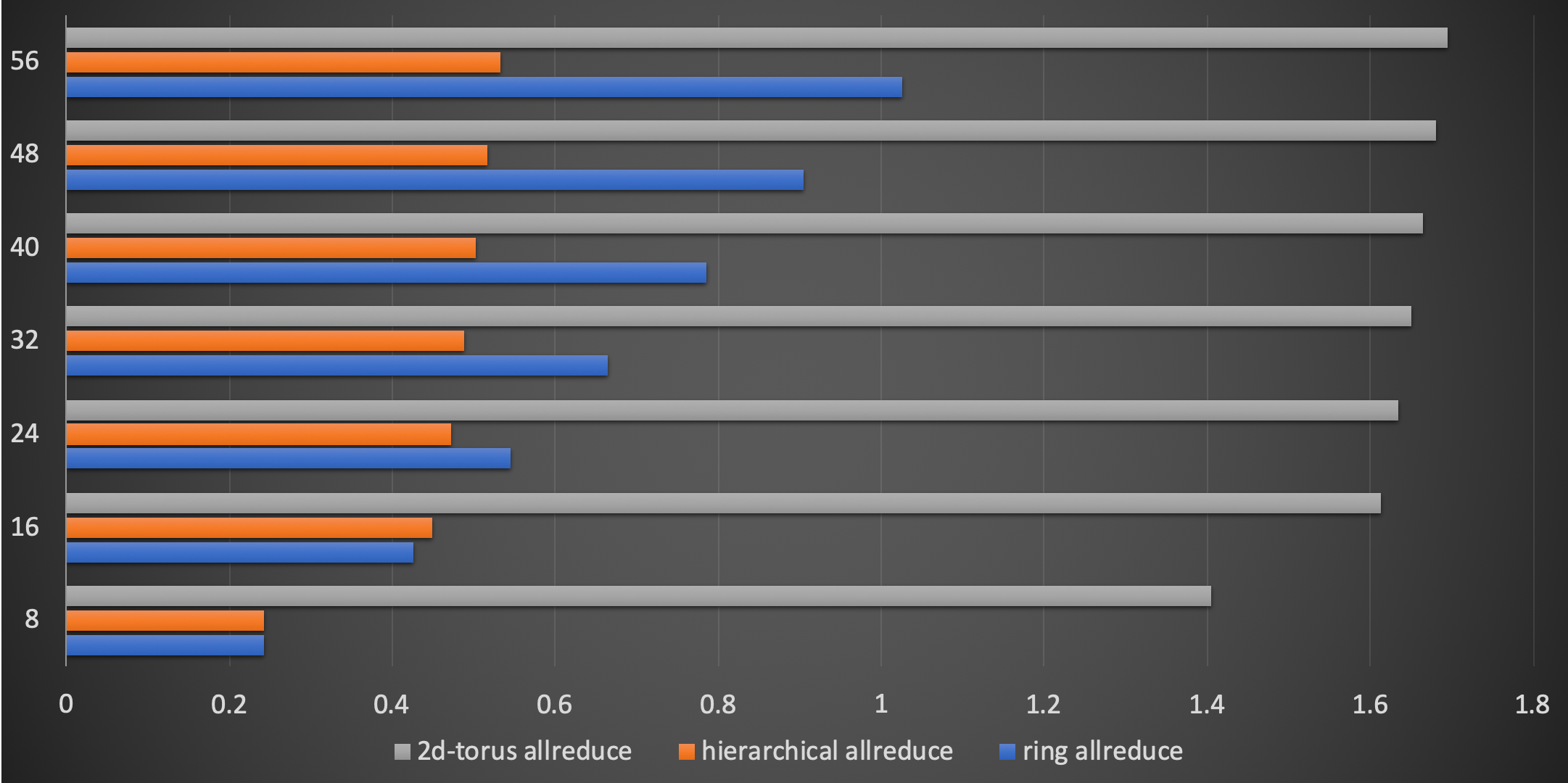

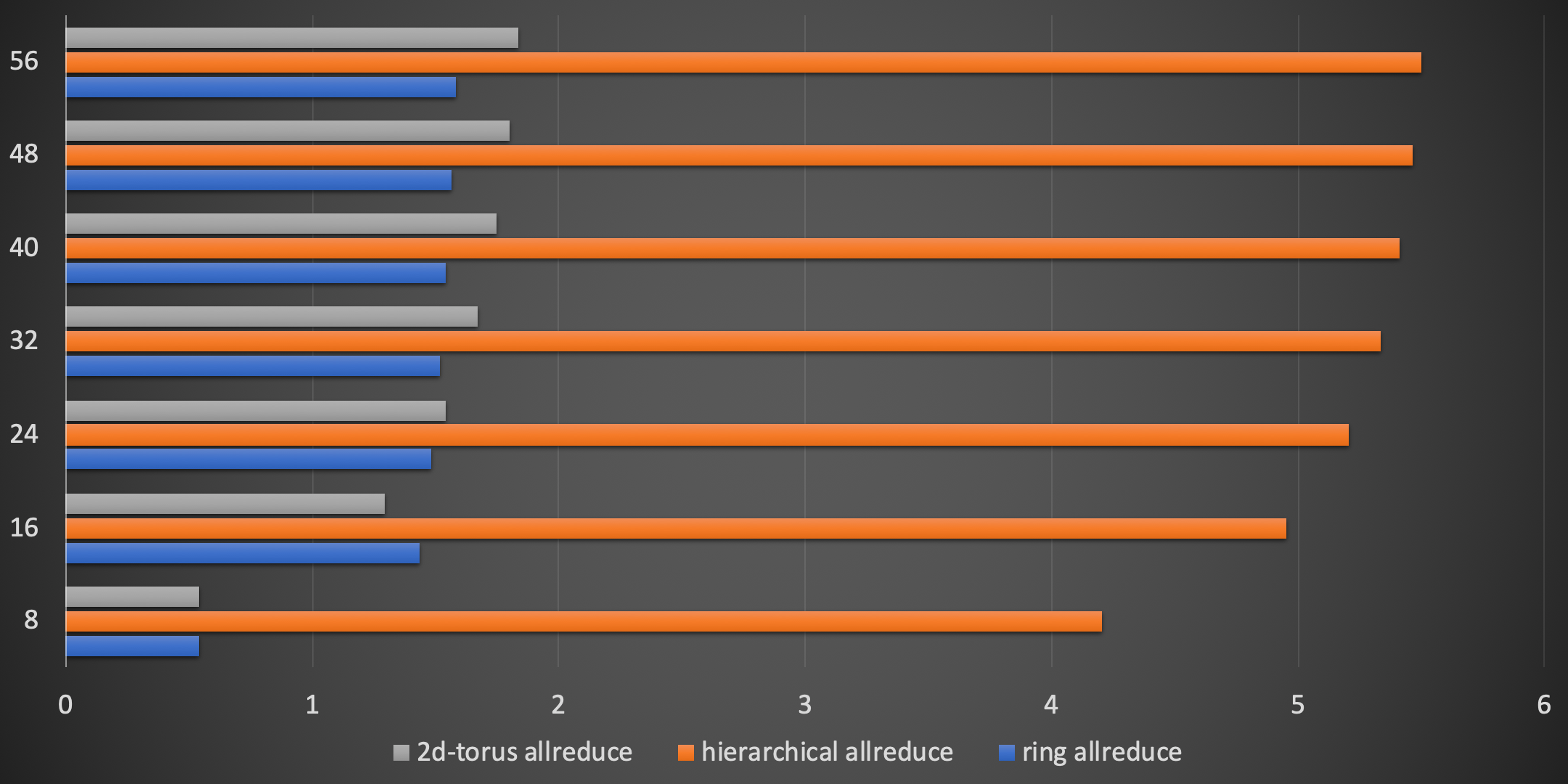

We only show part of the results.

x-axis: cost.

y-axis: number of process.

While each synchronization algorithm has its corresponding optimal deployment scenario, which makes AutoTopology necessary.