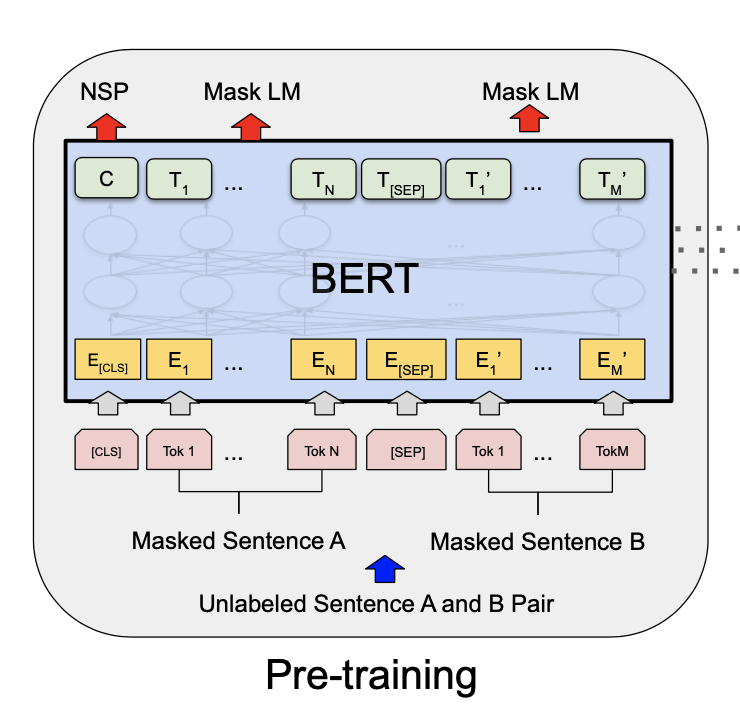

Bidirectional Encoder Representations from Transformers (BERT) is designed to pretrain deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers. It has achieved state-of-the-art results on many NLP tasks, such as question answering, sentiment analysis and classification, and language inference.
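To make "conditioning on both left and right context" concrete, here is a minimal sketch in plain Python that contrasts a left-to-right attention mask with a bidirectional one. The helper names `attention_mask` and `visible_context` and the example sentence are assumptions made for illustration; they are not part of BERT or any library, and the sketch leaves out pretraining details such as token masking.

```python
def attention_mask(seq_len, bidirectional):
    """Build a seq_len x seq_len mask (hypothetical helper).

    mask[i][j] == 1 means position i is allowed to attend to position j.
    """
    return [
        [1 if bidirectional or j <= i else 0 for j in range(seq_len)]
        for i in range(seq_len)
    ]


def visible_context(tokens, position, bidirectional):
    """Return the tokens that `position` can attend to under the given mask."""
    mask = attention_mask(len(tokens), bidirectional)
    return [tok for tok, allowed in zip(tokens, mask[position]) if allowed]


tokens = ["the", "bank", "of", "the", "river"]

# Left-to-right model: "bank" (index 1) only sees itself and what precedes it.
left_only = visible_context(tokens, 1, bidirectional=False)

# Bidirectional encoder: "bank" also sees "river" to its right.
both_sides = visible_context(tokens, 1, bidirectional=True)

print(left_only)
print(both_sides)
```

With the bidirectional mask, the representation of "bank" can draw on "river", which is the kind of right-hand context a left-to-right model cannot use at that position.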

For an in-depth explanation, see the original paper: https://arxiv.org/pdf/1810.04805.pdf

Check out my blog post for a practical implementation of state-of-the-art BERT in TensorFlow: https://medium.com/nerd-for-tech/multi-class-classification-using-bert-3e02a050170d