-

Notifications

You must be signed in to change notification settings - Fork 1.7k

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

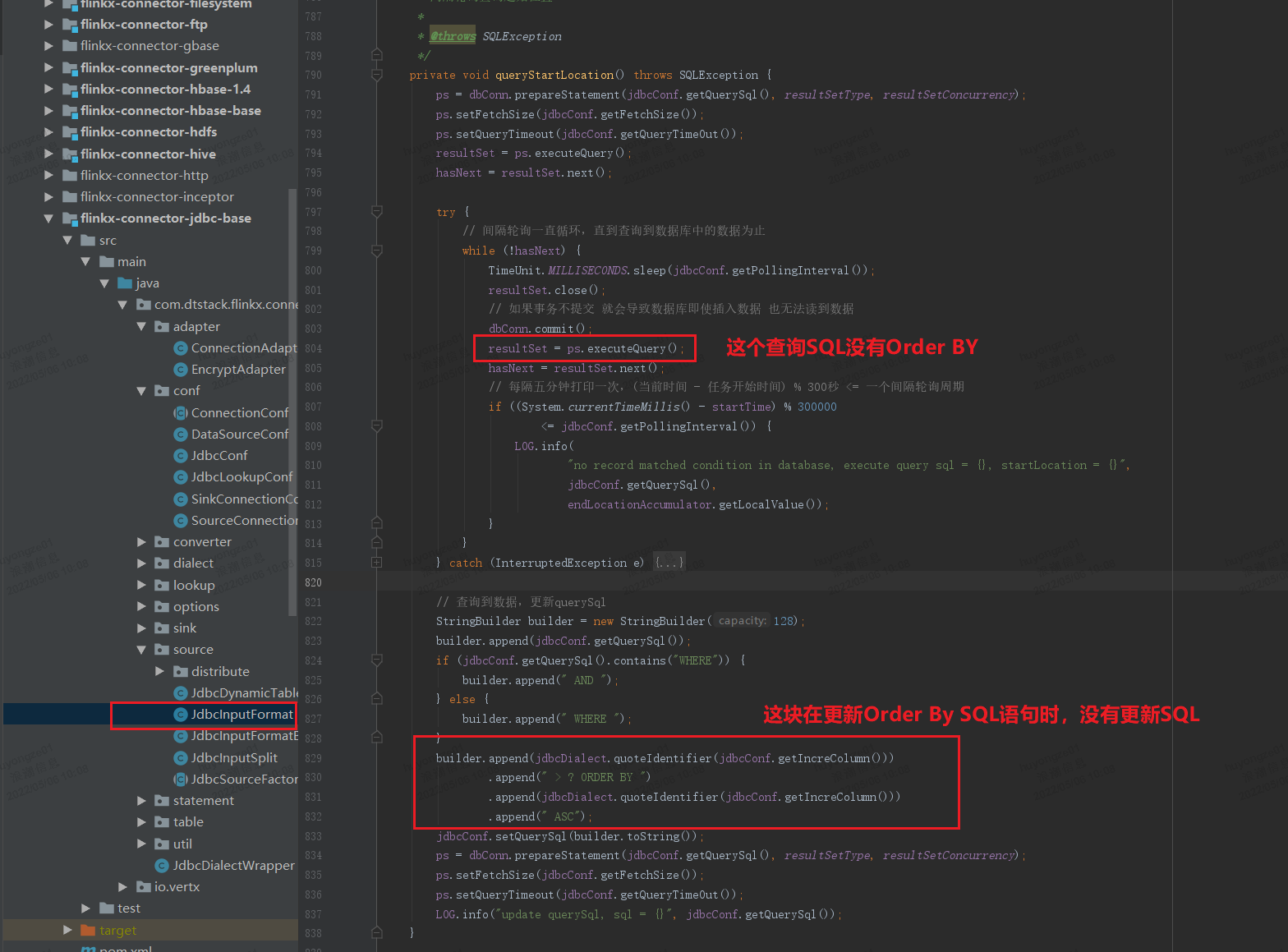

Duplicate data when extracting data with JDBC polling #764

Labels

bug

Comments

Yes, it's a bug. Thank you for bringing up this issue. Would it be convenient for you to submit a PR to fix it?

OK, I will try to fix it.

FlechazoW pushed a commit that referenced this issue on May 9, 2022

[hotfix-764][flinkx-connector-jdbc-base] Fixed Duplicate data occurred when use jdbc polling ,this commit update notes [hotfix-772][oceanbase]Fixed Could not found oceanbase clinet dependencies

FlechazoW pushed a commit that referenced this issue on May 30, 2022

[hotfix-764][flinkx-connector-jdbc-base] Fixed Duplicate data occurred when use jdbc polling ,this commit update notes [hotfix-772][oceanbase]Fixed Could not found oceanbase clinet dependencies

Paddy0523 added a commit to Paddy0523/chunjun that referenced this issue on Jun 1, 2022

…olling and startLocation is not null

Repeated consumption still exists in polling mode.

yanghuaiGit pushed a commit to yanghuaiGit/chunjun that referenced this issue on Jun 24, 2022

…olling and startLocation is not null


Describe the bug

A clear and concise description of what the bug is.

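When a JDBC source pulls data by scanning the table in real time (polling mode), some records are duplicated if the table holds more than 10,000 rows. Data added after that is not duplicated.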

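Cause: during real-time extraction, the first SQL query against the JDBC source is not sorted by splitKey. As a result, the start location used for the next poll is not the maximum value read in the previous poll, which produces duplicate data.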
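The mechanism described in the cause can be illustrated with a small simulation. This is only a sketch under stated assumptions, not ChunJun's actual reader code: the function names `poll` and `run` are hypothetical, the table is a plain list of integer keys, and the reader is assumed to take the key of the last row it read as the next start location.

```python
def poll(table, start, ordered):
    """One polling round: keys greater than `start`, plus the next start location.

    The next start location is the key of the LAST row read. It equals the
    maximum key only if the result set is sorted by that key.
    """
    batch = [k for k in table if start is None or k > start]
    if ordered:
        batch = sorted(batch)
    next_start = batch[-1] if batch else start
    return batch, next_start


def run(first_query_ordered):
    # Assumed physical row order that differs from key order, as a database
    # may return it without ORDER BY: keys 7001..10000 first, then 1..7000.
    table = list(range(7001, 10001)) + list(range(1, 7001))
    received = []
    start = None
    for round_no in range(3):
        # Only the first query is unsorted in the buggy case.
        ordered = first_query_ordered or round_no > 0
        batch, start = poll(table, start, ordered)
        received.extend(batch)
    return received


buggy = run(first_query_ordered=False)
fixed = run(first_query_ordered=True)
duplicates_buggy = len(buggy) - len(set(buggy))
duplicates_fixed = len(fixed) - len(set(fixed))
print(duplicates_buggy, duplicates_fixed)
```

In the unsorted case the first poll ends on key 7000, so the second poll re-reads every key above 7000. Once the start location has caught up with the true maximum, later polls return nothing new, which is consistent with the report that only the initial load shows duplicates.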

Version found: 1.12.1

To Reproduce

Steps to reproduce the behavior:

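1. Insert 10,000 rows into Oracle.

2. Configure and run a real-time extraction job from Oracle to Hive.

3. Observe that the last few thousand rows are duplicated.

4. Without stopping the job, insert more data.

5. The newly inserted data is not duplicated.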

Expected behavior

A clear and concise description of what you expected to happen.

Real-time extraction from Oracle should not produce duplicate data.
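A minimal sketch of the expected behavior, assuming every polling query (including the first) is sorted by the polling key. It uses Python's built-in `sqlite3` as a stand-in for Oracle; the table `src`, the column `id`, and the helpers `insert_rows` and `poll` are hypothetical names chosen for illustration and are not taken from ChunJun.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE src (id INTEGER PRIMARY KEY, payload TEXT)")


def insert_rows(first, last):
    """Insert rows with ids first..last (inclusive)."""
    conn.executemany(
        "INSERT INTO src (id, payload) VALUES (?, ?)",
        [(i, f"row-{i}") for i in range(first, last + 1)],
    )
    conn.commit()


def poll(start):
    """Fetch ids after `start`, always sorted by the polling key."""
    if start is None:
        cur = conn.execute("SELECT id FROM src ORDER BY id")
    else:
        cur = conn.execute(
            "SELECT id FROM src WHERE id > ? ORDER BY id", (start,)
        )
    ids = [row[0] for row in cur]
    # Because the result is sorted, the last id is the maximum id read.
    return ids, (ids[-1] if ids else start)


received = []
start = None

insert_rows(1, 10000)          # initial load
ids, start = poll(start)
received += ids

insert_rows(10001, 10500)      # data added while the job keeps running
ids, start = poll(start)
received += ids

ids, start = poll(start)       # nothing new: start location stays put
received += ids
```

With the sort applied to every query, the start location always equals the largest key already read, so each row is delivered exactly once across the initial load and later inserts.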

Screenshots

If applicable, add screenshots to help explain your problem.
