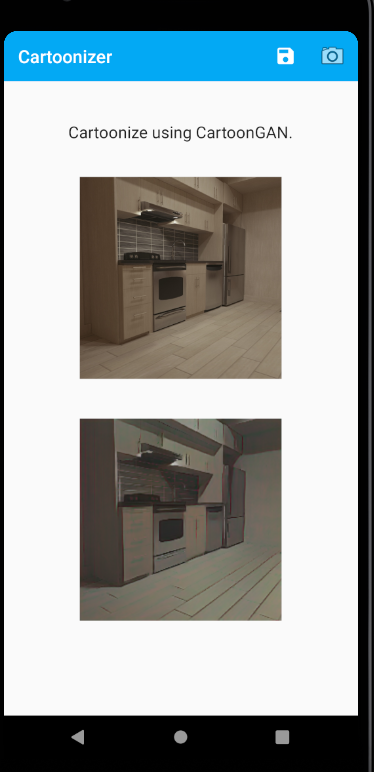

This is an Android app built around the White-box CartoonGAN TensorFlow Lite models, CycleGAN, and StyleGAN2.

The Android app includes three TensorFlow Lite models; see the ml README for details.

Android Studio ML Model Binding was used to import these models into the Android project.

Google Drive folder: https://drive.google.com/drive/folders/1jNj-ao5Ybb5HxuKZ3ZFKmmn04Sx2aOsv?usp=sharing

- Android Studio Preview Beta version - download here.

- Android device (with at least 3GB RAM) in developer mode with USB debugging enabled

- USB cable to connect the Android device to your computer

- Clone the project repo: `git clone https://github.com/margaretmz/CartoonGAN-e2e-tflite-tutorial.git`

- Open the code in Android Studio.

- Connect your Android device to your computer, then click "Run -> Run 'app'".

- Once the app is launched on the device, grant camera permission.

- Sign up to start using the app.

- Select the type you want (CartoonGAN, StyleGAN2, style transfer, filters).

- Take a selfie or a photo and wait for it to be processed.

1- CartoonGAN

2- StyleGAN2

3- CycleGAN

4- Style transfer

5- Filters

6- Augmented reality

The White-box CartoonGAN TensorFlow Lite models (with metadata) are available on TensorFlow Hub in three different formats: dynamic-range, integer, and float16.

Helpful resource: https://blog.tensorflow.org/2020/09/how-to-create-cartoonizer-with-tf-lite.html
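To give a feel for what the reduced-precision formats trade away, here is a minimal sketch in plain Python. It is not the TensorFlow Lite converter and does not touch the real models: the weight values are made up, and the helper names (`quantize_int8`, `to_float16`, and so on) are hypothetical. It only shows how rounding weights to int8 or float16 shrinks storage at the cost of a small error. Real full-integer quantization also quantizes activations, which this sketch ignores.

```python
import struct


def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: returns (int values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize_int8(quantized, scale):
    """Map int8 values back to approximate float weights."""
    return [q * scale for q in quantized]


def to_float16(weights):
    """Round-trip each weight through IEEE 754 half precision."""
    return [struct.unpack("<e", struct.pack("<e", w))[0] for w in weights]


def max_abs_error(original, approx):
    """Largest absolute difference between two equally long lists."""
    return max(abs(a - b) for a, b in zip(original, approx))


# Toy values for illustration only, not real model weights.
weights = [0.5, -1.25, 0.003, 0.9991, -0.4242]

q, scale = quantize_int8(weights)
int8_error = max_abs_error(weights, dequantize_int8(q, scale))
fp16_error = max_abs_error(weights, to_float16(weights))

# Storage cost per weight for each representation.
bytes_per_weight = {"float32": 4, "float16": 2, "int8": 1}

print("int8 values:", q)
print("int8 max error:", int8_error)
print("float16 max error:", fp16_error)
```

On these toy values the float16 round trip loses less precision than int8, while int8 needs half the storage of float16. Which format suits the app depends on the device and on how much visual quality loss is acceptable.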

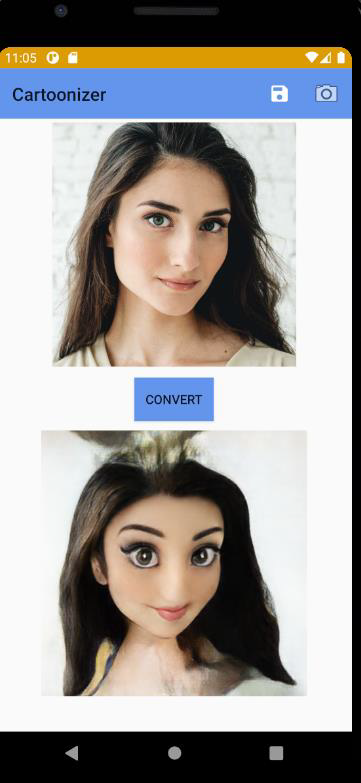

The starting point is the classic StyleGAN model trained on photos of people’s faces, which was released with the StyleGAN2 code and paper and produces high-quality results. The faces model was then fine-tuned on a dataset of various characters from animated films. The dataset contains only around 300 images, but that is enough for the model to start learning the features these characters typically have. Two APIs are available for this feature:

RapidAPI: https://rapidapi.com/toonify-toonify-default/api/toonify

DeepAI: https://deepai.org/machine-learning-model/toonify

Colab: https://colab.research.google.com/drive/1s2XPNMwf6HDhrJ1FMwlW1jl-eQ2-_tlk?usp=sharing

Paper: https://paperswithcode.com/method/stylegan2

The model follows the architecture described in the official CycleGAN paper, which has been used across a range of image-to-image translation tasks. The implementation uses the Keras deep learning framework and is based directly on the model described in the paper and implemented in the authors’ codebase. It is designed to take and generate color images of 256×256 pixels. The architecture comprises four models: two discriminators and two generators.
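The reason CycleGAN needs two generators is the cycle-consistency idea from the paper: translating an image to the other domain and back should return something close to the original. The sketch below illustrates that loss in plain Python, with no Keras involved. The "generators" are hypothetical toy functions that shift pixel values, and images are flat lists of floats; it is an illustration of the idea under those assumptions, not the training code from the notebook.

```python
def l1_loss(a, b):
    """Mean absolute difference between two equally long pixel lists."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(real_a, real_b, gen_a_to_b, gen_b_to_a):
    """Forward cycle (A -> B -> A) plus backward cycle (B -> A -> B)."""
    forward = l1_loss(real_a, gen_b_to_a(gen_a_to_b(real_a)))
    backward = l1_loss(real_b, gen_a_to_b(gen_b_to_a(real_b)))
    return forward + backward


# Hypothetical toy "generators": they only shift pixel intensities.
def brighten(pixels):
    return [p + 0.1 for p in pixels]


def darken(pixels):
    return [p - 0.1 for p in pixels]


def darken_too_much(pixels):
    return [p - 0.3 for p in pixels]


real_a = [0.2, 0.4, 0.6]  # stand-in for an image from domain A
real_b = [0.3, 0.5, 0.7]  # stand-in for an image from domain B

# Generators that invert each other give a loss close to zero.
consistent_loss = cycle_consistency_loss(real_a, real_b, brighten, darken)

# A mismatched pair does not reconstruct the input, so the loss grows.
inconsistent_loss = cycle_consistency_loss(real_a, real_b, brighten, darken_too_much)

print(consistent_loss, inconsistent_loss)
```

In the full model this term is added to the adversarial losses from the two discriminators, so each generator is pushed both to fool its discriminator and to stay invertible.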

My Colab training notebook: https://colab.research.google.com/drive/10ZOGAcqytp2wm-e9wk7vGvwAwBsMcsyv#scrollTo=bKgkrXgvc5If

Drive link for the TFLite model: https://drive.google.com/drive/folders/1fr60j9GaVp0j3Ccl40X0fHDQb45ytCdD?usp=sharing

Reference that I followed: https://machinelearningmastery.com/cyclegan-tutorial-with-keras/

Paper: https://arxiv.org/abs/1703.10593

CycleGAN GitHub: https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix

Book reference that helped me a lot in understanding CycleGAN: GANs in Action

Book link: https://www.amazon.com/GANs-Action-learning-Generative-Adversarial/dp/1617295566

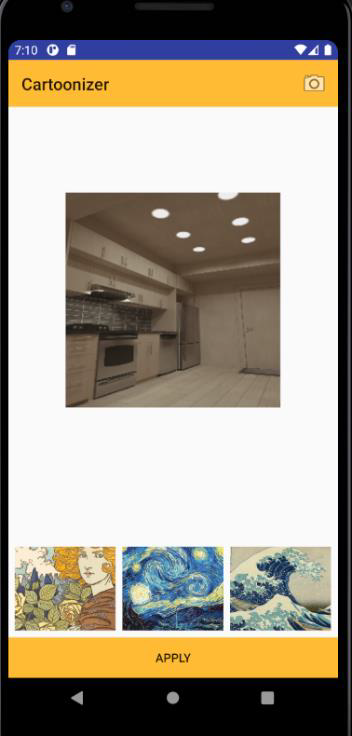

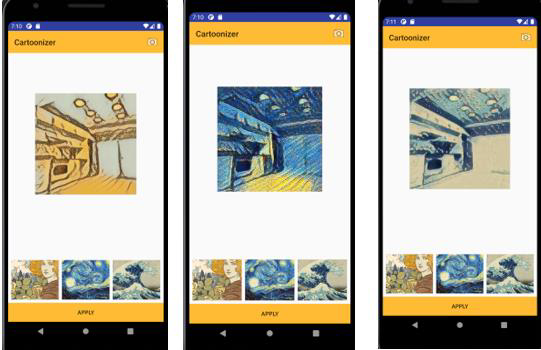

A pre-trained style transfer model from TensorFlow Lite was used in the application.

TensorFlow style transfer tutorial: https://www.tensorflow.org/tutorials/generative/style_transfer

Model link: https://www.tensorflow.org/lite/examples/style_transfer/overview

Output:

Filters library: https://github.com/nekocode/CameraFilter

1- Courses:

Neural Networks and Deep Learning: https://www.coursera.org/learn/neural-networks-deep-learning

Convolutional Neural Networks: https://www.coursera.org/learn/convolutional-neural-networks

Neural Style Transfer with TensorFlow: https://www.coursera.org/projects/neural-style-transfer

2- Books: deep learning principles, GANs in Action.

If you are Egyptian or otherwise can't open the Medium website, you can use this extension: https://chrome.google.com/webstore/detail/browsec-vpn-free-vpn-for/omghfjlpggmjjaagoclmmobgdodcjboh?hl=en