Twitter has become an important communication channel in times of emergency. The ubiquitousness of smartphones enables people to announce an emergency they’re observing in real-time. Because of this, more agencies are interested in programmatically monitoring Twitter (i.e. disaster relief organizations and news agencies).

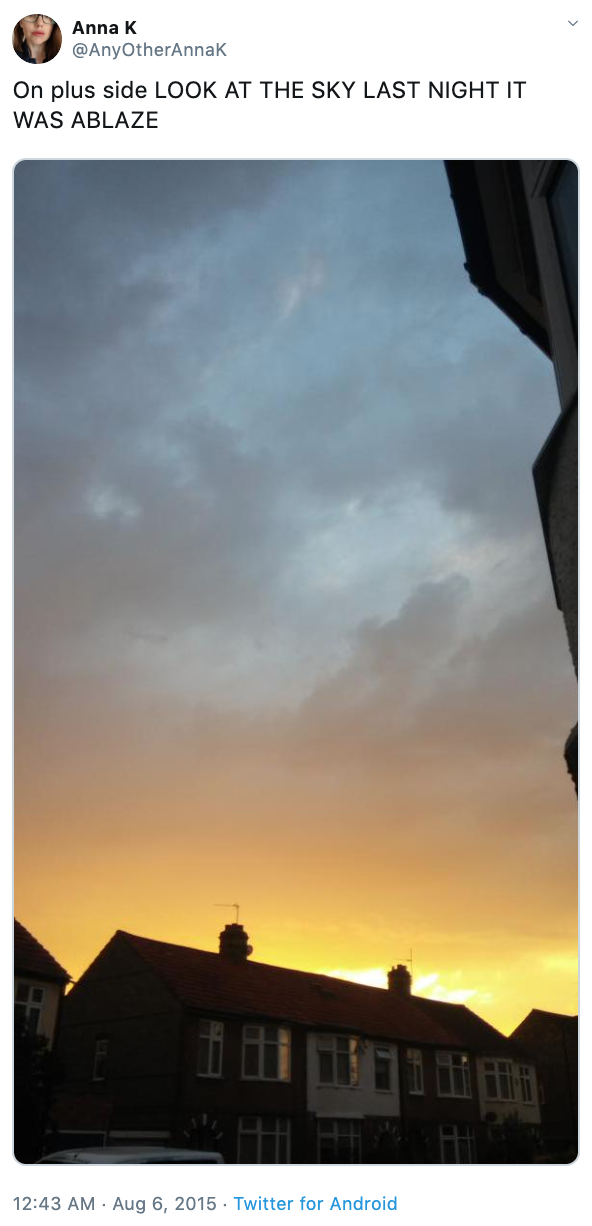

But, it’s not always clear whether a person’s words are actually announcing a disaster. Take this example:

The author explicitly uses the word “ABLAZE” but means it metaphorically. This is clear to a human right away, especially with the visual aid. But it’s less clear to a machine.

Develop a machine learning model that predicts which Tweets are about real disasters and which ones aren’t.

- Numpy

- Pandas

- Seaborn

- Matplotlib.pyplot

- Warnings

- NLTK

- re

- string

- SymSpellPy

- Sklearn

- XGBoost

- WordCloud

| Model Name | Accuracy Score |

| Random Forests Classifier | 79.06% |

| Decision Tree Classifier | 75.38% |

| Multinomial Naive Bayes | 80.53% |

| Support Vector Classifier | 79.62% |

| K Nearest Neighbors | 68.89% |

| Logistic Regression | 79.06% |

| XG Boost Classifier | 77.64% |

The Multinomial Naive Bayes made the most accurate predictions on detection of a real disaster from any tweet given in the dataset with an overall accuracy of almost 81%. This proves the effectiveness of the Multinomial Naive Bayes algorithm in text classification tasks.

K Nearest Neighbors had the worst performance among all the ML algorithms used, having an accuracy of just over 68%.

This dataset was created by the company figure-eight and originally shared on their 'Data For Everyone' website here.