Authors: Yuki Saito, Ryo Hachiuma, Hideo Saito

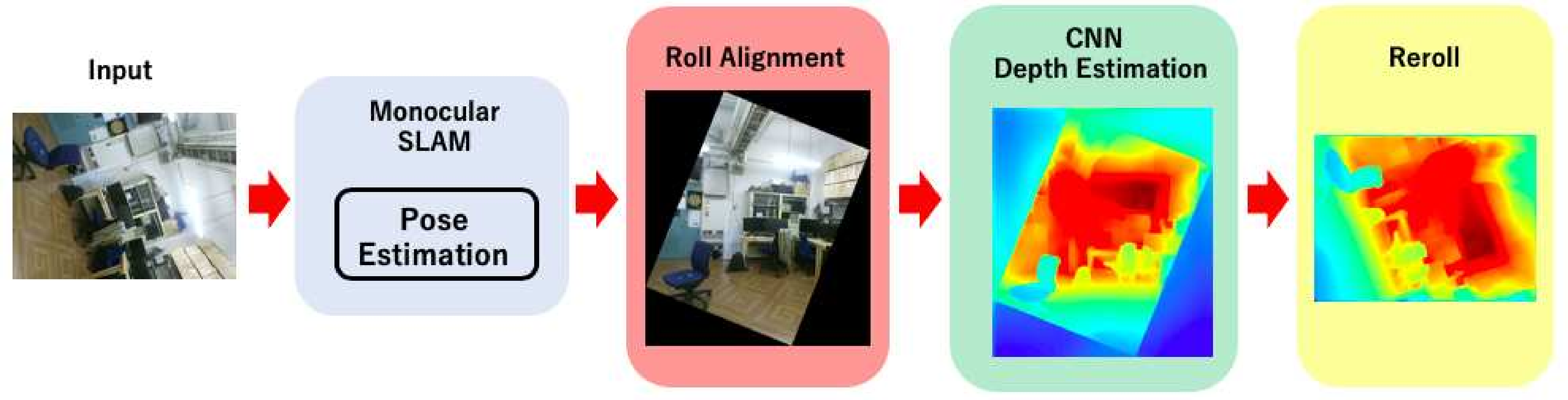

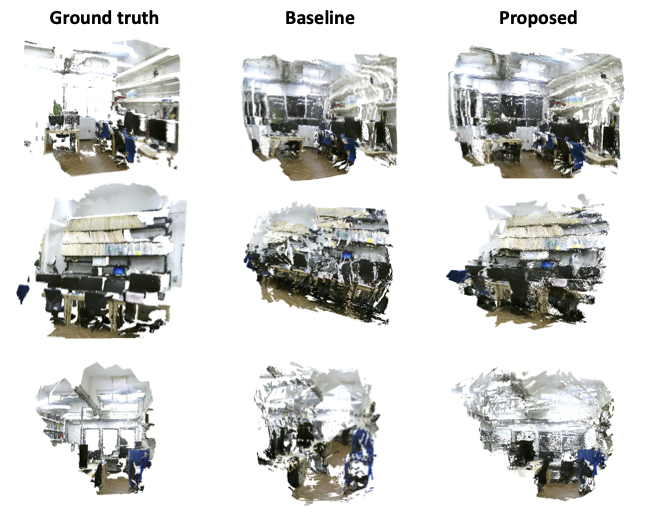

This is the repository for the papers "In-Plane Rotation-Aware Monocular Depth Estimation using SLAM" and "Training-free Approach to Improve the Accuracy of Monocular Depth Estimation with In-Plane Rotation".

The code consists of three parts: ORB-SLAM2, DepthEstimation, and DenseReconstruction.

[Our Paper 1] Yuki Saito, Ryo Hachiuma, and Hideo Saito. In-Plane Rotation-Aware Monocular Depth Estimation using SLAM. International Workshop on Frontiers of Computer Vision (IW-FCV 2020), pp. 305-317, 2020.

[Our Paper 2] Yuki Saito, Ryo Hachiuma, Masahiro Yamaguchi, and Hideo Saito. Training-free Approach to Improve the Accuracy of Monocular Depth Estimation with In-Plane Rotation. IEICE Transactions on Information and Systems, 2021.

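[Our Paper 3] Yuki Saito, Ryo Hachiuma, Masahiro Yamaguchi, and Hideo Saito. Improving the Accuracy of Monocular Depth Estimation Robust to Roll Rotation Using SLAM (original Japanese title: SLAMを用いたRoll方向回転に頑健な単眼depth推定の精度改善手法). The 222nd Computer Vision and Image Media Research Meeting, presented May 15, 2020. [Excellence Award]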

If you use our scripts in an academic work, please cite:

@article{In-PlaneRotationAwareMonoDepth2020,

title={In-Plane Rotation-Aware Monocular Depth Estimation Using SLAM},

author={Yuki Saito and Ryo Hachiuma and Hideo Saito},

journal={International Workshop on Frontiers of Computer Vision},

pages={305--317},

publisher={Springer Singapore},

year={2020}

}

If you use ORB-SLAM2 (Stereo or RGB-D) in an academic work, please cite:

@article{murORB2,

title={{ORB-SLAM2}: an Open-Source {SLAM} System for Monocular, Stereo and {RGB-D} Cameras},

author={Mur-Artal, Ra\'ul and Tard\'os, Juan D.},

journal={IEEE Transactions on Robotics},

volume={33},

number={5},

pages={1255--1262},

doi = {10.1109/TRO.2017.2705103},

year={2017}

}

If you use the DenseReconstruction module in an academic work, please cite:

@INPROCEEDINGS{WhangCNNMonoFusion,

author={Wang, Jiafang and Liu, Haiwei and Cong, Lin and Xiahou, Zuoxin and Wang, Liming},

booktitle={2018 IEEE International Symposium on Mixed and Augmented Reality Adjunct (ISMAR-Adjunct)},

title={CNN-MonoFusion: Online Monocular Dense Reconstruction Using Learned Depth from Single View},

year={2018},

volume={},

number={},

pages={57--62},

doi={10.1109/ISMAR-Adjunct.2018.00034}

}

We have tested the library on **Ubuntu 18.04 and 16.04**, but it should be easy to compile on other platforms. A powerful computer (e.g. an Intel Core i7) will help ensure real-time performance and provide more stable and accurate results.

For the other required libraries, please refer to the official ORB-SLAM2 GitHub page.

- Download a sequence from http://vision.in.tum.de/data/datasets/rgbd-dataset/download and uncompress it.

- Associate RGB images and depth images using the Python script `associate.py`. We already provide associations for some of the sequences in `Examples/RGB-D/associations/`. You can generate your own associations file by executing:

`python associate.py PATH_TO_SEQUENCE/rgb.txt PATH_TO_SEQUENCE/depth.txt > associations.txt`
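The association step pairs each RGB frame with the depth frame closest in time. A minimal Python sketch of that idea follows, assuming the greedy closest-pair-first matching and the 0.02 s default tolerance used by the TUM benchmark's `associate.py` (its timestamp offset option is left out). It is an illustration, not a replacement for the official script; `read_file_list`, `associate`, and `format_associations` here are our own sketch functions.

```python
"""Sketch of TUM-style timestamp association (not the official associate.py).

Assumption: greedy matching, closest pairs first, each timestamp used at most
once, with a maximum allowed time difference of 0.02 s.
"""
from typing import Dict, List, Tuple


def read_file_list(text: str) -> Dict[float, List[str]]:
    """Parse 'timestamp data...' lines, skipping blank lines and '#' comments."""
    entries: Dict[float, List[str]] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.replace(",", " ").replace("\t", " ").split()
        entries[float(fields[0])] = fields[1:]
    return entries


def associate(first: Dict[float, List[str]],
              second: Dict[float, List[str]],
              max_difference: float = 0.02) -> List[Tuple[float, float]]:
    """Greedily pair timestamps, closest pairs first; each stamp is used once."""
    candidates = sorted(
        (abs(a - b), a, b)
        for a in first
        for b in second
        if abs(a - b) < max_difference
    )
    used_first, used_second = set(), set()
    matches: List[Tuple[float, float]] = []
    for _, a, b in candidates:
        if a not in used_first and b not in used_second:
            used_first.add(a)
            used_second.add(b)
            matches.append((a, b))
    return sorted(matches)


def format_associations(first: Dict[float, List[str]],
                        second: Dict[float, List[str]],
                        matches: List[Tuple[float, float]]) -> str:
    """One line per match: 'rgb_timestamp rgb_file depth_timestamp depth_file'."""
    return "\n".join(
        f"{a:f} {' '.join(first[a])} {b:f} {' '.join(second[b])}"
        for a, b in matches
    )


if __name__ == "__main__":
    rgb = read_file_list("1.000 rgb/1.000.png\n1.033 rgb/1.033.png\n")
    depth = read_file_list("1.010 depth/1.010.png\n1.040 depth/1.040.png\n")
    print(format_associations(rgb, depth, associate(rgb, depth)))
```

Sorting all candidate pairs by time difference before accepting them means that when two RGB frames compete for the same depth frame, the closer one wins.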

- Execute the following command. Change `XXX.yaml` to `TUMX.yaml` or `OurDataset.yaml` depending on the sequence, change `PATH_TO_SEQUENCE_FOLDER` to the uncompressed sequence folder, and change `ASSOCIATIONS_FILE` to the corresponding associations file.

`./Examples/Monocular/mono_tum Vocabulary/ORBvoc.txt Examples/RGB-D/XXX.yaml PATH_TO_SEQUENCE_FOLDER ASSOCIATIONS_FILE`
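The `ASSOCIATIONS_FILE` argument is the file produced by the association step. As a sketch of how such a file can be consumed, the Python below reads each line as `rgb_timestamp rgb_path depth_timestamp depth_path` and resolves the relative image paths against the sequence folder, modeled on how ORB-SLAM2's `rgbd_tum` example loads it. The `Frame` record and `load_associations` function are hypothetical names, and the loaders in this repository may differ in detail.

```python
"""Sketch: read an associations file into per-frame records.

Assumption: lines look like 'rgb_ts rgb_path depth_ts depth_path', the layout
written by the TUM associate.py tool. Frame and load_associations are
hypothetical names, not part of this repository.
"""
import os
from typing import List, NamedTuple


class Frame(NamedTuple):
    timestamp: float  # RGB timestamp, used as the frame time
    rgb_path: str     # color image path, resolved against the sequence folder
    depth_path: str   # depth image path, resolved against the sequence folder


def load_associations(text: str, sequence_folder: str) -> List[Frame]:
    """Parse associations text; skips blank, '#' comment, and short lines."""
    frames: List[Frame] = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) < 4 or fields[0].startswith("#"):
            continue
        rgb_ts, rgb_rel, _depth_ts, depth_rel = fields[:4]
        frames.append(Frame(float(rgb_ts),
                            os.path.join(sequence_folder, rgb_rel),
                            os.path.join(sequence_folder, depth_rel)))
    return frames


if __name__ == "__main__":
    sample = "1.000000 rgb/1.000000.png 1.010000 depth/1.010000.png\n"
    for frame in load_associations(sample, "PATH_TO_SEQUENCE_FOLDER"):
        print(frame)
```

Only the RGB timestamp is kept per frame; the depth timestamp is parsed but discarded, since after association it differs from the RGB one by at most the matching tolerance.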

- Execute the following command. Change `XXX.yaml` to `TUM1.yaml` or `OurDataset.yaml` depending on the sequence; all yaml files are collected in the `Examples/rgbd_monodepth` folder. Change `PATH_TO_SEQUENCE_FOLDER` to the uncompressed sequence folder and `ASSOCIATIONS_FILE` to the corresponding associations file.

`./Examples/rgbd_monodepth/rgbd_monodepth Vocabulary/ORBvoc.txt Examples/Monocular/XXX.yaml PATH_TO_SEQUENCE_FOLDER ASSOCIATIONS_FILE`
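Because the yaml file has to be chosen per sequence, running many sequences by hand is error-prone. The hypothetical Python helper below assembles the `rgbd_monodepth` argument list in the order shown in the command above. It assumes the ORB-SLAM2 naming convention (`TUM1.yaml`, `TUM2.yaml`, `TUM3.yaml` for freiburg1/2/3 sequences) and falls back to `OurDataset.yaml` otherwise; check which yaml files actually exist in your checkout before relying on it. The yaml directory is passed in explicitly rather than hard-coded.

```python
"""Hypothetical batch helper for rgbd_monodepth (not part of this repository)."""
import os
import re
from typing import List


def yaml_for_sequence(sequence_folder: str, default: str = "OurDataset.yaml") -> str:
    """Pick TUMN.yaml for '..._freiburgN_...' folders, else `default`.

    Assumption: follows ORB-SLAM2's TUM1/TUM2/TUM3 naming convention.
    """
    name = os.path.basename(os.path.normpath(sequence_folder))
    match = re.search(r"freiburg([123])", name)
    return f"TUM{match.group(1)}.yaml" if match else default


def build_command(sequence_folder: str,
                  associations_file: str,
                  yaml_dir: str,
                  binary: str = "./Examples/rgbd_monodepth/rgbd_monodepth",
                  vocabulary: str = "Vocabulary/ORBvoc.txt") -> List[str]:
    """Return [binary, vocabulary, settings.yaml, sequence, associations]."""
    settings = os.path.join(yaml_dir, yaml_for_sequence(sequence_folder))
    return [binary, vocabulary, settings, sequence_folder, associations_file]


if __name__ == "__main__":
    cmd = build_command("/data/rgbd_dataset_freiburg1_xyz",
                        "/data/rgbd_dataset_freiburg1_xyz/associations.txt",
                        "Examples/rgbd_monodepth")
    print(" ".join(cmd))
```

To actually launch a run, the returned list can be passed to `subprocess.run(cmd, check=True)`; keeping it as a list avoids shell quoting issues with folder names.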