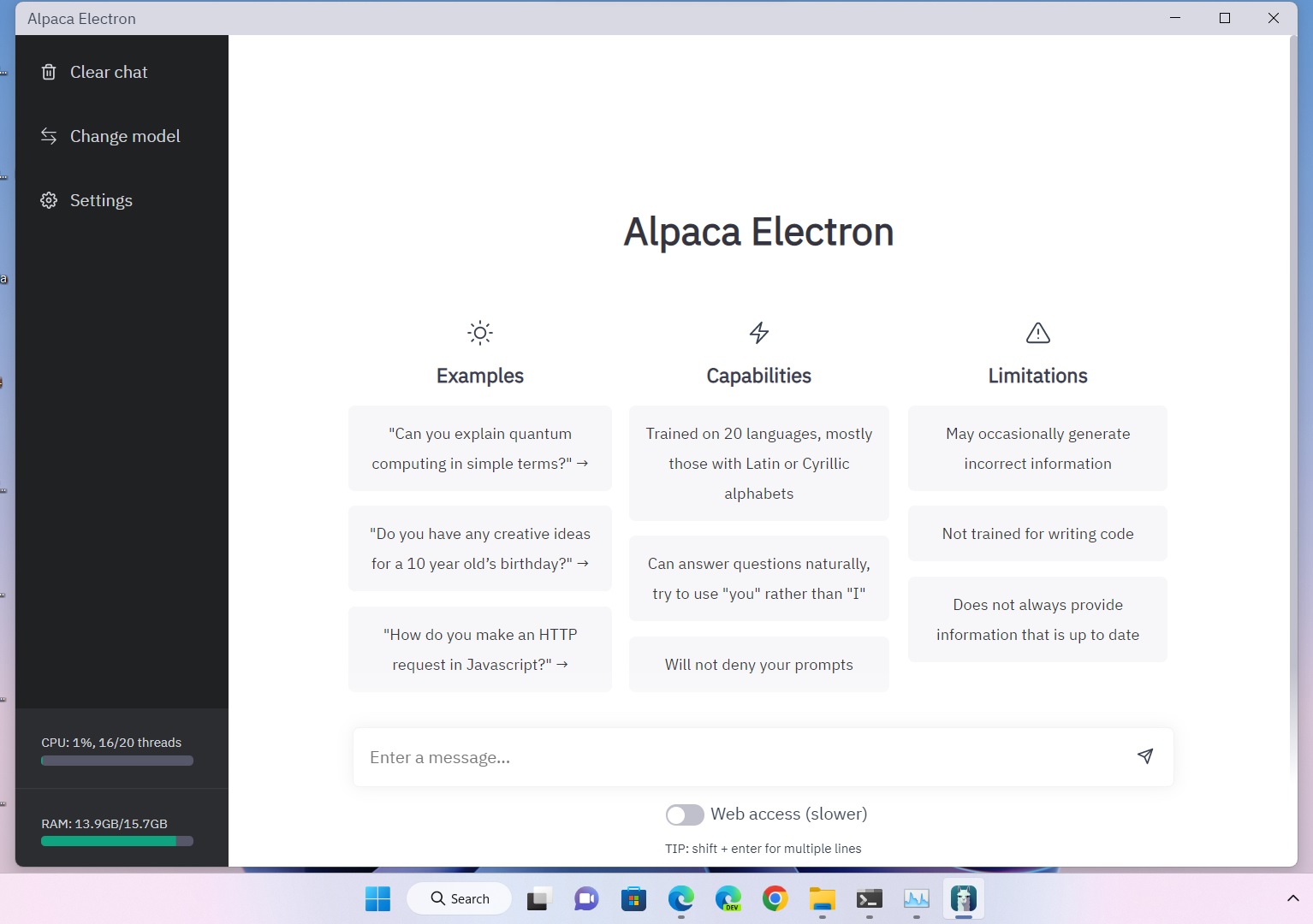

Alpaca Electron is built from the ground up to be the easiest way to chat with the Alpaca AI models. No command line or compiling needed!

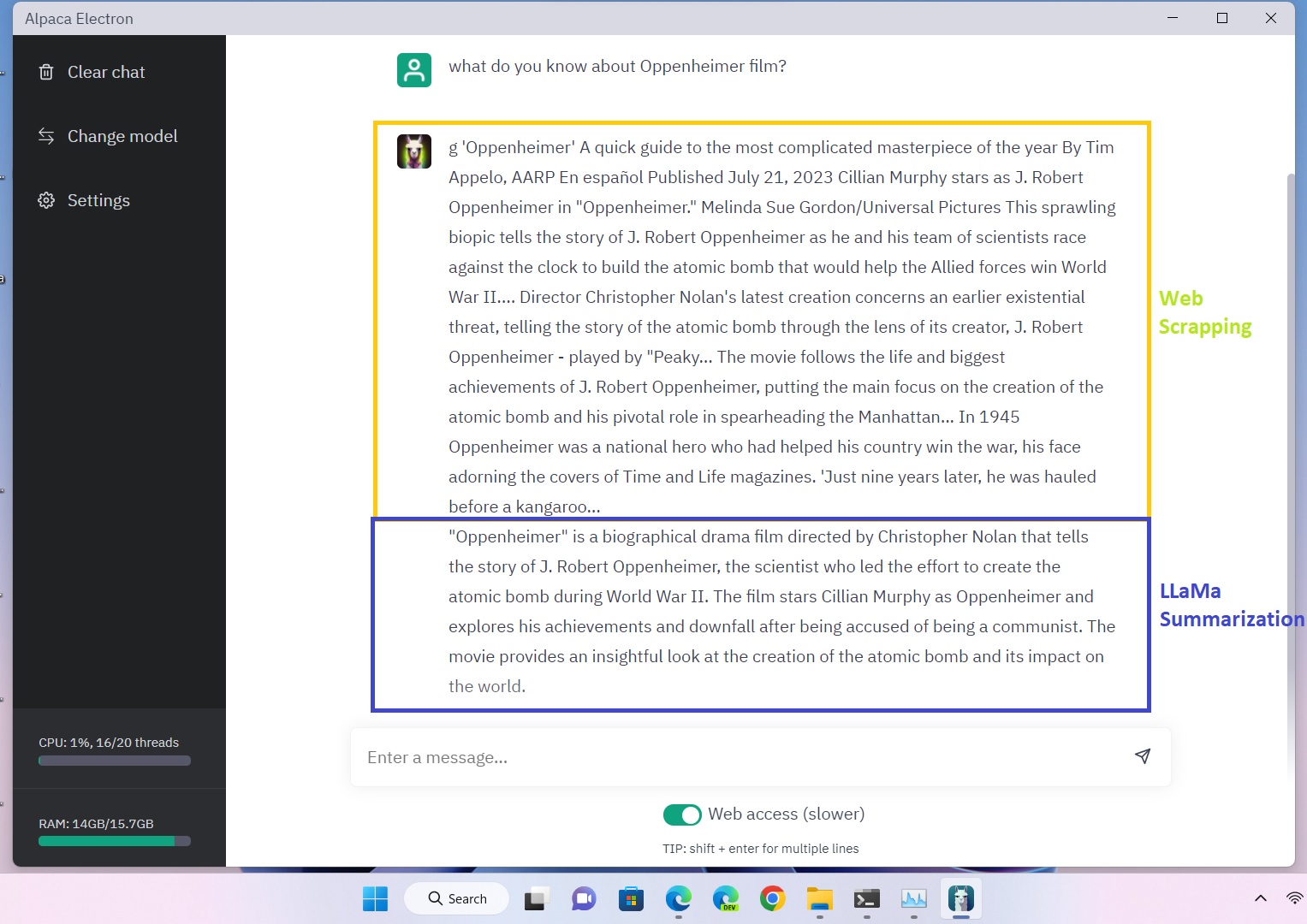

This updated Alpaca-Electron is designed for GGML v2 and v3 model files. This build also adds web-search integration, although summarizing the search results is much slower.

Example of GGMLv2:

Examples of GGMLv3:

- https://huggingface.co/TheBloke/gpt4-x-vicuna-13B-GGML/blob/main/gpt4-x-vicuna-13B.ggmlv3.q4_0.bin

- https://huggingface.co/TheBloke/Llama-2-13B-chat-GGML/blob/main/llama-2-13b-chat.ggmlv3.q4_0.bin
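If you are unsure which GGML revision a downloaded `.bin` file uses, its header can be inspected. The following is a minimal sketch, not part of Alpaca Electron. It assumes the pre-GGUF header layout used by llama.cpp (a little-endian 32-bit magic followed, for versioned containers, by a 32-bit version number) and that "GGML v2/v3" corresponds to the `ggjt` container with version 2 or 3. The function name `ggml_file_version` is hypothetical.

```python
import struct

# Assumption: magic constants as defined in llama.cpp's pre-GGUF loader.
GGML_MAGIC = 0x67676D6C  # "ggml": original unversioned container
GGMF_MAGIC = 0x67676D66  # "ggmf": first versioned container
GGJT_MAGIC = 0x67676A74  # "ggjt": container used by GGML v1 to v3 files

CONTAINERS = {GGML_MAGIC: "ggml", GGMF_MAGIC: "ggmf", GGJT_MAGIC: "ggjt"}


def ggml_file_version(path):
    """Return (container_name, version) read from a model file header.

    version is None for the unversioned "ggml" container.
    Raises ValueError if the file does not start with a known magic.
    """
    with open(path, "rb") as f:
        header = f.read(8)  # magic (4 bytes) + optional version (4 bytes)

    if len(header) < 4:
        raise ValueError("file too short to contain a GGML header")

    (magic,) = struct.unpack("<I", header[:4])
    if magic not in CONTAINERS:
        raise ValueError(f"unrecognized magic 0x{magic:08x}")

    if magic == GGML_MAGIC:
        return CONTAINERS[magic], None

    if len(header) < 8:
        raise ValueError("file too short to contain a version field")
    (version,) = struct.unpack("<I", header[4:8])
    return CONTAINERS[magic], version
```

Under these assumptions, a result of `("ggjt", 2)` or `("ggjt", 3)` would indicate a file this build is designed for, for example `ggml_file_version("llama-2-13b-chat.ggmlv3.q4_0.bin")`.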

Special thanks to @ItsPi3141 for creating Alpaca-Electron, @antimatter15 for creating alpaca.cpp, and @ggerganov for creating llama.cpp, the backbone of alpaca.cpp. Finally, credit goes to Meta and Stanford for creating the LLaMA and Alpaca models, respectively.