[SEDONA-21] Add extension classes for auto registration of UDFs/UDTs #513


docs/tutorial/sql-sql.md

Outdated

Start `spark-sql` as follows (replace `<VERSION>` with the actual version, e.g. `1.0.1-incubating`):

```sh
spark-sql --packages org.apache.sedona:sedona-sql-3.0_2.12:<VERSION>,org.apache.sedona:sedona-viz-3.0_2.12:<VERSION>,org.locationtech.jts:jts-core:1.18.0,org.apache.sedona:sedona-core-3.0_2.12:<VERSION>,org.geotools:gt-referencing:24.0 \
```


Could you please change the packages to the packages listed here: http://sedona.apache.org/download/GeoSpark-All-Modules-Maven-Central-Coordinates/#spark-30-scala-212

- sedona-python-adapter: a fat jar for Scala/Java/Python users that includes all dependencies except geotools

- sedona-viz

- org.datasyslab.geotools-wrapper: the geotools packages on Maven Central. The original Geotools jars are in OSGEO repo.

The individual Sedona jars are only for advanced users who know how to handle dependency conflicts. In addition, using the OSGEO GeoTools jars will lead to a 'jai-core missing' issue on some platforms.
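As a rough sketch of what the suggested change amounts to, the `--packages` value can be assembled from the three artifacts listed above. The exact coordinates are assumptions taken from the Maven POM quoted later in this thread (Spark 3.0 / Scala 2.12 builds, `geotools-wrapper` version `geotools-24.0`), and `VERSION` is only a placeholder for whichever Sedona release is in use. The command is printed rather than executed.

```sh
# Assumption: placeholder release; substitute the Sedona version you actually use.
VERSION="1.0.1-incubating"
# Build suffix for Spark 3.0 / Scala 2.12, as used in the POM later in this thread.
SPARK_SCALA="3.0_2.12"

# Comma-separated Maven coordinates, one artifact per line for readability.
PACKAGES="org.apache.sedona:sedona-python-adapter-${SPARK_SCALA}:${VERSION}"
PACKAGES="${PACKAGES},org.apache.sedona:sedona-viz-${SPARK_SCALA}:${VERSION}"
PACKAGES="${PACKAGES},org.datasyslab:geotools-wrapper:geotools-24.0"

# Print the command instead of running it, so the sketch works without Spark installed.
echo "spark-sql --packages ${PACKAGES}"
```

Building the list in a variable keeps each coordinate on its own line, which makes stray separators easier to spot than in one long argument.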


@jiayuasu done - you're right, it's much easier to use those coordinates...


@alexott Thank you very much for your contribution. Please see my comment above.

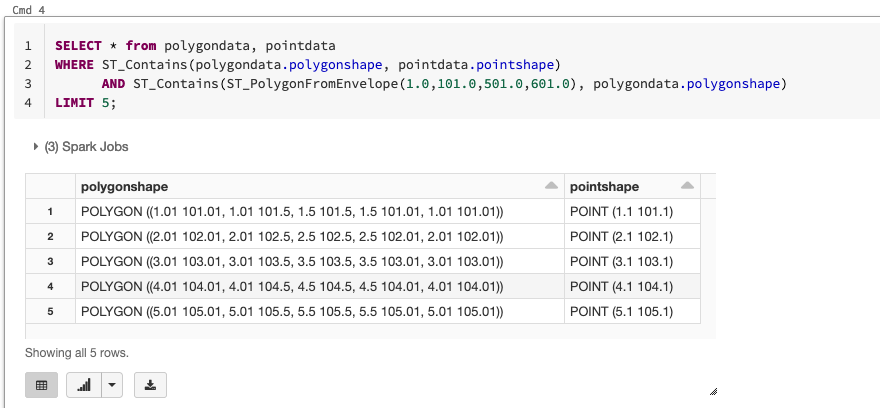

With this change we can use Sedona UDFs/UDTs from Spark SQL, for example, from `spark-sql` or via Thrift server. Just add the following to the command line:

```
--conf spark.sql.extensions=org.apache.sedona.viz.sql.SedonaVizExtensions,org.apache.sedona.sql.SedonaSqlExtensions \
--conf spark.serializer=org.apache.spark.serializer.KryoSerializer \
--conf spark.kryo.registrator=org.apache.sedona.viz.core.Serde.SedonaVizKryoRegistrator
```
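To illustrate how the same options might serve both entry points mentioned above, here is a minimal sketch that builds the `--conf` flags once and prints a `spark-sql` and a Thrift server invocation. It assumes that the `KryoSerializer` class belongs under `spark.serializer` (the Spark key that takes a serializer class) and that the Thrift server is started with Spark's `sbin/start-thriftserver.sh`, which accepts the same `--conf` options. Nothing is executed; the commands are only echoed.

```sh
# Extension and Kryo classes named in this PR description.
EXTENSIONS="org.apache.sedona.viz.sql.SedonaVizExtensions,org.apache.sedona.sql.SedonaSqlExtensions"
SERIALIZER="org.apache.spark.serializer.KryoSerializer"
REGISTRATOR="org.apache.sedona.viz.core.Serde.SedonaVizKryoRegistrator"

# Shared options; assumption: the serializer class goes under spark.serializer.
SEDONA_CONF="--conf spark.sql.extensions=${EXTENSIONS}"
SEDONA_CONF="${SEDONA_CONF} --conf spark.serializer=${SERIALIZER}"
SEDONA_CONF="${SEDONA_CONF} --conf spark.kryo.registrator=${REGISTRATOR}"

# Print both invocations instead of running them (no Spark needed for the sketch).
echo "spark-sql ${SEDONA_CONF}"
echo "sbin/start-thriftserver.sh ${SEDONA_CONF}"
```

The Sedona jars still have to be on the classpath (for example via `--packages`) for the extension classes to load.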

5f99468 to 66c81e2


@alexott Thank you again for your contribution to Sedona. After the release of Sedona 1.0.1, a user reported that they cannot use this PR in an Azure Databricks workspace. Their question is almost identical to this SO post: https://stackoverflow.com/questions/66721168/sparksessionextensions-injectfunction-in-databricks-environment

I wonder if you have any idea how to fix it.


@jiayuasu The main problem is that if you add the library via the UI, it is loaded only after Spark has started, so all Spark extensions have already been executed. That's an existing limitation of the platform.

There is a workaround: copy all necessary jars to DBFS, and from there into `/databricks/jars`:

`cp /dbfs/tmp/sedona-jars/*.jar /databricks/jars`

After that, Spark extensions are picked up, and you can use SQL commands.

P.S. One problem is also that you need to pull many jars to make it work. I was using the following Maven POM:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
  <modelVersion>4.0.0</modelVersion>
  <groupId>net.alexott.demos.spark</groupId>
  <artifactId>sedona-all_3.0_2.12</artifactId>
  <version>1.0.1-incubating</version>
  <packaging>jar</packaging>
  <name>sedona-all</name>
  <url>http://maven.apache.org</url>
  <properties>
    <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
    <sedona.version>1.0.1-incubating</sedona.version>
    <spark.version>3.0_2.12</spark.version>
  </properties>
  <dependencies>
    <dependency>
      <groupId>org.apache.sedona</groupId>
      <artifactId>sedona-viz-${spark.version}</artifactId>
      <version>${sedona.version}</version>
    </dependency>
    <dependency>
      <groupId>org.apache.sedona</groupId>
      <artifactId>sedona-python-adapter-${spark.version}</artifactId>
      <version>${sedona.version}</version>
    </dependency>
    <dependency>
      <groupId>org.datasyslab</groupId>
      <artifactId>geotools-wrapper</artifactId>
      <version>geotools-24.0</version>
    </dependency>
  </dependencies>
  <build>
    <plugins>
      <plugin>
        <artifactId>maven-compiler-plugin</artifactId>
        <version>3.8.1</version>
        <configuration>
          <source>${java.version}</source>
          <target>${java.version}</target>
          <optimize>true</optimize>
        </configuration>
      </plugin>
      <plugin>
        <groupId>org.apache.maven.plugins</groupId>
        <artifactId>maven-assembly-plugin</artifactId>
        <version>3.2.0</version>
        <configuration>
          <descriptorRefs>
            <descriptorRef>jar-with-dependencies</descriptorRef>
          </descriptorRefs>
        </configuration>
        <executions>
          <execution>
            <phase>package</phase>
            <goals>
              <goal>single</goal>
            </goals>
          </execution>
        </executions>
      </plugin>
    </plugins>
  </build>
</project>
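The copy step from the workaround above could be wrapped in a small helper, sketched here under assumptions: `copy_jars` is a hypothetical name, and the thread does not show where on the cluster the command is meant to run, so the call with the real paths is left as a comment. The helper only generalizes the `cp` command quoted in the comment.

```sh
# copy_jars SRC_DIR DEST_DIR
# Hypothetical helper: copy every *.jar from SRC_DIR into DEST_DIR.
copy_jars() {
    src_dir="$1"
    dest_dir="$2"
    # Fail early with a message if the source directory does not exist.
    [ -d "${src_dir}" ] || { echo "missing directory: ${src_dir}" >&2; return 1; }
    # Create the destination if needed, then copy only the jar files.
    mkdir -p "${dest_dir}"
    cp "${src_dir}"/*.jar "${dest_dir}"/
}

# With the paths from the comment above, the call would be:
#   copy_jars /dbfs/tmp/sedona-jars /databricks/jars
```

Checking the source directory first gives a clearer error than a bare `cp` failure when the jars were never uploaded.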

Is this PR related to a proposed Issue?

SEDONA-21

What changes were proposed in this PR?

With this change we can use Sedona UDFs/UDTs from Spark SQL, for example, from `spark-sql` or via Thrift server. Just add the `spark.sql.extensions` and Kryo `--conf` options to the command line.

How was this patch tested?

manual test using `spark-sql`

Did this PR include necessary documentation updates?

yes