[SPARK-34769][SQL]AnsiTypeCoercion: return closest convertible type among TypeCollection #31859

BTW, I will add Spark documentation for the ANSI type coercion after this one.

Test build #136138 has finished for PR 31859 at commit

    val narrowestCommonType = convertibleTypes.find { dt =>
      convertibleTypes.forall { target =>
        implicitCast(dt, target, isInputFoldable = false).isDefined
      }
    }

It seems there is no explicit definition of the narrowest data type. I'm not 100% sure about this logic though: does the find method (which returns the first matching data type) work correctly for any input?

@maropu I have updated the related context in PR description. This part is quite complicated.

> does the find method (finding a first data type) works fine for any input?

The logic here is to find a data type that can be converted to all other convertible types. If there is one, it is the closest convertible type.
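To make the reply above concrete, here is a minimal, self-contained sketch of the `find`/`forall` idea. It does not use Spark's classes: `ToyType`, `numericRank`, and `canCast` are hypothetical stand-ins, and the widening order is an assumption chosen only for illustration. In this toy relation no two distinct types convert to each other, so at most one candidate can convert to all the others, and the order in which `find` visits the candidates does not change the result.

```scala
object ClosestTypeSketch {
  // Toy stand-ins for data types; NOT the real org.apache.spark.sql.types classes.
  sealed trait ToyType
  case object IntT extends ToyType
  case object LongT extends ToyType
  case object FloatT extends ToyType
  case object DoubleT extends ToyType
  case object BinaryT extends ToyType

  // Assumed widening order for the numeric toy types (illustrative only).
  private val numericRank: Map[ToyType, Int] =
    Map(IntT -> 0, LongT -> 1, FloatT -> 2, DoubleT -> 3)

  // Assumed rule: a type converts to itself and to any wider numeric type.
  def canCast(from: ToyType, to: ToyType): Boolean =
    from == to || ((numericRank.get(from), numericRank.get(to)) match {
      case (Some(a), Some(b)) => a < b
      case _ => false
    })

  // A candidate is "closest" if it converts to every candidate, itself included.
  def closest(candidates: Seq[ToyType]): Option[ToyType] =
    candidates.find(dt => candidates.forall(target => canCast(dt, target)))

  def main(args: Array[String]): Unit = {
    println(closest(Seq(DoubleT, LongT))) // Some(LongT)
    println(closest(Seq(LongT, DoubleT))) // Some(LongT): same answer in either order
    println(closest(Seq(LongT, BinaryT))) // None: neither converts to the other
  }
}
```

An empty candidate list yields `None`, and a single candidate is returned as-is, because `forall` only has to check the identity conversion.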

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/AnsiTypeCoercion.scala

Shall we simply fail if there are multiple matches in the type collection? It's a bit tricky to define "closest".

That doesn't sound reasonable. E.g., failing an input of Integer type for

Is there any function using

There are similar expressions:

    shouldNotCastStringInput(TypeCollection(NumericType, BinaryType))
    // When there are multiple convertible types in the `TypeCollection` and there is no closest
    // convertible data type among the convertible types.
    shouldNotCastStringLiteral(TypeCollection(NumericType, BinaryType))

This duplicates the previous case.

This one is for String Literal, which can convert to any of (NumericType, BinaryType). The previous one is for String type column, which can't do the conversion.

Kubernetes integration test starting

Kubernetes integration test status failure

Test build #136434 has finished for PR 31859 at commit

Test build #136439 has started for PR 31859 at commit

    // When there are multiple convertible types in the `TypeCollection`, use the closet convertible
    // data type among convertible types.
    shouldCast(IntegerType, TypeCollection(BinaryType, FloatType, LongType), LongType)
    shouldCast(IntegerType, TypeCollection(BinaryType, LongType, NumericType), IntegerType)

This doesn't go to the code path to pick the closest convertible type. This hits case _ if expectedType.acceptsType(inType) => Some(inType)

right

    @@ -377,10 +368,31 @@ class AnsiTypeCoercionSuite extends AnalysisTest {
          ArrayType(StringType, true),
          TypeCollection(ArrayType(StringType), StringType),
          ArrayType(StringType, true))

        // When there are multiple convertible types in the `TypeCollection`, use the closet convertible

nit: closet -> closest

    @@ -73,8 +73,8 @@ select left(null, -2), left("abcd", -2), left("abcd", 0), left("abcd", 'a')
    -- !query schema
    struct<>
    -- !query output
    java.lang.NumberFormatException
    invalid input syntax for type numeric: a

can we move left(null, -2) out to a new query? otherwise we can't test the error message of left("abcd", 'a')

    @@ -92,7 +92,7 @@ select right(null, -2), right("abcd", -2), right("abcd", 0), right("abcd", 'a')
    struct<>
    -- !query output
    org.apache.spark.sql.AnalysisException
    cannot resolve 'substring('abcd', (- CAST('a' AS DOUBLE)), 2147483647)' due to data type mismatch: argument 2 requires int type, however, '(- CAST('a' AS DOUBLE))' is of double type.; line 1 pos 61
    cannot resolve 'substring(NULL, (- -2), 2147483647)' due to data type mismatch: argument 1 requires (string or binary) type, however, 'NULL' is of null type.; line 1 pos 7

ditto

Kubernetes integration test starting

Kubernetes integration test status failure

Kubernetes integration test starting

Kubernetes integration test status failure

thanks, merging to master!

Test build #136448 has finished for PR 31859 at commit

late lgtm.

I just found out that I mistakenly assigned it to myself. I have removed the assignment now.

What changes were proposed in this pull request?

Currently, when implicitly casting a data type to a `TypeCollection`, Spark returns the first convertible data type among the `TypeCollection`.

In ANSI mode, we can make the behavior more reasonable by returning the closest convertible data type in the `TypeCollection`.

In detail, we first find all the expected types to which the input can be implicitly cast, and then look for the closest data type among them. If there is no such closest data type, return None.

Note that if the closest type is Float and the convertible types contain Double, we simply return Double as the closest type, to avoid potential precision loss when converting an integral type to Float.
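The procedure described above can be sketched end to end as follows. This is a rough illustration under stated assumptions, not the code in `AnsiTypeCoercion.scala`: `ToyType`, `widensTo`, `canCast`, and `castToCollection` are hypothetical names, and the cast table is a simplified guess at which widening conversions are allowed.

```scala
object TypeCollectionCastSketch {
  // Toy stand-ins for data types; NOT the real Spark classes.
  sealed trait ToyType
  case object IntT extends ToyType
  case object LongT extends ToyType
  case object FloatT extends ToyType
  case object DoubleT extends ToyType
  case object BinaryT extends ToyType

  // Assumed implicit-cast table (identity casts are handled in canCast).
  private val widensTo: Map[ToyType, Set[ToyType]] = Map(
    IntT -> Set[ToyType](LongT, FloatT, DoubleT),
    LongT -> Set[ToyType](FloatT, DoubleT),
    FloatT -> Set[ToyType](DoubleT)
  )

  def canCast(from: ToyType, to: ToyType): Boolean =
    from == to || widensTo.getOrElse(from, Set.empty[ToyType]).contains(to)

  def castToCollection(in: ToyType, collection: Seq[ToyType]): Option[ToyType] = {
    // Step 1: keep only the expected types the input can be implicitly cast to.
    val convertible = collection.filter(canCast(in, _))
    // Step 2: the closest type is one that converts to every convertible type.
    val closest = convertible.find(dt => convertible.forall(canCast(dt, _)))
    // Step 3: prefer Double over Float when both are convertible,
    // to avoid losing precision on integral inputs.
    closest.map {
      case FloatT if convertible.contains(DoubleT) => DoubleT
      case other => other
    }
  }

  def main(args: Array[String]): Unit = {
    println(castToCollection(IntT, Seq(DoubleT, LongT)))          // Some(LongT)
    println(castToCollection(IntT, Seq(BinaryT, FloatT, LongT)))  // Some(LongT)
    println(castToCollection(IntT, Seq(FloatT, DoubleT)))         // Some(DoubleT)
    println(castToCollection(IntT, Seq(BinaryT)))                 // None
  }
}
```

The second call mirrors the `shouldCast(IntegerType, TypeCollection(BinaryType, FloatType, LongType), LongType)` test case from the review, and the third exercises the Float-to-Double rule.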

Why are the changes needed?

Make the type coercion rule for TypeCollection more reasonable and ANSI compatible.

E.g. returning Long instead of Double for

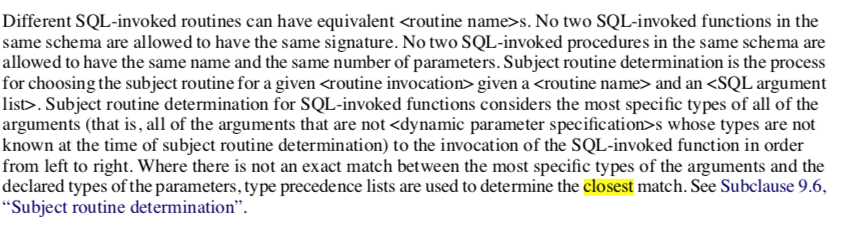

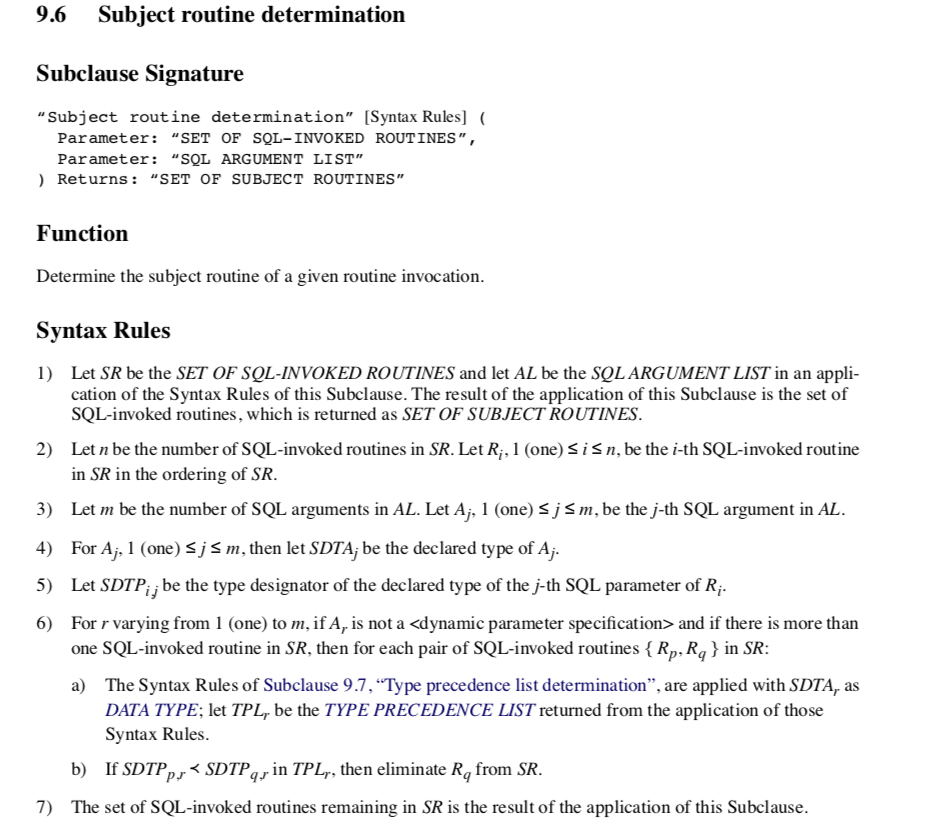

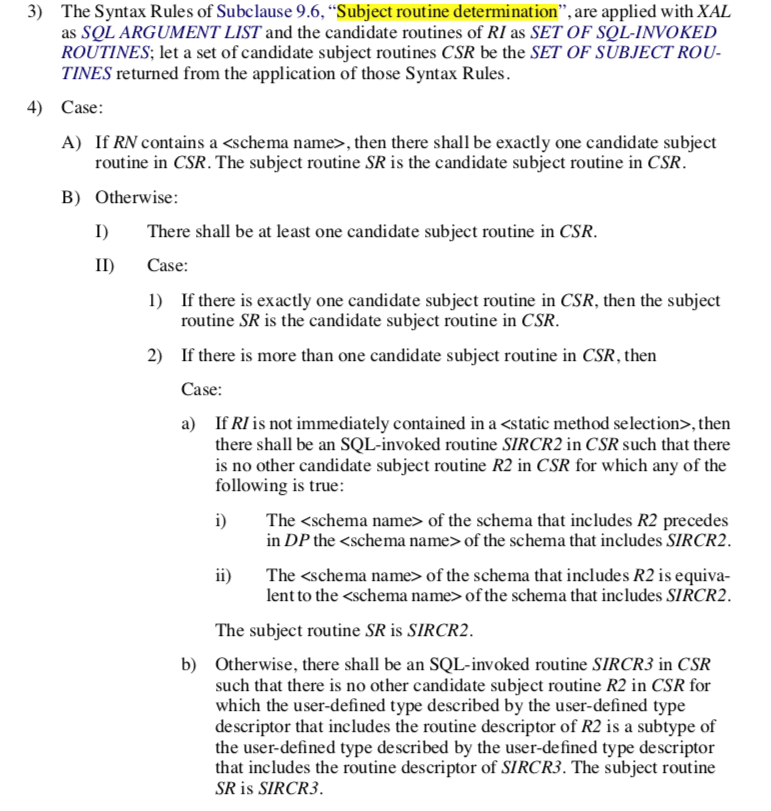

implicast(int, TypeCollect(Double, Long)).From ANSI SQL Spec section 4.33 "SQL-invoked routines"

Section 9.6 "Subject routine determination"

Section 10.4 "routine invocation"

Does this PR introduce any user-facing change?

Yes, in ANSI mode, implicit casting to a `TypeCollection` returns the narrowest convertible data type instead of the first convertible one.

How was this patch tested?

Unit tests.