
Karpenter chooses costly spot instances. #3044

Comments

|

can you use ec2-instance-selector to see the spot pricing for those instances in your account? |

|

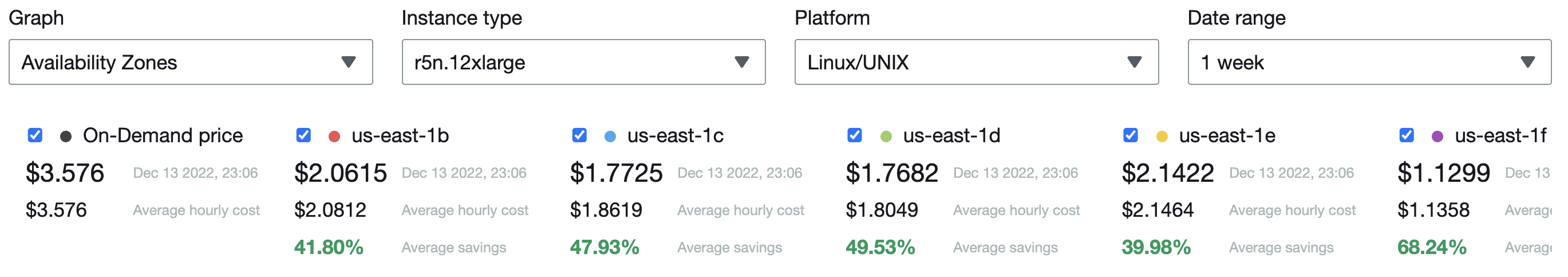

Here is the spot price history: 4 - r5n.4xlarge - 2.9016$. Here is the current average frequency of interruption in our region (taken from https://aws.amazon.com/ec2/spot/instance-advisor/). From my experience with Spot, the r5n.4xlarge is the least interrupted instance in the above list. |

|

We are looking into this internally to see if we can find a better answer for you. |

|

One thing to note about the spot instance advisor:

This does a good job of representing monthly history, but a bad job of representing short-lived spikes. From our investigation, things are working as intended, and your instance type flexibility enabled you to find capacity with a lower interruption rate during this scale-out. Karpenter uses the price-capacity-optimized (PCO) allocation strategy; you can learn more about it here: https://aws.amazon.com/blogs/compute/introducing-price-capacity-optimized-allocation-strategy-for-ec2-spot-instances/ |

|

The attached doc explains how the different strategies are not even close to what we see in reality. The fact is that once we moved from CAS to Karpenter, our monthly costs increased by 15%. |

|

Going to re-open this and investigate deeper. |

|

@liorfranko In my use case all containers fit in t3.small, so I created one provisioner restricted to t3.small with high priority and another provisioner with all instance types with low or 0 priority. My problem now is that I do not know how to debug the configuration, and consolidation is not working for me, but that is another story. I hope it works for you, because for me it is almost good. |

|

Well, it will work, but operation-wise, it will be tough to manage. |

|

Recently, the spot market has been more stable, and we don't see this behavior anymore. |

|

Hey @liorfranko I did want to let you know that we merged in #3292 which should also help prevent this. |

|

Looks great; we'll upgrade next week. |

Hi @liorfranko Did the update solve your issue?

|

Hi @felipewnp |

Version

Karpenter Version: v0.19.2

Kubernetes Version: v1.20.15

Expected Behavior

Deploying 13 pods, each with a CPU request of 10 and a memory request of 53Gi, with anti-affinity (each pod should be deployed on a different node), we expect to get spot instances with roughly 16 cores each.

Actual Behavior

We got:

4 - r5n.4xlarge

5 - r5n.8xlarge

1 - c5n.9xlarge

2 - r5n.12xlarge

I know that Karpenter uses the "price and capacity optimized" strategy, but it doesn't seem legit.

In the above situation, we requested 130 cores. We could have gotten 13 4xlarge instances, which is 208 cores, but we got 420 cores instead.
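For anyone who wants to check the numbers, here is a minimal sketch of the arithmetic. It assumes a 4xlarge instance exposes 16 vCPUs and takes the 420-core total as reported in this issue; the variable and function names are only illustrative.

```python
# Figures taken from the issue text.
PODS = 13                          # anti-affinity forces one pod per node
CPU_REQUEST_PER_POD = 10           # cores requested by each pod
REPORTED_PROVISIONED_VCPUS = 420   # total the issue reports was launched

# Assumption: a 4xlarge instance (for example r5n.4xlarge) has 16 vCPUs.
VCPUS_PER_4XLARGE = 16


def overprovision_ratio(provisioned: int, requested: int) -> float:
    """Return how many vCPUs were provisioned per vCPU requested."""
    return provisioned / requested


requested = PODS * CPU_REQUEST_PER_POD   # 130 cores requested in total
baseline = PODS * VCPUS_PER_4XLARGE      # 208 cores with thirteen 4xlarge nodes

baseline_ratio = overprovision_ratio(baseline, requested)
reported_ratio = overprovision_ratio(REPORTED_PROVISIONED_VCPUS, requested)

print(f"requested: {requested} cores")
print(f"13 x 4xlarge: {baseline} cores ({baseline_ratio:.2f}x requested)")
print(f"reported: {REPORTED_PROVISIONED_VCPUS} cores ({reported_ratio:.2f}x requested)")
```

Under these assumptions, thirteen 4xlarge nodes would already carry 1.6x the requested CPU, while the reported fleet carries roughly 3.2x.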

This often happens, and our clusters are significantly underutilized without the "support node replacement for spot nodes" kubernetes-sigs/karpenter#763.

Steps to Reproduce the Problem

Use a deployment with anti-affinity, CPU request = 10.

Resource Specs and Logs
