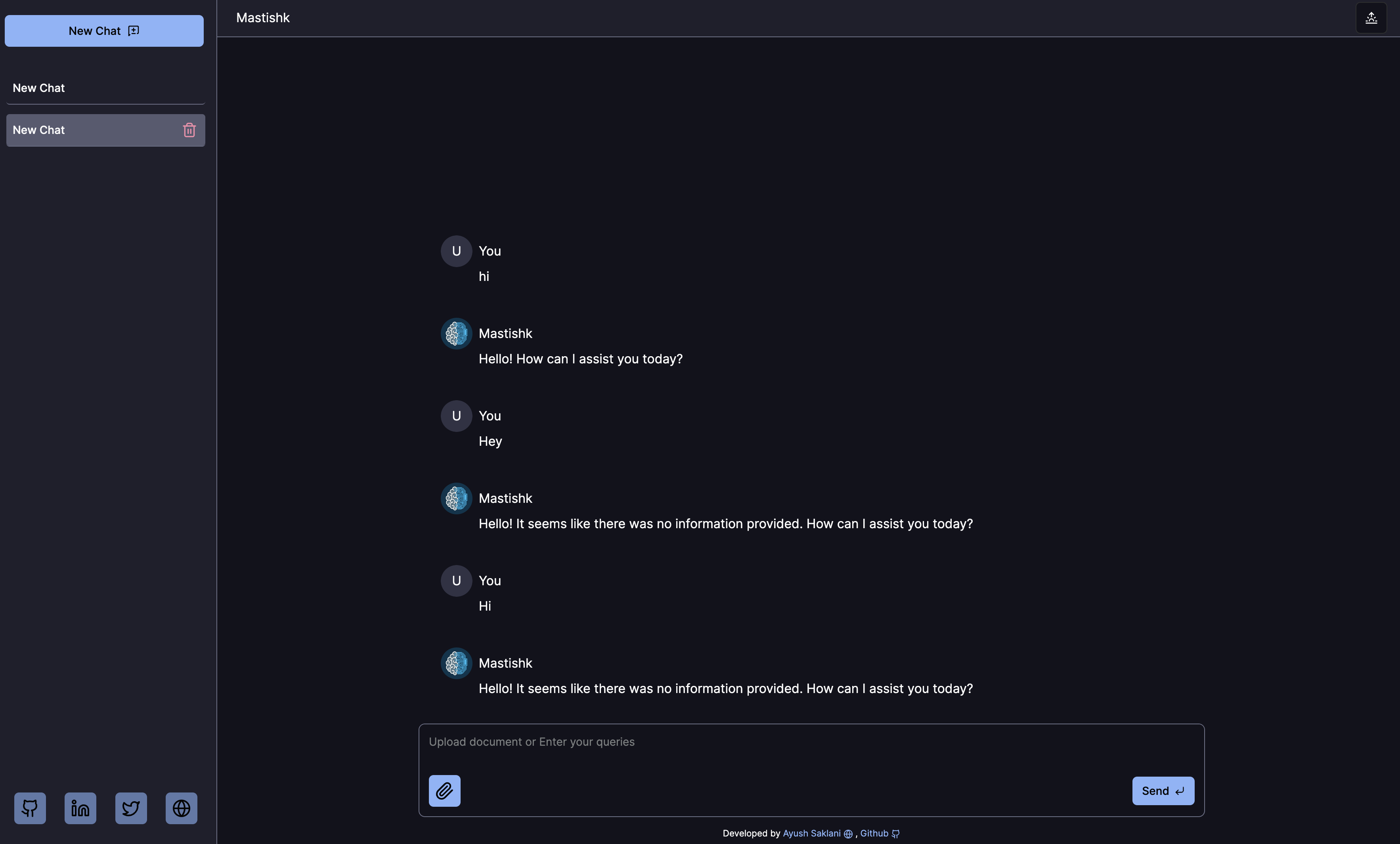

An LLM-powered chatbot for chatting with documents. It uses retrieval-augmented generation (RAG) to answer questions based on the documents provided.

mastishk.ayushsaklani.com is built with Next.js and the Qdrant vector database, and is hosted on Vercel.
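To make the RAG idea concrete, here is a minimal sketch of the retrieval step: rank document chunks by similarity to the question, then place the best matches into the prompt sent to the LLM. This is an illustration under stated assumptions, not the app's actual code. The word-count `embed` function is a toy stand-in for a real embedding model, the in-memory array stands in for a vector database such as Qdrant, and the names `Chunk`, `retrieve`, and `buildPrompt` are hypothetical.

```typescript
// Toy illustration of the retrieval half of RAG.
// Assumptions: word counts replace a learned embedding model, and an
// in-memory array replaces the vector database. All names are hypothetical.

type Chunk = { id: number; text: string };
type Vector = Record<string, number>;

// Stand-in "embedding": counts of lowercase alphanumeric tokens.
function embed(text: string): Vector {
  const counts: Vector = Object.create(null);
  for (const token of text.toLowerCase().match(/[a-z0-9]+/g) ?? []) {
    counts[token] = (counts[token] ?? 0) + 1;
  }
  return counts;
}

function norm(v: Vector): number {
  let sum = 0;
  for (const key of Object.keys(v)) sum += v[key] * v[key];
  return Math.sqrt(sum);
}

// Cosine similarity between two sparse count vectors.
function cosine(a: Vector, b: Vector): number {
  let dot = 0;
  for (const key of Object.keys(a)) dot += a[key] * (b[key] ?? 0);
  const denom = norm(a) * norm(b);
  return denom === 0 ? 0 : dot / denom;
}

// Return the topK chunks most similar to the question.
function retrieve(question: string, chunks: Chunk[], topK: number): Chunk[] {
  const q = embed(question);
  return chunks
    .map((chunk) => ({ chunk, score: cosine(q, embed(chunk.text)) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, topK)
    .map((entry) => entry.chunk);
}

// Assemble the prompt that would be sent to the LLM (the call itself is omitted).
function buildPrompt(question: string, context: Chunk[]): string {
  const contextText = context.map((c) => `- ${c.text}`).join("\n");
  return `Answer using only the context below.\n\nContext:\n${contextText}\n\nQuestion: ${question}`;
}

const chunks: Chunk[] = [
  { id: 1, text: "Qdrant stores vectors for similarity search." },
  { id: 2, text: "Vercel hosts the Next.js frontend." },
  { id: 3, text: "Chats are kept in the browser's local storage." },
];

const question = "Where are chats stored?";
const prompt = buildPrompt(question, retrieve(question, chunks, 1));
console.log(prompt);
```

In a real deployment, the similarity search would be delegated to the vector database and the assembled prompt would be passed to the LLM.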

- Local Chat Storage: Chats are stored locally in the user's browser, which keeps conversations private to that device.

- Multiple Chat Support: Users can keep multiple chats open at once and switch between conversations.

- Responsive Design: The app adapts to different screen sizes and devices.

- Dark Mode: Users can switch to a dark interface, which reduces eye strain, especially in low-light environments.

- Progressive Web App (PWA): The app functions as a Progressive Web App, allowing users to install it on their devices, access it offline, and receive push notifications for new messages.
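The local chat storage and multiple chat support described above could be sketched as follows. This is a hedged illustration rather than the app's real implementation: the `Chat` and `Message` shapes, the `"chats"` storage key, and the helper names are all assumptions. The storage object is passed in as a parameter so the same functions work with `window.localStorage` in the browser and with an in-memory stub elsewhere.

```typescript
// Hypothetical data model; the app's real shapes are not shown in this README.
type Message = { role: "user" | "assistant"; content: string };
type Chat = { id: string; title: string; messages: Message[] };

// Minimal subset of the Web Storage API, so window.localStorage fits
// and an in-memory stub can be used outside the browser.
interface KeyValueStorage {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "chats"; // assumed key name

// Read all chats; fall back to an empty list if nothing valid is stored.
function loadChats(storage: KeyValueStorage): Chat[] {
  const raw = storage.getItem(STORAGE_KEY);
  if (raw === null) return [];
  try {
    const parsed = JSON.parse(raw);
    return Array.isArray(parsed) ? (parsed as Chat[]) : [];
  } catch {
    return []; // corrupted JSON: start fresh
  }
}

function saveChats(storage: KeyValueStorage, chats: Chat[]): void {
  storage.setItem(STORAGE_KEY, JSON.stringify(chats));
}

// Append a message to one chat, leaving the other chats untouched.
function appendMessage(storage: KeyValueStorage, chatId: string, message: Message): Chat[] {
  const chats = loadChats(storage).map((chat) =>
    chat.id === chatId ? { ...chat, messages: chat.messages.concat(message) } : chat
  );
  saveChats(storage, chats);
  return chats;
}

// In-memory stand-in for localStorage, useful for tests or server-side code.
function createMemoryStorage(): KeyValueStorage {
  const data: Record<string, string> = {};
  return {
    getItem: (key) => (Object.prototype.hasOwnProperty.call(data, key) ? data[key] : null),
    setItem: (key, value) => {
      data[key] = value;
    },
  };
}
```

In the browser, the same helpers would be called with `window.localStorage`, for example `appendMessage(window.localStorage, chatId, message)`.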

- First, install dependencies:

  `npm install`

- Run the development server:

  `npm run dev`

  Open http://localhost:3000 with your browser to see the result.

- Generate a full static production build:

  `npm run build`

- Preview the site as it will appear once deployed:

  `npm run serve`