-

Notifications

You must be signed in to change notification settings - Fork 90

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Petsc interface #1805

Petsc interface #1805

Conversation

* include/bout/petsc_interface.hxx: C

I have added an object-oriented interface between BOUT and PETSc which will allow for easier conversion between Bout Fields and PETSc vectors and matrices. In particular, the interface uses BOUT indexing and then internally handles the conversion to PETSc global indexing. I have written unit tests for this interface to ensure it behaves as expected. In particular, I have checked that it can handle indexing properly for parallel simulations. As all tests are run in serial, this required quite a lot of work. I created a new fake mesh class which acts as though it is on just one processor of many. By creating a group of these meshes they can pass information to each other, emulating communication between processors. I also needed to fake MPI calls, which required creating virtual methods for them on the mesh class. These are then overridden in the fake mesh implementation. I also structured the classes in my PETSc interface so that fake classes could override certain methods where it is necessary to make the pretend-parallelism work. This PETSc interface will make implementation of a 3D Laplace solver much simpler.

I've written implementations for the setters (tests passing), indexers, and vectors. For the latter two, the tests currently fail. I've also fixed the makefile so that gmock is now linked.

This required me to make a number of changes to the fake parallel mesh class I'd added for testing. I had made numerous errors in the implementation of that and had overlooked a few problems. However, the current state of the code will now compile and all indexer tests pass.

All unit tests now pass for this type. PETSc prints some error messages to the screen, but this is expected; it occurs when testing that erroneous use-cases are caught correctly.

While writing a 3D Laplace it I caught a few bugs here and there in my PETSc interface, particularly around its handling of corner cells.

These regions are now created during setup of the mesh. Creating them myself in the tests is unnecessary and leads to an error because they already exist.

Following suggestions made by @ZedThree on PR #1803, I have made a number of changes to the PETSc interface. The most significant of these are: - Changing the template parameters in this interface from F to T - Moving the PetscVectorElement and PetscMatrixElement classes inside PetscVector and PetscMatrix, respectively. Then are now PetscVector::Element and PetscMatrix::Element - Changing the Element classes to return values rather than pointers - Adding conversion routines to turn PetscVector::Element into BoutReal - Removing the copy-and-swap idiom from the PetscVector copy-assignment operator - Making some more variables const and some return-types non-const (more still to be done on this) - Converting from C-style casting to C++ static_cast<> - Various other minor fixes and style changes

`FakeMesh::createBoundaryRegions()` is now called when constructing `FakeMeshFixture`, so attempts to call it a second time from within this unit test result in an error. As such, I removed the second call.

Converted these into PascalCase.

|

Most of the specific issues raised on PR #1803 have now been resolved on this branch. The main ones that remain are

|

|

Currently failing to compile because it turns out I pass There's a strange double-free bug coming from the unit tests. I'm not sure I completely understand, but it looks like it's something to do with the way virtual ~ParallelIndexerTest() {

bout::globals::mesh = nullptr;

}Google recommend adding a virtual destructor to all test fixtures, btw. It's never been a problem for me, but it's probably wise. The WithQuietOutput info{output_info}, warn{output_warn}, progress{output_progress}, all{output};

|

This required both disabling the output streams used by BOUT++ (to suppress the information it prints to the screen) and setting `PetscErrorPrintf = PetscErrorPrintfNone;` so that PETSc error messages (which are expected on tests checking erroneous behaviour) are no longer visible.

Yes, I noticed this problem on the CI server. Good to see that it's an easy fix.

Where do you see this bug? I'm not getting it when I run on my system. Also, do you have a reference for the Google recommendation? I'd be interested to read why.

Thanks for mentioning these. The noise was bothering me quite a lot, especially where it consists of PETSc error messages (which, as you say, are supposed to be raised, as I'm checking that improper use results in an exception being thrown). I had tried to figure out how to suppress the noise from PETSc but didn't find anything when I Googled, so not sure where |

It was a bit tricky to reproduce, as it involved multiple tests interacting so it could be due to tests being run in a different order. Perhaps try running

I can't seem to find an explicit recommendation specifically for googletest now, so I'm probably misremembering, but the gist of it is that base classes should have virtual destructors to ensure they get called by derived classes. Each Can the |

These data races were causing tests to fail in a non-deterministic manner. One was caused by a variable being shared which should have been private. The other was due to the chance of simultaneous writes to `std::set`.

|

I've finally gotten the CI test all to pass, having eliminated the data races and other thread-unsafe behaviour within the PETSc interface and tests. I've run |

|

I think generally we'd ask that you clang format just the regions that you've touched rather than whole files but @ZedThree can probably provide exact guidance. |

|

I wouldn't run I'm in the final stages of prepping the v4.3.0 release, so I hope to be able to give this another read through either tomorrow or Monday. |

|

Thanks for the heads-up about |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Looks good! A few points to go through, but I think basically ready to go in.

I've not been through the tests yet, I'll do that tomorrow

| public: | ||

| /// If \p localmesh is the same as the global one, return a pointer | ||

| /// to the global instance. Otherwise create a new one. | ||

| static IndexerPtr getInstance(Mesh* localmesh); |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Given that this is tied to a particular mesh, maybe we should think about moving it into there eventually. That would likely require this working for all boundary sizes, so we'll hold off on that for now

I've added additional boundary regions with the suffix _THIN which differ from the exinst regions by 1. Including corner values (in y boundaries) 2. Only being one cell deep In addition, the GlobalIndexer class creates a region from these and RGN_NOBNDRY, called RGN_ALL_THIN. This allows far more compact iteration over the grid cells to be stored in PETSc vectors. The new regions will also be useful when setting boundary condition in various solvers. While doing this refactoring I caught an error in the routine on BoutMesh which created the existing boundary regions. There was a call to `firstX()` which should have been `lastX()` when determining what indices to place in RGN_OUTER_X. I can only presume that this hasn't been caught yet because no one has used these region when there is more than one processor in the x-direction. This refactor revealed that sometimes the GlobalIndexer class ended up returning a stale instance between unit tests, due to a quirk of where new values of `localmesh` were being allocated in memory. To avoid this I have added an explicit `recreateGlobalInstance()` method, which marks that the indexer corresponding to the global mesh should be recreated the next time it is requested.

Turned out I was getting the interpolation weights incorrectly, as I shouldn't have used the y-index for the position I wanted but instead for where I want to extend along the field-line from.

|

I've committed the changes requested above, as well as a few bug-fixes I made while running integration tests for the 3D AMG Laplace solver. I've also merged in the latest changes from the |

|

2 of the CI runs failed, but I'm not sure why. In both cases the log says It looks like something may be wrong with Travis itself. |

|

Yes, agreed. It looks like Travis had a hiccup. I've restarted the jobs so hopefully they work this time. |

|

For reason the submodules are reverting to the previous commit we had. But I can see |

There was a problem hiding this comment.

Choose a reason for hiding this comment

The reason will be displayed to describe this comment to others. Learn more.

Just two things need fixing, then we should get this in

|

Fixed those to issues with assignment operators. It seems I accidentally introduced a commit reverting the updates to the submodules. I had thought that I was bringing the submodules commits in my repo up-to-date with the ones in |

|

Great work, thanks @cmacmackin! |

This is the PETSc interface which I implemented as part of #1803, which @ZedThree requested I split into a separate PR. It addresses issue #1771 and allows vectors and arrays to be set using the local BOUT++ index objects for that processor. The wrapper will automatically handle conversions to global indexing for PETSc. It also handles the interpolation weights needed when calculating along-field values in the y-direction. It has been extensively unit tested. The unit tests for the index conversion ability required some modest refactoring of the Mesh class, making some methods virtual which weren't previously and adding methods which wrap some MPI calls (so that they can be overridden for testing purposes). This allows the parallel behaviour of the indexing to be tested while running the unit tests in serial.

There were a number of specific comments Peter made about my code on the old PR. I will address those and then push the changes to this branch.

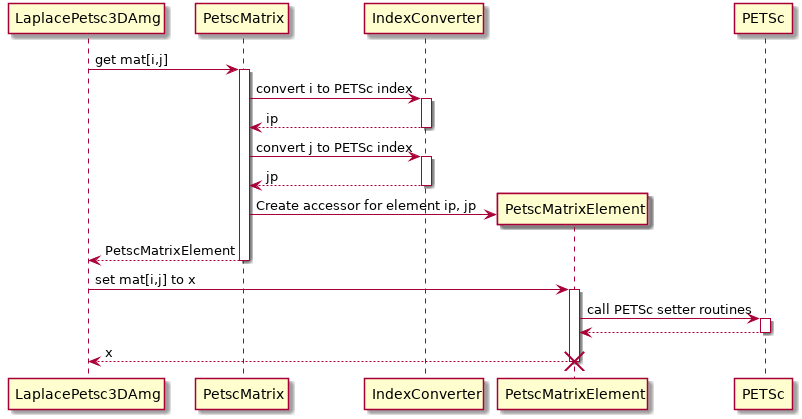

The basic call-sequence of my PETSc interface can be seen below:

Basically, I need the indexing operator to return something to which matrix/vector values can be assigned. When assigning to that object, it would then call the PETSc setter routines. While something like this could have been accomplished with a single method on PetscMatrix along the lines of

setElement(ind_type& index1, ind_type& index2, BoutReal val);the approach I took allowed something which feels more natural and is thus more readable.

Peter had said

It should be possible to create a a new class which provides access to the MPI methods (or add them to BoutComm). The reason I took the approach I did was because it for minimal changes to existing calls to MPI routines within the code by ensuring the wrapper methods were in the same class/namespace as the Mesh routines which call them. I don't have any objections to placing the wrappers on a separate class, i just didn't feel confident enough to make such a disruptive change on my own.